Understanding the meaning of computer data.

Information - Communication Technology.

Communication Technology.

Communication technology is a technical prerequisite for modern information processing.

🔰 the most logical

begin anchor.

This is just a technical part.

As enablers, these technologies should have no dependencies on business processes. The only exceptions are business processes that are themselves enablers.

Contents

| Reference | Topic | Squad |
| --- | --- | --- |
| Intro | Communication Technology. | 01.01 |
| Basic | Data, Information Exchange. | 02.01 |
| Tech usage | Communication technology, the beginning. | 03.01 |
| de-encrypt | Using automatization in messaging. | 04.01 |
| encoding | Data, information representations. | 05.01 |
| evolutions | The yellow brick road. | 06.01 |

Progress

- 2020 week:27

- Building up as a result of the split.

What was missed in the old pages will be added later.

Data, Information Exchange.

There are many ways to exchange data between processes. A clear decoupling plan is helpful with creating normalized systems.

Normalized systems are intended to make it easy to replace one "application" with another.

It is possible to send a human messenger with the message. Over short distances, or when trustworthiness matters, this is a good solution.

In other situations a standard messaging system is more suitable, because the environment imposes distance or time-limit requirements.

signal flags

International maritime signal flags are various flags used to communicate with ships. The principal system of flags and associated codes is the International Code of Signals.

Various navies have flag systems with additional flags and codes, and other flags are used for special purposes or have historical significance.

There are various methods by which the flags can be used as signals:

- A series of flags can spell out a message, each flag representing a letter

- Individual flags have specific and standard meanings

- One or more flags form a code word whose meaning can be looked up in a code book held by both parties

- In yacht racing and dinghy racing, flags have other meanings; for example, the P flag is used as the "preparatory" flag to indicate an imminent start

The associated illustration has no real meaning other than that there is a party, something to celebrate.

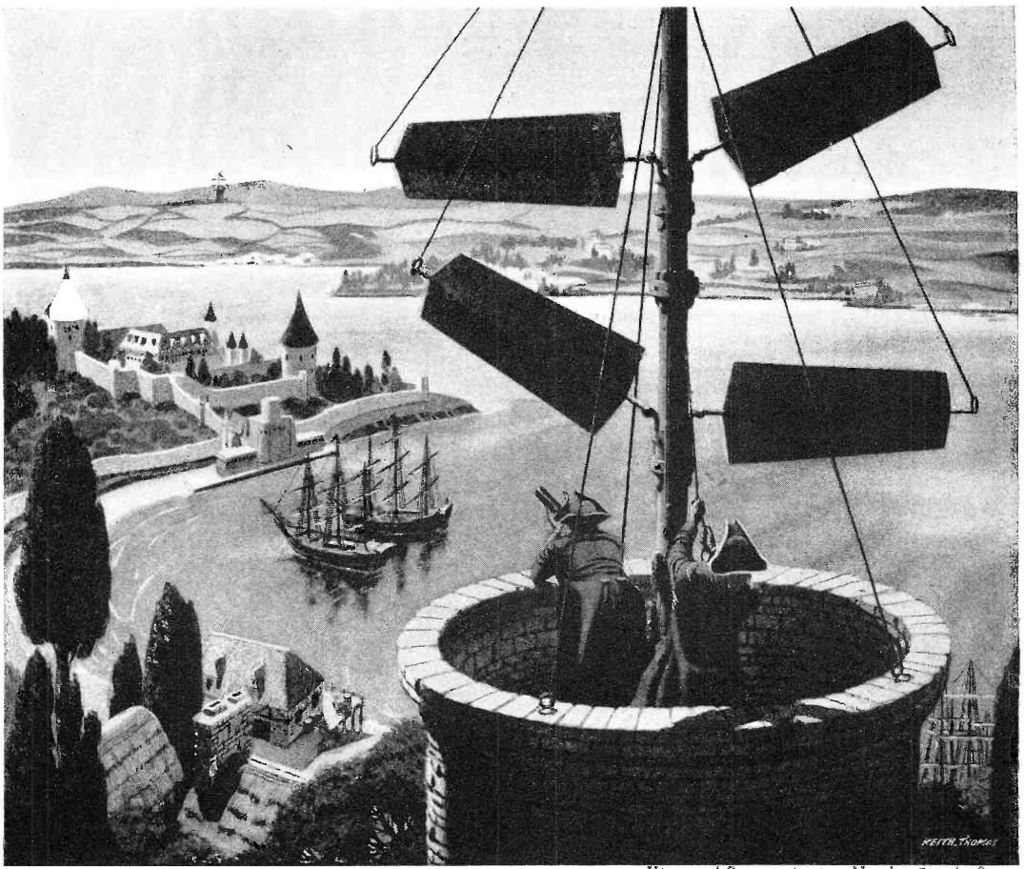

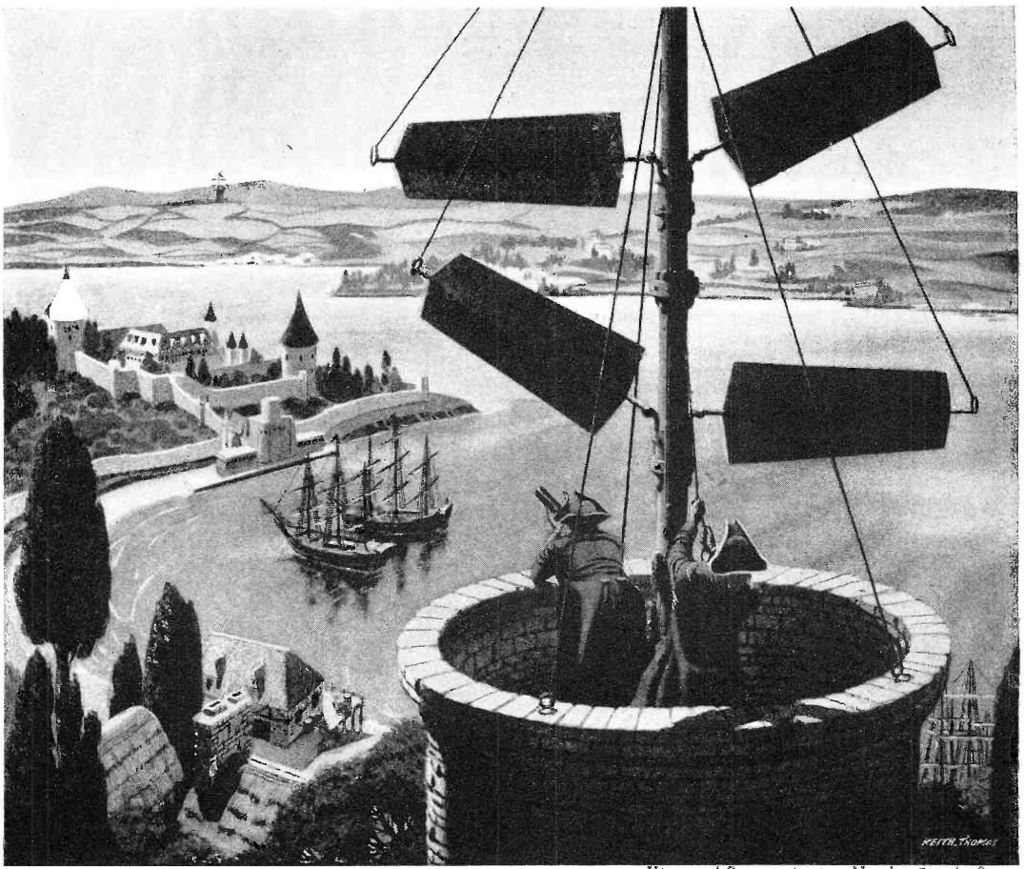

optical telegraph

An optical telegraph is a line of stations, typically towers, for the purpose of conveying textual information by means of visual signals. There are two main types of such systems:

- the semaphore telegraph, which uses pivoted indicator arms and conveys information according to the direction the indicators point;

- the shutter telegraph, which uses panels that can be rotated to block or pass the light from the sky behind to convey information.

The illustration is from the Napoleonic era. Systems of this kind were widely used in earlier times, before electrical telegraphy.

Communication technology, the beginning.

Communicating in more advanced ways than the basic human means of sound (the spoken word) and sight (letters, images, signs) started after industrialisation.

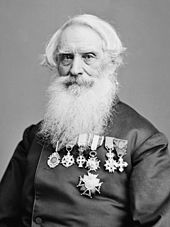

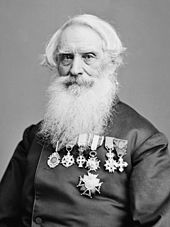

Morse

Samuel Morse

The Morse system for telegraphy, which was first used in about 1844, was designed to make indentations on a paper tape when electric currents were received.

Morse's original telegraph receiver used a mechanical clockwork to move a paper tape. When an electrical current was received, an electromagnet engaged an armature that pushed a stylus onto the moving paper tape, making an indentation on the tape.

When the current was interrupted, a spring retracted the stylus and that portion of the moving tape remained unmarked.

Morse code was developed so that operators could translate the indentations marked on the paper tape into text messages.

In his earliest code, Morse had planned to transmit only numerals and to use a codebook to look up each word according to the number which had been sent.

However, the code was soon expanded by Alfred Vail in 1840 to include letters and special characters so it could be used more generally.

Vail estimated the frequency of use of letters in the English language by counting the movable type he found in the type-cases of a local newspaper in Morristown, New Jersey.
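The idea of giving frequent letters the shortest codes can be sketched in a few lines of Python. This is only an illustration: the table is a small subset of modern International Morse code (not Vail's original table), and the names `MORSE`, `to_morse` and `symbol_count` are made up for this example.

```python
# Illustrative subset of International Morse code (an assumption for this
# sketch; it is not the original code table designed by Morse and Vail).
MORSE = {
    "E": ".", "T": "-", "A": ".-", "N": "-.", "I": "..", "M": "--",
    "S": "...", "O": "---", "Q": "--.-", "Z": "--..",
}


def to_morse(text: str) -> str:
    """Encode text letter by letter; letters are separated by a space."""
    return " ".join(MORSE[ch] for ch in text.upper())


def symbol_count(text: str) -> int:
    """Count the dots and dashes needed to send the text."""
    return sum(len(MORSE[ch]) for ch in text.upper())


if __name__ == "__main__":
    print(to_morse("sos"))
    # Frequent letters (E, T, A) need fewer symbols than rare ones (Q, Z).
    print(symbol_count("tea"), symbol_count("qzq"))
```

Common letters such as E and T cost one symbol each, while rare letters such as Q and Z cost four, so ordinary English text needs fewer key presses on average.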

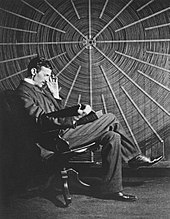

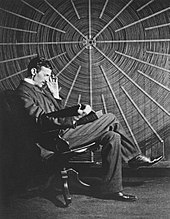

Nikola Tesla

Nikola Tesla

The three big firms, Westinghouse, Edison, and Thomson-Houston, were trying to grow in a capital-intensive business while financially undercutting each other.

There was even a "war of currents" propaganda campaign going on with Edison Electric trying to claim their direct current system was better and safer than the Westinghouse alternating current system.

Competing in this market meant Westinghouse would not have the cash or engineering resources to develop Tesla's motor and the related polyphase system right away.

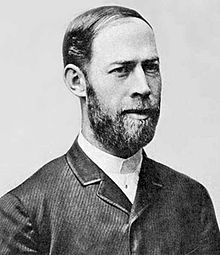

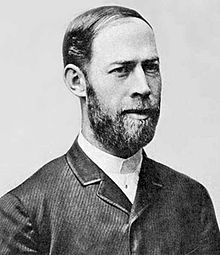

Heinrich Hertz

Heinrich Rudolf Hertz

Between 1886 and 1889 Hertz would conduct a series of experiments that would prove the effects he was observing were results of Maxwell's predicted electromagnetic waves.

Starting in November 1887 with his paper "On Electromagnetic Effects Produced by Electrical Disturbances in Insulators", Hertz would send a series of papers to Helmholtz at the Berlin Academy, including papers in 1888 that showed transverse free space electromagnetic waves traveling at a finite speed over a distance.

Marconi

Guglielmo Marconi

Late one night, in December 1894, Marconi demonstrated a radio transmitter and receiver to his mother, a set-up that made a bell ring on the other side of the room by pushing a telegraphic button on a bench.

Supported by his father, Marconi continued to read through the literature and picked up on the ideas of physicists who were experimenting with radio waves.

He developed devices, such as portable transmitters and receiver systems, that could work over long distances, turning what was essentially a laboratory experiment into a useful communication system.

Marconi came up with a functional system with many components.

Using automatization in messaging.

Once machines and further technical advances had removed the earlier limits on communication, a new problem arose.

How can information be kept secret when it is sent over transmission channels that others can easily tap?

Encrypting and decrypting machines.

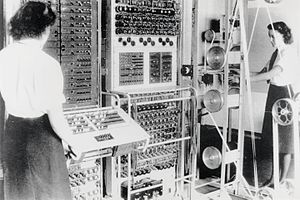

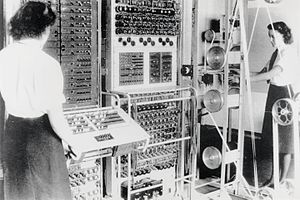

Colossus

Colossus was a set of computers developed by British codebreakers in the years 1943-1945 to help in the cryptanalysis of the Lorenz cipher.

Colossus used thermionic valves (vacuum tubes) to perform Boolean and counting operations.

Colossus is thus regarded as the world's first programmable, electronic, digital computer, although it was programmed by switches and plugs and not by a stored program.

This was not for decrypting the Enigma machine but for the mechanised teleprinter (telex) cipher machines (Lorenz machines, code-named Tunny).

Decrypting Enigma

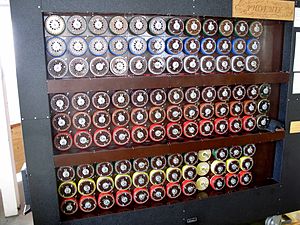

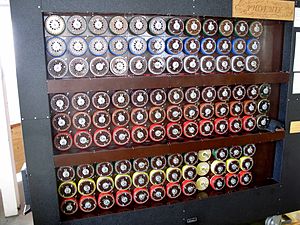

British bombe

The British bombe was an electromechanical device designed by Alan Turing soon after he arrived at Bletchley Park in September 1939.

Harold "Doc" Keen of the British Tabulating Machine Company (BTM) in Letchworth (35 kilometres (22 mi) from Bletchley) was the engineer who turned Turing's ideas into a working machine, under the codename CANTAB.

Turing's specification developed the ideas of the Poles' bomba kryptologiczna but was designed for the much more general crib-based decryption.

The Enigma cipher as such was never broken. Sloppy operating procedures leaked basic conventions, which reduced the number of settings to verify far enough to get sufficient messages decrypted in time.

The impact of reading what the other side did not want known was huge.

While Germany introduced a series of improvements to Enigma over the years, and these hampered decryption efforts to varying degrees, they did not ultimately prevent Britain and its allies from exploiting Enigma-encoded messages as a major source of intelligence during the war.

Many commentators say the flow of communications intelligence from Ultra's decryption of Enigma, Lorenz and other ciphers shortened the war significantly and may even have altered its outcome.

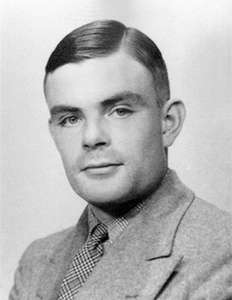

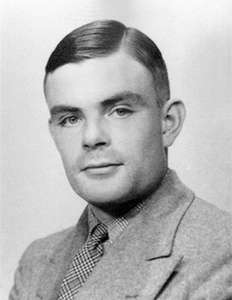

Turing

Alan Turing (Britannica). The best-known person associated with computers.

Honored by: the Turing Award.

What mathematicians called an "effective" method for solving a problem was simply one that could be carried out by a human mathematical clerk working by rote.

In Turing's time, those rote-workers were in fact called "computers," and human computers carried out some aspects of the work later done by electronic computers.

The Entscheidungsproblem sought an effective method for solving the fundamental mathematical problem of determining exactly which mathematical statements are provable within a given formal mathematical system and which are not.

A method for determining this is called a decision method. In 1936 Turing and Church independently showed that, in general, the Entscheidungsproblem has no resolution, proving that no consistent formal system of arithmetic has an effective decision method.

Turing's proof

It was the second proof (after Church's theorem) of the conjecture that some purely mathematical yes-no questions can never be answered by computation; more technically, that some decision problems are "undecidable" in the sense that there is no single algorithm that infallibly gives a correct "yes" or "no" answer to each instance of the problem.

In Turing's own words: "...what I shall prove is quite different from the well-known results of Gödel ... I shall now show that there is no general method which tells whether a given formula U is provable in K [Principia Mathematica]..." (Undecidable, p. 145).

This may not be what has become mainstream computer science or code breaking.

It is certainly the foundation for Artificial Intelligence (AI). To cite:

Turing was a founding father of artificial intelligence and of modern cognitive science, and he was a leading early exponent of the hypothesis that the human brain is in large part a digital computing machine.

He theorized that the cortex at birth is an "unorganised machine" that through "training" becomes organized into a universal machine or something like it.

Turing proposed what subsequently became known as the Turing test as a criterion for whether an artificial computer is thinking (1950).

Data, information representations.

Spoken and written human language is continuously developing.

The technical implementations supporting it changed whenever technology changed.

Only a limited set of standards should be in common use these days.

ASCII - EBCDIC - Single byte

These are encodings: interpretations of a single byte (8 bits, 256 different values).

Properties:

- Every byte can be processed by the computer as a valid byte / character.

- Not every byte value has a defined meaning in the ASCII or EBCDIC tables.

As a result, transcoding without loss of information is not always possible.
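A minimal sketch of single-byte transcoding, assuming Python's built-in codecs `ascii`, `latin-1` and `cp037` (one of several EBCDIC code pages). The helper names `transcode` and `can_transcode` are hypothetical and exist only for this illustration.

```python
def transcode(data: bytes, source: str, target: str) -> bytes:
    """Decode bytes from one encoding and re-encode them in another."""
    return data.decode(source).encode(target)


def can_transcode(data: bytes, source: str, target: str) -> bool:
    """Report whether the bytes survive the conversion without an error."""
    try:
        transcode(data, source, target)
        return True
    except (UnicodeDecodeError, UnicodeEncodeError):
        return False


# The same letter has a different byte value in each table.
ascii_a = "A".encode("ascii")   # byte 0x41
ebcdic_a = "A".encode("cp037")  # byte 0xC1

if __name__ == "__main__":
    print(ascii_a.hex(), ebcdic_a.hex())
    # EBCDIC "A" converts cleanly to ASCII "A".
    print(transcode(ebcdic_a, "cp037", "ascii"))
    # Byte 0xE9 is "e with acute accent" in Latin-1 but has no ASCII meaning.
    print(can_transcode(b"\xe9", "latin-1", "ascii"))
```

The last call returns `False`: the byte is perfectly valid in the source table, but the target table has no place for the character, which is the loss of information described above.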

Unicode character representation - Multi byte

Many versions, several implementations.

Unicode

- Not every byte sequence can be processed by the computer as valid.

- A character is made up of a varying number of bytes, between 1 and 4.

- UTF-8: variable length, 1-4 bytes. The lowest 128 values (0-127) keep the old ASCII interpretation.

The first 32 of these are control characters, not used as text characters.

- UTF-16LE, UTF-16BE: 2 or 4 bytes. BE is big-endian, LE is little-endian.

The endianness follows the byte order preferred by the hardware architecture: little-endian (LE) stores the least significant byte first, big-endian (BE) stores the most significant byte first.

- UTF-32LE, UTF-32BE: 4 bytes. BE is big-endian, LE is little-endian.

Unicode is becoming the de facto standard.
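The byte counts listed above can be checked with Python's standard codecs. This is a small sketch; the sample characters and the helper names `encoded_lengths` and `is_valid_utf8` are chosen only for this illustration.

```python
# Sample characters: "A", e with acute accent, the euro sign, an emoji.
SAMPLES = ["A", "\u00e9", "\u20ac", "\U0001F600"]


def encoded_lengths(ch: str) -> dict:
    """Return the number of bytes one character needs in each encoding."""
    return {enc: len(ch.encode(enc)) for enc in ("utf-8", "utf-16-le", "utf-32-le")}


def is_valid_utf8(data: bytes) -> bool:
    """Not every byte sequence is valid UTF-8; decoding shows which are."""
    try:
        data.decode("utf-8")
        return True
    except UnicodeDecodeError:
        return False


if __name__ == "__main__":
    for ch in SAMPLES:
        print(repr(ch), encoded_lengths(ch))
    # Byte order: the same character, stored least or most significant byte first.
    print("A".encode("utf-16-le"), "A".encode("utf-16-be"))
    print(is_valid_utf8(b"\xff"))
```

UTF-8 grows from 1 to 4 bytes across the four samples, UTF-16 uses 2 bytes until the emoji needs 4, and UTF-32 always uses 4.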

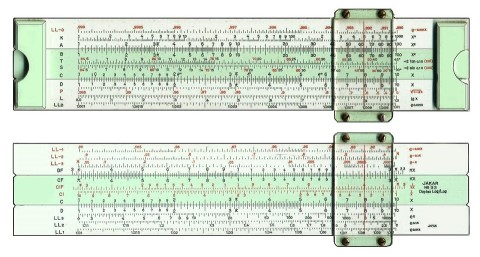

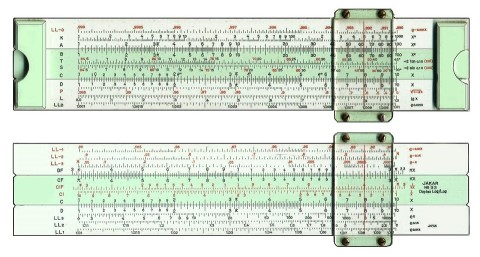

Floating, Math in ICT

A basic issue in ICT with math: inaccuracy in calculations with floating-point numbers.

Using a slide rule, you had to think about precision and therefore knew the possible impact.

Using a computer, everybody trusts the results until surprisingly wrong results lead to complaints in the feedback.

IEEE 754

The standard provides for many closely related formats, differing in only a few details. Five of these formats are called basic formats, and others are termed extended precision formats and extendable precision format.

Three formats are especially widely used in computer hardware and languages:

- Single precision, has a precision of 24 bits (about 7 decimal digits)

- Double precision, has a precision of 53 bits (about 16 decimal digits).

- Double extended precision, with a precision of at least 64 bits (about 19 decimal digits).

The common format in programming is double precision. On a GPU (for example NVIDIA) half precision is often used. For image processing, a difference of less than one per mille is seen as sufficient.
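The effect of the different precisions can be made visible with Python's standard `struct` module, which can store a value as half (`e`), single (`f`) or double (`d`) precision. This is a sketch, and the helper name `round_trip` is made up for this example.

```python
import math
import struct


def round_trip(value: float, fmt: str) -> float:
    """Store a Python float in the given struct format and read it back."""
    return struct.unpack(fmt, struct.pack(fmt, value))[0]


# Classic surprise: 0.1 and 0.2 have no exact binary representation.
sum_is_exact = (0.1 + 0.2 == 0.3)
sum_is_close = math.isclose(0.1 + 0.2, 0.3)

half = round_trip(0.1, "<e")    # 16-bit half precision
single = round_trip(0.1, "<f")  # 32-bit single precision
double = round_trip(0.1, "<d")  # 64-bit double precision (Python's own float)

if __name__ == "__main__":
    print(sum_is_exact, sum_is_close)
    for name, stored in (("half", half), ("single", single), ("double", double)):
        print(name, stored, "relative error:", abs(stored - 0.1) / 0.1)
```

Comparing with a tolerance (`math.isclose`) instead of `==` is the usual way to avoid the surprise. For the value 0.1, the half-precision relative error stays below one per mille, which matches the remark about image processing.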

Noise & synchronisation

Although everything is said to be digital, transmissions use real-world phenomena in an analog world.

Shannon-Hartley

In information theory, the Shannon–Hartley theorem tells the maximum rate at which information can be transmitted over a communications channel of a specified bandwidth in the presence of noise.

It is an application of the noisy-channel coding theorem to the archetypal case of a continuous-time analog communications channel subject to Gaussian noise.
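The theorem is usually written as C = B * log2(1 + S/N), with C the capacity in bits per second, B the bandwidth in hertz and S/N the signal-to-noise ratio as a plain power ratio. A minimal sketch follows; the function names and the example figures (a 3 kHz channel at 30 dB) are assumptions for illustration only.

```python
import math


def db_to_linear(snr_db: float) -> float:
    """Convert a signal-to-noise ratio in decibels to a plain power ratio."""
    return 10.0 ** (snr_db / 10.0)


def channel_capacity(bandwidth_hz: float, snr_linear: float) -> float:
    """Shannon-Hartley limit in bits per second: C = B * log2(1 + S/N)."""
    return bandwidth_hz * math.log2(1.0 + snr_linear)


if __name__ == "__main__":
    # Hypothetical example: a 3 kHz channel with a 30 dB signal-to-noise ratio.
    snr = db_to_linear(30.0)
    print(round(channel_capacity(3000.0, snr)), "bit/s upper bound")
```

With signal and noise power equal (S/N = 1) the capacity equals the bandwidth; raising the signal-to-noise ratio only helps logarithmically, while extra bandwidth helps linearly.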

High-Level Data Link Control (HDLC) synchronizes clocks without using a separate clock signal.

In HDLC, bit-stuffing serves a second purpose, that of ensuring a sufficient number of signal transitions.

On synchronous links, the data is NRZI encoded, so that a 0-bit is transmitted as a change in the signal on the line, and a 1-bit is sent as no change.

Thus, each 0 bit provides an opportunity for a receiving modem to synchronize its clock via a phase-locked loop.

If there are too many 1-bits in a row, the receiver can lose count. Bit-stuffing provides a minimum of one transition per six bit times during transmission of data, and one transition per seven bit times during transmission of a flag.
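The NRZI rule described above (a 0 bit changes the line level, a 1 bit leaves it unchanged) can be sketched as follows. The function names and the assumed idle level of 0 are choices made for this illustration, not part of any standard API.

```python
def nrzi_encode(bits, initial_level=0):
    """Return line levels: a 0 bit toggles the level, a 1 bit keeps it."""
    level = initial_level
    levels = []
    for bit in bits:
        if bit == 0:
            level ^= 1  # change on zero
        levels.append(level)
    return levels


def nrzi_decode(levels, initial_level=0):
    """Recover bits: no change means 1, a change means 0."""
    previous = initial_level
    bits = []
    for level in levels:
        bits.append(1 if level == previous else 0)
        previous = level
    return bits


def count_transitions(levels, initial_level=0):
    """Count level changes, the events a receiver can synchronize on."""
    previous = initial_level
    count = 0
    for level in levels:
        if level != previous:
            count += 1
        previous = level
    return count


if __name__ == "__main__":
    data = [0, 1, 1, 0]
    line = nrzi_encode(data)
    print(line, nrzi_decode(line))
    # A long run of 1 bits produces no transitions at all.
    print(count_transitions(nrzi_encode([1] * 8)))
```

The last line prints 0: eight 1 bits in a row leave the line completely flat, which is exactly the situation in which a receiver can lose count.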

This is really technical, but at the low technical level it is necessary and in use in all kinds of common devices.

This "change-on-zero" is used by High-Level Data Link Control and USB.

They both avoid long periods of no transitions (even when the data contains long sequences of 1 bits) by using zero-bit insertion.

HDLC transmitters insert a 0 bit after 5 contiguous 1 bits (except when transmitting the frame delimiter "01111110"). USB transmitters insert a 0 bit after 6 consecutive 1 bits.

The receiver at the far end uses every transition, both from 0 bits in the data and from these extra non-data 0 bits, to maintain clock synchronization.
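Zero-bit insertion as described above can be sketched in Python. This is a simplified illustration: it ignores frame delimiters and error handling, and the names `stuff_bits`, `unstuff_bits` and the `run_length` parameter are assumptions made for this example.

```python
def stuff_bits(bits, run_length=5):
    """Insert a 0 after every run of `run_length` consecutive 1 bits.

    run_length=5 follows the HDLC rule quoted above, run_length=6 the USB rule.
    """
    out = []
    ones = 0
    for bit in bits:
        out.append(bit)
        if bit == 1:
            ones += 1
            if ones == run_length:
                out.append(0)  # extra non-data 0 bit
                ones = 0
        else:
            ones = 0
    return out


def unstuff_bits(bits, run_length=5):
    """Remove the inserted 0 bits again (assumes a correctly stuffed stream)."""
    out = []
    ones = 0
    skip_next = False
    for bit in bits:
        if skip_next:
            # Simplification: the bit after a full run is dropped unchecked.
            skip_next = False
            ones = 0
            continue
        out.append(bit)
        if bit == 1:
            ones += 1
            if ones == run_length:
                skip_next = True
        else:
            ones = 0
    return out


if __name__ == "__main__":
    data = [0] + [1] * 10 + [0]
    sent = stuff_bits(data)
    print(sent)
    print(unstuff_bits(sent) == data)
```

After stuffing, the data never contains more than five 1 bits in a row, so with change-on-zero encoding the line is guaranteed to change regularly no matter what the payload looks like.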

The yellow brick road.

Monetizing Data: Follow the Yellow Brick Road

While the tools have vastly improved, and the power of BI buttressed by AI and Machine Learning has helped greatly with incorporating challenges like unstructured and disparate data (internal and external), that Yellow Brick Road journey still requires cultural and operational steps including empowerment of associated teams.

There is not a lot of room for autocracy in achieving the best results.

Foundational elements work best, and collaboration is a must.

Invisibility Technical communications.

New technology is still evolving.

In telecommunications, 5G is the fifth generation technology standard for cellular networks, which cellular phone companies began deploying worldwide in 2019, the planned successor to the 4G networks which provide connectivity to most current cellphones.

Like its predecessors, 5G networks are cellular networks, in which the service area is divided into small geographical areas called cells.

Not understanding technology brings back old types of conspiracy theories.

During the COVID-19 pandemic, several conspiracy theories circulating online posited a link between severe acute respiratory syndrome coronavirus 2 (SARS-CoV-2) and 5G.

This has led to dozens of arson attacks being made on telecom masts in the Netherlands (Amsterdam, Rotterdam, etc.), Ireland (Cork, etc.), Cyprus, the United Kingdom (Dagenham, Huddersfield, Birmingham, Belfast and Liverpool), Belgium (Pelt), Italy (Maddaloni), Croatia (Bibinje) and Sweden.

It led to at least 61 suspected arson attacks against telephone masts in the United Kingdom alone and over twenty in The Netherlands.

When steam trains were introduced, many conspiracy theories were spread in an attempt to halt change.
