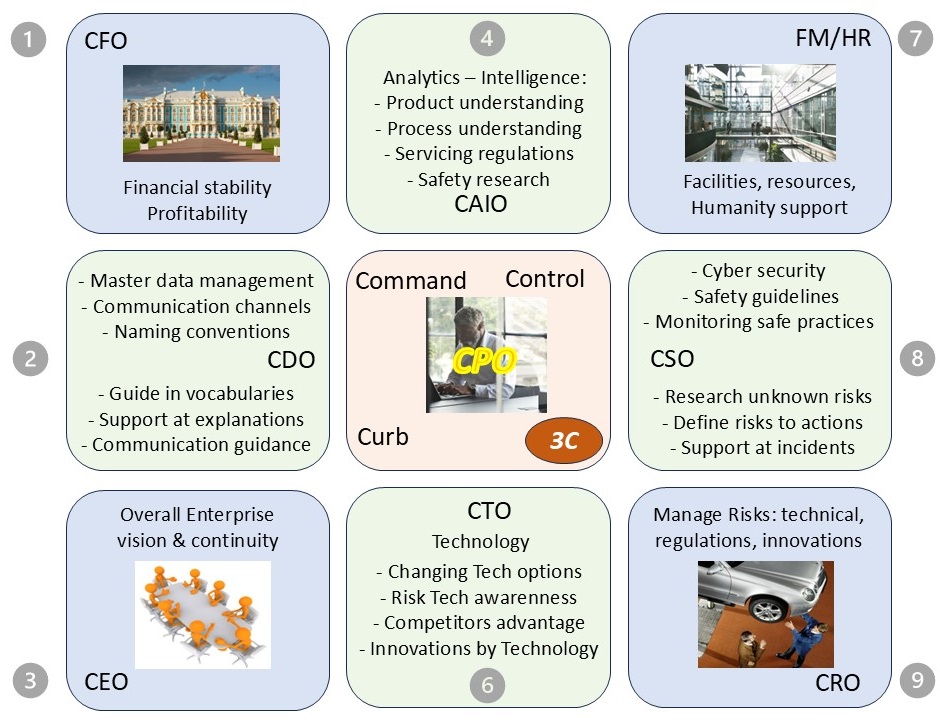

Command & Control Lead

M-1 Command & Control - investigation situation

M-1.1 Contents

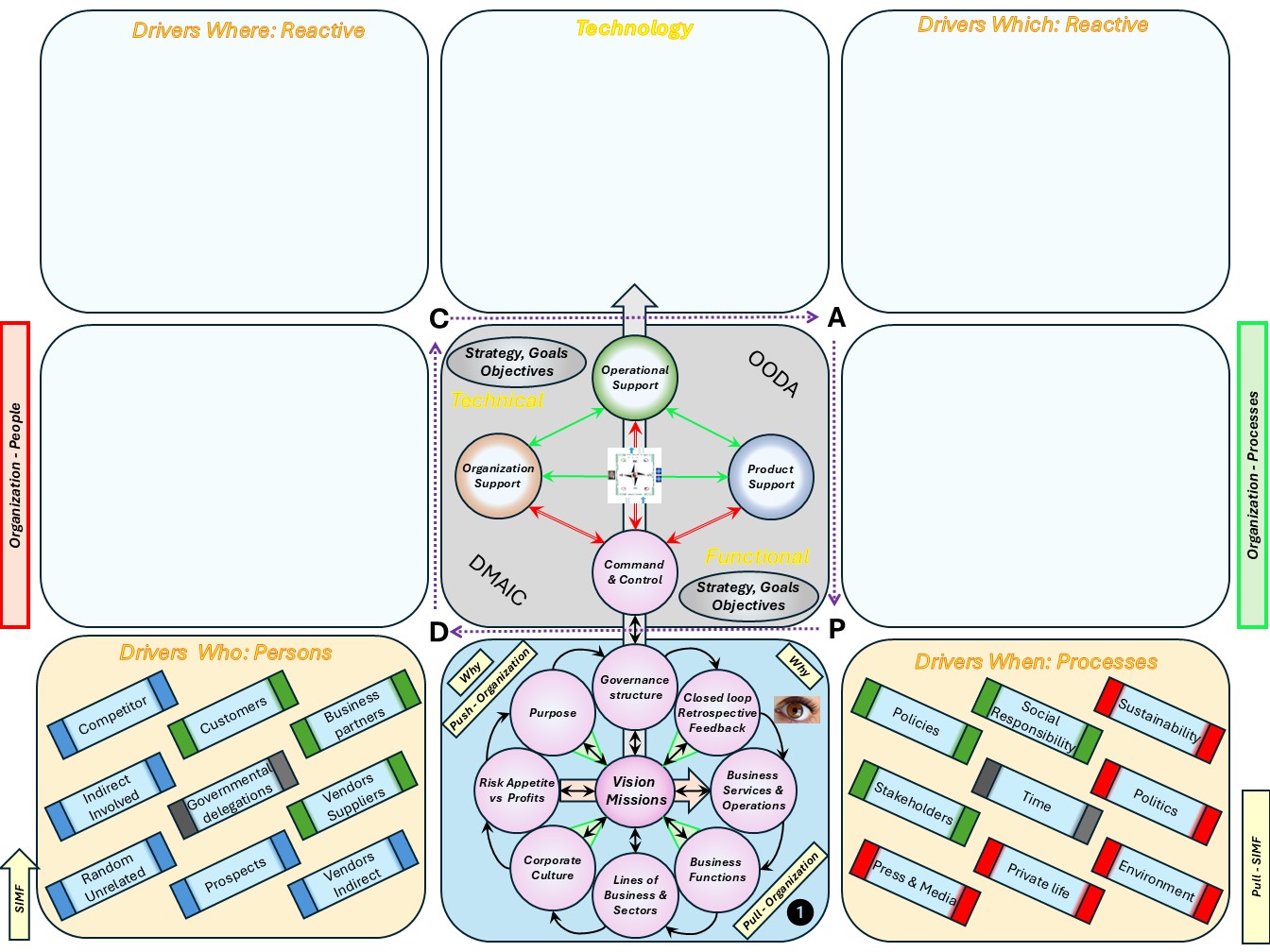

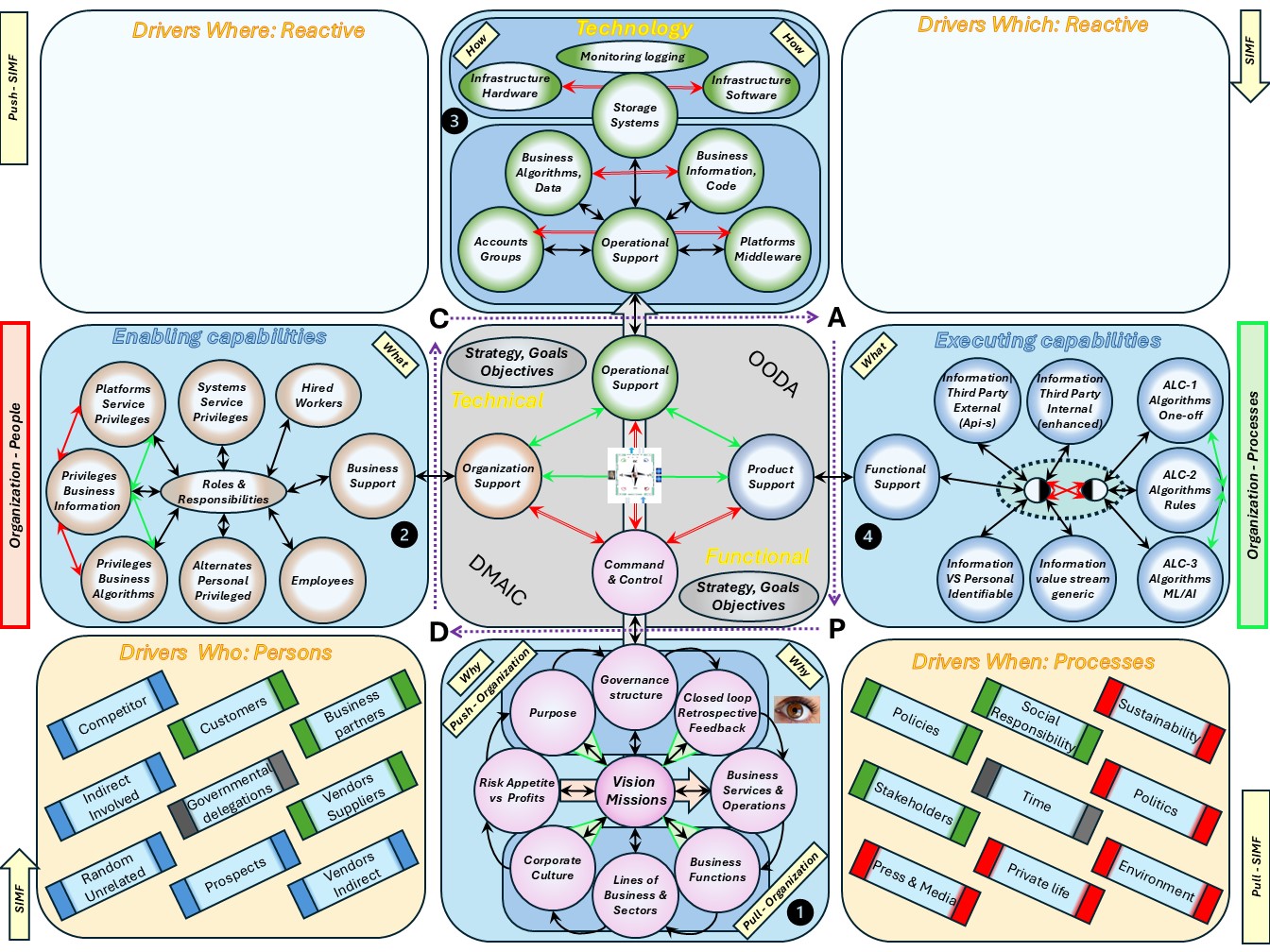

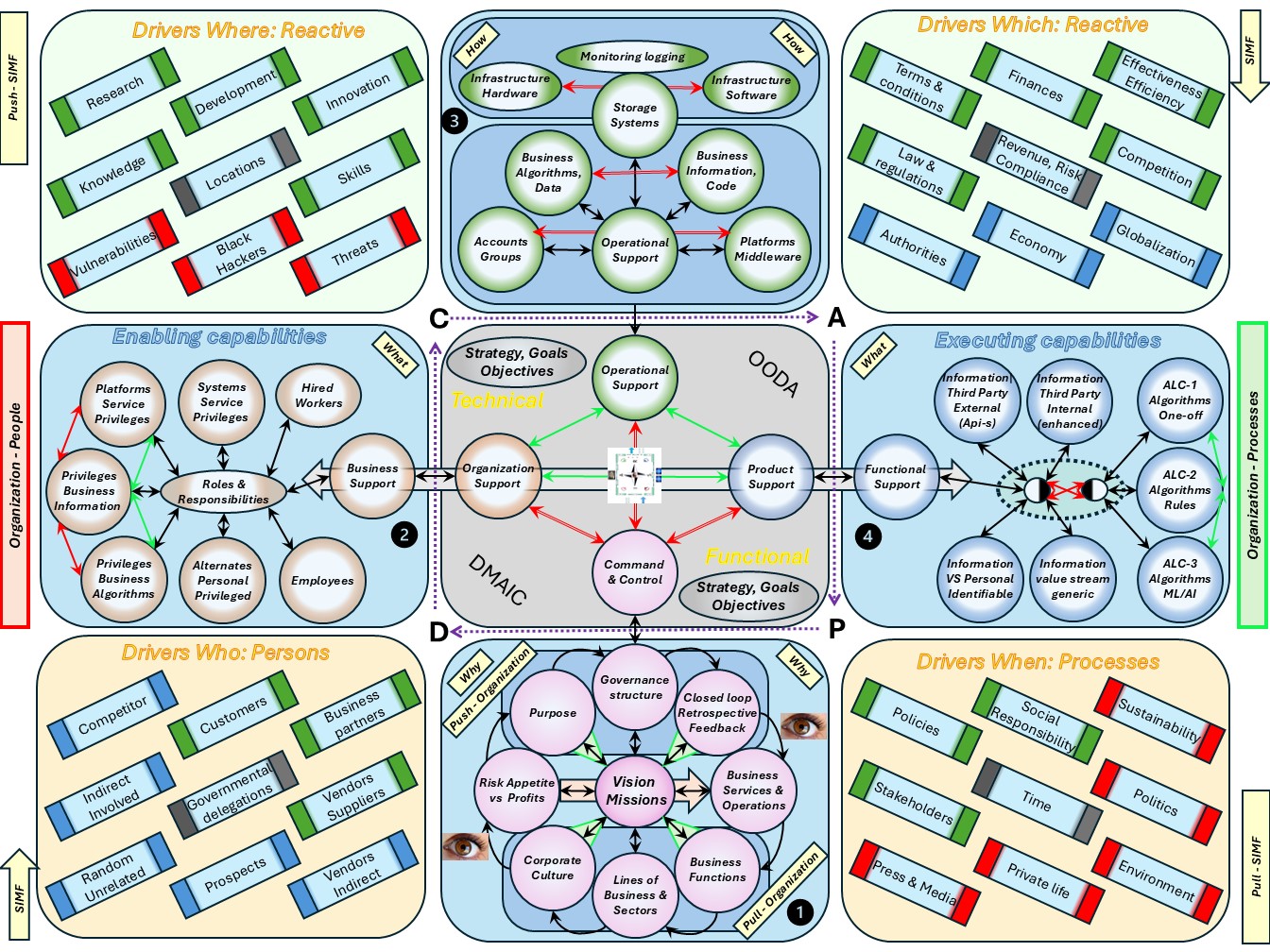

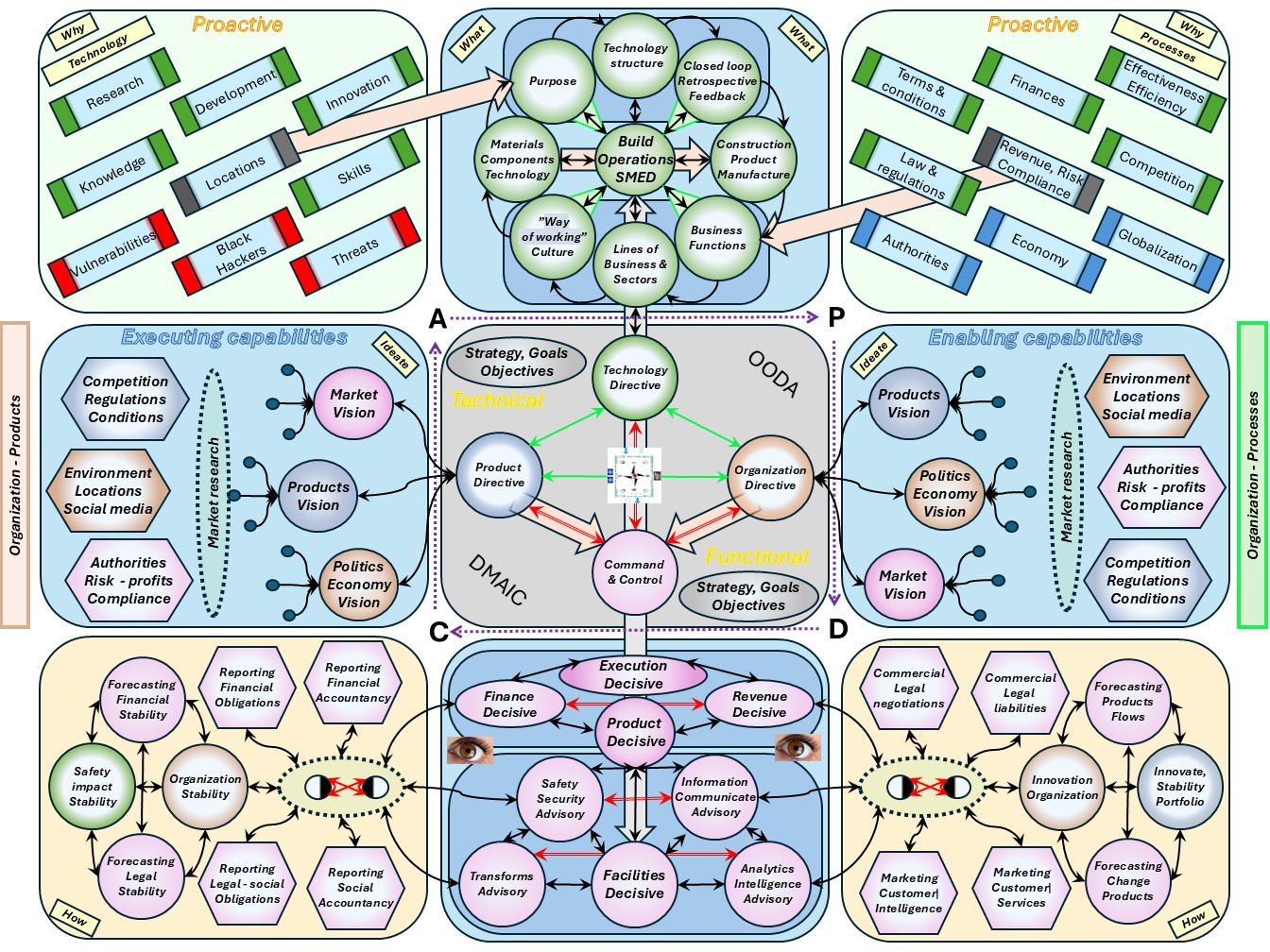

⚙ M-1.1.1 Global content

Command & Control: describing the processes being processed.

The information explosion: what has changed is the amount of information we collect by measuring processes, which itself becomes new information (edge).

📚 Information questions Why.

⚙ how to do measurements for information.

🎭 What to do with new information insight?

⚖ Legally & ethically acceptable?

To go logically backward:

🔰 Too fast ..

previous.

⚙ M-1.1.2 Local content

| Reference | Squad | Abbreviation |

| M-1 Command & Control - investigation situation | |

| M-1.1 Contents | contents | Contents |

| M-1.1.1 Global content | |

| M-1.1.2 Local content | |

| M-1.1.3 Guide reading this page | |

| M-1.1.4 Progress | |

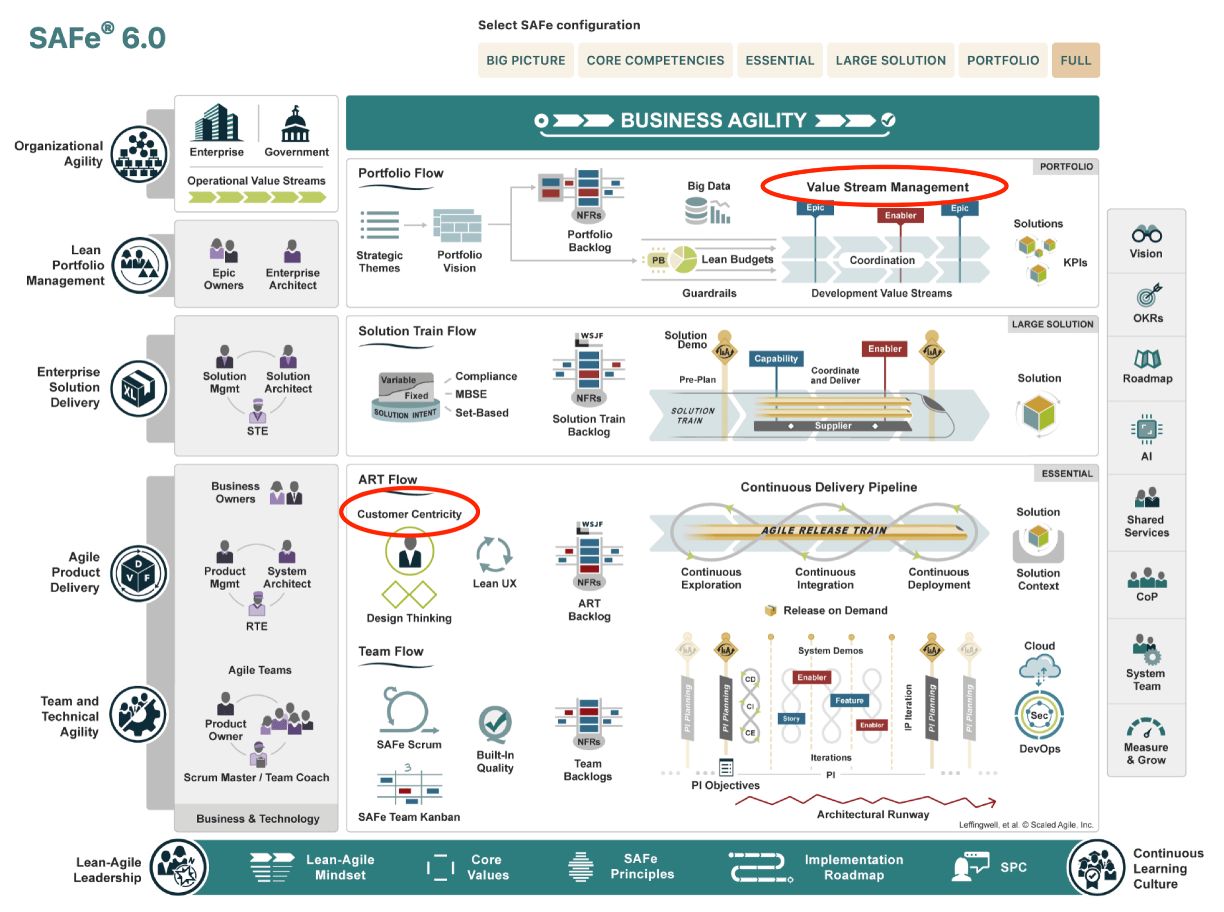

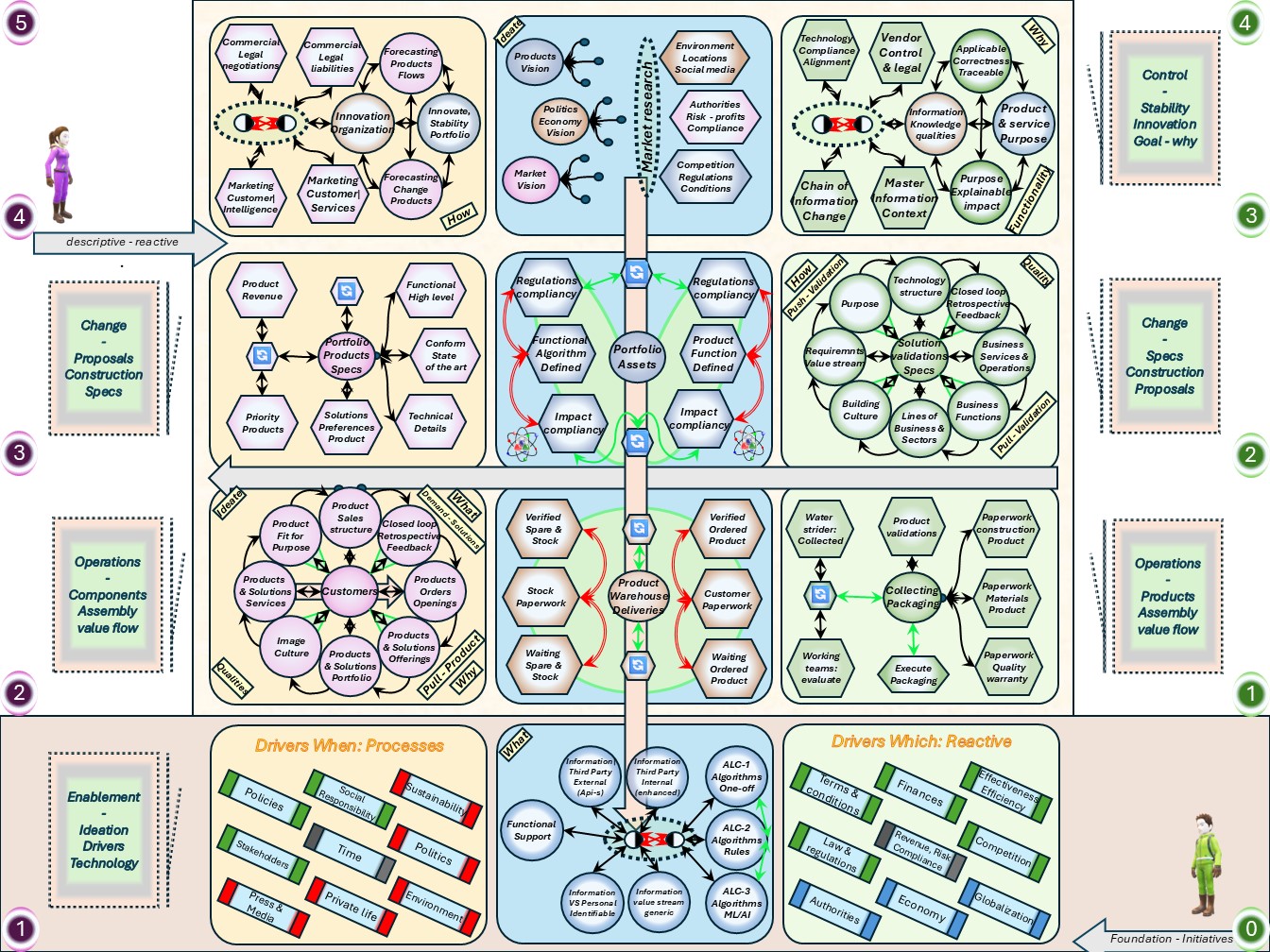

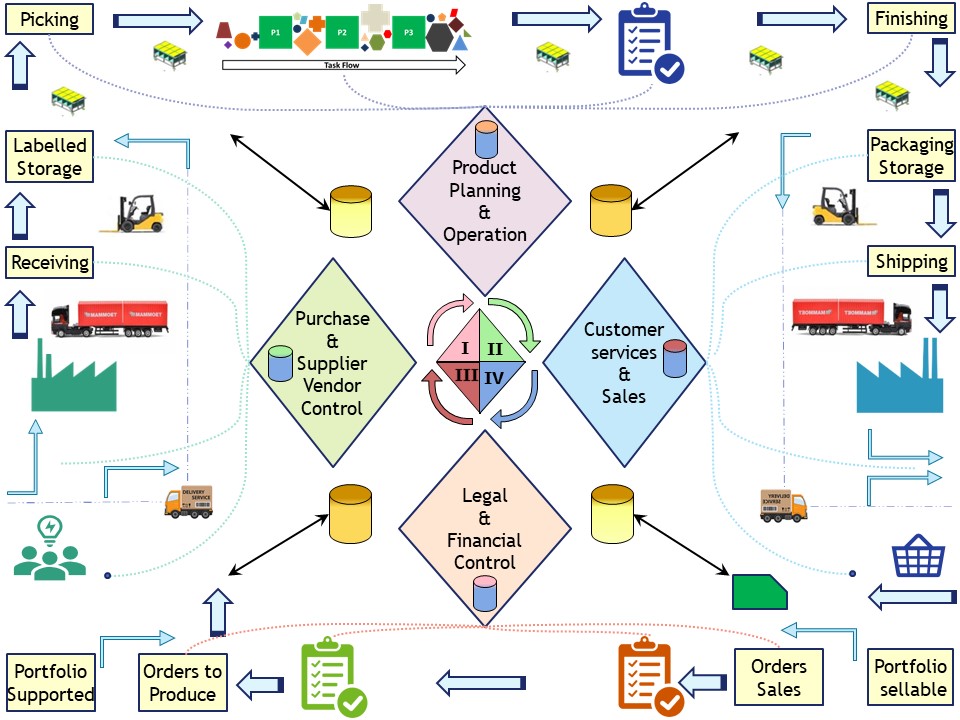

| M-1.2 Floor plans, ordered dimensions | C6isrist_02 | I_Floor |

| M-1.2.1 Multidimensional contexts | |

| M-1.2.2 The Siar model | |

| M-1.2.3 Multidimensional ordered contexts | |

| M-1.2.4 Advanced PDCA DMAIC understanding: Lean | |

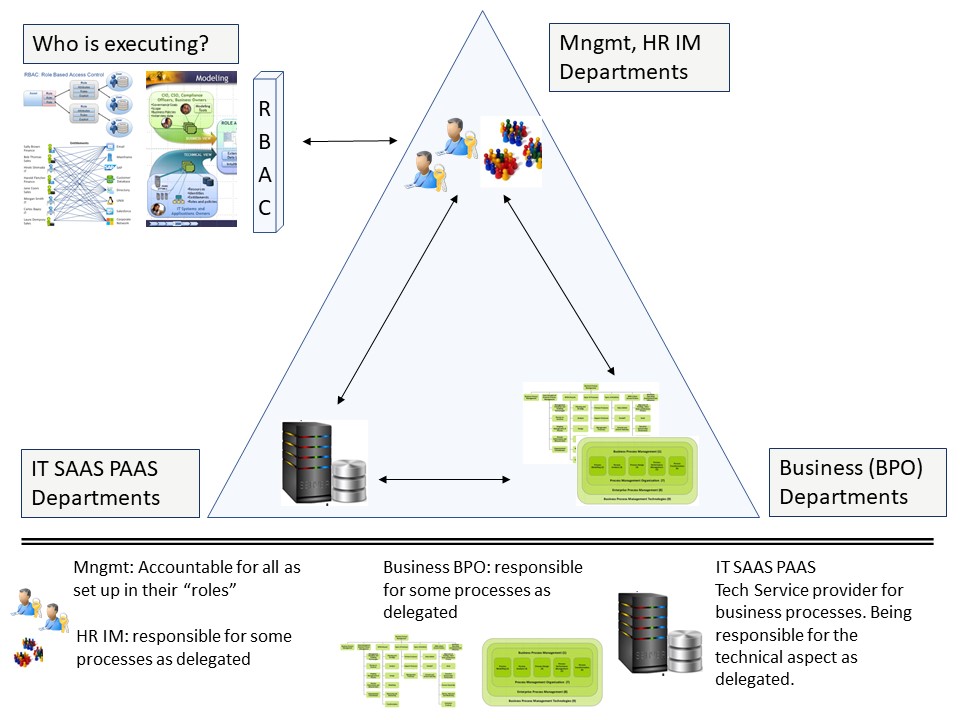

| M-1.3 Steering roles tasks | C6isrist_03 | C_Tasks |

| M-1.3.1 With what to start understanding steering? | |

| M-1.3.2 How to steer, understanding improvements | |

| M-1.3.3 Strategy Who: Hoshin Kanri, Vision, Mission | |

| M-1.3.4 Strategy Levels in the organisations | |

| M-1.4 Culture building people | C6isrist_04 | H_Culture |

| M-1.4.1 Understanding, awareness of the situation | |

| M-1.4.2 Having respect for people | |

| M-1.4.3 Understanding the strategy the goal | |

| M-1.4.4 Strategy execution with insight | |

| M-1.5 Sound underpinned theory, foundation | C6isrist_05 | C_Theory |

| M-1.5.1 Information processes basic lean requirements | |

| M-1.5.2 Ideas from an anatomy, routes by maps, usefulness | |

| M-1.5.3 Process foundation, the fundaments basic creation | |

| M-1.5.4 Information processes controlled evaluated changes | |

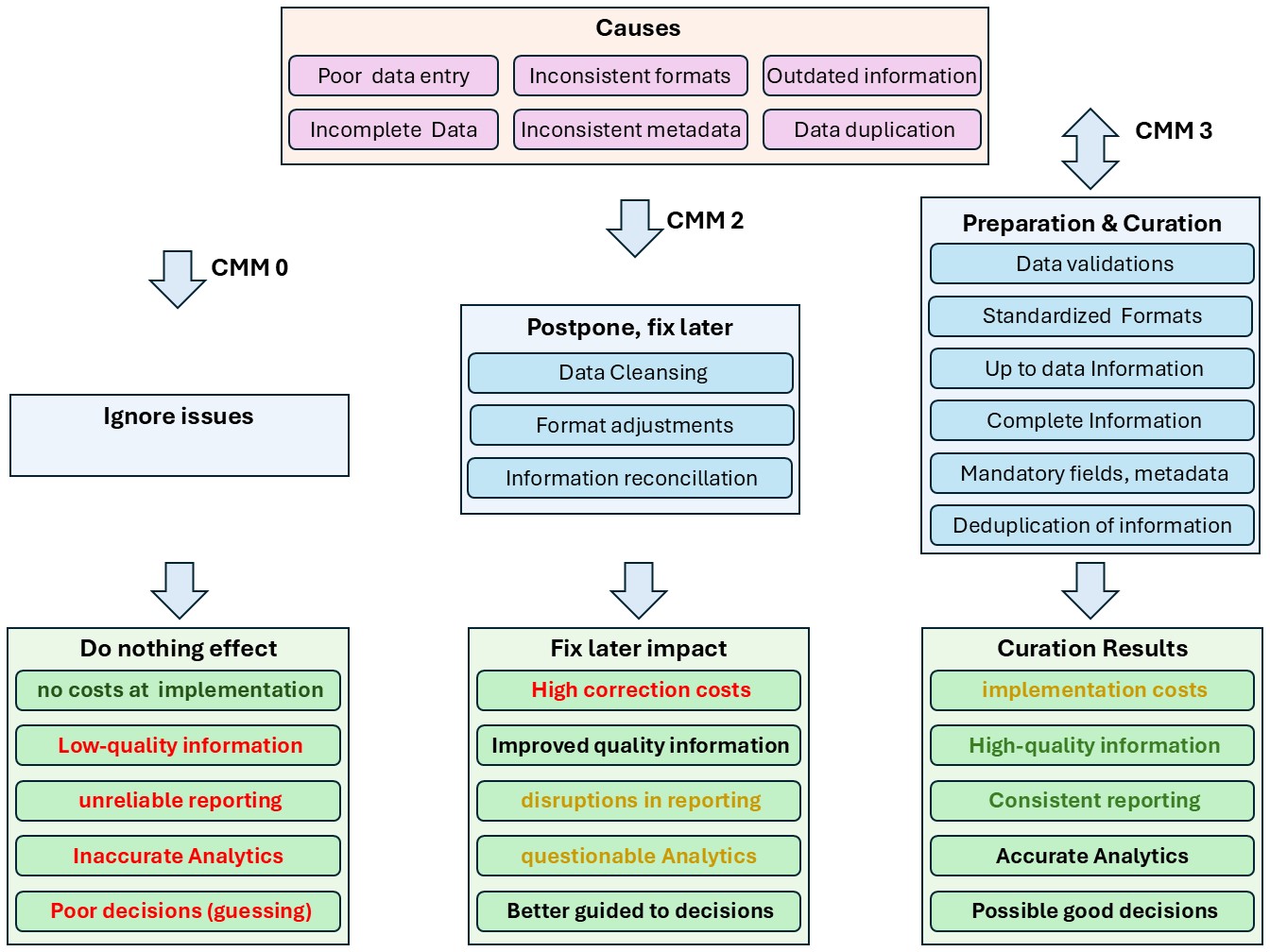

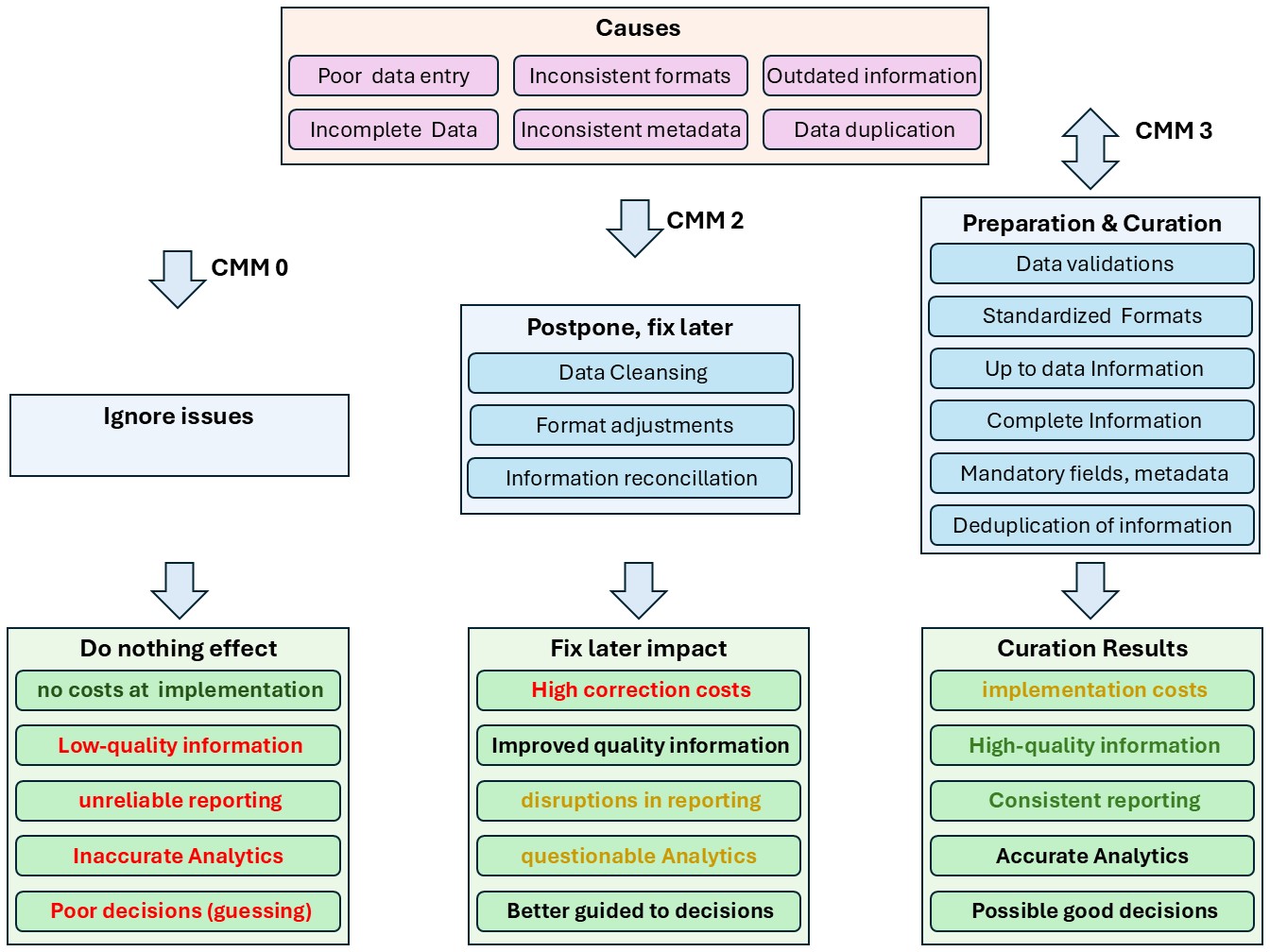

| M-1.6 Maturity 0: Strategy impact understood | C6isrist_06 | CMM0-SIM |

| M-1.6.1 Determining the position, the situation | |

| M-1.6.2 Determining the destination, strategy | |

| M-1.6.3 Managing Uncertainties, confusion, ambiguities (MUCA) | |

| M-1.6.4 Ambiguity in dimensions: foundation floorplan | |

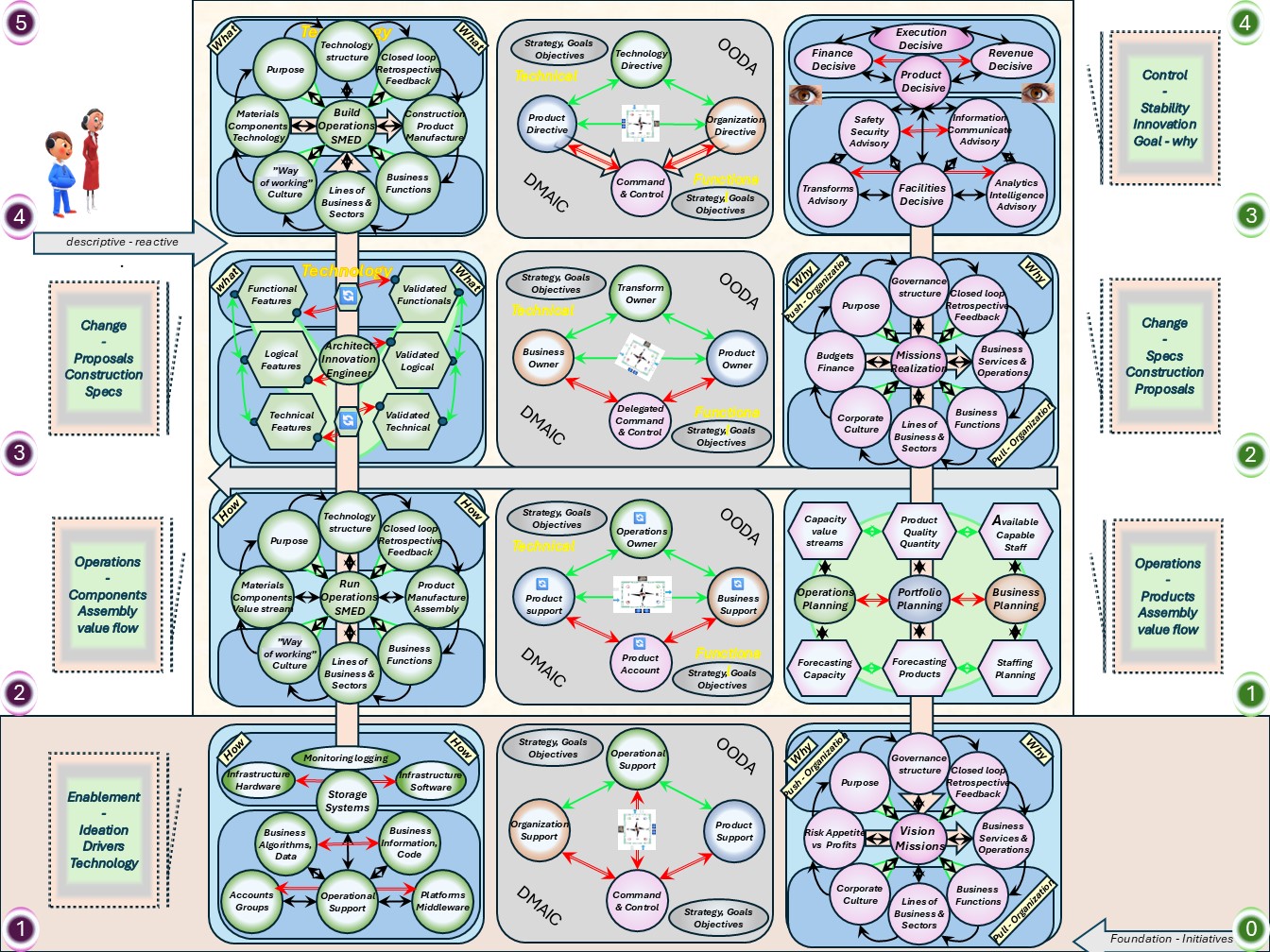

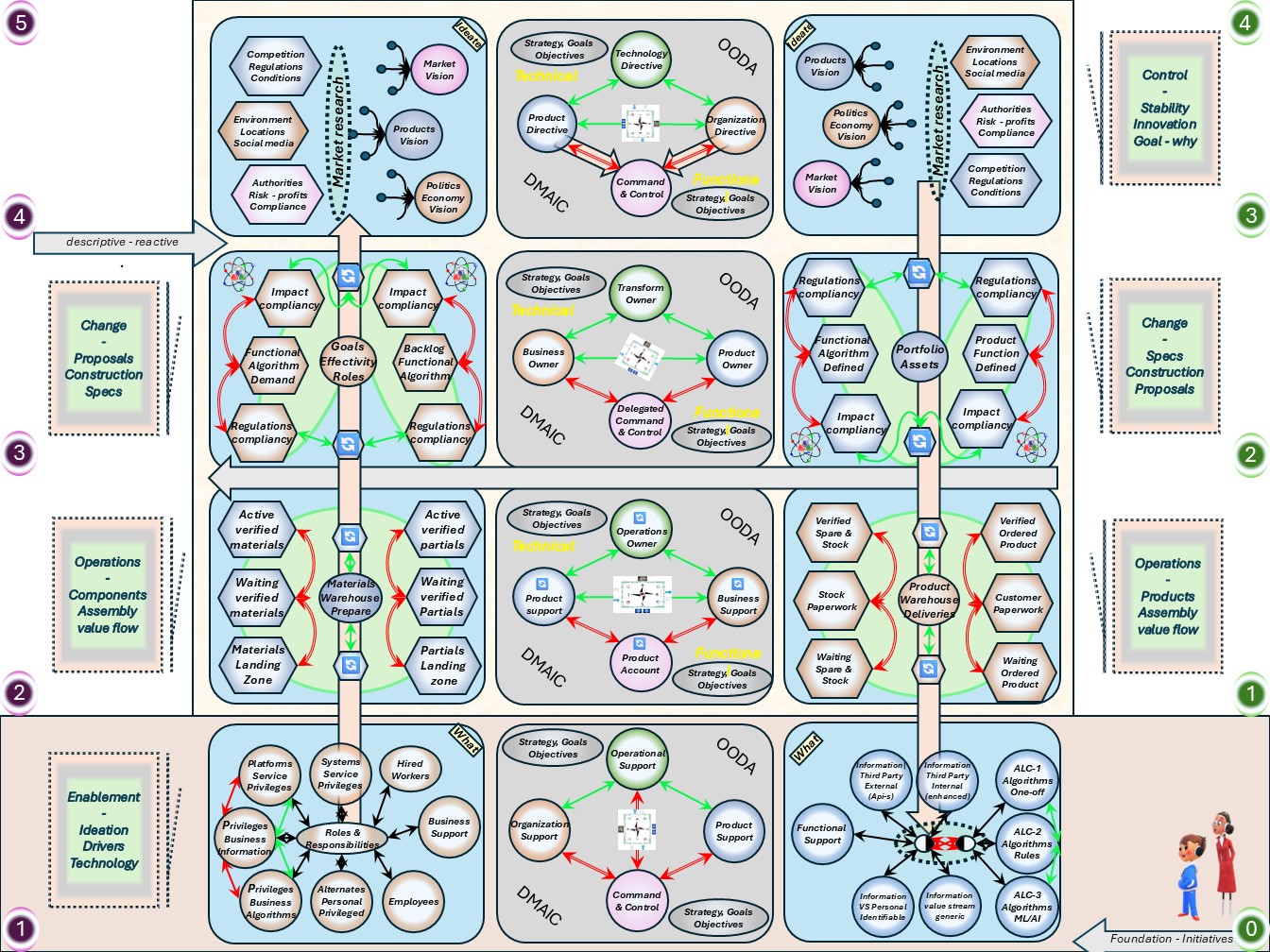

| M-2 Command & Control gaps understanding and solutions | |

| M-2.1 Seeing ICT Service Gap types | C6isrhow_01 | 👁-GAP |

| M-2.1.1 Understanding safety, functionality by requirements | |

| M-2.1.2 Changing safety or functionality | |

| M-2.1.3 Safety technology alignment | |

| M-2.1.4 Safety information, data, alignment | |

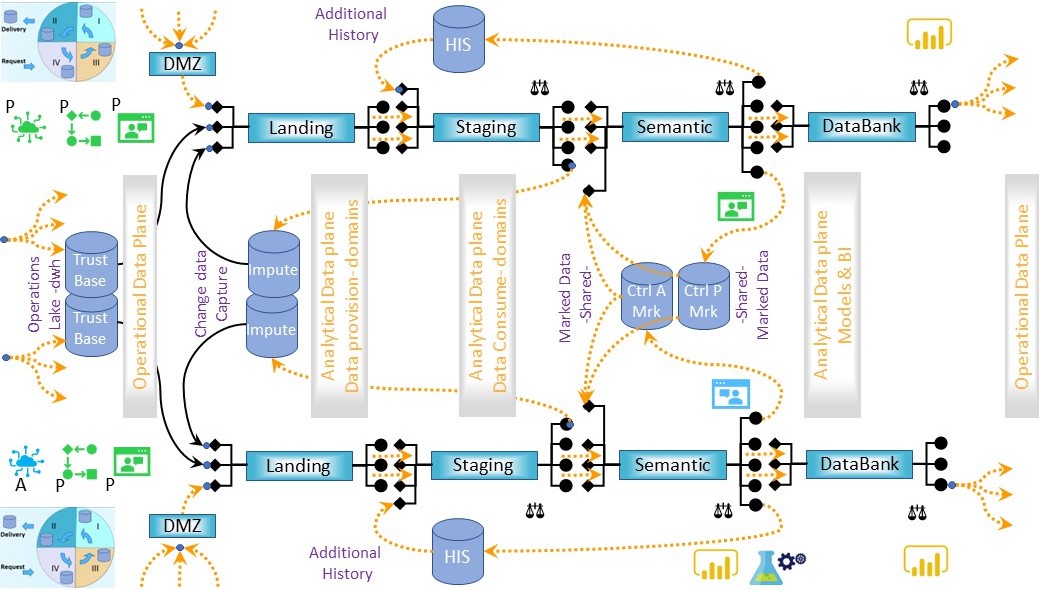

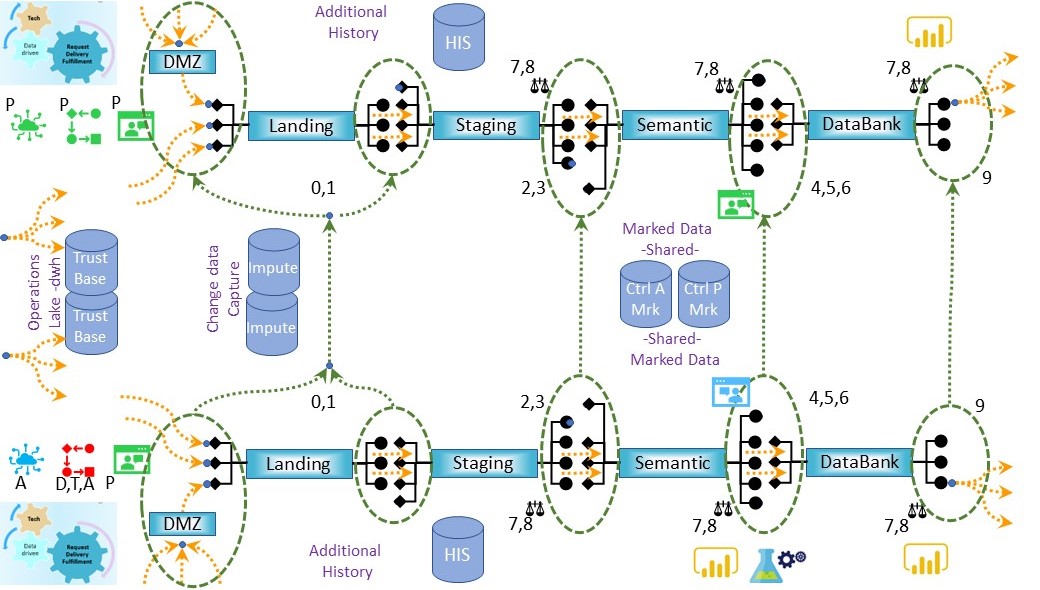

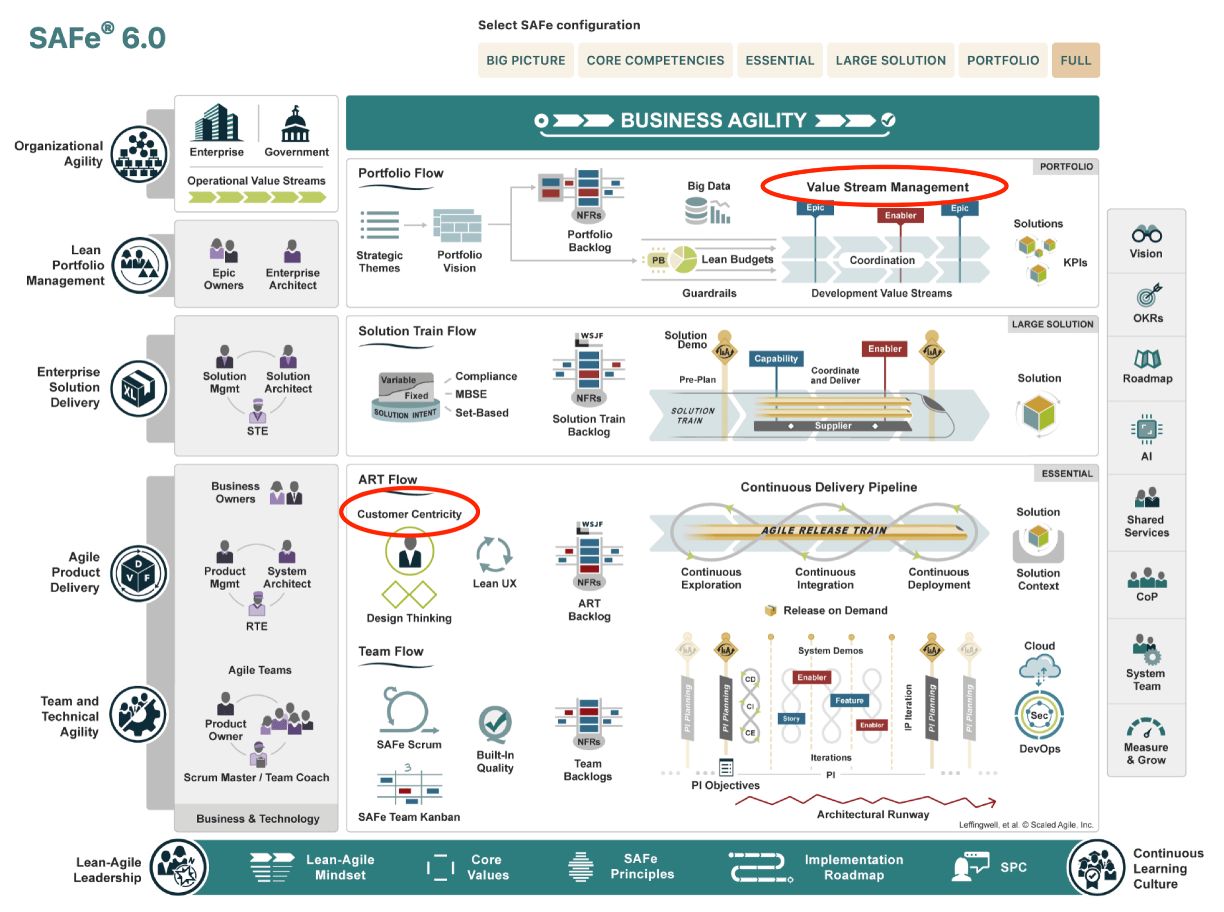

| M-2.2 Floor plans, seeing value streams | C6isrhow_02 | T_Value |

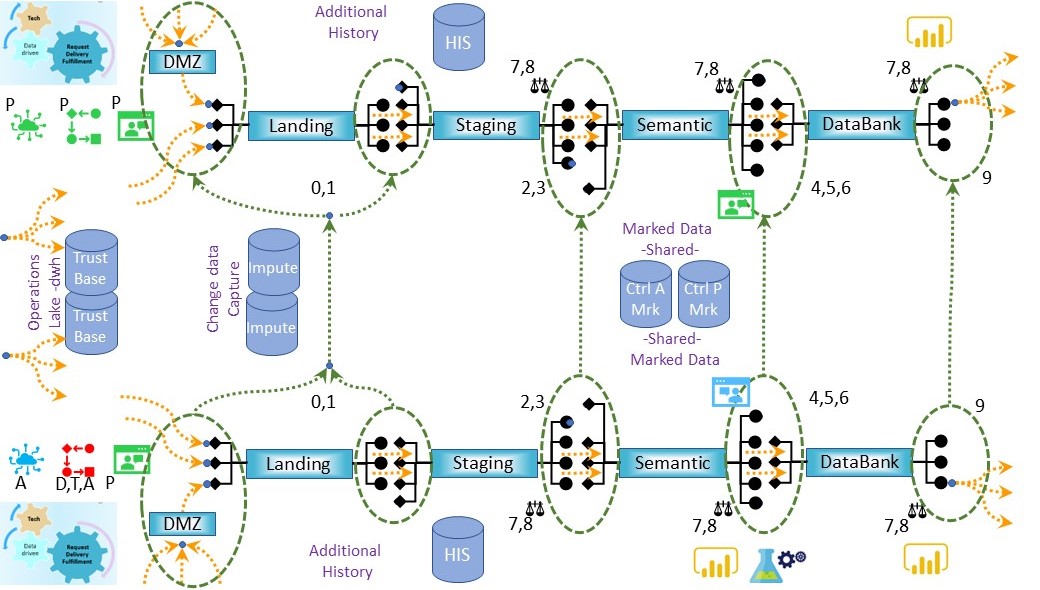

| M-2.2.1 Standard patterns information flows, process changes | |

| M-2.2.2 Information, process flow - change flow | |

| M-2.2.3 Processing information vs industrial differences | |

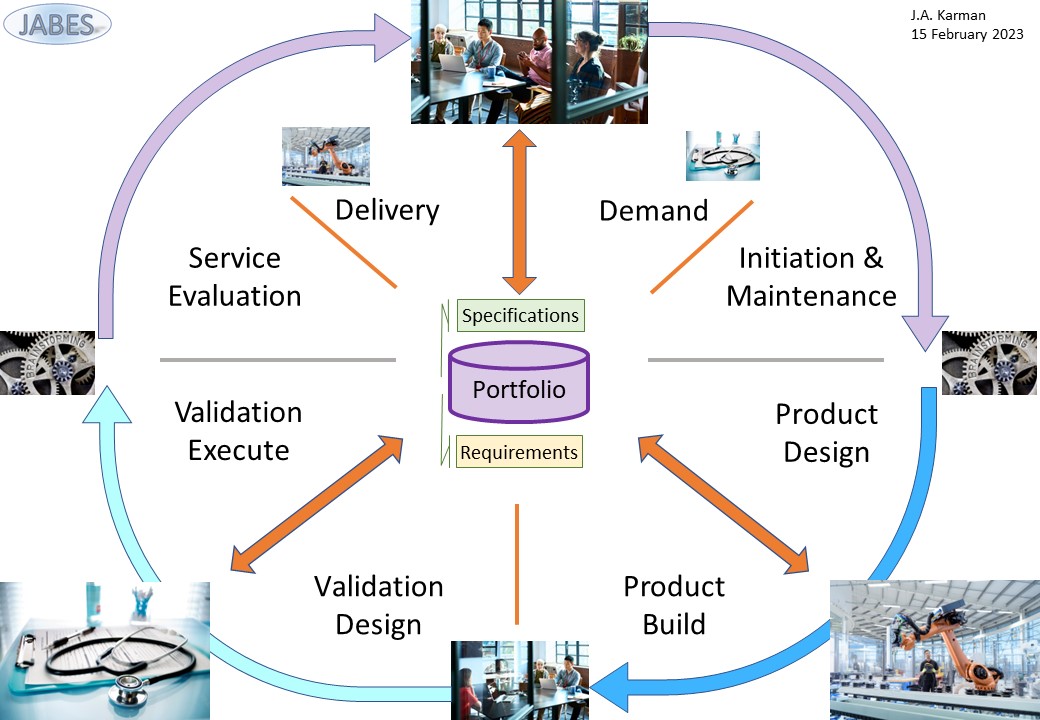

| M-2.2.4 Using the portfolio management system Jabes | |

| M-2.3 How to steer in the information landscape | C6isrhow_03 | C_Steer |

| M-2.3.1 Strategy learning, improvements | |

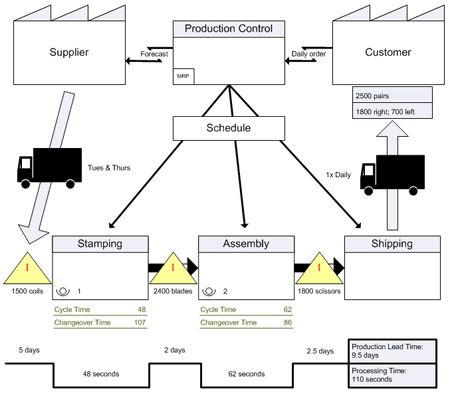

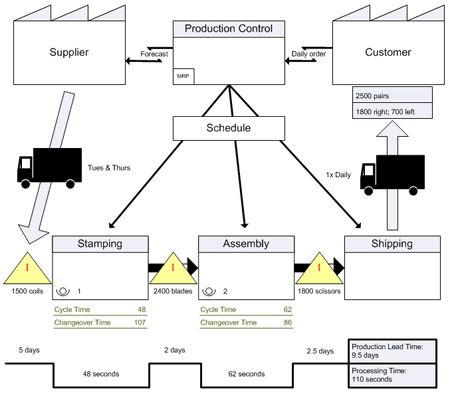

| M-2.3.2 The practical Value stream map, VaSM | |

| M-2.3.3 Defining, analysing the value stream | |

| M-2.3.4 Getting value from the value stream | |

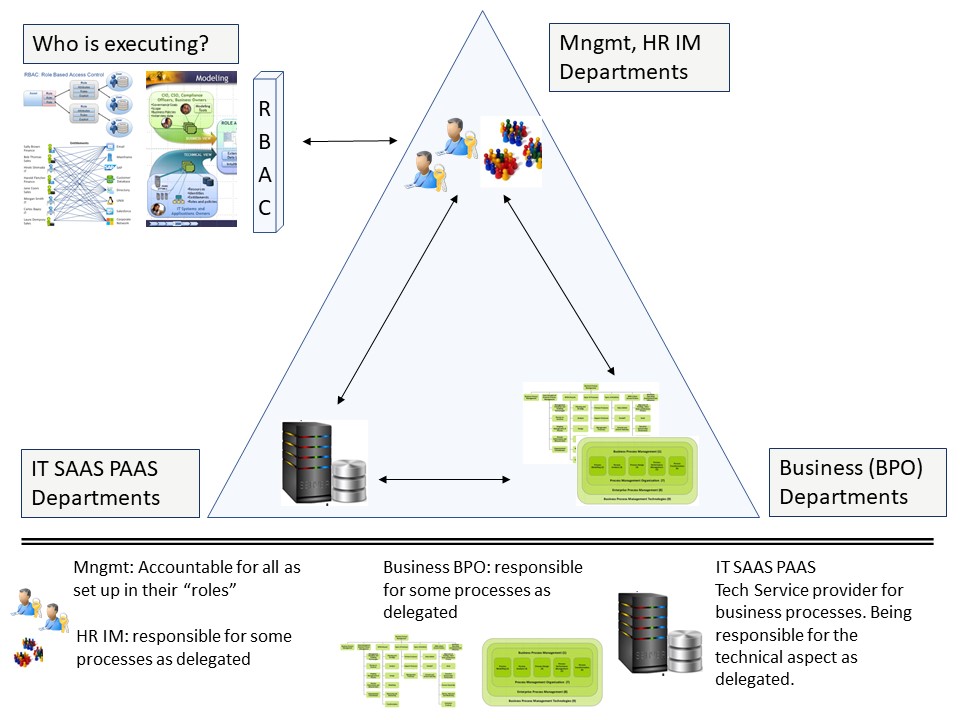

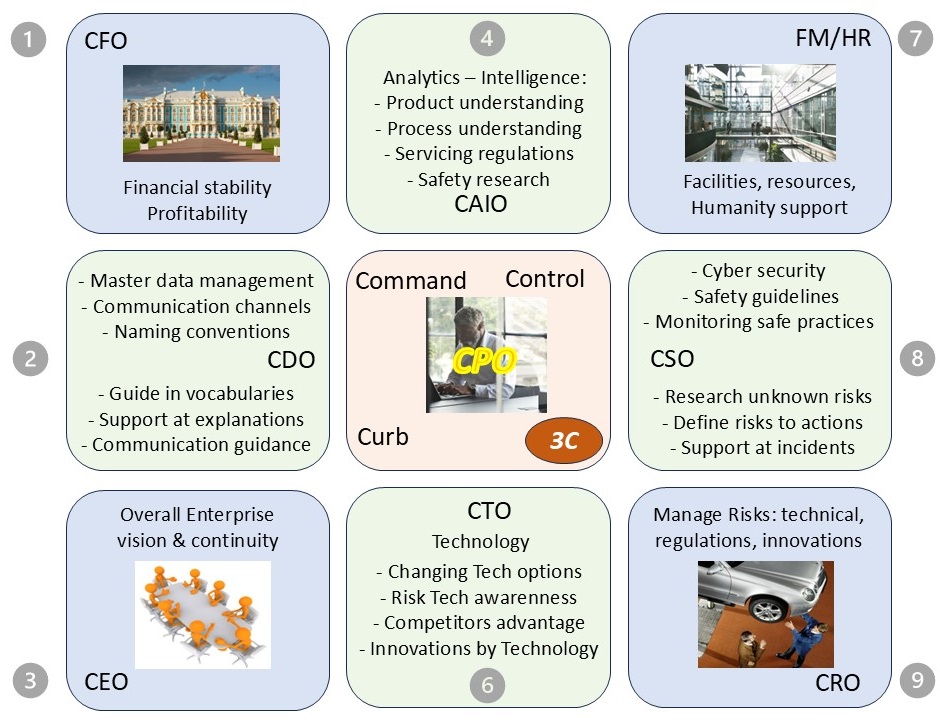

| M-2.4 Roles tasks in the boardroom | C6isrhow_04 | C_Culture |

| M-2.4.1 Changing role C-level | |

| M-2.4.2 Steering changing roles (I) | |

| M-2.4.3 Steering changing roles (II) | |

| M-2.4.4 Transforming steering roles | |

| M-2.5 Sound underpinned theory, operations | C6isrhow_05 | ✅-logic |

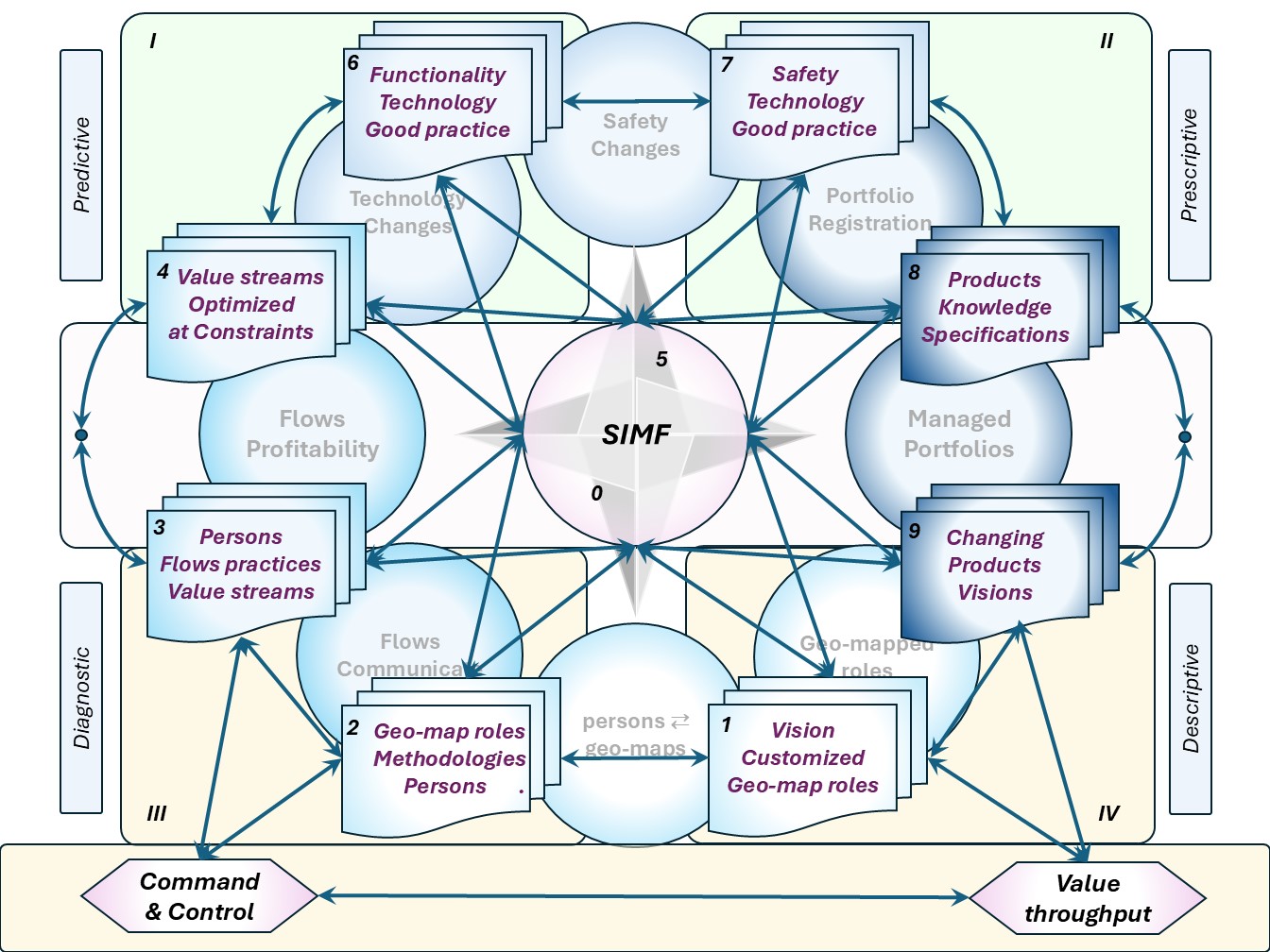

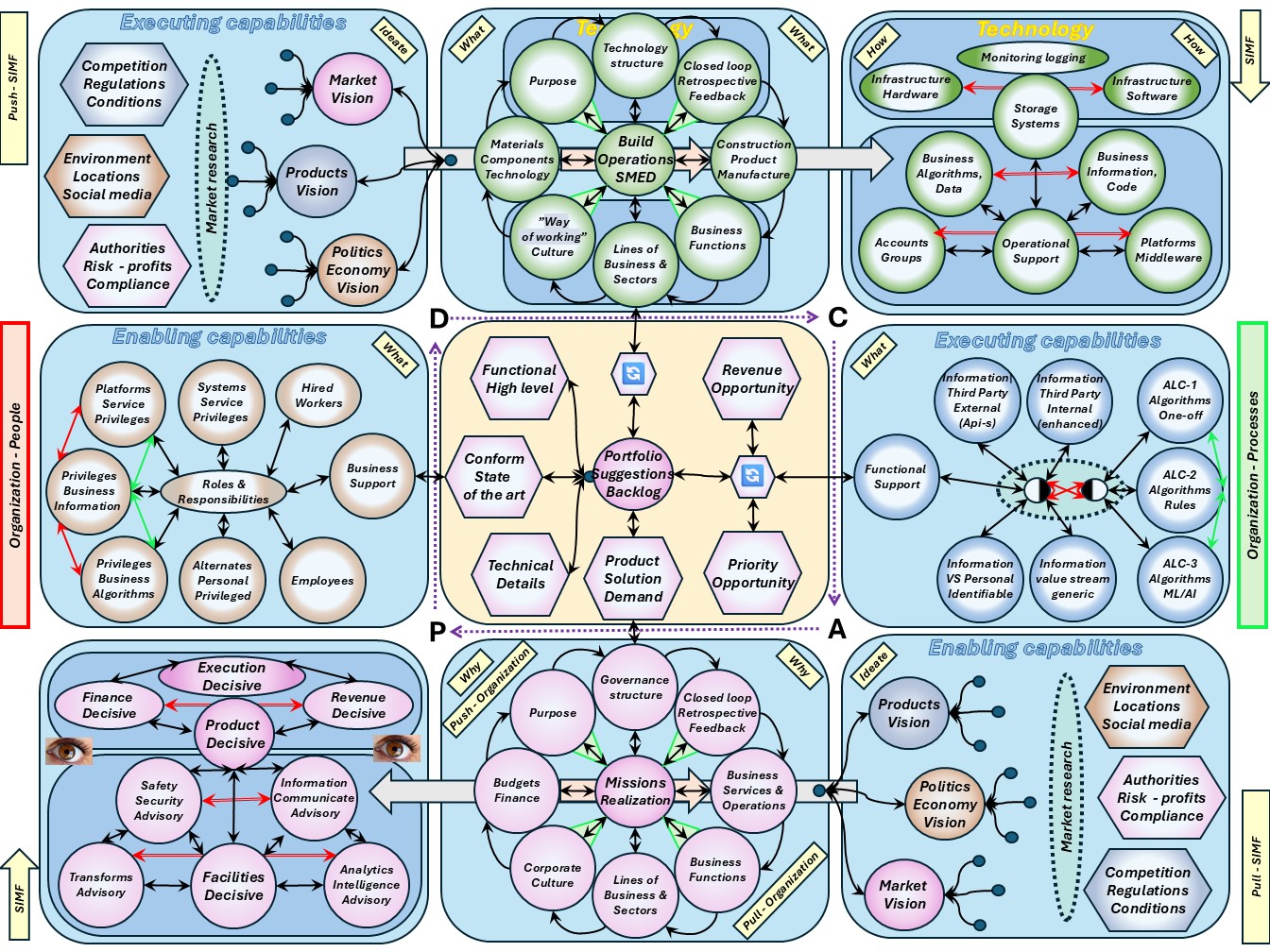

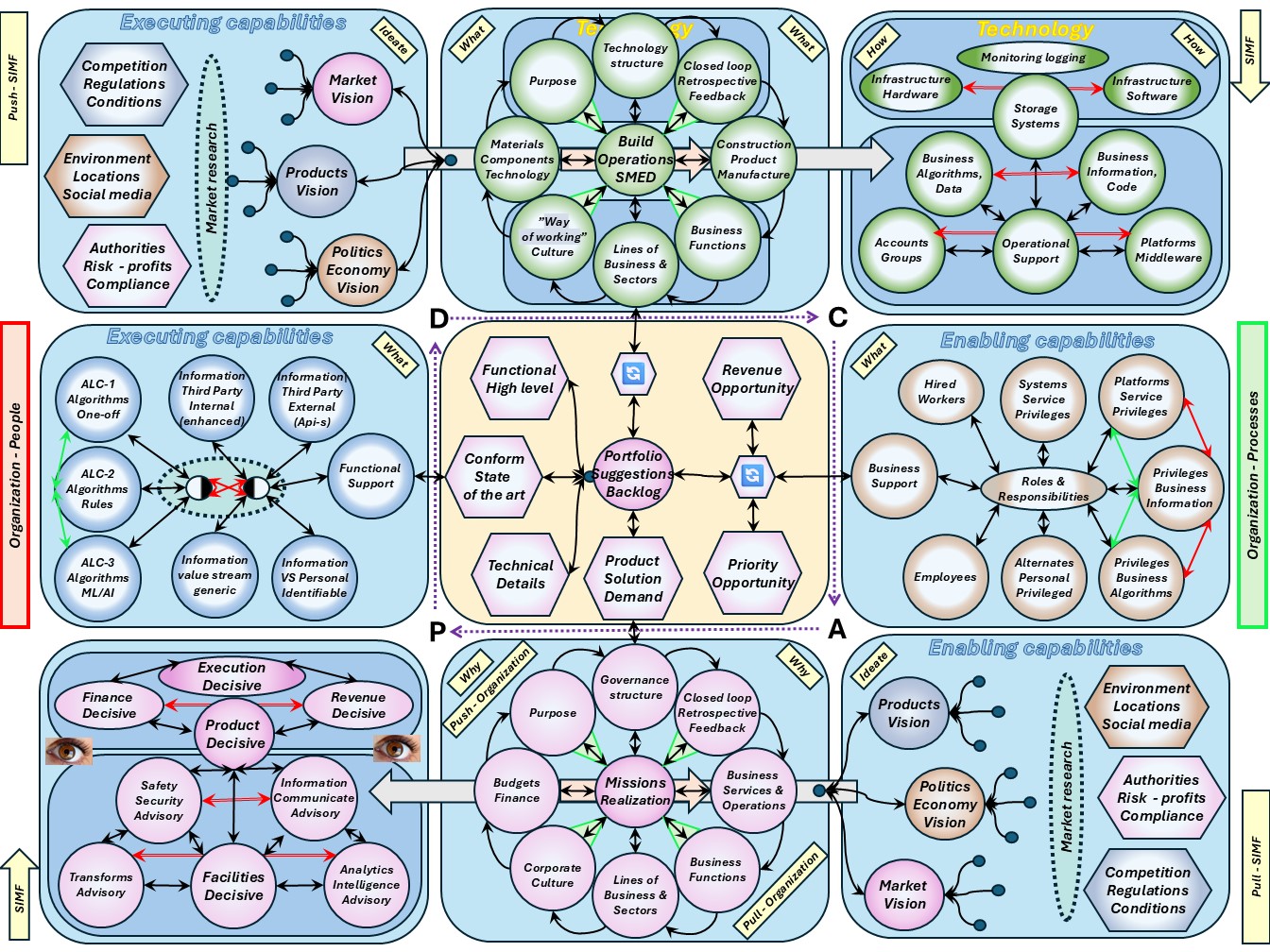

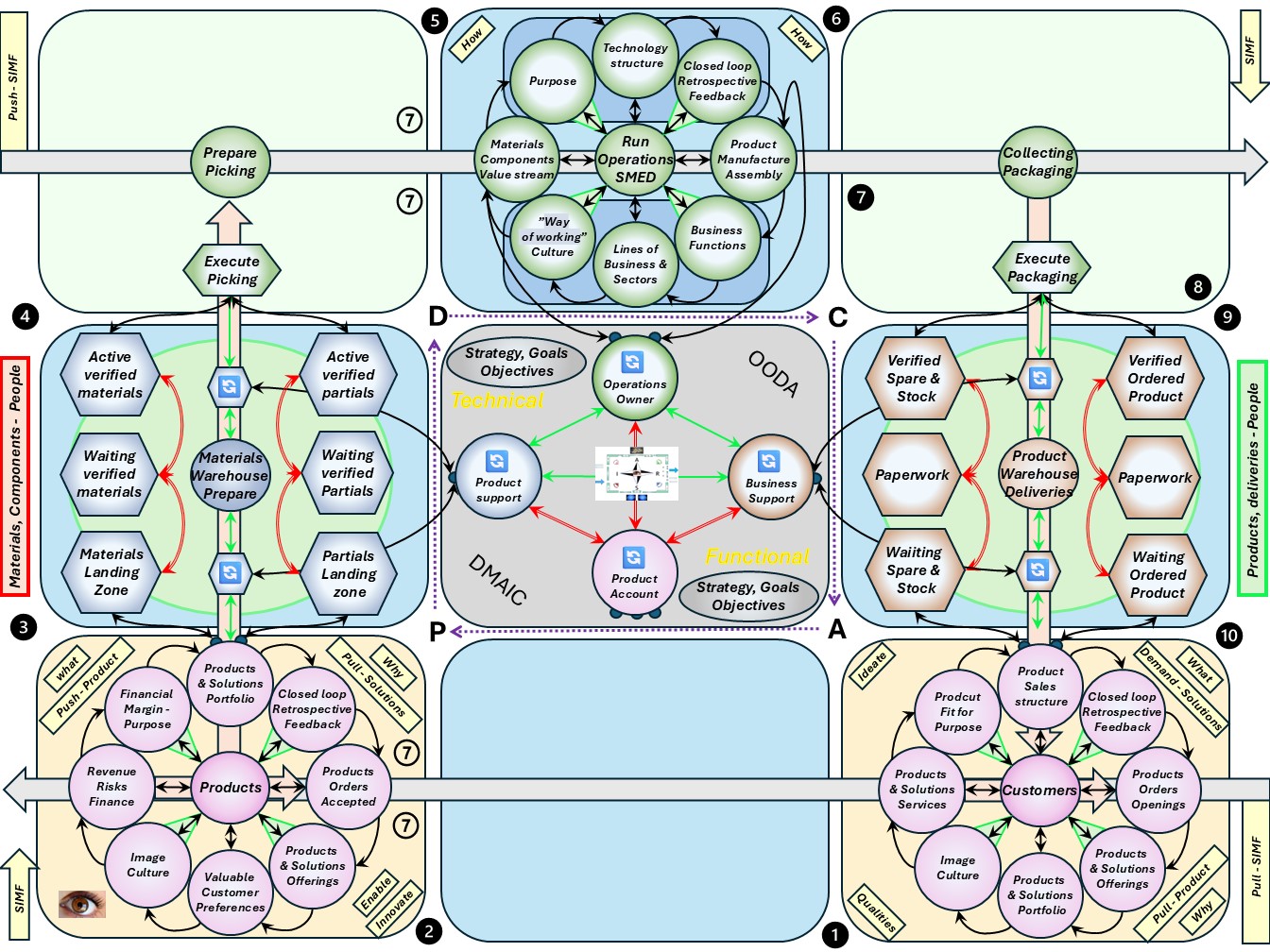

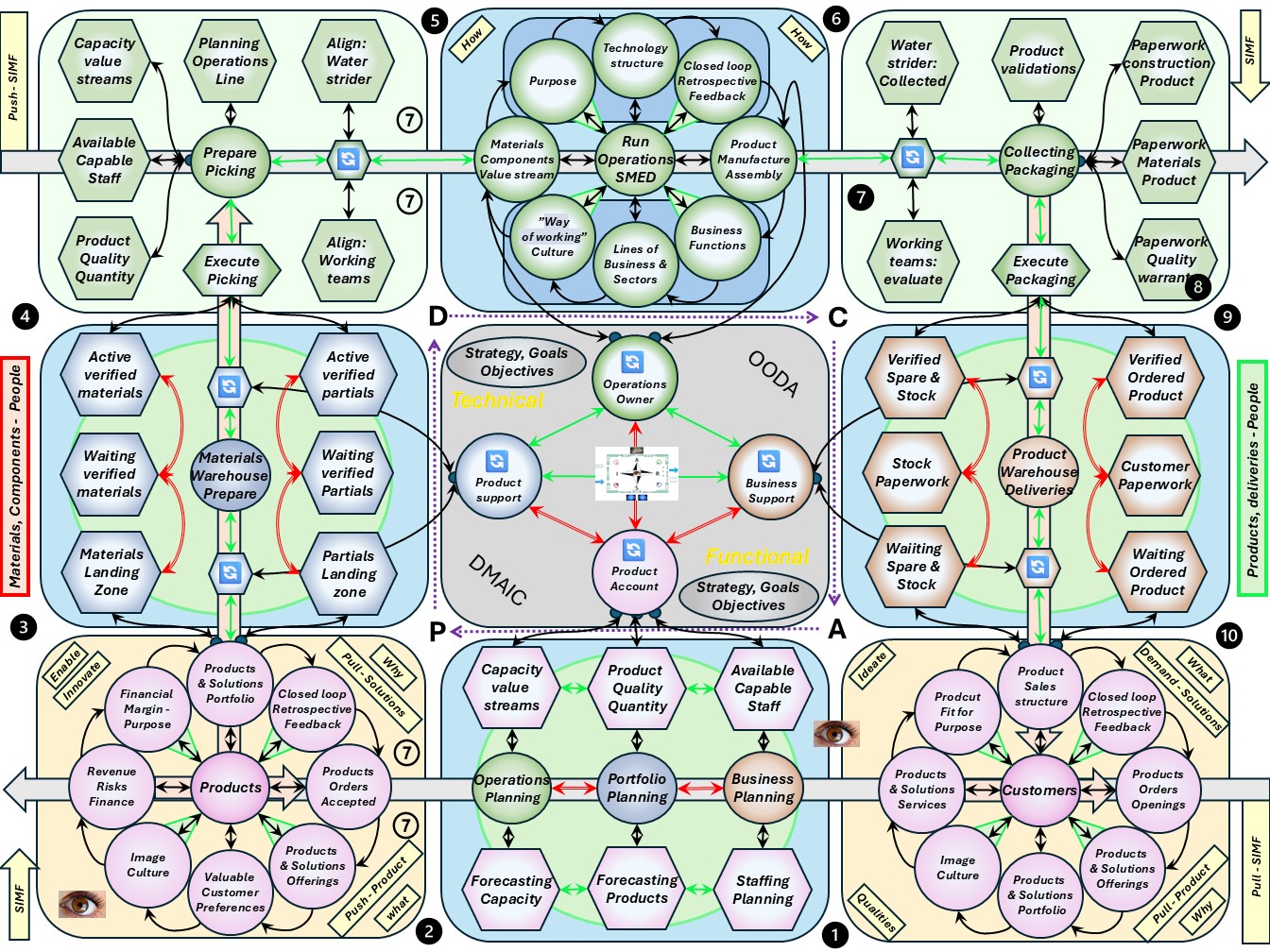

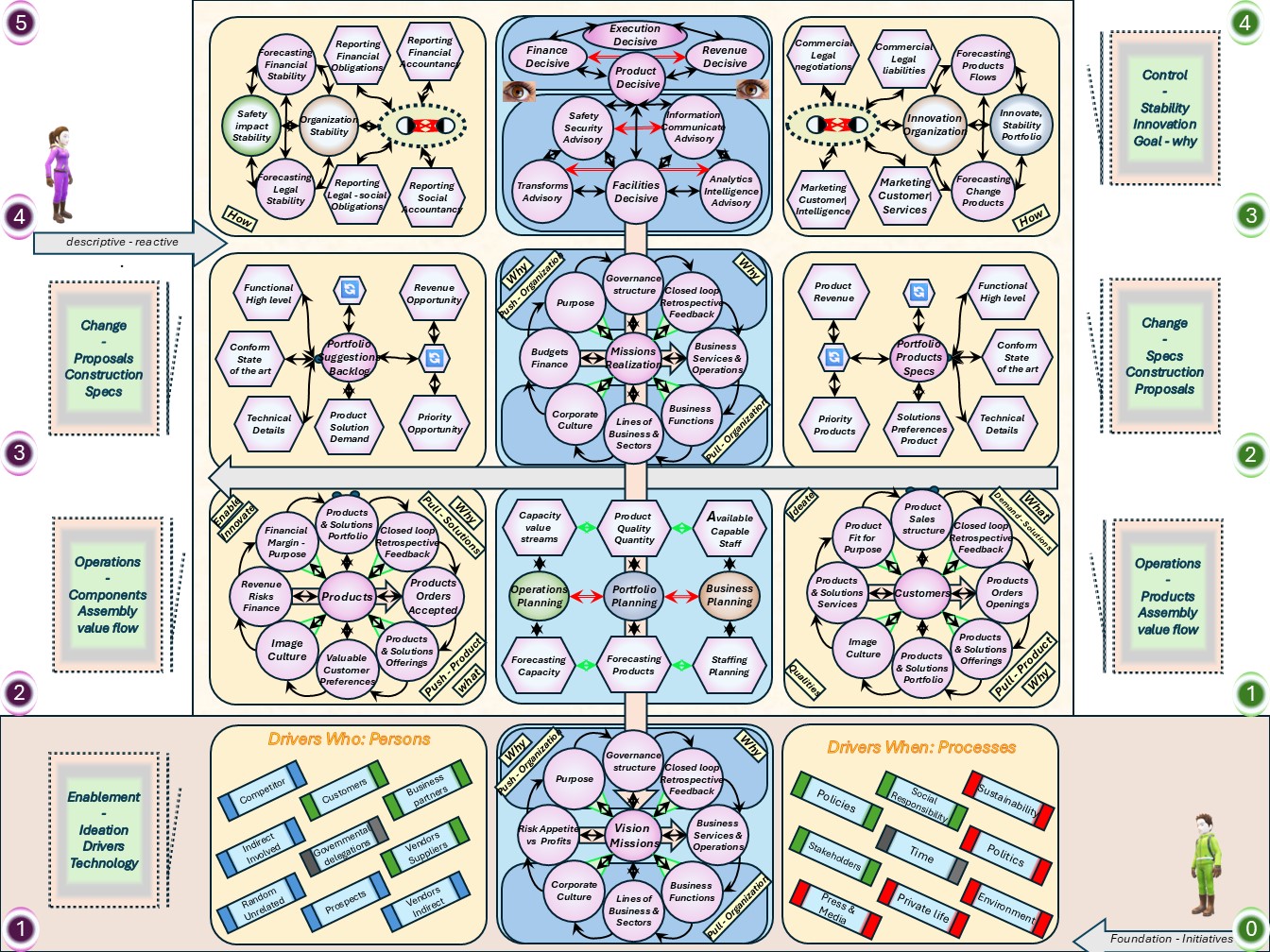

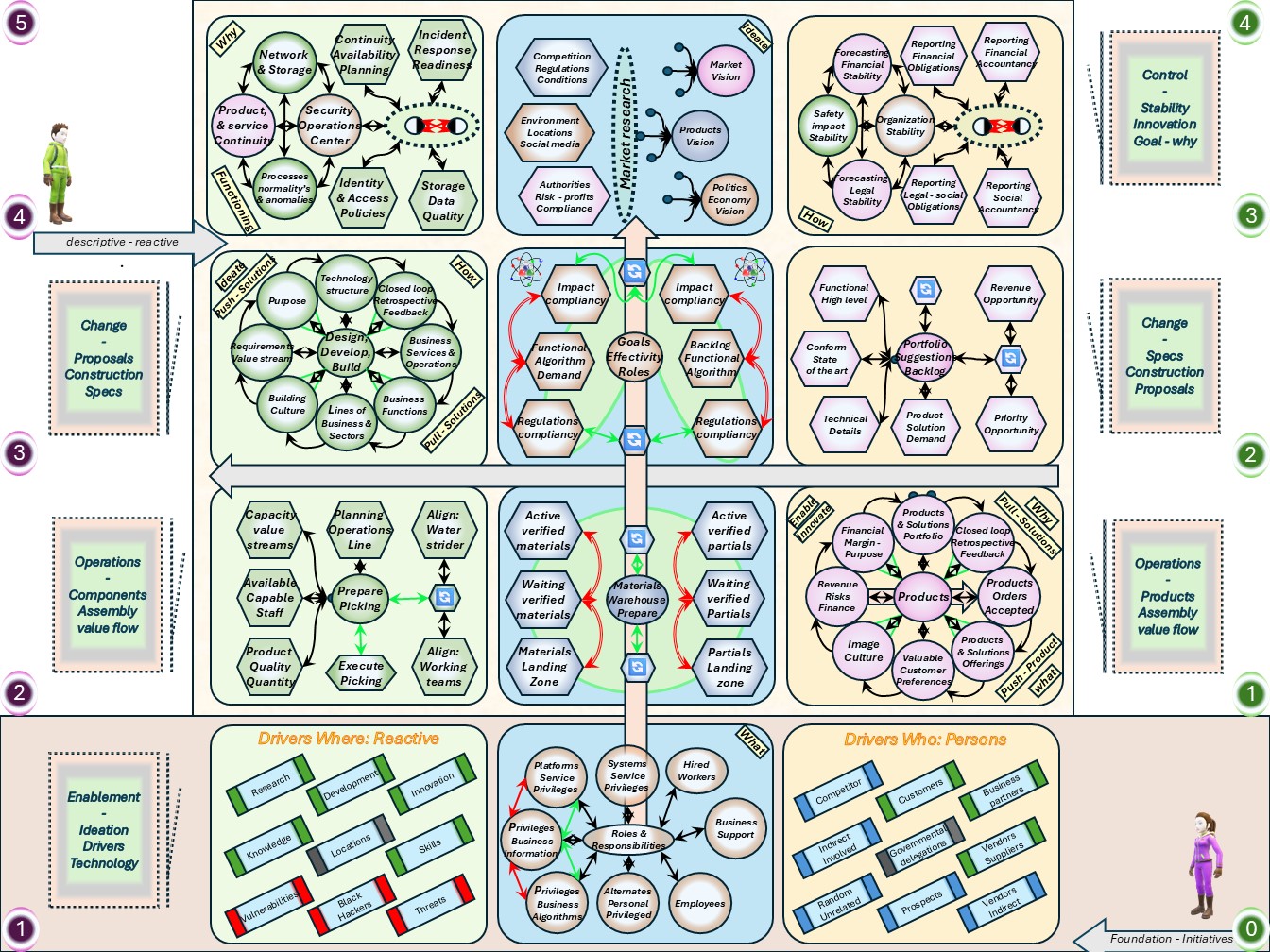

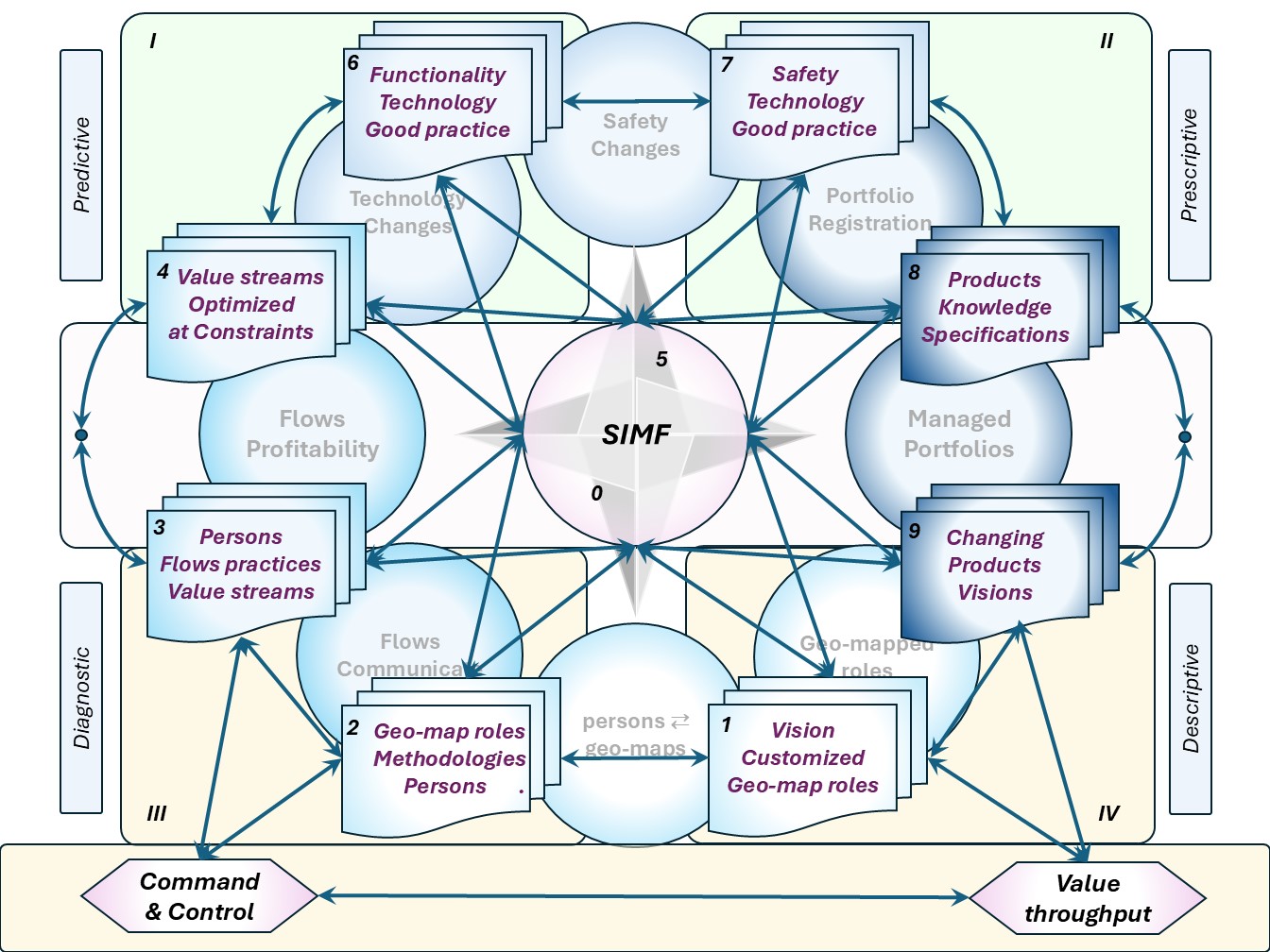

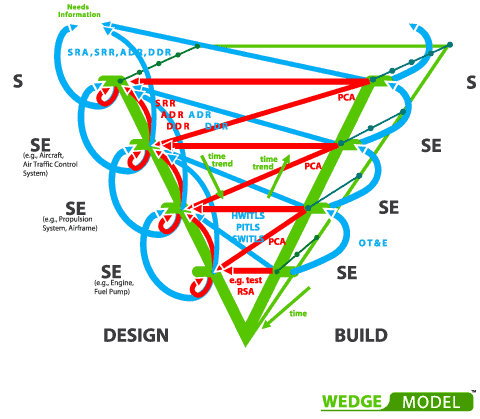

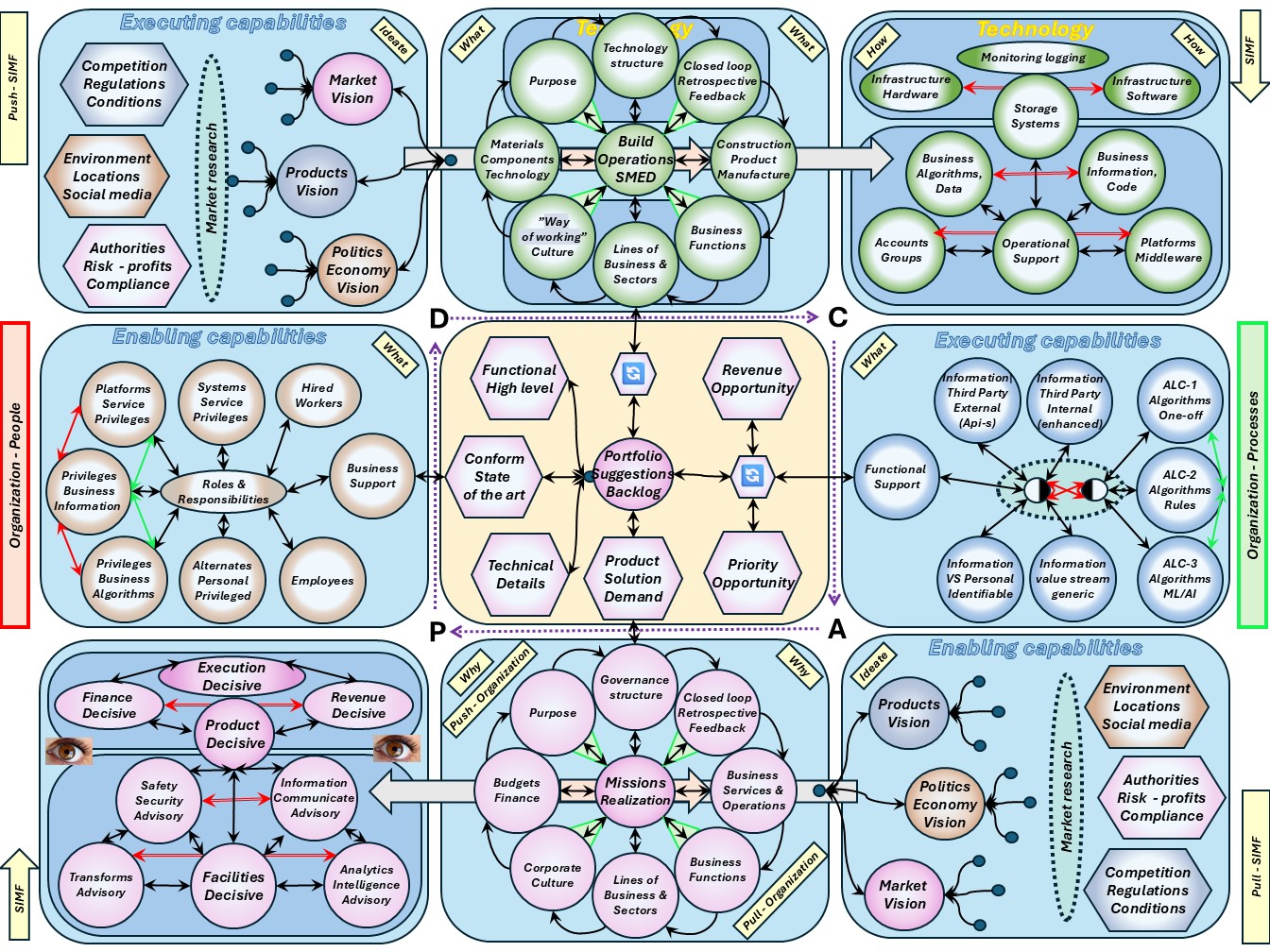

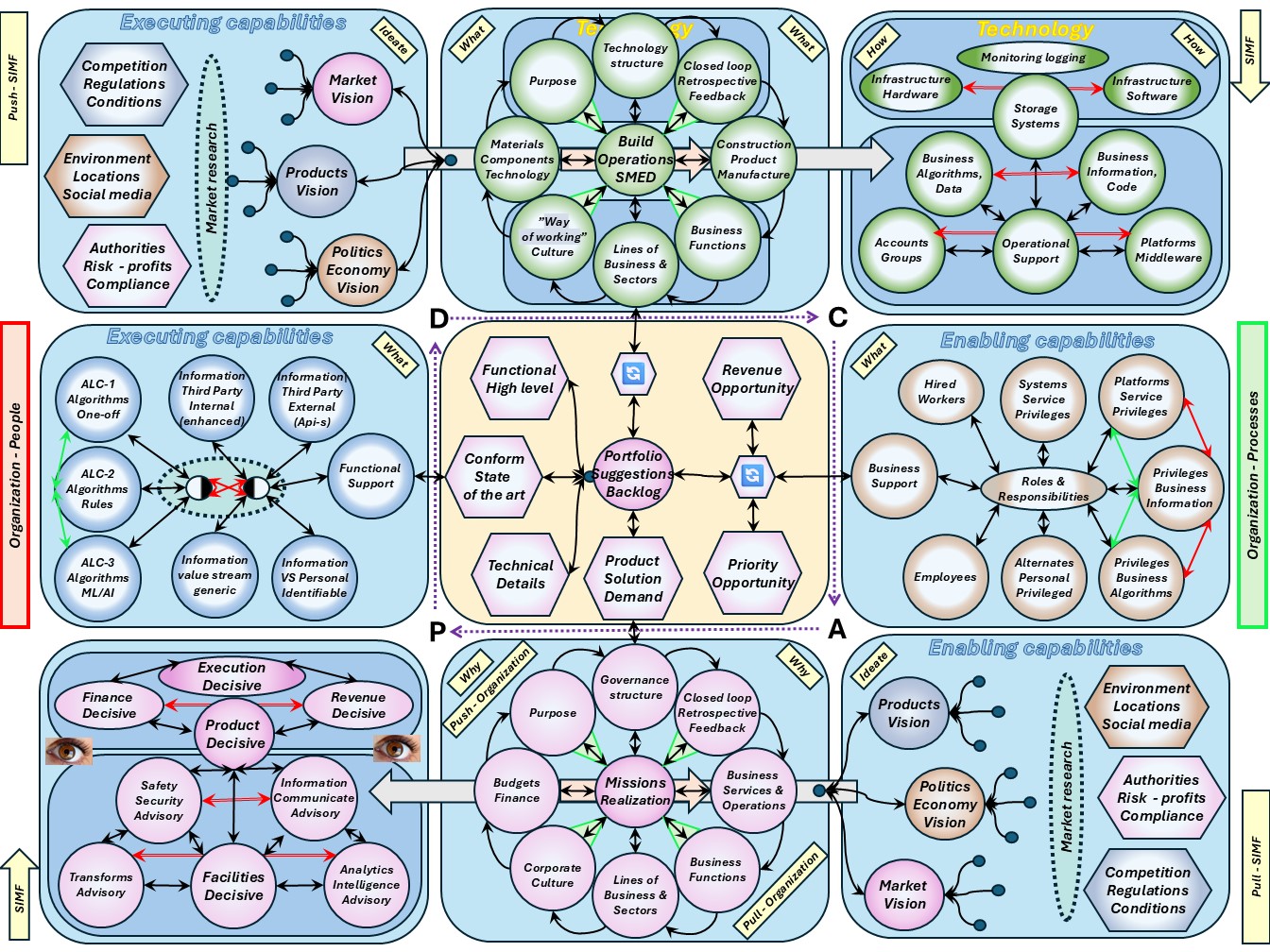

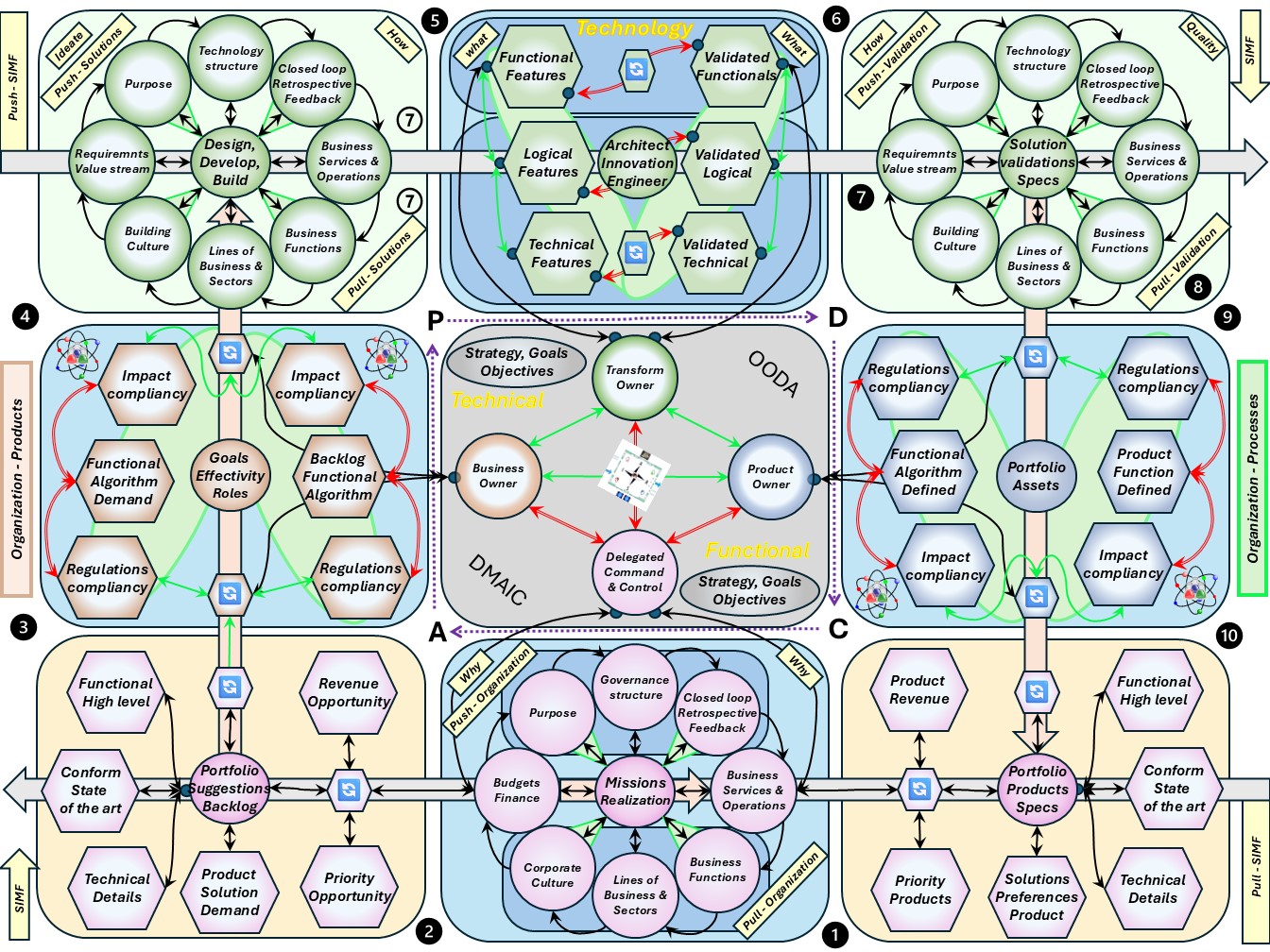

| M-2.5.1 Simf: the complete operational flow cycle | |

| M-2.5.2 Simf: the complete design & build flow cycle | |

| M-2.5.3 Realisation, control & command dimensions seen outside | |

| M-2.5.4 Antipodes in Simf and maturity levels | |

| M-2.6 Maturity 3: Enable strategy to operations | C6isrhow_06 | CMM3-SIM |

| M-2.6.1 Short term results or long term results (I)? | |

| M-2.6.2 Short term results or long term results (II)? | |

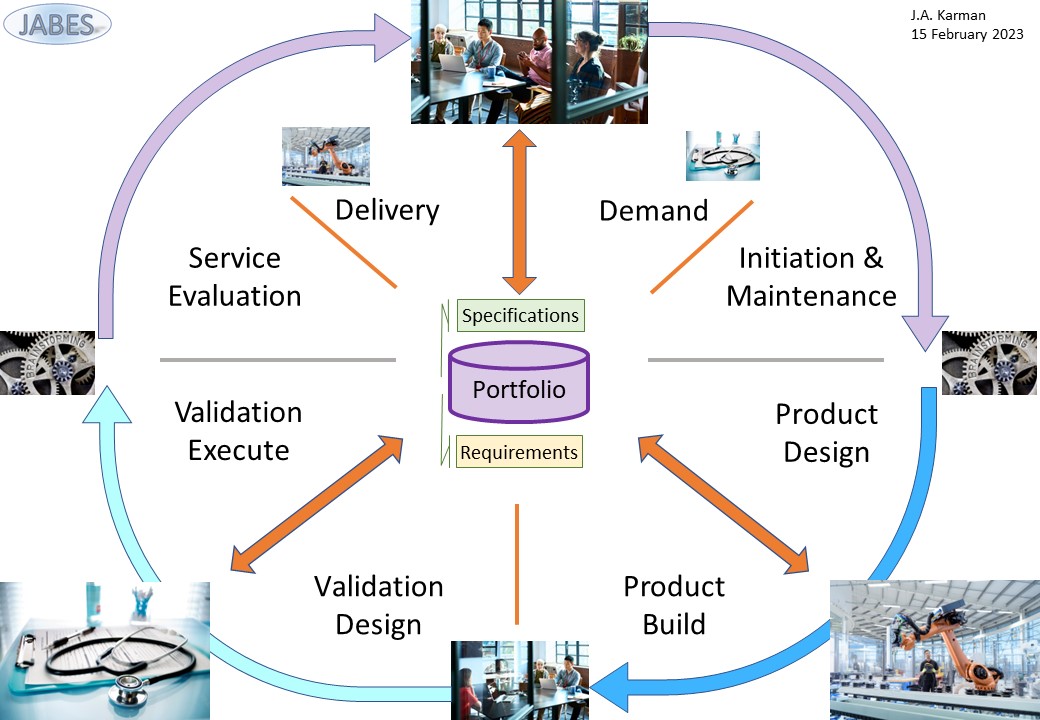

| M-2.6.3 A portfolio with specification | |

| M-2.6.4 Purposeful products, services by an organisation | |

| M-3 Command & Control innovations and the future | |

| M-3.1 Information processing in the information age | C6isrsll_01 | I_Sage |

| M-3.1.1 Effectivity, efficiency, lean, agility | |

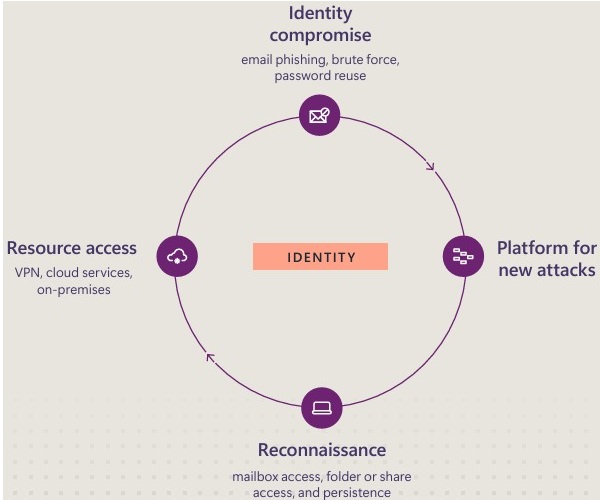

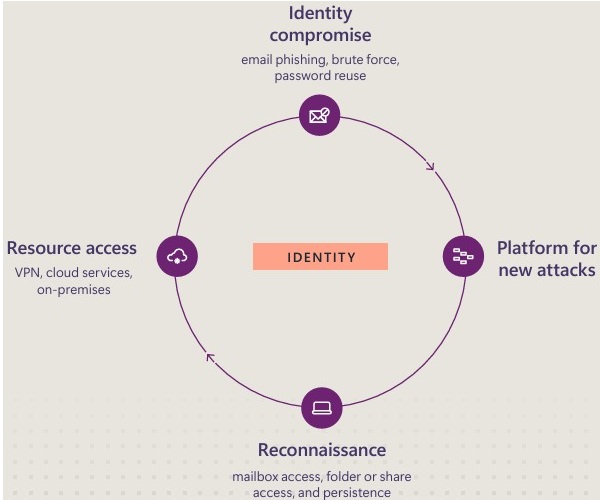

| M-3.1.2 Safety, Cyber security | |

| M-3.1.3 Knowing, managing, information customer related | |

| M-3.1.4 Knowing, managing, process results customer related | |

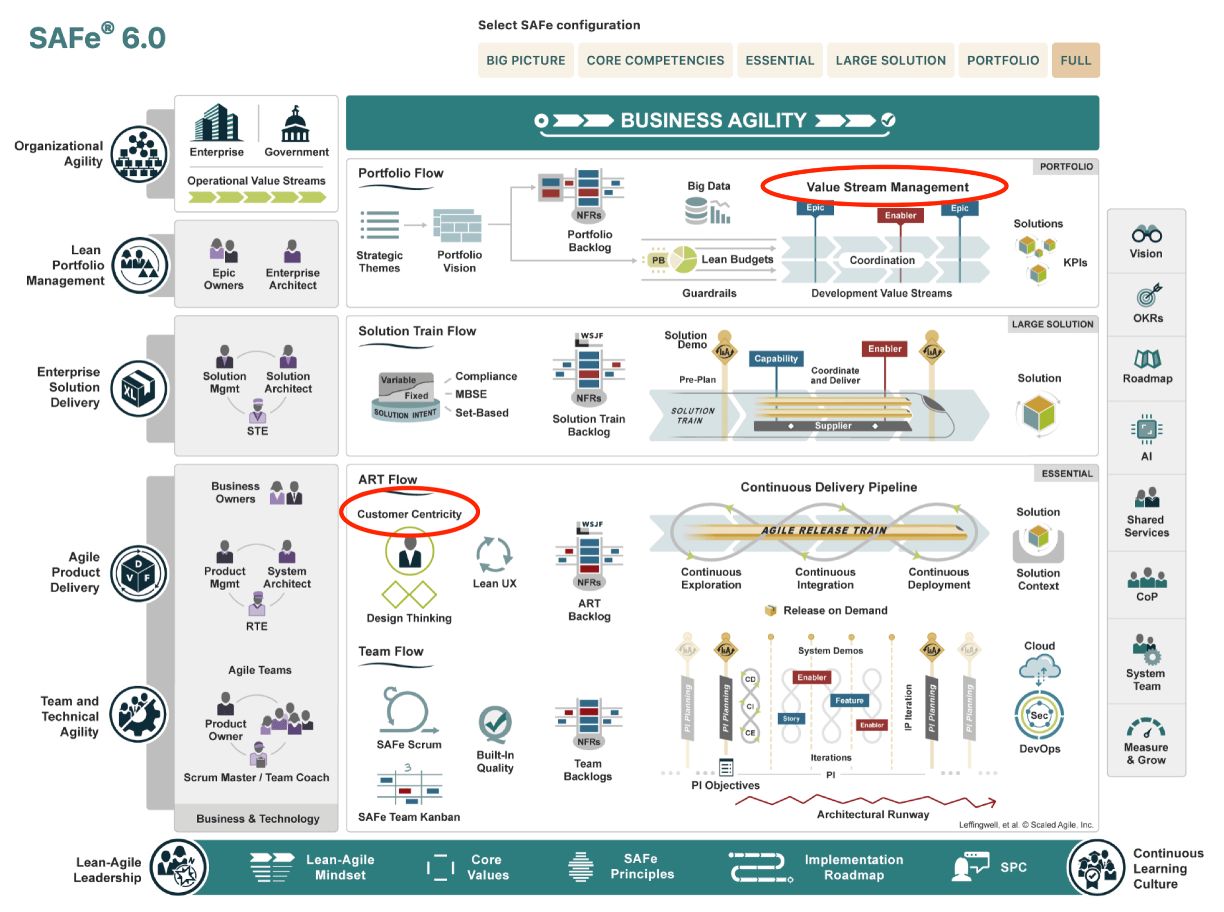

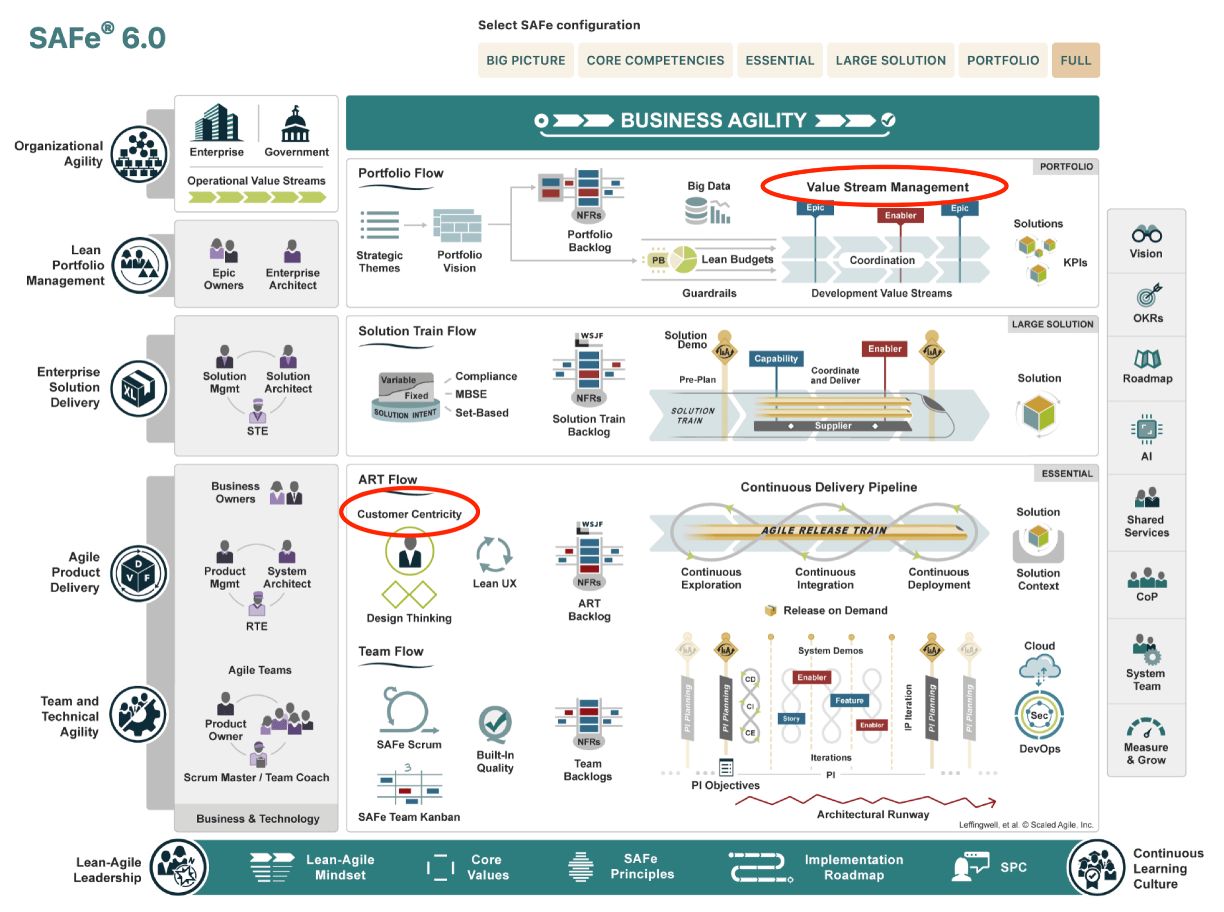

| M-3.2 Floor plans, optimizing value streams | C6isrsll_02 | I_Serve |

| M-3.2.1 Aligning the Service organisation to the information age | |

| M-3.2.2 Abstraction levels, closed loops in information context | |

| M-3.2.3 Understanding information, from data to insight | |

| M-3.2.4 Aligning service provision in the information age | |

| M-3.3 Why to steer in the information landscape | C6isrsll_03 | C_Edge |

| M-3.3.1 Customer centricity value streams | |

| M-3.3.2 Failing management signals | |

| M-3.3.3 learning - lean accounting | |

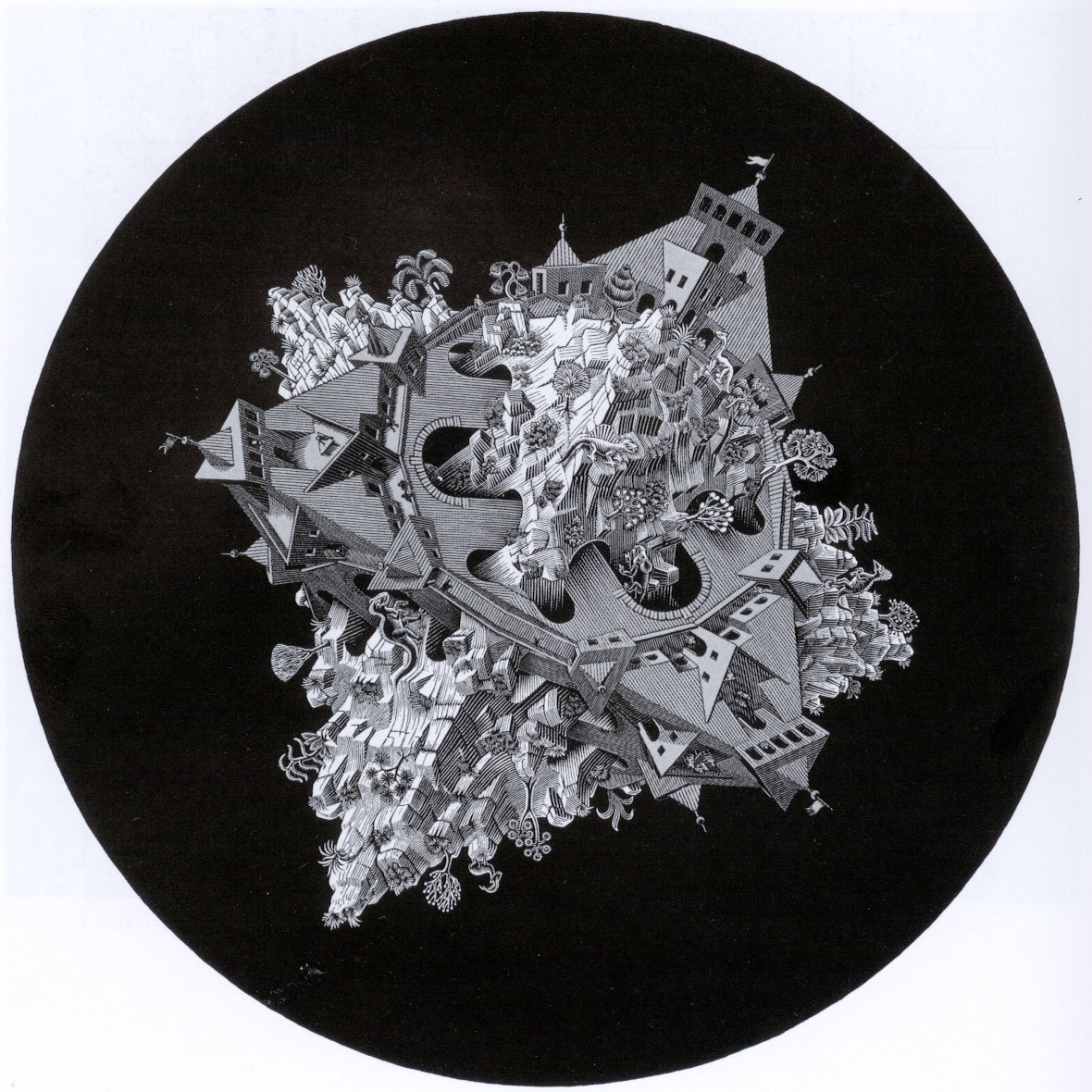

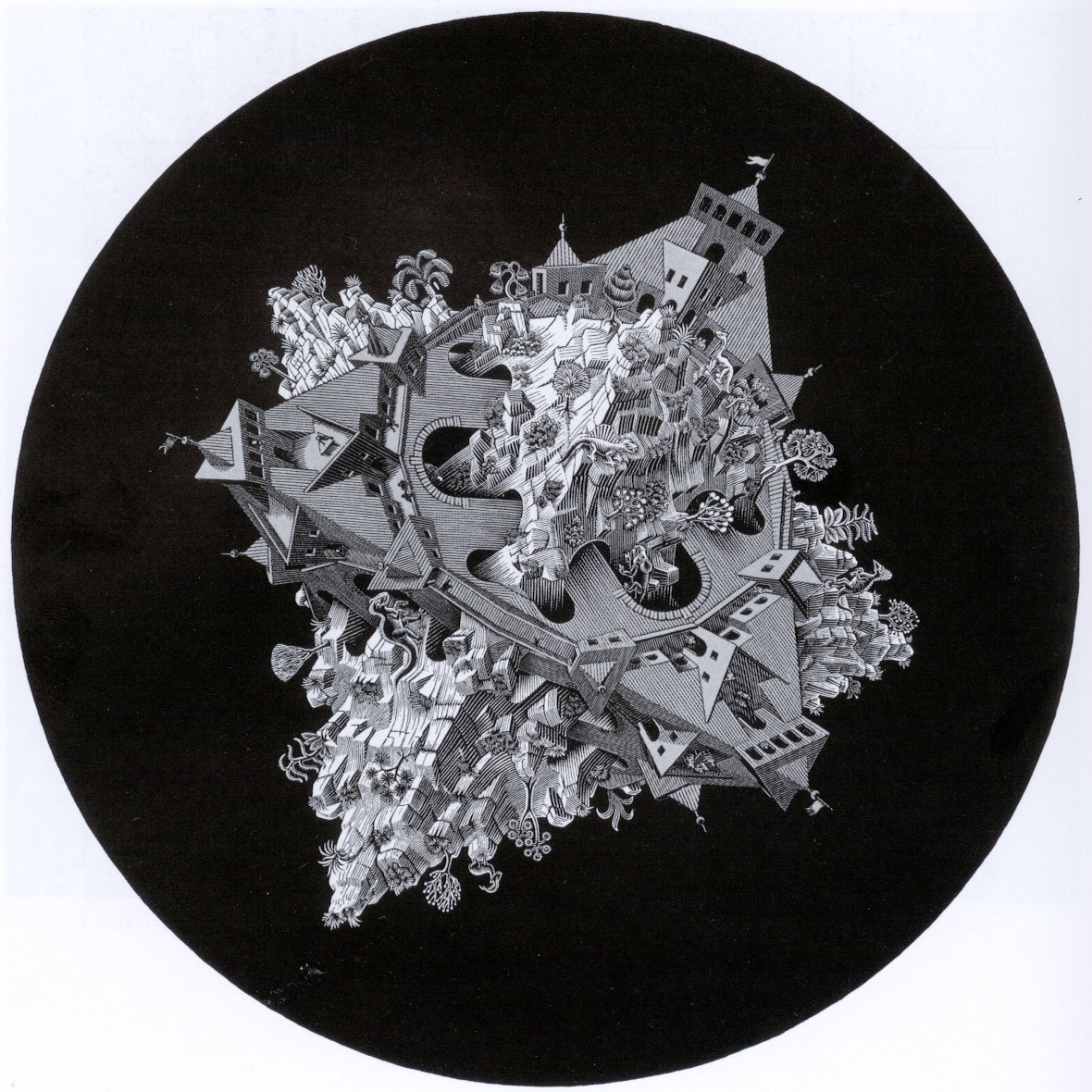

| M-3.3.4 Multiple dimensions, Antipodes asteroide world | |

| M-3.4 Visions & missions in the boardroom | C6isrsll_04 | C_Vision |

| M-3.4.1 Structuring, engineering the enterprise | |

| M-3.4.2 Enterprise structuring: cycles & multiple dimensions | |

| M-3.4.3 Optimising flows: removing constraints | |

| M-3.4.4 Optimising flows: budget, design, production | |

| M-3.5 Sound underpinned theory, improvements | C6isrsll_05 | ✅-dimensions |

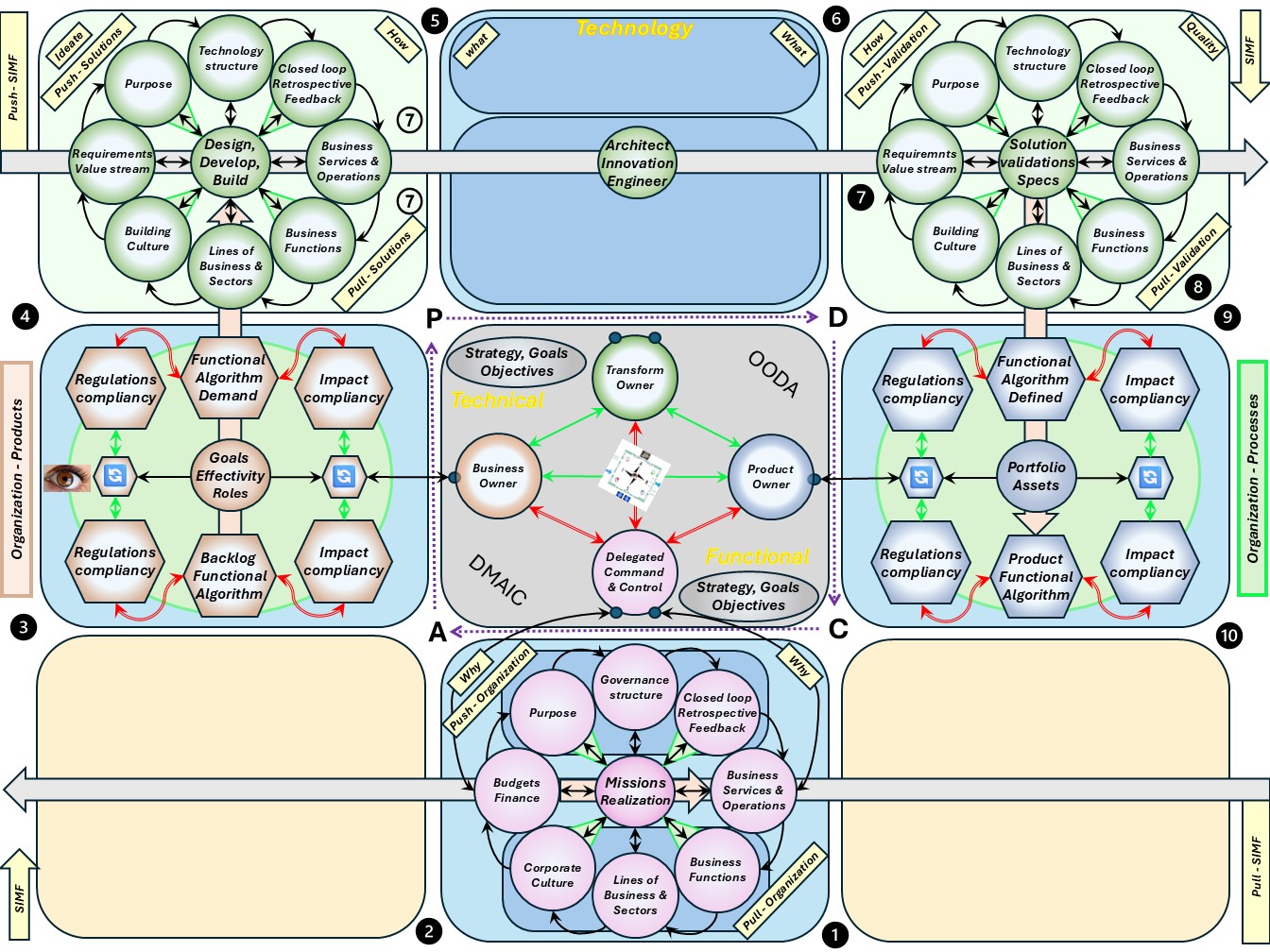

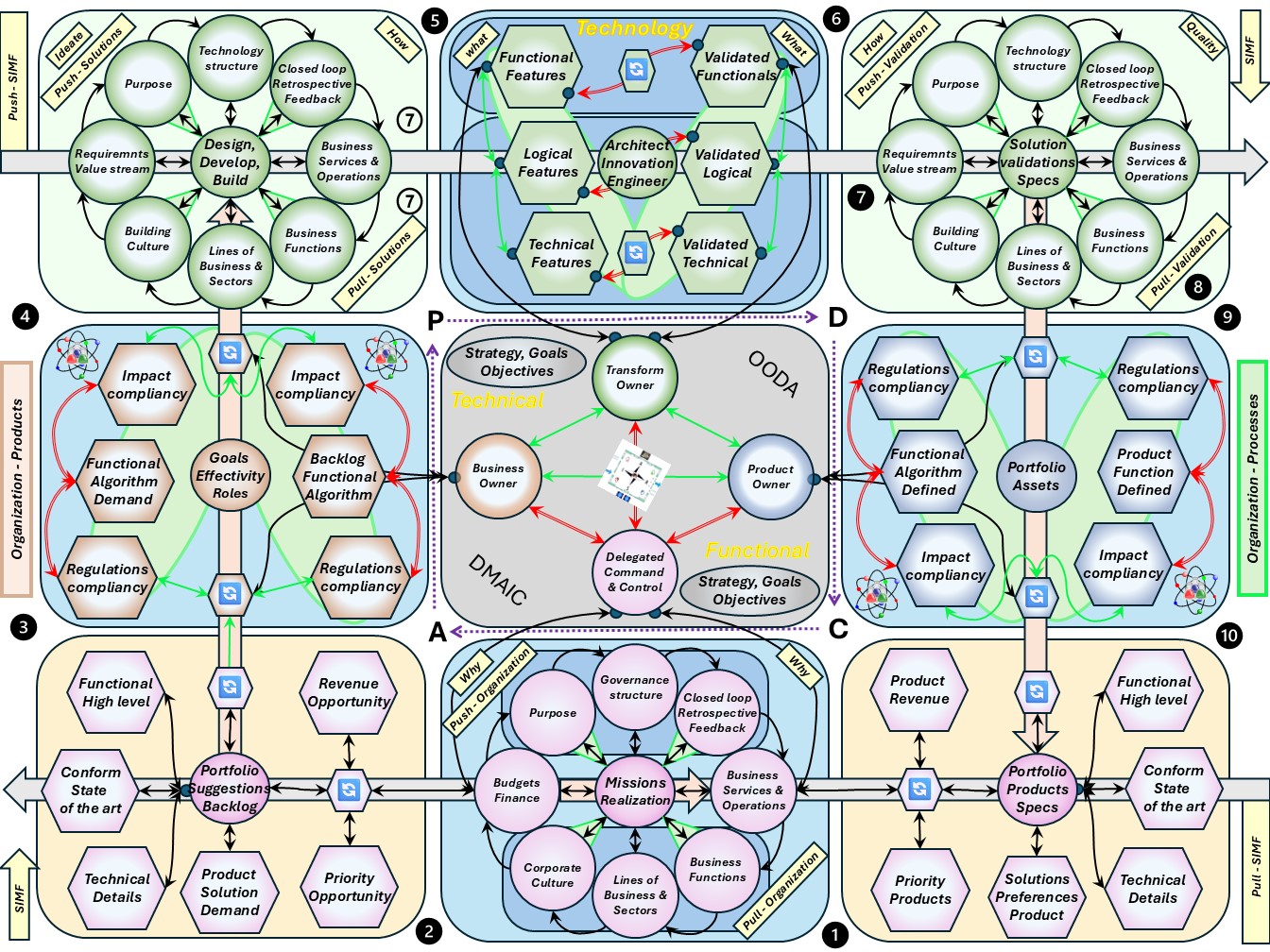

| M-3.5.1 A structured enterprise, organisation | |

| M-3.5.2 Change process in a structured enterprise, organisation | |

| M-3.5.3 Changing the structured enterprise, organisation | |

| M-3.5.4 Three dimensional anatomy maps, PDCA cycles | |

| M-3.6 Maturity 5: Strategy visions adding value | C6isrsll_06 | CMM5-SIM |

| M-3.6.1 The product, service positioned within a system | |

| M-3.6.2 Compliancy at an organisation, products, services | |

| M-3.6.3 Optimizing an organisation, products, services | |

| M-3.6.4 The purposeful organisation, products, services | |

| M-3.6.5 Following steps | |

⚖ M-1.1.3 Guide reading this page

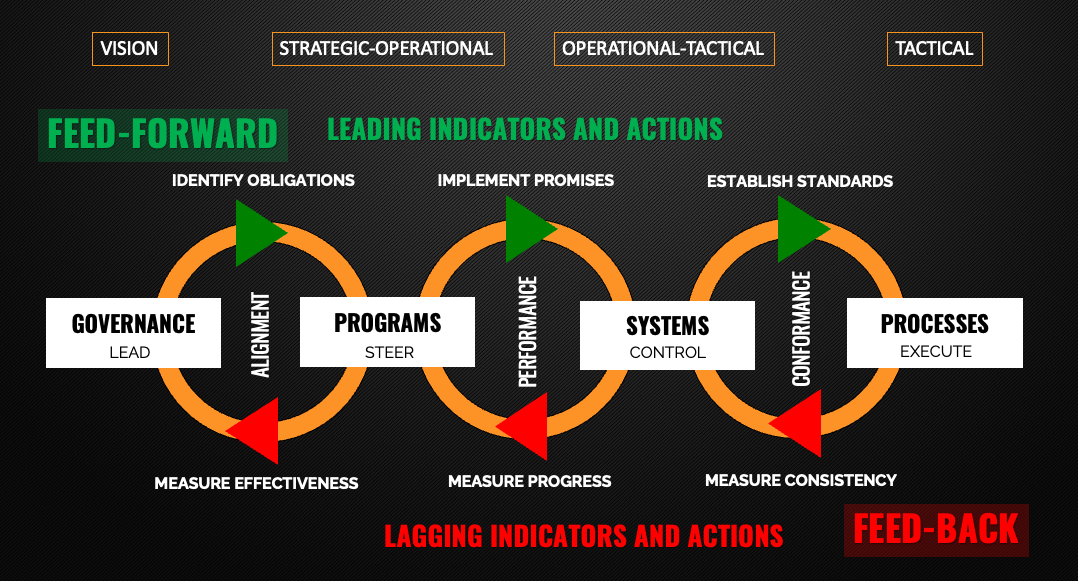

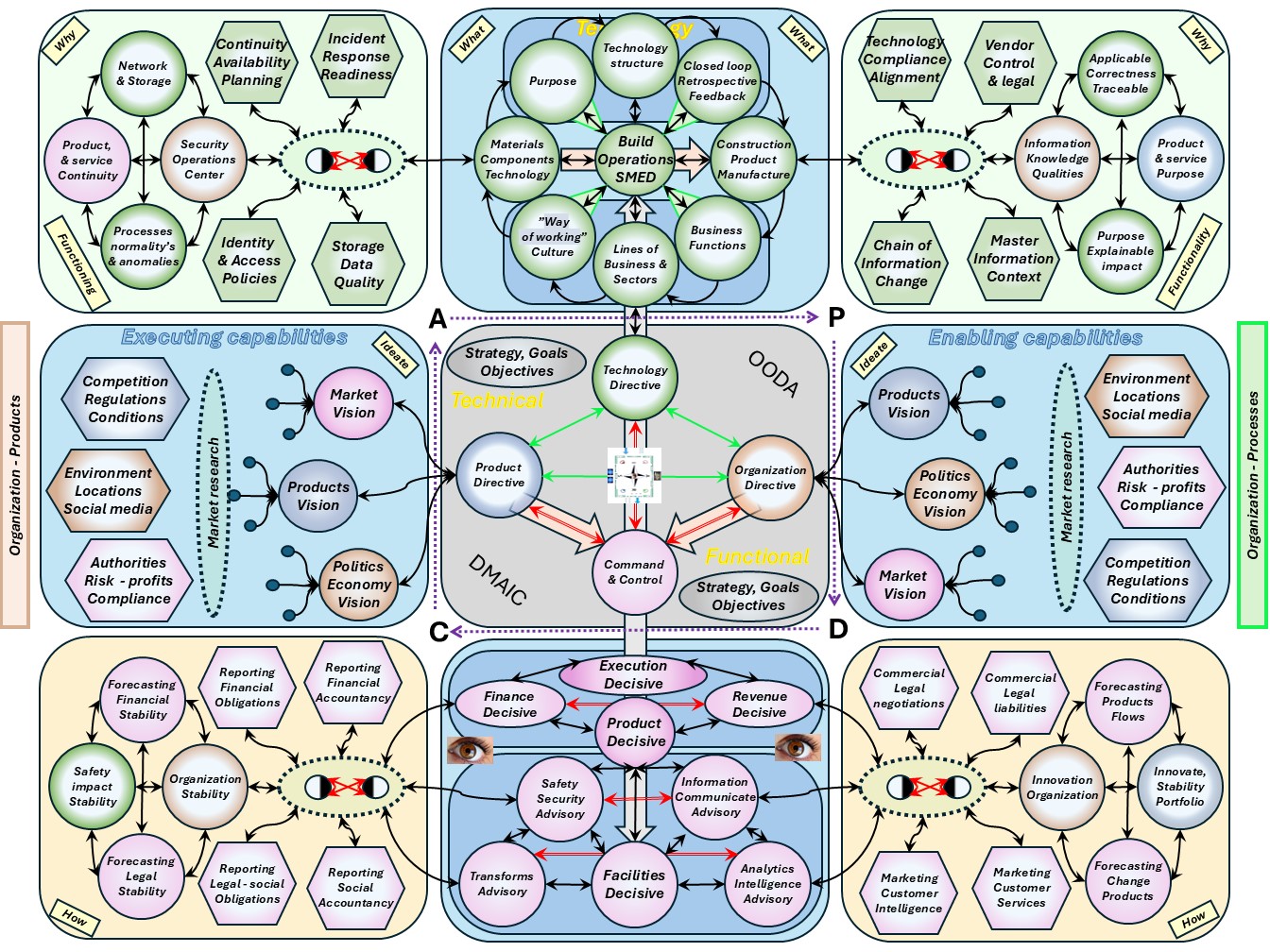

Command & Control Abstraction and models

This page is about

Command & Control

C6ISR command, control, communications, computers, cyber-defense and combat systems and intelligence, surveillance, and reconnaissance.

Just replace "combat systems" with "commercial systems" and we can proceed with a generically applicable abstraction for all systems.

I am not experienced in the command & control area itself, although I have experienced its effects.

This page combines my own observations with material collected from many others, in an attempt to connect what command & control is with how it is entangled with information processing.

There are an incredible number of good usable models out there, but we've lost our way by getting too caught up in the tech buzz.

What we're doing is exchanging pretty abstract ideas between people with language and pictures.

Command & Control: Technology vs Organisation

❶ Guidance is: "Command... Control... in the Information Age"

Power to the Edge (David S. Alberts, Richard E. Hayes 2003)

Focusing upon improving both the state of the art and the state of the practice of command and control, the CCRP helps DoD take full advantage of the opportunities afforded by emerging technologies.

The source is defence, but it states that the ideas are generically applicable.

This book explores a leap now in progress, one that will transform not only the U.S. military but all human interactions and collaborative endeavors.

Power to the edge is a result of technological advances that will, in the coming decade, eliminate the constraint of bandwidth, free us from the need to know a lot in order to share a lot, unfetter us from the requirement to be synchronous in time and space, and remove the last remaining technical barriers to information sharing and collaboration.

Our behaviors and the architectures and characteristics of our systems are driven by economics, in this case the economics of information.

The dawn of the Information Age was ushered in by Moore's Law.

As the cost of computing fell, we stopped focusing our attentions on conserving available computing resources and began to be inefficient consumers of computing resources.

❷ The document is not well known, but on reading it promotes lean agility in a way that is no longer locked in by a cultural example.

What to do about this?

- The source that referred me to this document got very upset when I interpreted it as a vision for promoting lean agility in a changing world.

The only explanation I can see is having been hurt by bad experiences with wrong management approaches.

It is an alarming signal that a lot of damage has been done in trying to achieve the change into the new information age.

- Just reading some vocabulary, avoiding confusion:

- Terms of reference (TOR) show how the object in question will be defined, developed, and verified.

They should also provide a documented basis for making future decisions and for confirming or developing a common understanding of the scope among stakeholders.

In order to meet these criteria, success factors/risks and constraints are fundamental. They define the:

- vision, objectives, scope and deliverables (i.e. what has to be achieved)

- stakeholders, roles and responsibilities (i.e. who will take part in it)

- resource, financial and quality plans (i.e. how it will be achieved)

- work breakdown structure and schedule (i.e. when it will be achieved)

- stakeholders

Project stakeholder refers to "an individual, group, or organization, who may affect, be affected by, or perceive itself to be affected by a decision, activity, or outcome of a project, program, or portfolio."

ISO 21500 uses a similar definition.

- Stovepiping is the presentation of information without proper context. ...

Another meaning of stovepiping is "piping" of raw intelligence data directly to decision makers, bypassing established procedures for review by professional intelligence analysts for validity (a process known as vetting), an important concern since the information may have been presented by a dishonest source with ulterior motives, or may be invalid for a myriad of other reasons.

Intelligence organizations may deliberately adopt a stovepipe pattern so that a breach or compromise in one area cannot easily spread to others.

- A stovepipe organization (alt organisations) has a structure which largely or entirely restricts the flow of information within the organization to up-down through lines of control, inhibiting or preventing cross-organisational communication.

Many traditional, large (especially governmental or transnational) organizations have (or risk having) a stovepipe pattern. In the IT-jargon: siloed organisations.

😉 There is a connection for vision, strategy.

Optimizing governance in the organisation for the service

❸ Where to optimize is a different question from what to optimize; the difference is important. (Kevin Kohls 2024)

Understanding the Theory of Constraints: Much More Than Production

The goal of TOC is always system optimization, not local optimization.

System metrics, such as overall throughput, cycle time, and variability, must be optimized for the entire production system.

In contrast, trying to improve efficiency at each individual station is called local optimization, which often leads to suboptimal results for the broader system.
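The difference between system and local optimization can be shown with a minimal sketch. It assumes a simple serial line whose throughput is limited by its slowest station; the station names and rates are hypothetical illustration values, not taken from the source.

```python
def system_throughput(station_rates):
    """Throughput of a serial line equals the rate of its slowest station."""
    return min(station_rates.values())


def bottleneck(station_rates):
    """Name of the station that constrains the whole line."""
    return min(station_rates, key=station_rates.get)


# Hypothetical rates in units per hour.
line = {"cut": 12, "weld": 7, "paint": 10}
baseline = system_throughput(line)

# Local optimization: speed up a station that is not the constraint.
local = dict(line, cut=20)

# System optimization: improve the constraint itself.
systemic = dict(line, weld=9)

print(bottleneck(line), baseline)
print(system_throughput(local), system_throughput(systemic))
```

Under these assumptions, speeding up "cut" leaves the system throughput unchanged, while improving "weld" raises it. This is why the question of where to optimize comes first.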

😉 There is a connection for optimizing systems, lean.

Optimizing governance and accountability

❹ I assumed this was a purely technical state-of-the-art report. Then I saw: (pages 63, 66)

Microsoft Digital Defense Report 2024 "our customer base and the role" expectations from customers.

Organizations should focus their security culture and governance efforts on accountability, teamwork, and shared responsibility.

Accountability always starts at the top, with organizational leaders who not only understand their responsibility for security outcomes but ensure that security risk management is embedded across their business in an organization-wide and collaborative way.

Leaders must establish a system of accountability, prioritization, and aligned incentives that is executed and monitored across the organization.

They must delegate risk accountability, mitigation implementation responsibility, and associated budgets/costs to leaders, managers, and individual contributors, as accountability alone cannot create a healthy culture.

we have found that an organization's resilience maturity can be determined based on four pillars: Operational, Tactical, Readiness, and Strategic. Maturity in each of these pillars is categorized as either Basic, Moderate, or Advanced.

😉 There is a connection for systems entangled with safety.

⚒ M-1.1.4 Progress

done and currently working on:

- 2020 week 44

- Was set up for the sentence: data is the new oil.

- 2024 week 40

- Web content redesign with Jabes being pivotal started for this page.

- Adding the C6isr replacing data for stovepipes opened the next steps.

- The goal for command & control has become more clear by:

- Knowing what the conceptual gaps in command & controls are

- Insight into needed improvements according to Gemba, the shop floor

- The old content moving to the technical system serve area.

- 2024 week 43

- Triggered by SIMF, the first six chapters are getting a footing in strategy in the information age.

Much about strategy is collected from advisory sources.

- 2024 week 45

- Working around the whole of the analysis, the last six chapters are taking shape in the direction of interrelationships and goals.

- 2024 week 46

- Working around the whole of the analysis, the middle six chapters are being completed in line with the others.

- 2024 week 47

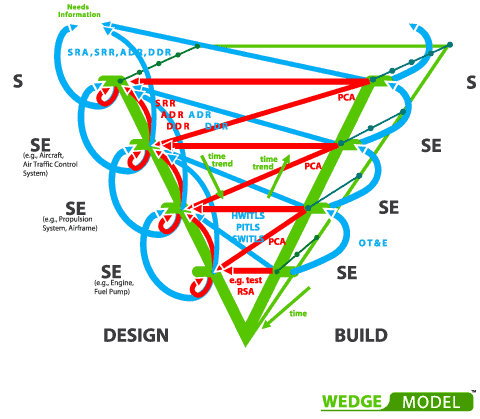

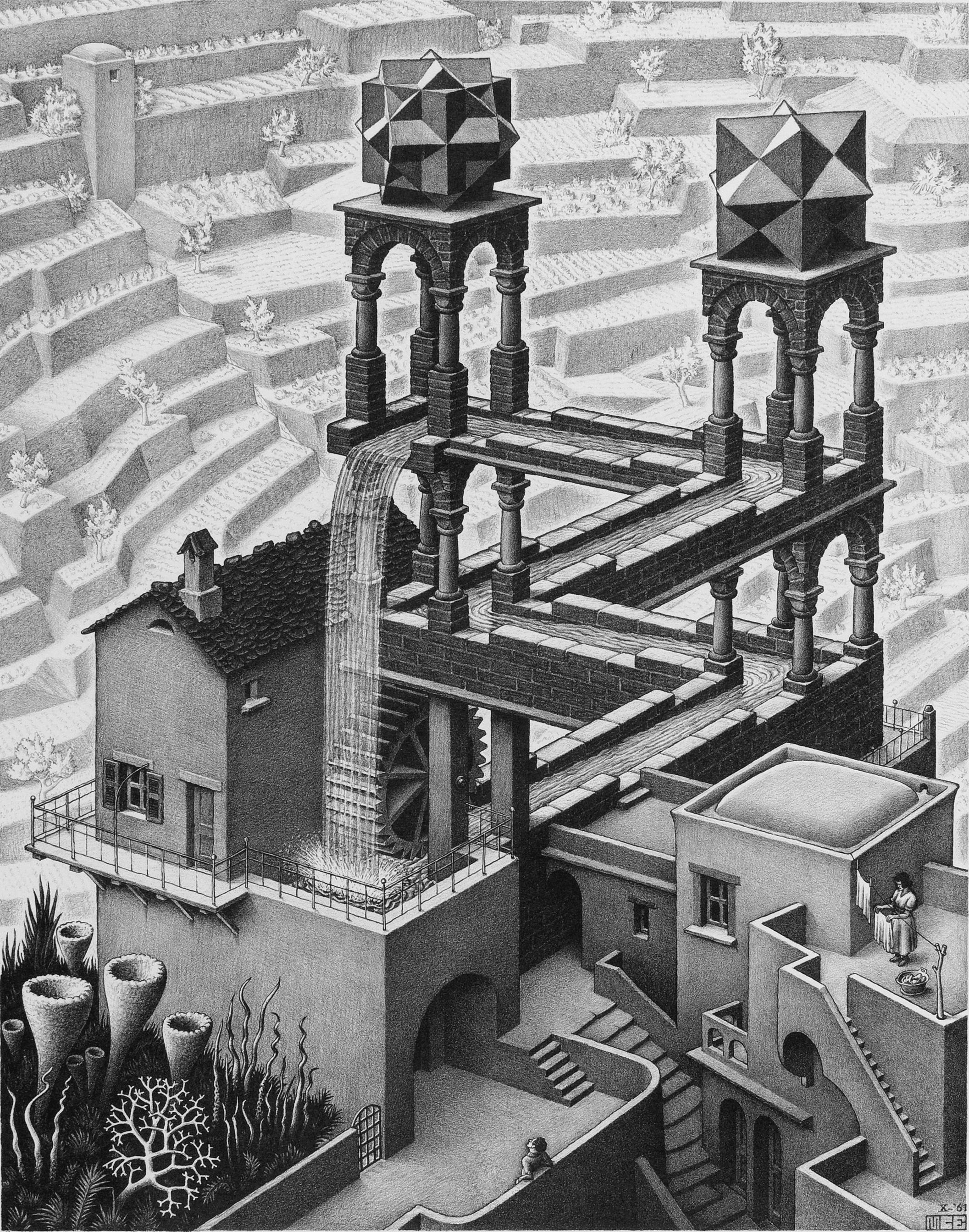

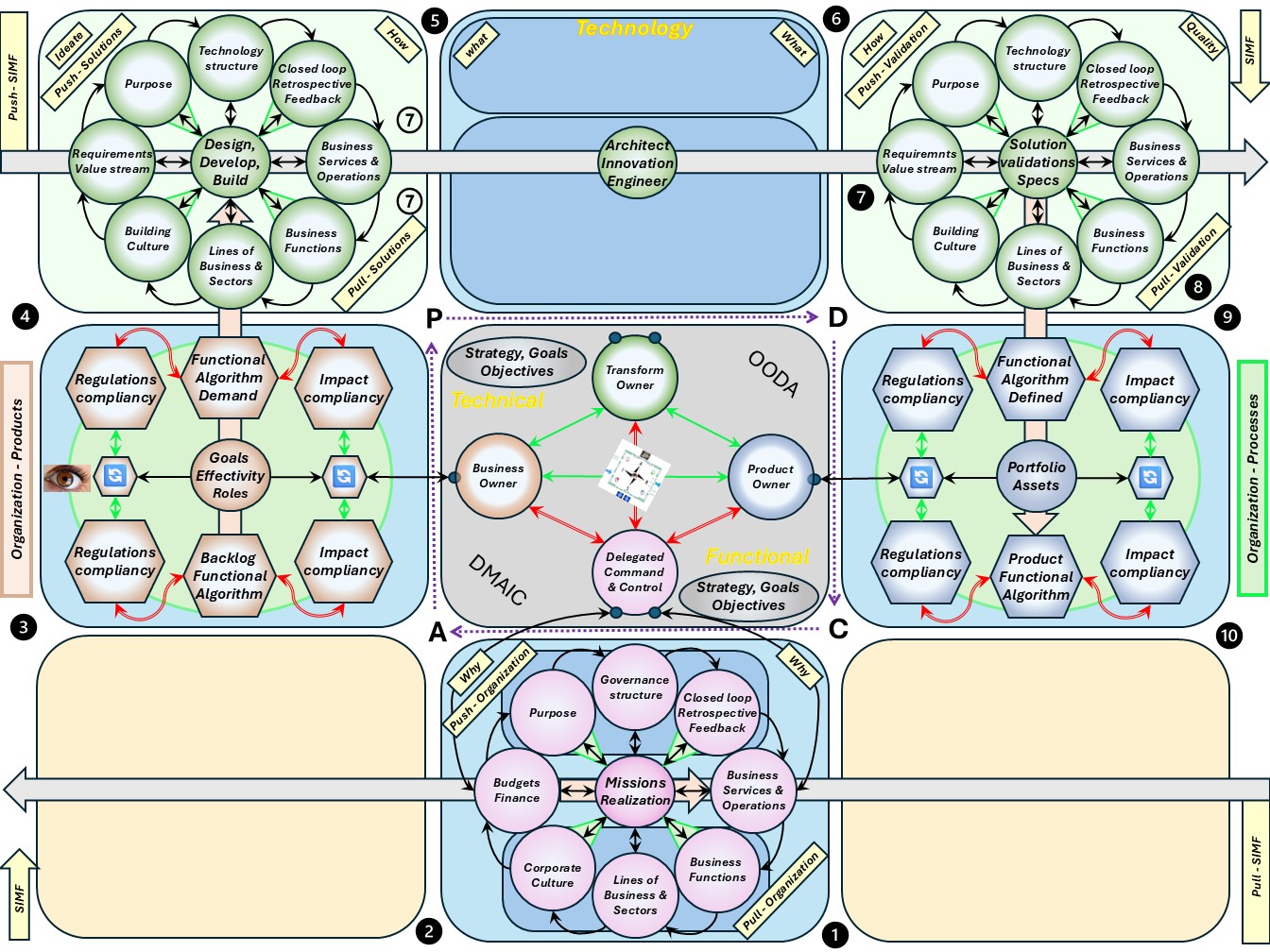

The cycle at technology, plane 2/3, was drawn wrongly.

There is a V-shape at engineering, building, validating.

The same approach is at the other parts in the cycle.

Only the portfolio suggestions, backlog and the portfolio product specifications are stable in some moment in time.

Changed V-shapes, triple-V, for:

- Suggestion box, backlog into requirements: Wedge model, double-u.

- Validations of the service, product into specifications: triple-u.

Planning to do & changes:

- The structure at technology, plane 3/4 is incomplete.

There should be no direct external topics, only roles and activities (2024 wk47).

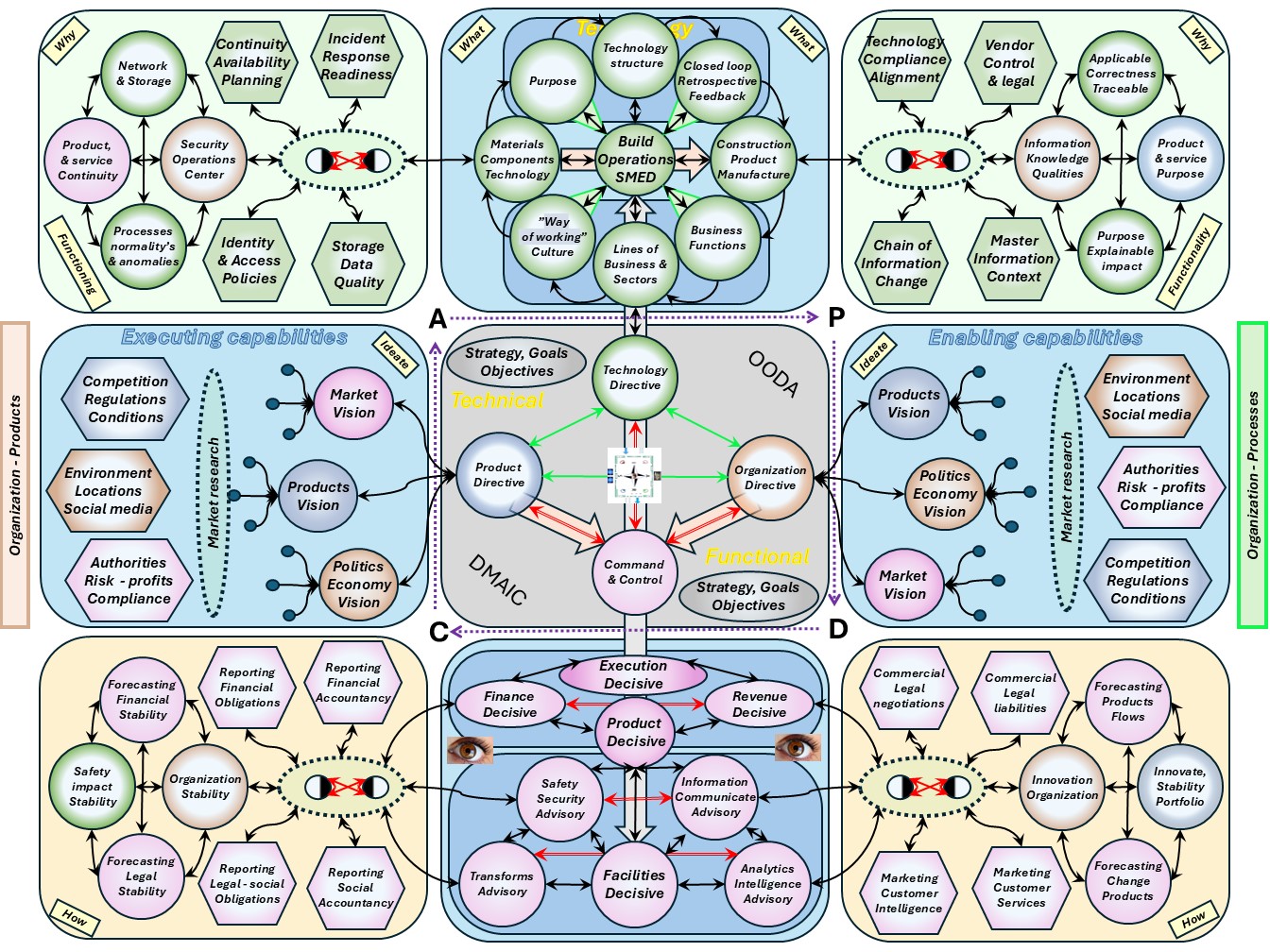

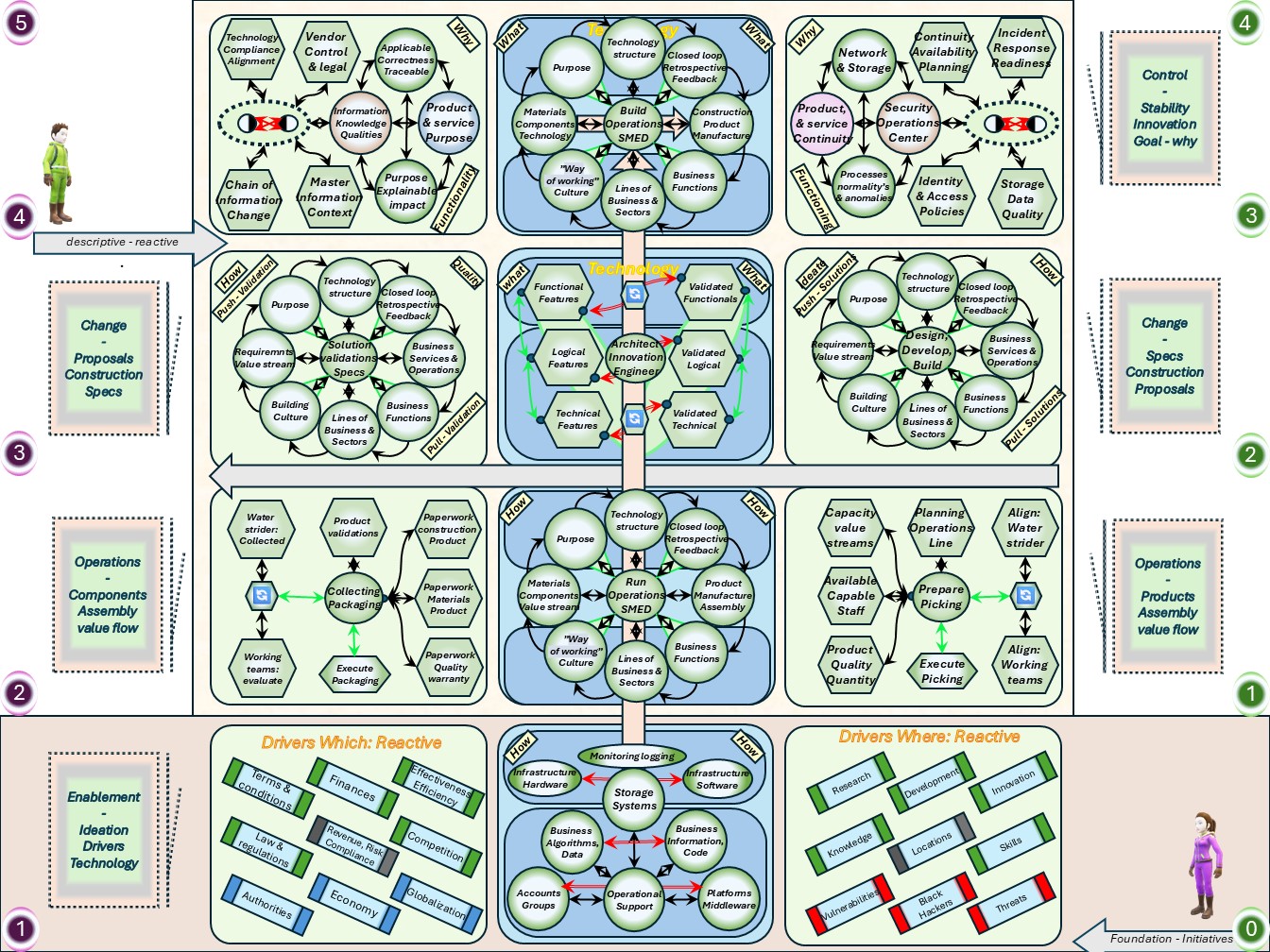

Technology:

- Security operations centre (SOC) with several focus areas.

- Identity & Access (IAM) - data quality (DQ).

- Incident response (IRT) - Continuity, availability (DR).

Functionality:

- Information quality (IQ) with several focus areas.

- Information registration, history (HIS) - Information context, master data (DA-MD)

- Vendor management, procurement - Technical compliancy alignment (TAC).

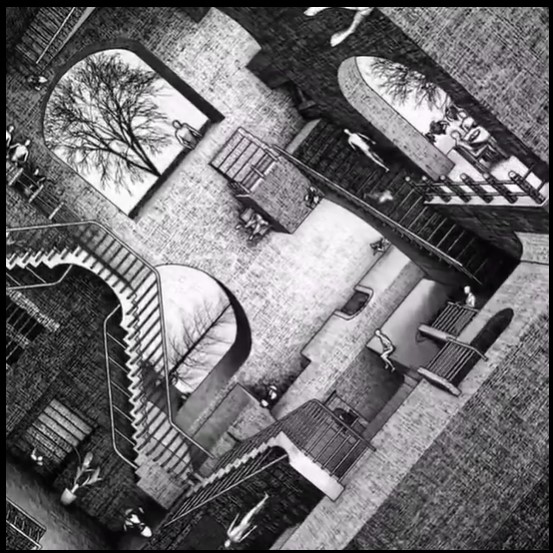

M-1.2 Floor plans, ordered dimensions

Building any non-trivial construction goes through several stages.

These are:

- high level design & planning

- detailed design & realisation

- evaluation & corrections

Non-trivial means it will be repeated to reach improved positions.

Before any design, tools are needed for measuring what is going on.

Without knowing the situation or the direction there is no hope of achieving a destination by improvements.

⚖ M-1.2.1 Multidimensional contexts

Control & command lost in space

(Power to the edge)

The Industrial Age applied a “divide and conquer” mentality to all problems.

Academic disciplines, businesses, associations, and military organizations defined their roles as precisely as possible and divided their overall activities into coherent subsets that could be mastered with the existing knowledge, technologies, and personnel. ...

If a sound set of decompositions is made, then these organizational subsets of the organization (again, a business, bureaucracy, or military organization) can develop professional specialties that help the overall organization or enterprise to perform its mission and achieve its objectives. ...

The Industrial Age raised specialization to heights not previously contemplated.

The whole idea of an assembly line in which a set of carefully sequenced actions generates enormous efficiency was unthinkable before this era. ...

The organizational consequence of Industrial Age specialization is hierarchy.

The efforts of individuals and highly specialized entities must be focused and controlled so that they act in concert to achieve the goals of the larger organizations or enterprises that they support.

This implies the existence of a middle management layer of leaders whose tasks include:

- Understanding the overall goals and policies of the enterprise;

- Transmitting those goals to subordinates

- Developing plans to ensure coordinated actions consistent with the organization's goals and values;

- Monitoring the performance of the subordinates, providing corrective guidance when necessary; and

- Providing feedback about performance and changes in the operating environment to the leadership, and making recommendations about changes in goals, policies, and plans.

The size and the number of levels that separate the leader(s) of an enterprise and the specialists that are needed to accomplish the tasks at hand are a function of the overall size of the enterprise and the effective span of control, that is, how many individuals and/or organizational entities that can be managed by an individual or entity.

(page 41)
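The relation between enterprise size, span of control and the number of levels can be sketched as follows. This is a simplification, assuming the same span of control at every layer, which the source does not claim; the function name is hypothetical.

```python
def management_levels(specialists, span):
    """Number of layers needed above the specialists to end with one leader.

    Assumes (hypothetically) a uniform span of control at every layer.
    """
    if specialists < 1 or span < 2:
        raise ValueError("need at least one specialist and a span of 2 or more")
    levels = 0
    heads = specialists
    while heads > 1:
        heads = -(-heads // span)  # ceiling division: managers needed for this layer
        levels += 1
    return levels


print(management_levels(1000, 10))
print(management_levels(1000, 5))
```

With these assumptions, halving the span of control for 1000 specialists raises the number of levels from three to five, which illustrates how quickly hierarchy grows.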

❶ With that approach, situational awareness in the workforce was not required.

Perhaps the most famous quotation about planning from that era (all the more relevant because it was uttered by one of the great planners in history) is, “No plan survives first contact with the enemy.”

(page 47)

❷ Being able to act on expected change is required in the information age.

Industrial Age organizations are not optimized for interoperability or agility.

Thus, solutions based upon Industrial Age assumptions and practices will break down and fail in the Information Age.

This will happen no matter how well intentioned, hardworking, or dedicated the leadership and the force are.

(page 56)

❸ Being able to act on expected change is required in the information age.

The result is that, as some have put it, “everyone needs to talk to everyone.” We would put it a slightly different way.

Since one cannot know who will need to work with our systems and processes, they should not be designed to make it difficult to do so.

On the contrary, they must be built to support a rich array of connectivity to be agile.

The same is true with our processes. They need to be adaptable in terms of who participates as well as who plays what roles.

(page 59)

😉 The change to unlearn and learn: everybody needs to understand the shared goal and be able to communicate understandably with their counterparts.

The Shop floor, mapping activities flows to maps

Cartography: the art and science of graphically representing a geographical area, usually on a flat surface such as a map or chart.

It may involve the superimposition of political, cultural, or other nongeographical divisions onto the representation of a geographical area. ...

The procedures for translating photographic data into maps are governed by the principles of photogrammetry and yield a degree of accuracy previously unattainable.

The remarkable improvements in satellite photography since the late 20th century and the general availability on the Internet of satellite images have made possible the creation of Google Earth and other databases that are widely available online.

😉 Similarity to unlearn and learn: becoming aware of the location, the situation.

Anatomy, Data literacy

Anatomy: gross anatomy involves the study of major body structures by dissection and observation and in its narrowest sense is concerned only with the human body.

By the end of the 19th century the confusion caused by the enormous number of names had become intolerable.

Medical dictionaries sometimes listed as many as 20 synonyms for one name, and more than 50,000 names were in use throughout Europe. ...

In 1887 the German Anatomical Society undertook the task of standardizing the nomenclature, and, with the help of other national anatomical societies, a complete list of anatomical terms and names was approved in 1895 that reduced the 50,000 names to 5,528. ...

The Terminologia Anatomica, produced by the International Federation of Associations of Anatomists and the Federative Committee on Anatomical Terminology (later known as the Federative International Programme on Anatomical Terminologies), was made available online in 2011.

😉 Similarity to unlearn and learn: an agreement in shared vocabulary in communications.

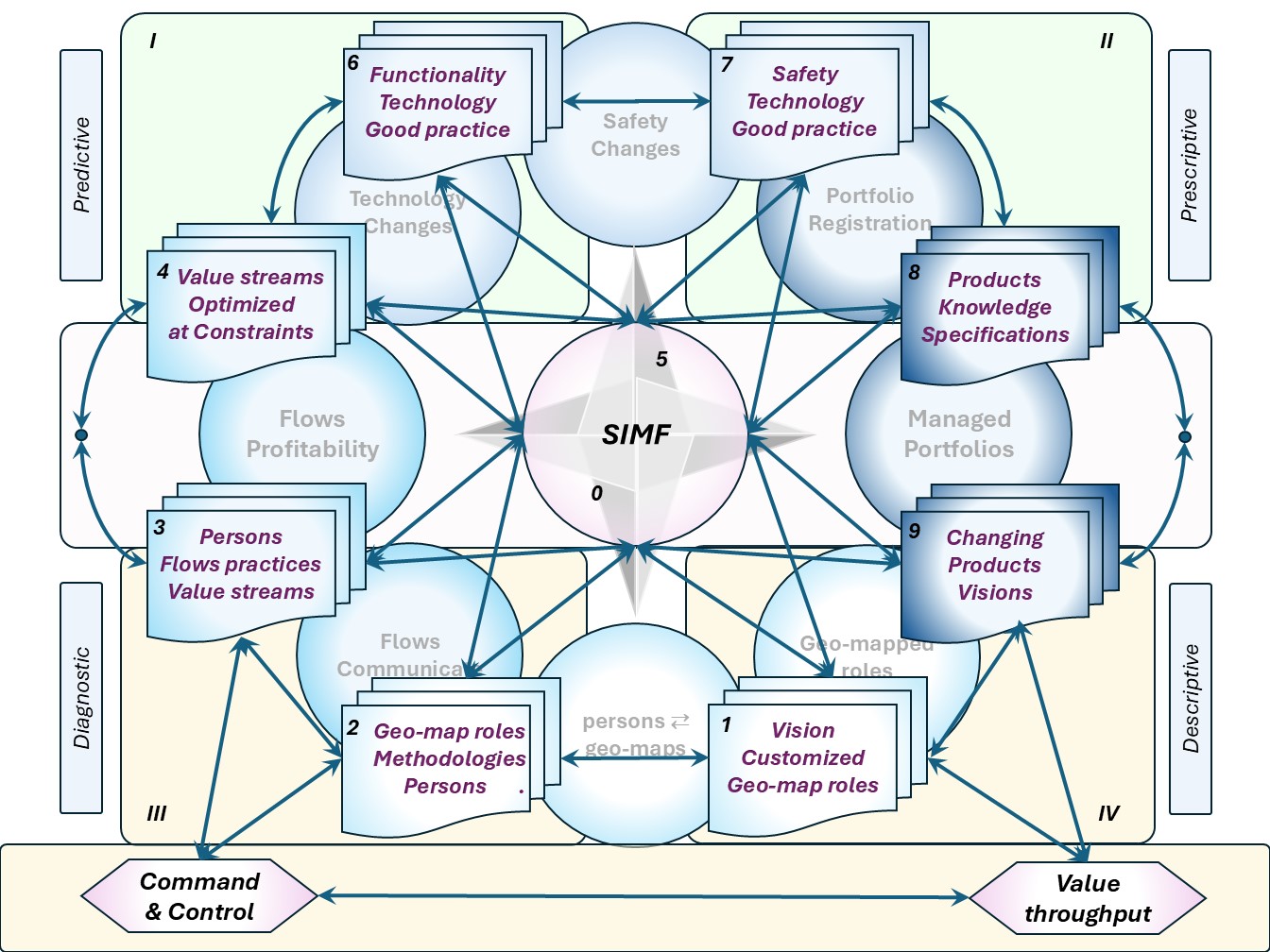

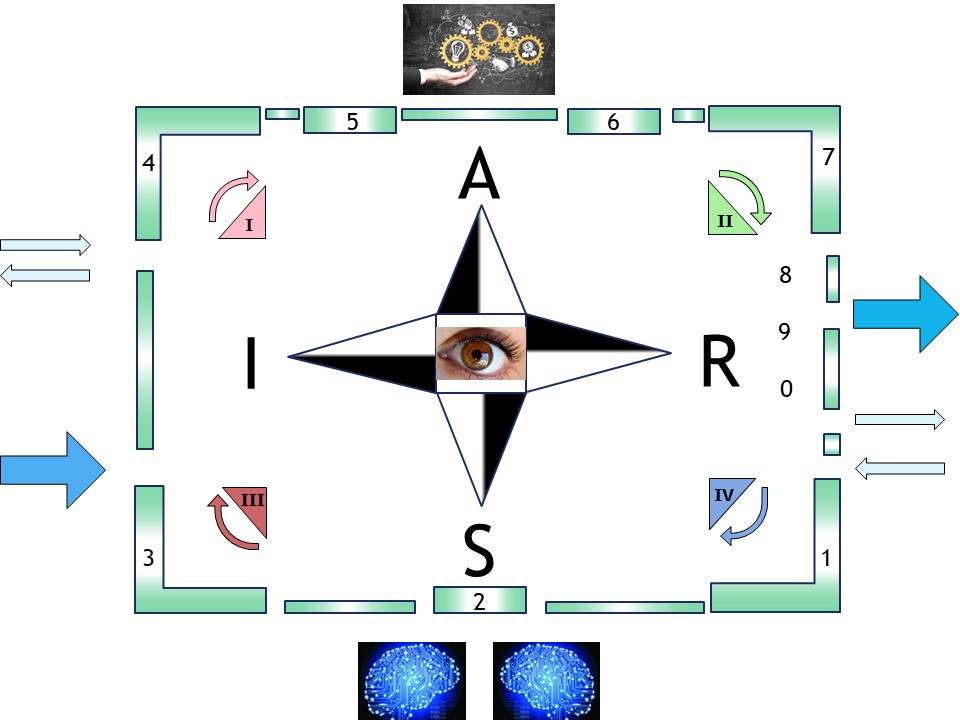

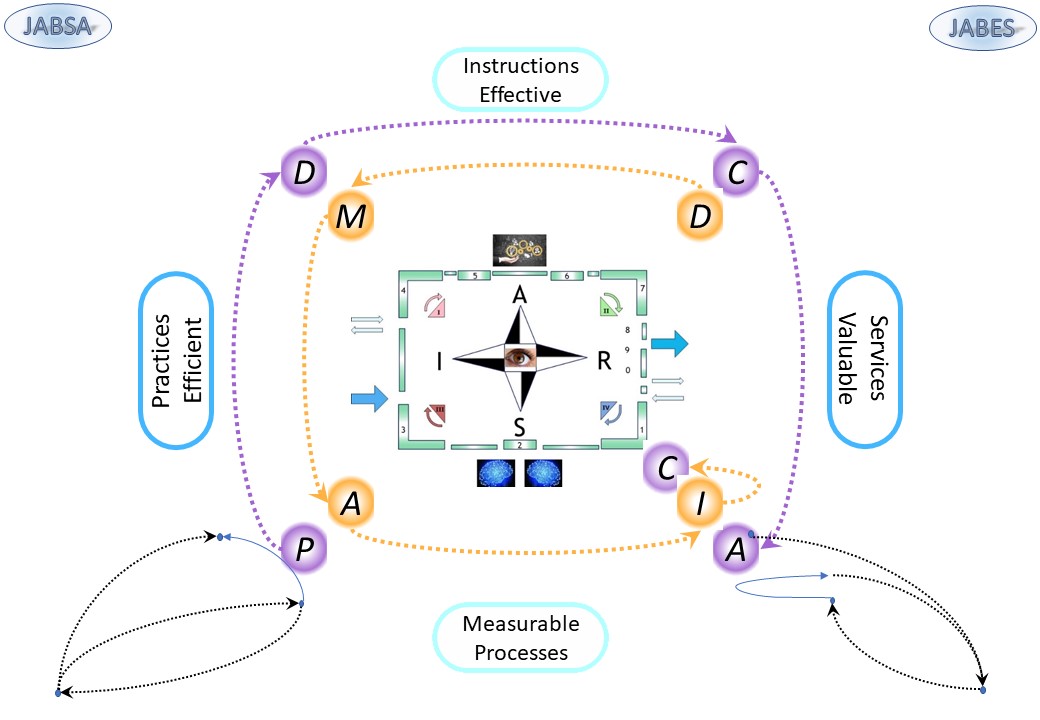

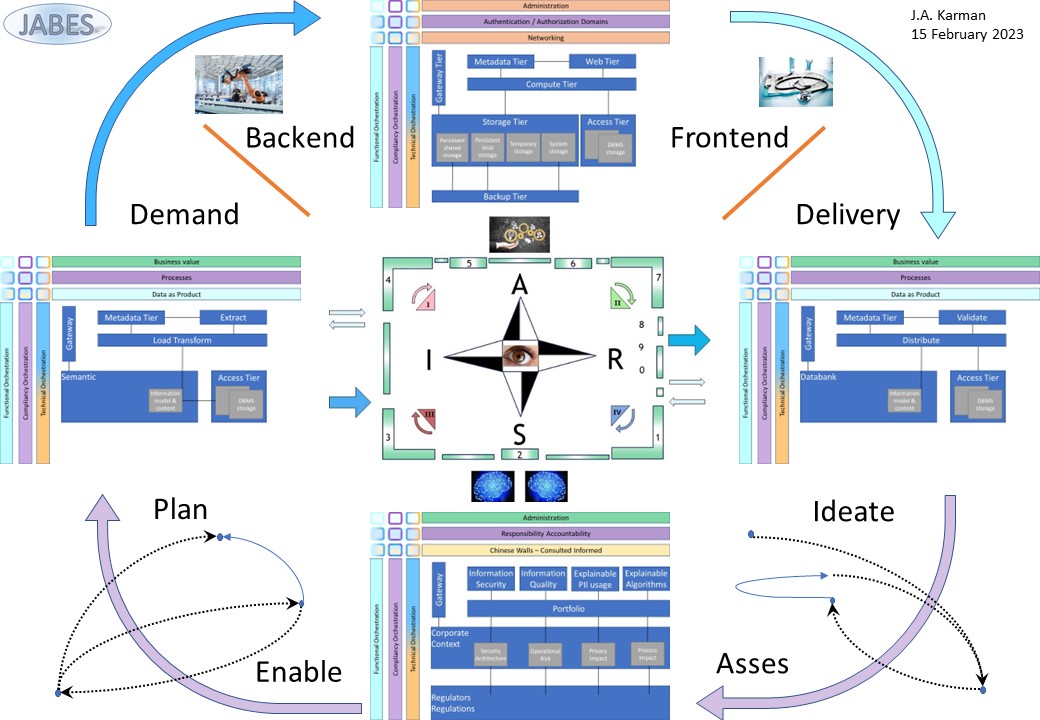

⚖ M-1.2.2 The Siar model

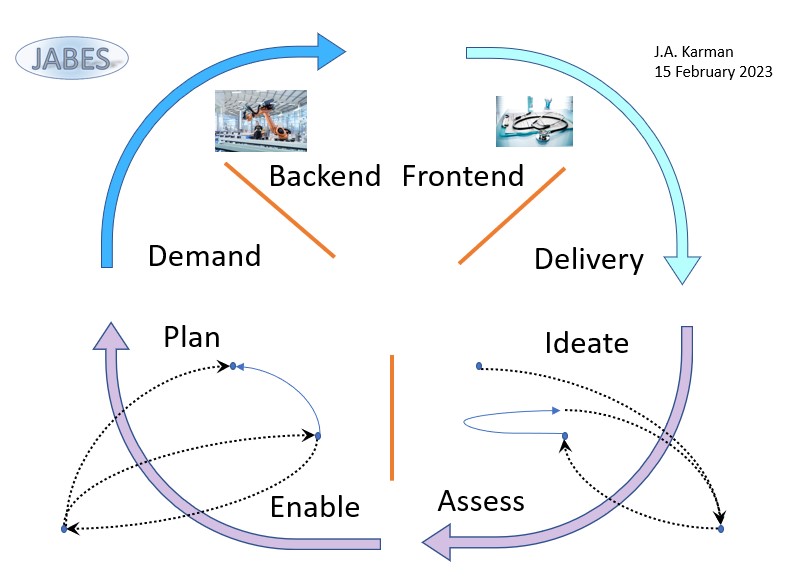

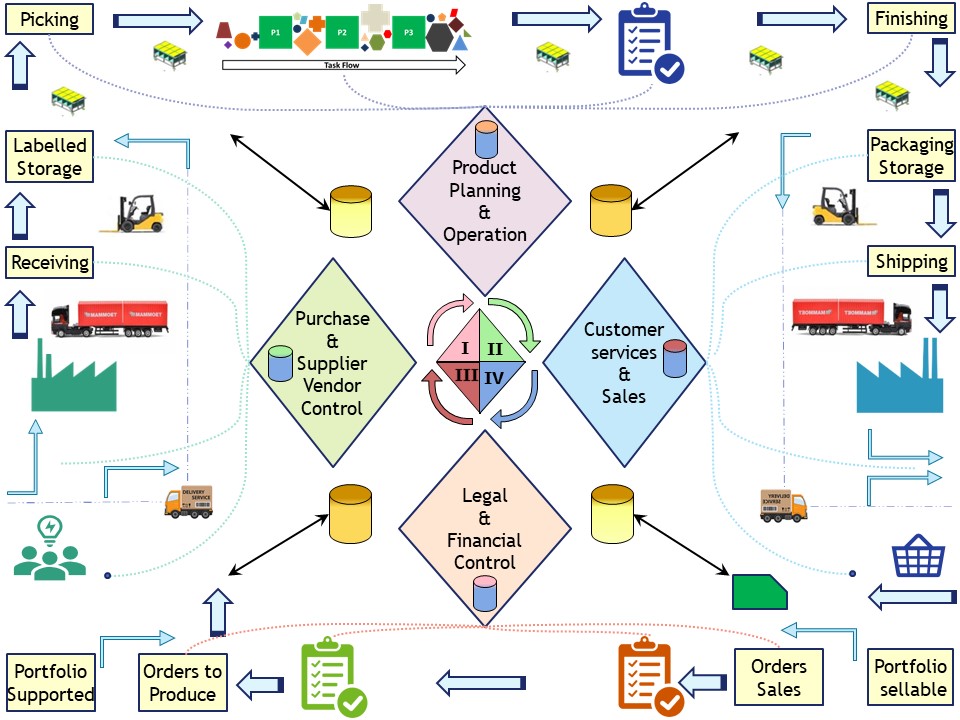

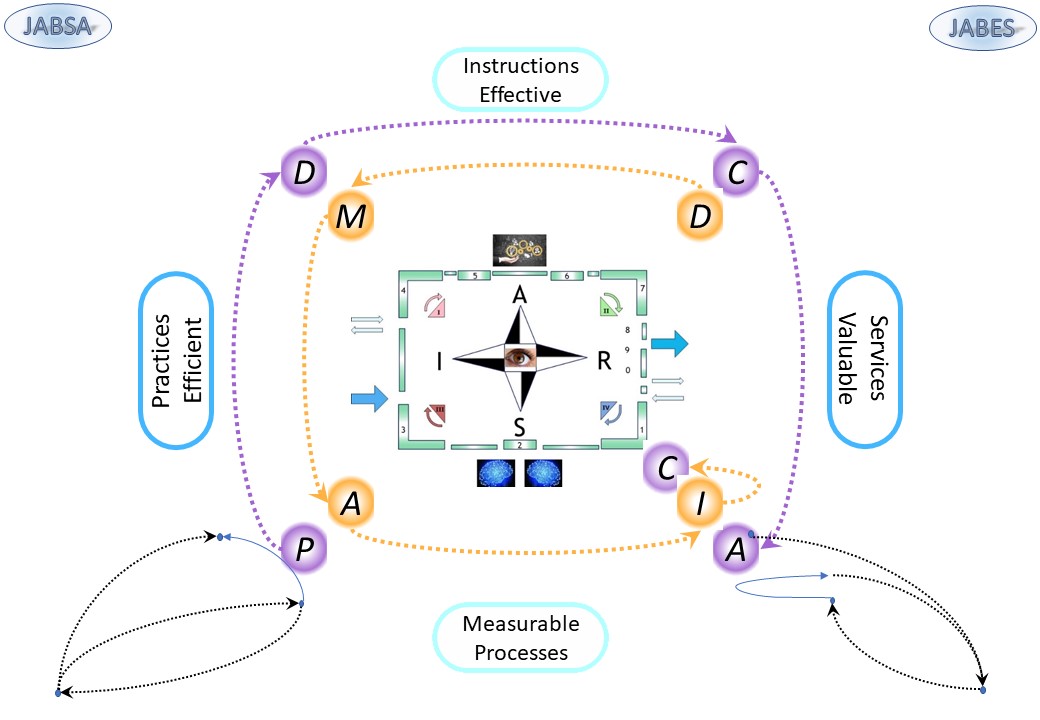

The process life cycle, static & dynamic

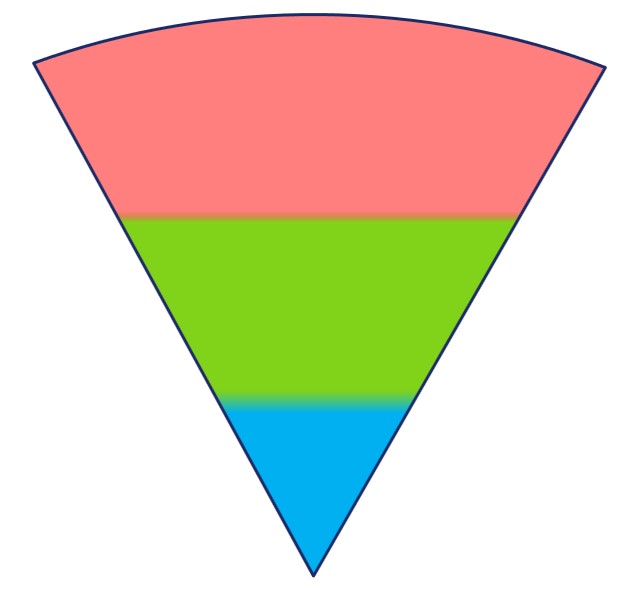

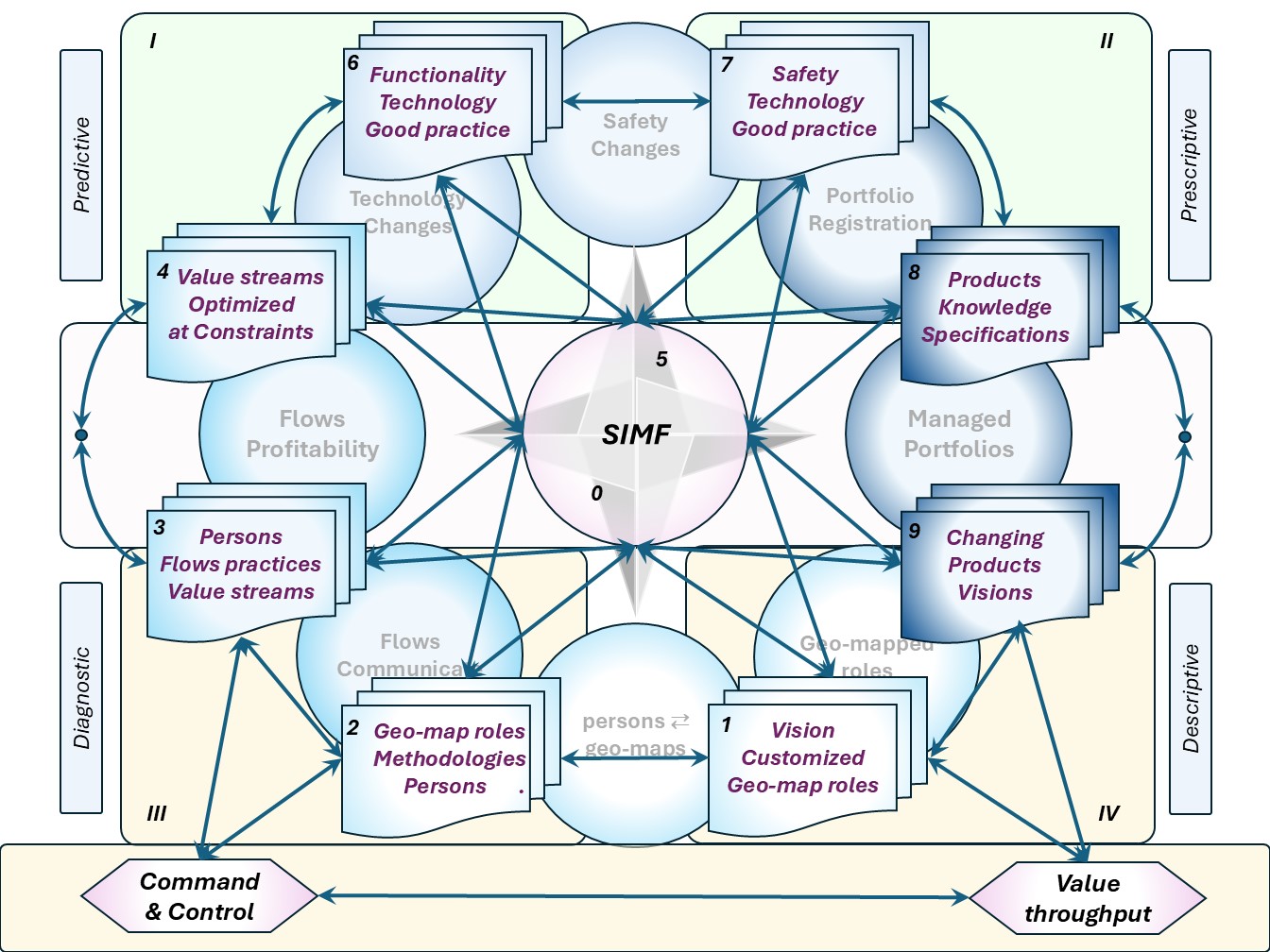

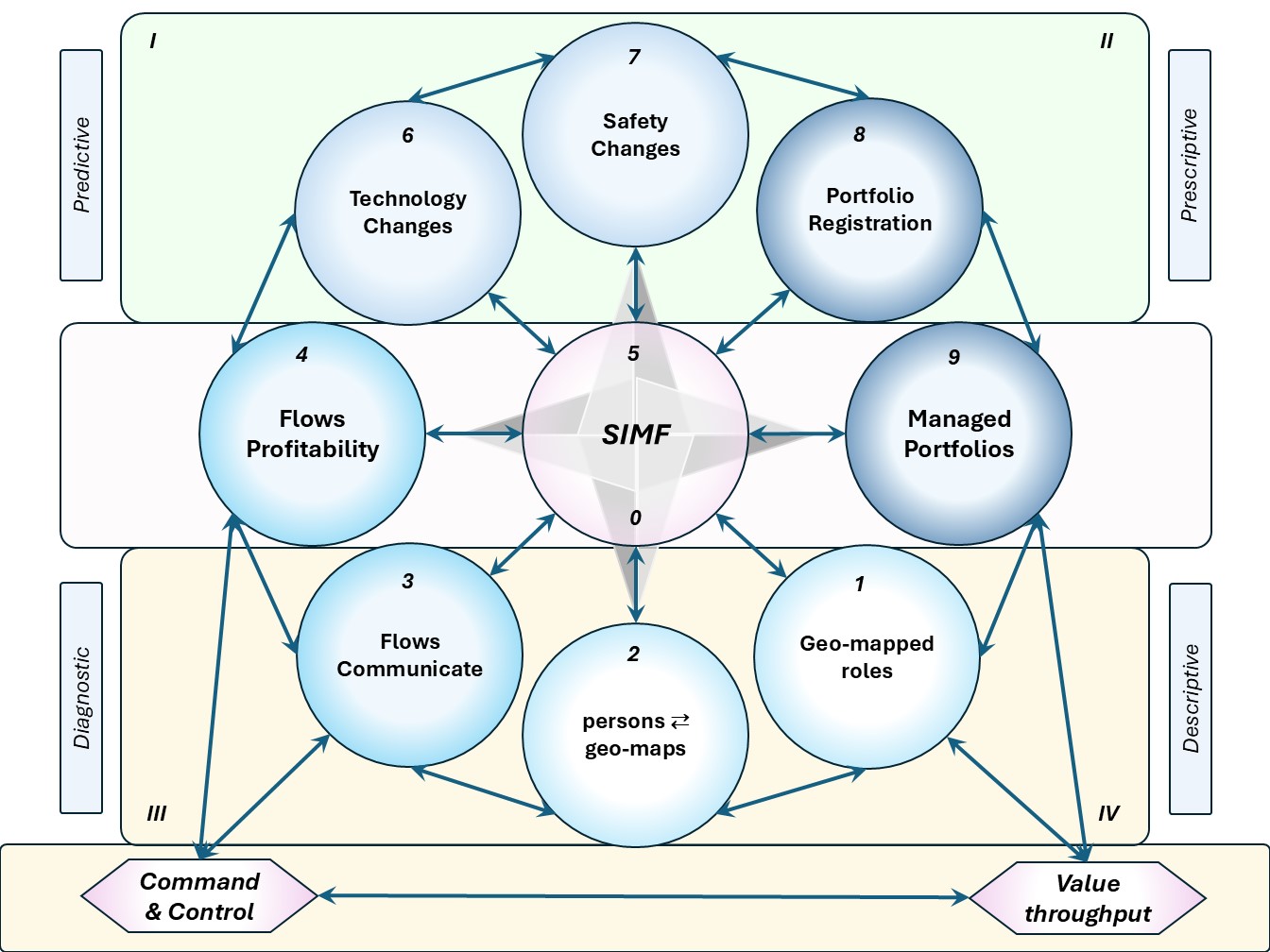

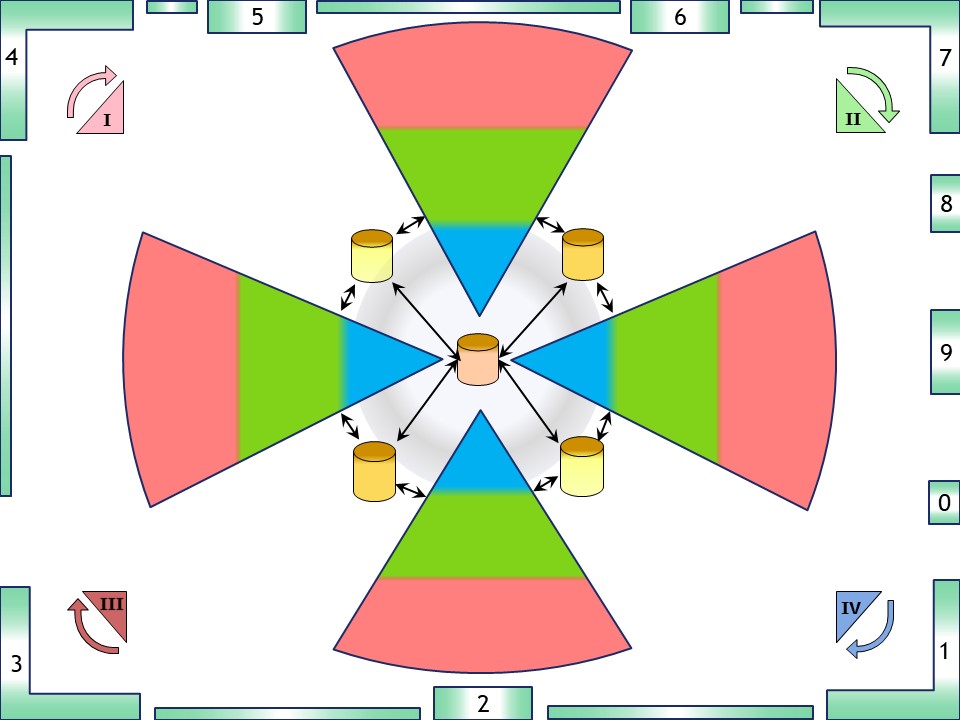

❹ Mindset prerequisites 👉🏾 Siar model

The static element, information, is easy to place. A rectangle (box) is a fit for the static representation.

😉 The model, static, covers all of:

- value stream: left to right

- PDCA, DMAIC, lean agile improvements

- simple processes: 0 - 9

- The duality between processes, transformations, and information, data

- four quadrants:

- I Push, IV Pull

- III lean agile requests. II deliveries

Processing, transforming, is a dynamic process.

A circular representation is a better fit.

The cycle:

- Ideate - Assess

- Plan - Enable

- Demand, Backend

- Frontend, Delivery

Customer interaction: bottom right side.

Supply chain interaction: bottom left side.

- Realistic human interaction & communication, nine-plane:

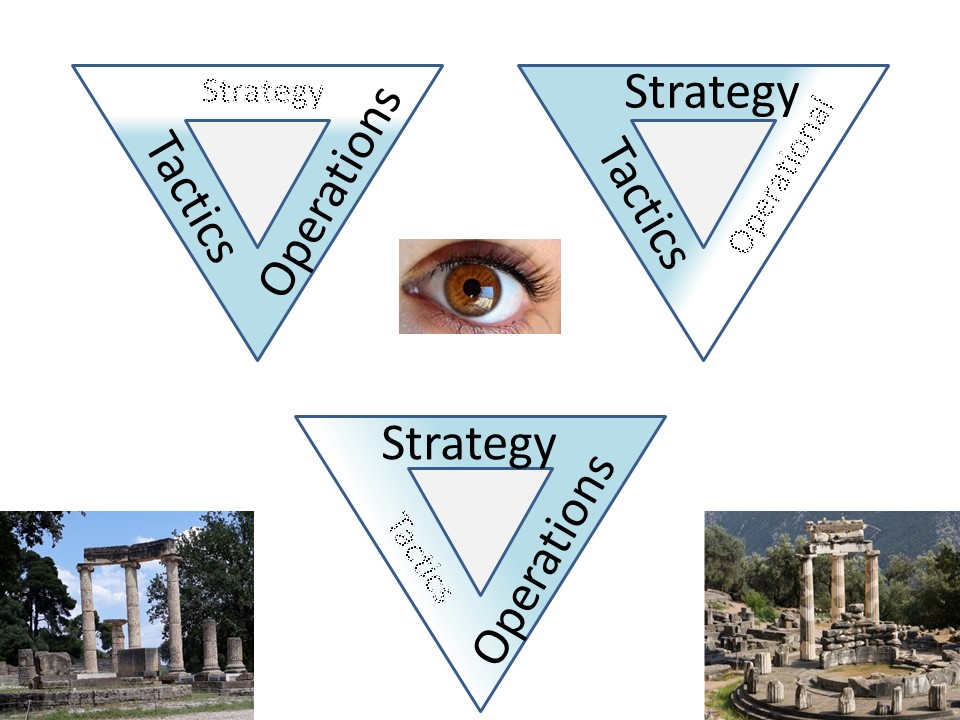

- Steer Shape Serve

- Strategy, Tactics, Operational

- Accountabilities, responsibilities, roles

Situation awareness

❺ Situational awareness or situation awareness (SA) is the understanding of an environment, its elements, and how it changes with respect to time or other factors.

Situational awareness is important for effective decision making in many environments. It is formally defined as:

🎭 the perception of the elements in the environment within a volume of time and space, the comprehension of their meaning, and the projection of their status in the near future.

The formal definition of SA is often described as three ascending levels:

- Perception of the elements in the environment,

- Comprehension or understanding of the situation, and

- Projection of future status.

People with the highest levels of SA have not only perceived the relevant information for their goals and decisions, but are also able to integrate that information to understand its meaning or significance, and are able to project likely or possible future scenarios.

These higher levels of SA are critical for proactive decision making in demanding environments.
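The three ascending levels can be sketched as three small functions. This is only an illustration under stated assumptions: the queue-length readings, the limit and the linear extrapolation used for projection are hypothetical choices, not part of the formal definition, and at least two valid readings are required.

```python
def perceive(readings):
    """Level 1, perception: keep only the observations that are present."""
    return [r for r in readings if r is not None]


def comprehend(values, limit):
    """Level 2, comprehension: interpret the values against a goal-related limit."""
    trend = (values[-1] - values[0]) / (len(values) - 1)
    return {"current": values[-1], "trend": trend, "within_limit": values[-1] <= limit}


def project(understanding, steps, limit):
    """Level 3, projection: extrapolate the trend linearly into the near future."""
    expected = understanding["current"] + understanding["trend"] * steps
    return {"expected": expected, "limit_breached": expected > limit}


# Hypothetical queue-length observations; None marks a missed measurement.
observed = [10, None, 14, 18]
values = perceive(observed)
understanding = comprehend(values, limit=25)
outlook = project(understanding, steps=3, limit=25)
print(understanding, outlook)
```

In this example the current value is still within the limit, but the projection shows the limit will be breached. Acting on that projection is the proactive decision making the definition refers to.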

The mindset of the SIAR model is used over and over again.

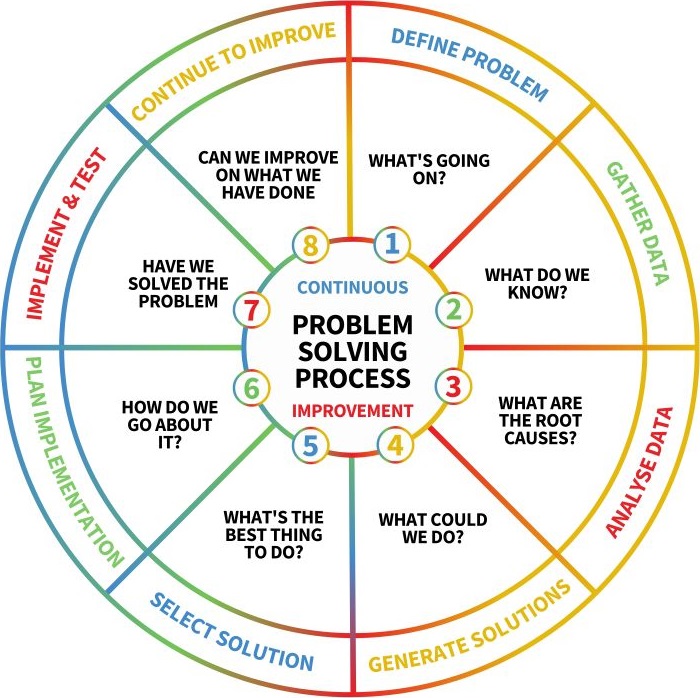

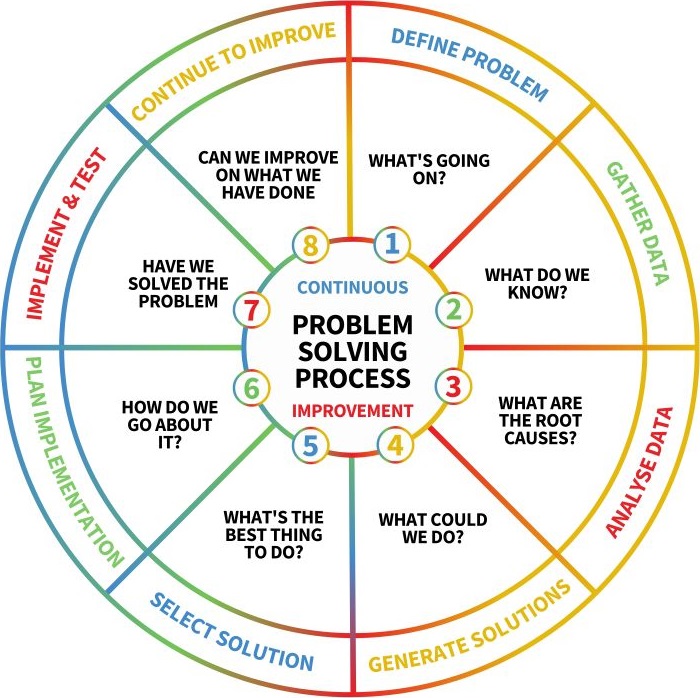

PDCA, DMAIC, OODA

❻ Focus on Outcomes or Face Failure:

- DMAIC is an abbreviation of the five improvement steps it comprises: Define, measure, analyze, improve and control.

All of the DMAIC process steps are required and always proceed in the given order.

- ADKAR is similar:

- Awareness (of the need for change)

- Desire (to participate and support the change)

- Knowledge (on how to change)

- Ability (to implement required skills and behaviours)

- Reinforcement (to sustain the change)

- PDCA (plan-do-check-act) is an iterative design and management method used in business for the control and continual improvement of processes and products.

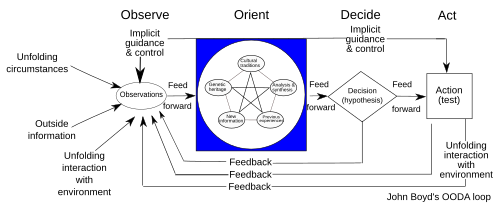

- The OODA loop (observe, orient, decide, act) is a decision-making model developed by United States Air Force Colonel John Boyd.

He applied the concept to the combat operations process, often at the operational level during military campaigns.

It is no silver bullet.

- The OODA loop is merely one way among a myriad of ways of describing intuitive processes of learning and decision making that most people experience daily.

It is not incorrect, but neither is it unique or especially profound.
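The difference between the strictly ordered DMAIC steps and the iterative PDCA and OODA loops can be sketched as below. The helper names `next_dmaic_step` and `iterate` are hypothetical and only serve the illustration.

```python
from itertools import cycle, islice

DMAIC = ("define", "measure", "analyze", "improve", "control")
PDCA = ("plan", "do", "check", "act")
OODA = ("observe", "orient", "decide", "act")


def next_dmaic_step(completed):
    """Return the next DMAIC step, enforcing the fixed order of the steps."""
    if tuple(completed) != DMAIC[:len(completed)]:
        raise ValueError("DMAIC steps must be done in the given order")
    return DMAIC[len(completed)] if len(completed) < len(DMAIC) else None


def iterate(loop, steps):
    """Walk an iterative loop such as PDCA or OODA for a number of steps."""
    return list(islice(cycle(loop), steps))


print(next_dmaic_step(["define", "measure"]))
print(iterate(PDCA, 6))
```

DMAIC is modelled here as a sequence that ends after "control", while PDCA and OODA simply start over after their last step.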

in a figure:

see right side.

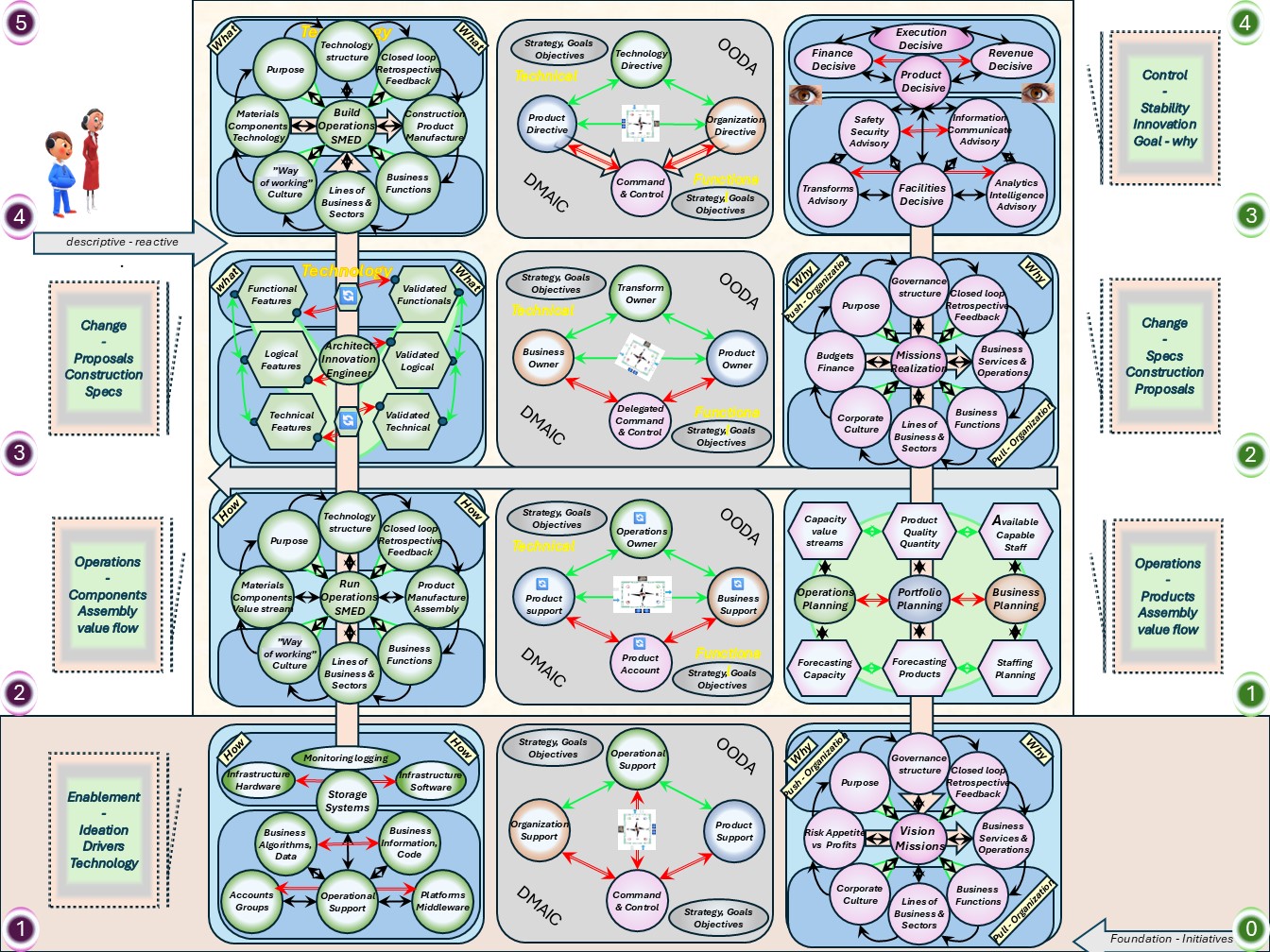

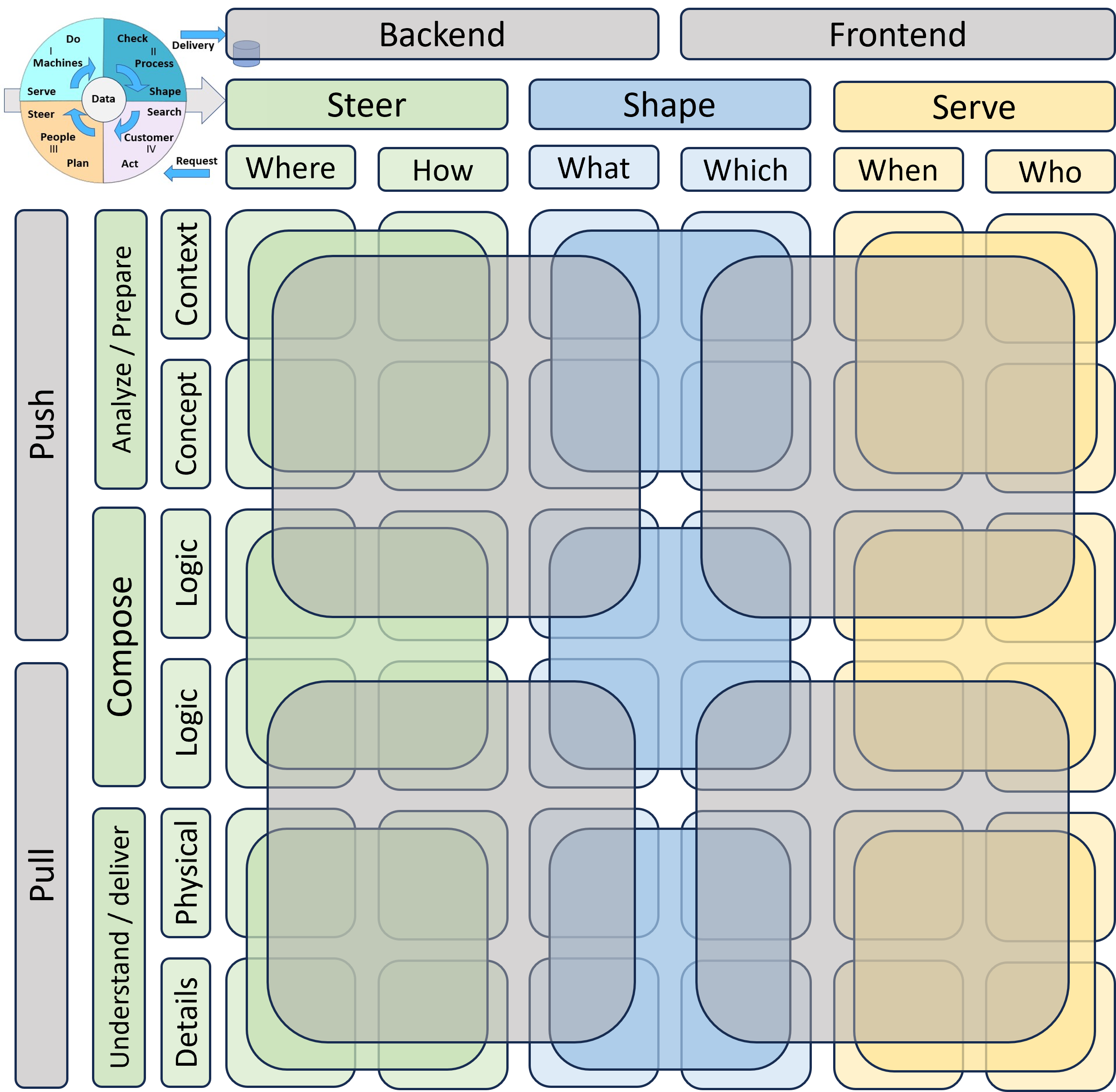

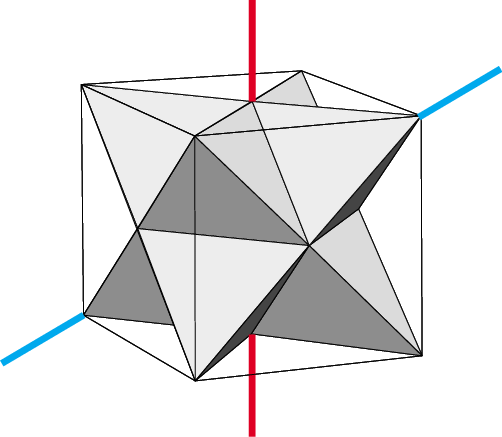

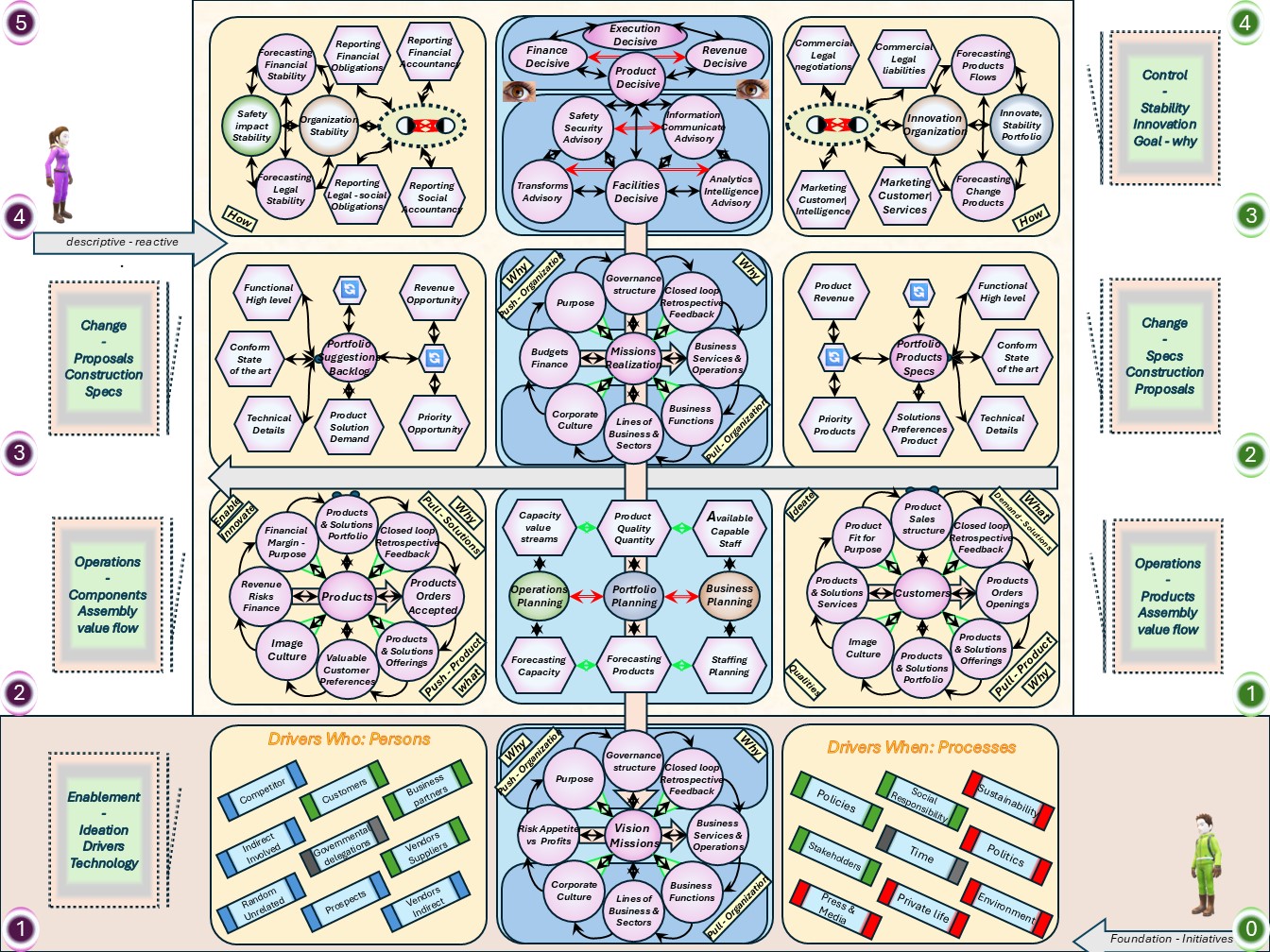

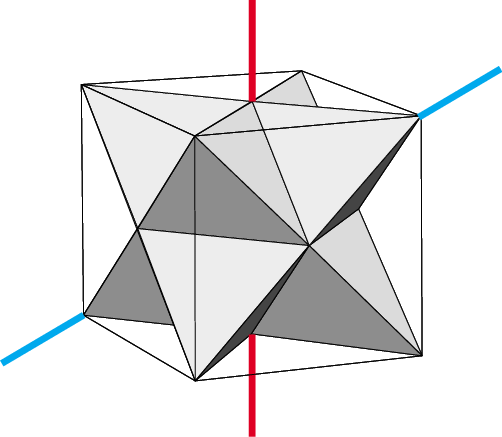

⚖ M-1.2.3 Multidimensional ordered contexts

Ordered for customer processes

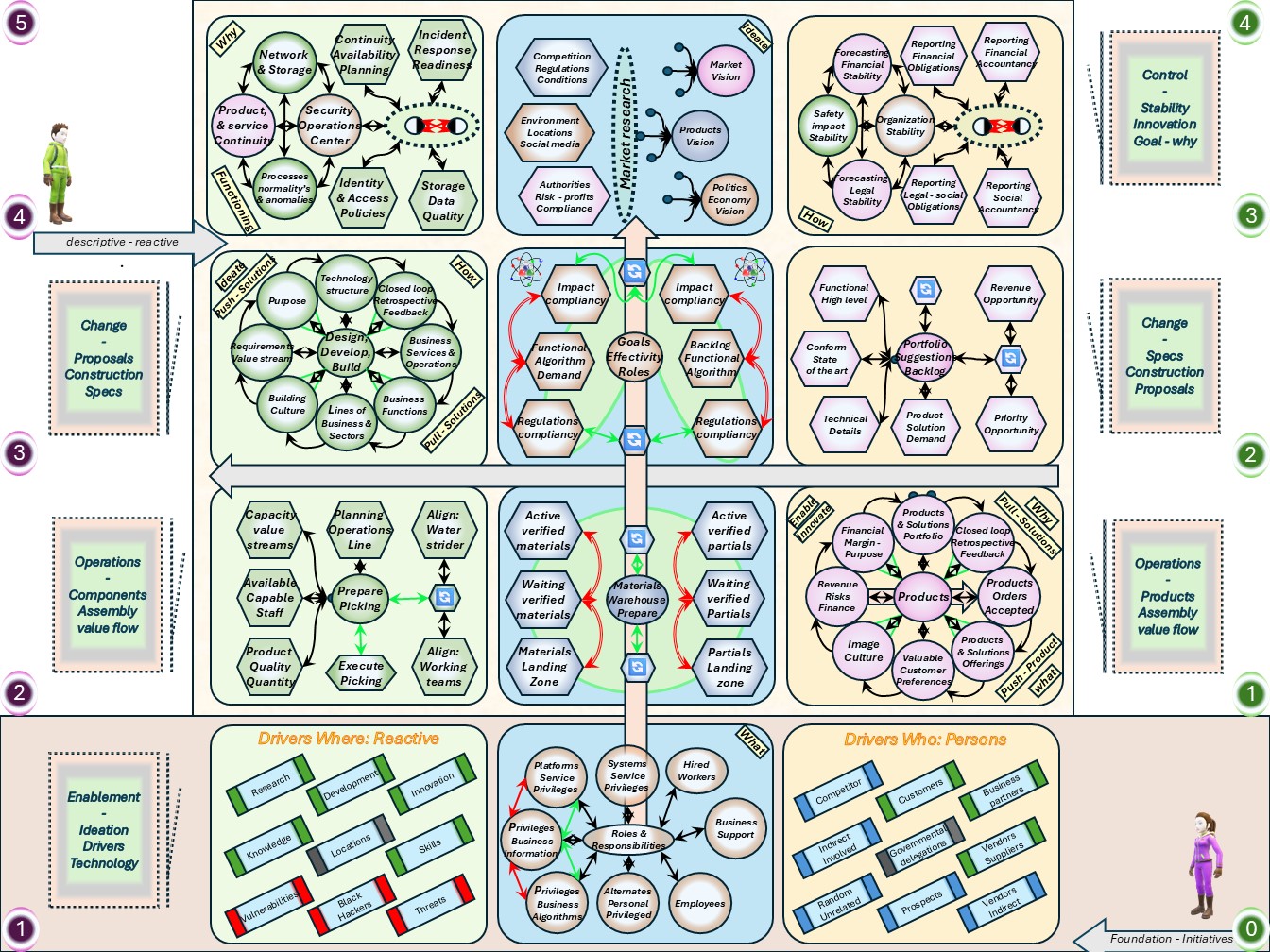

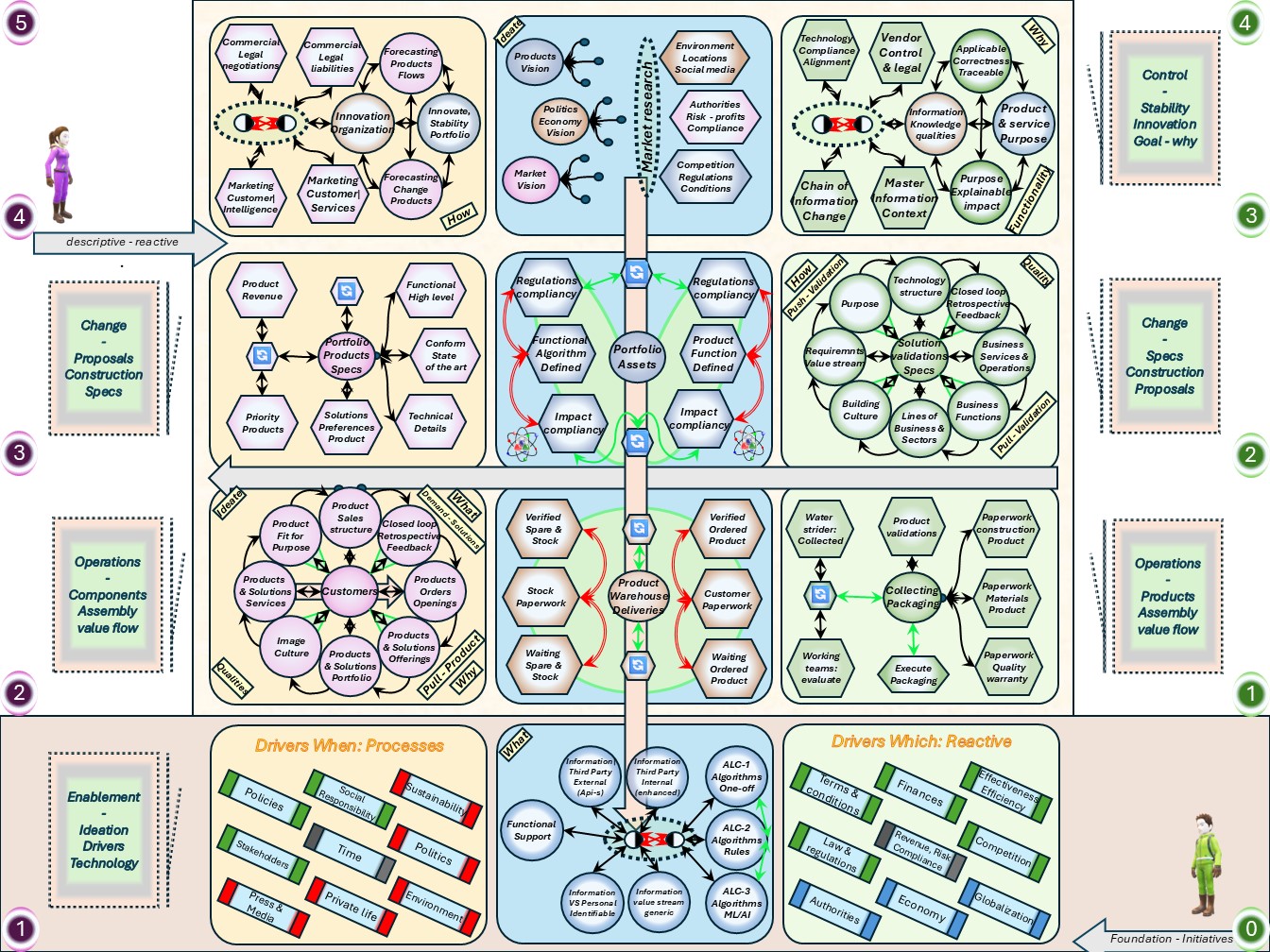

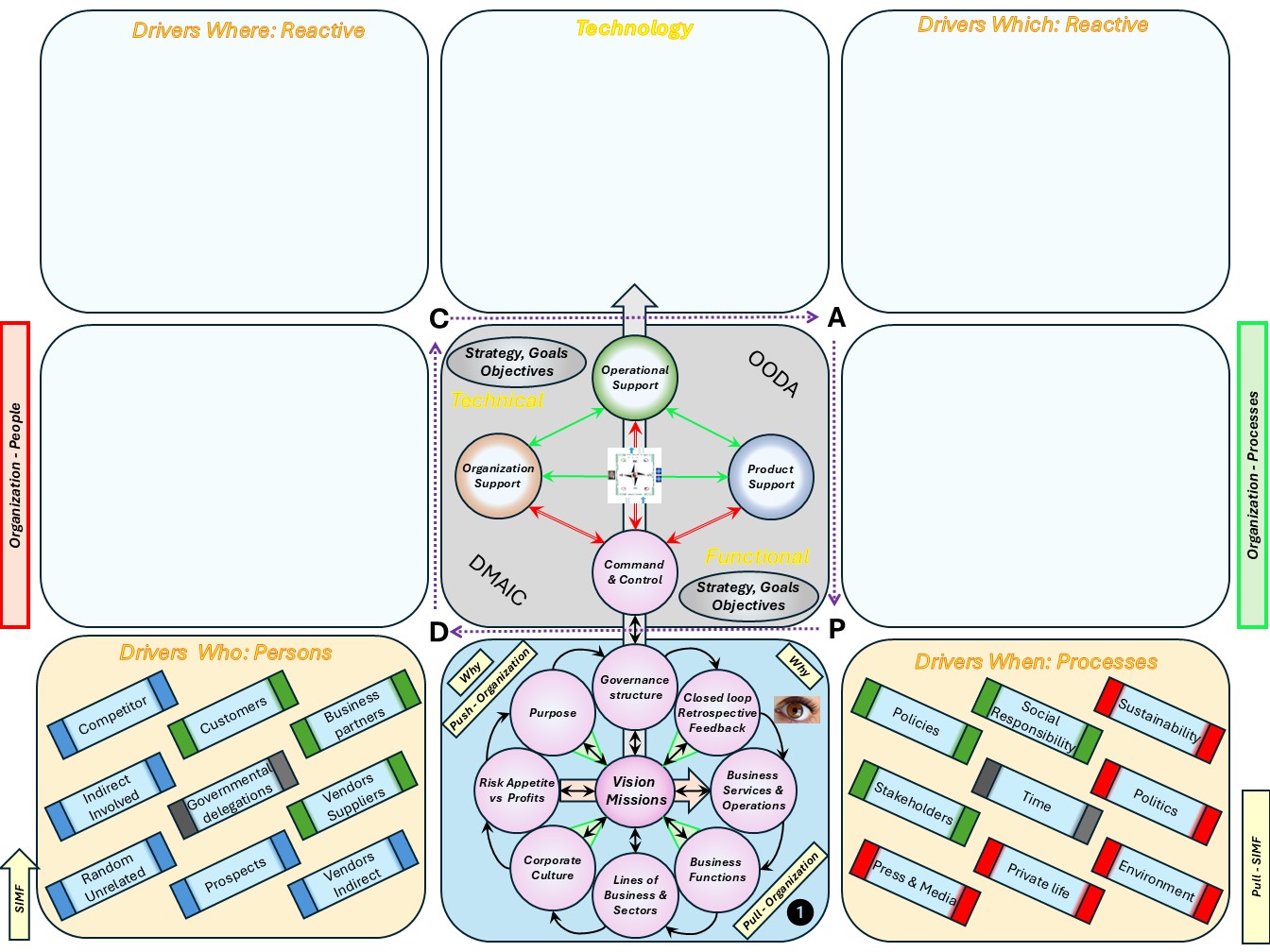

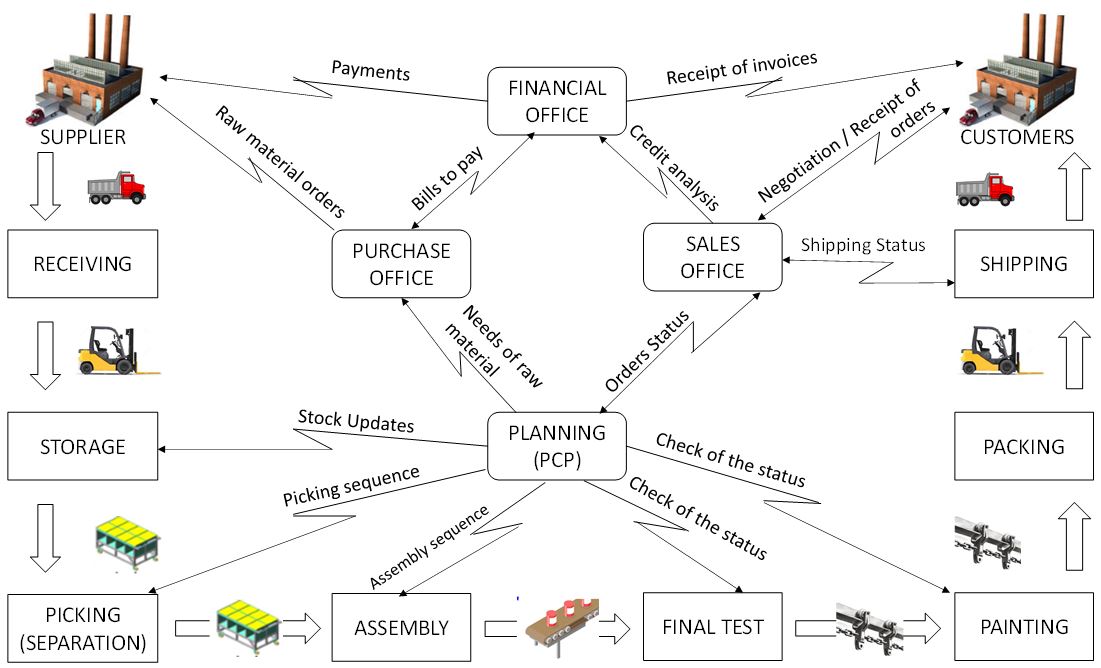

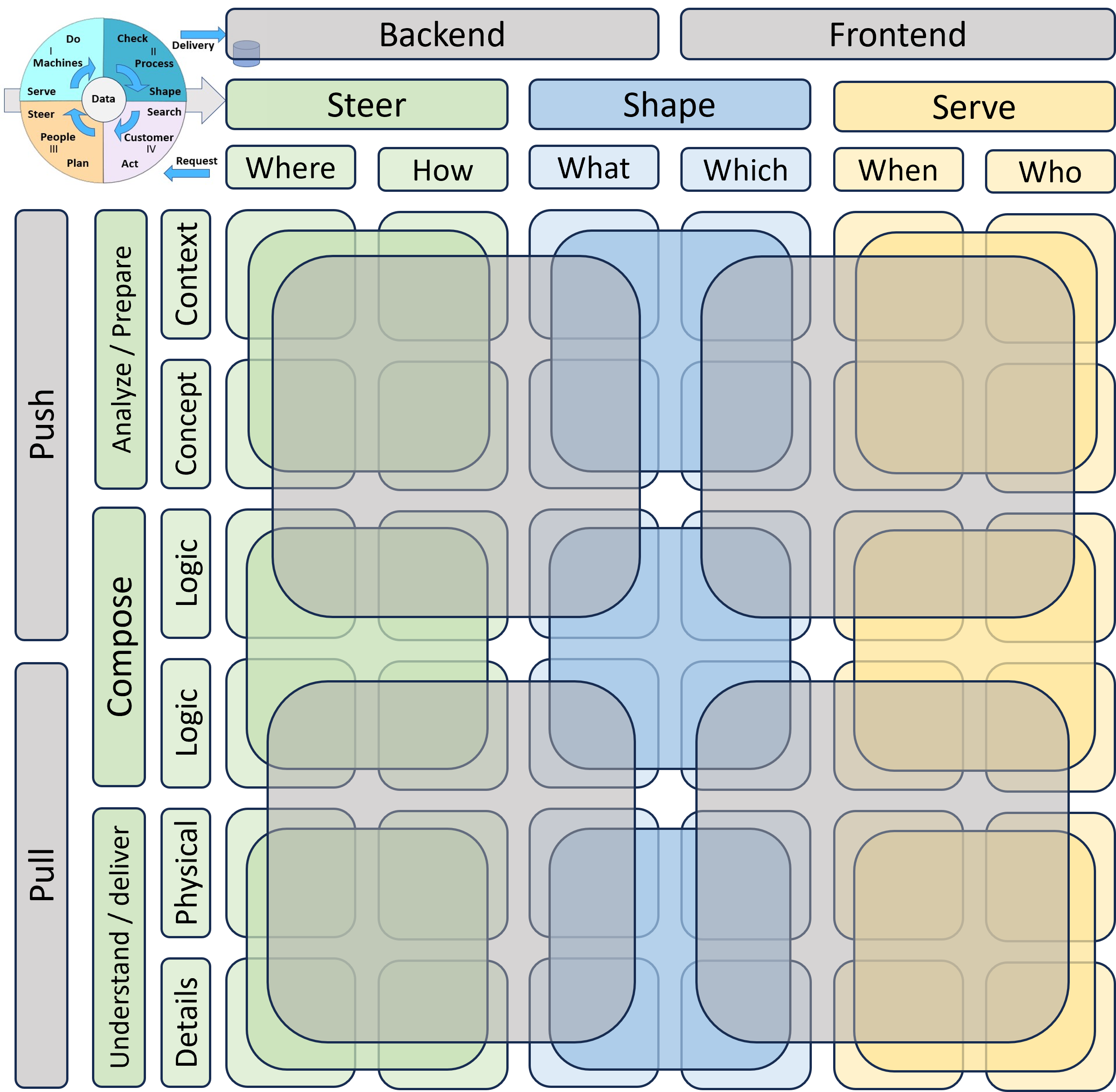

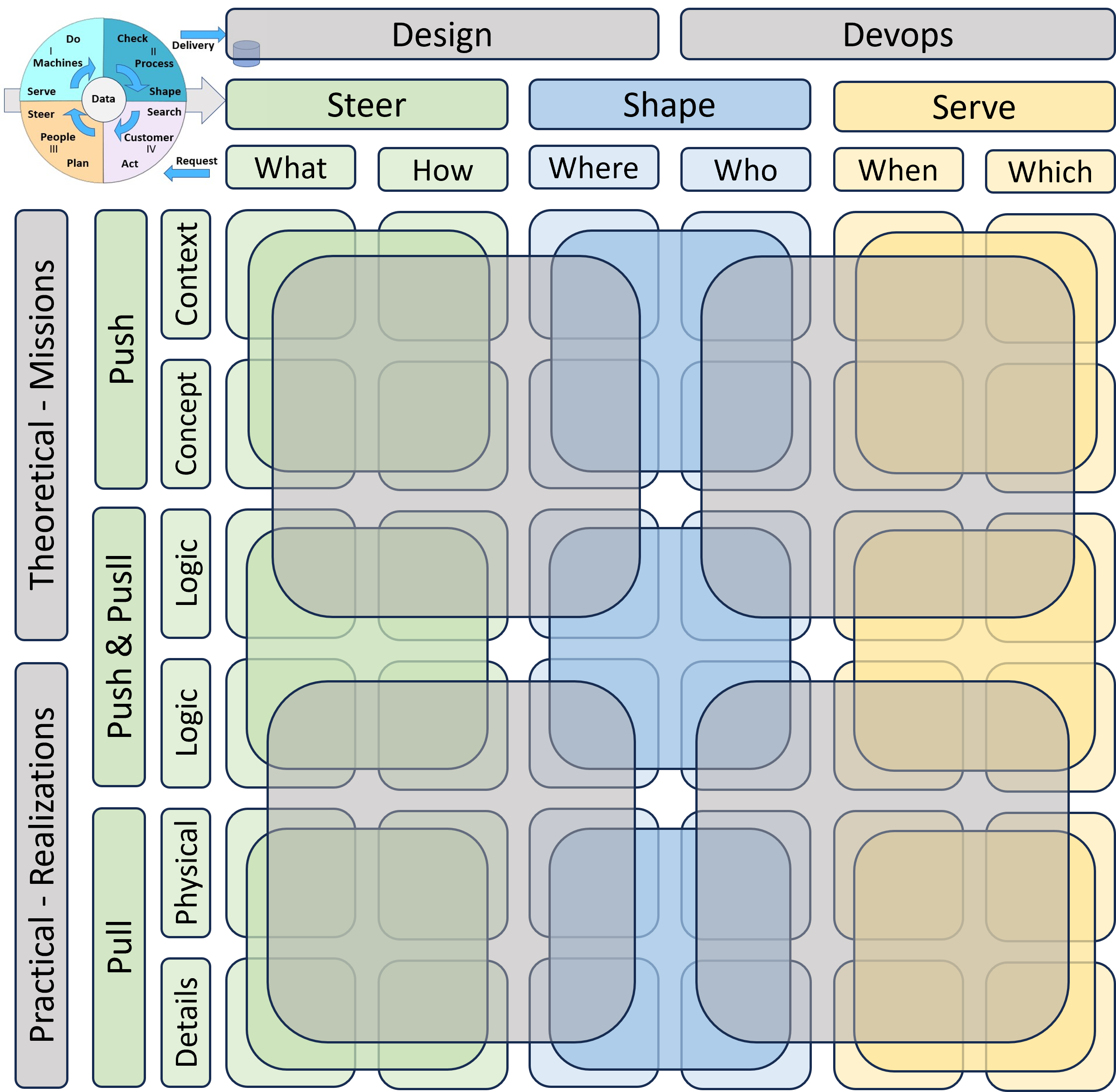

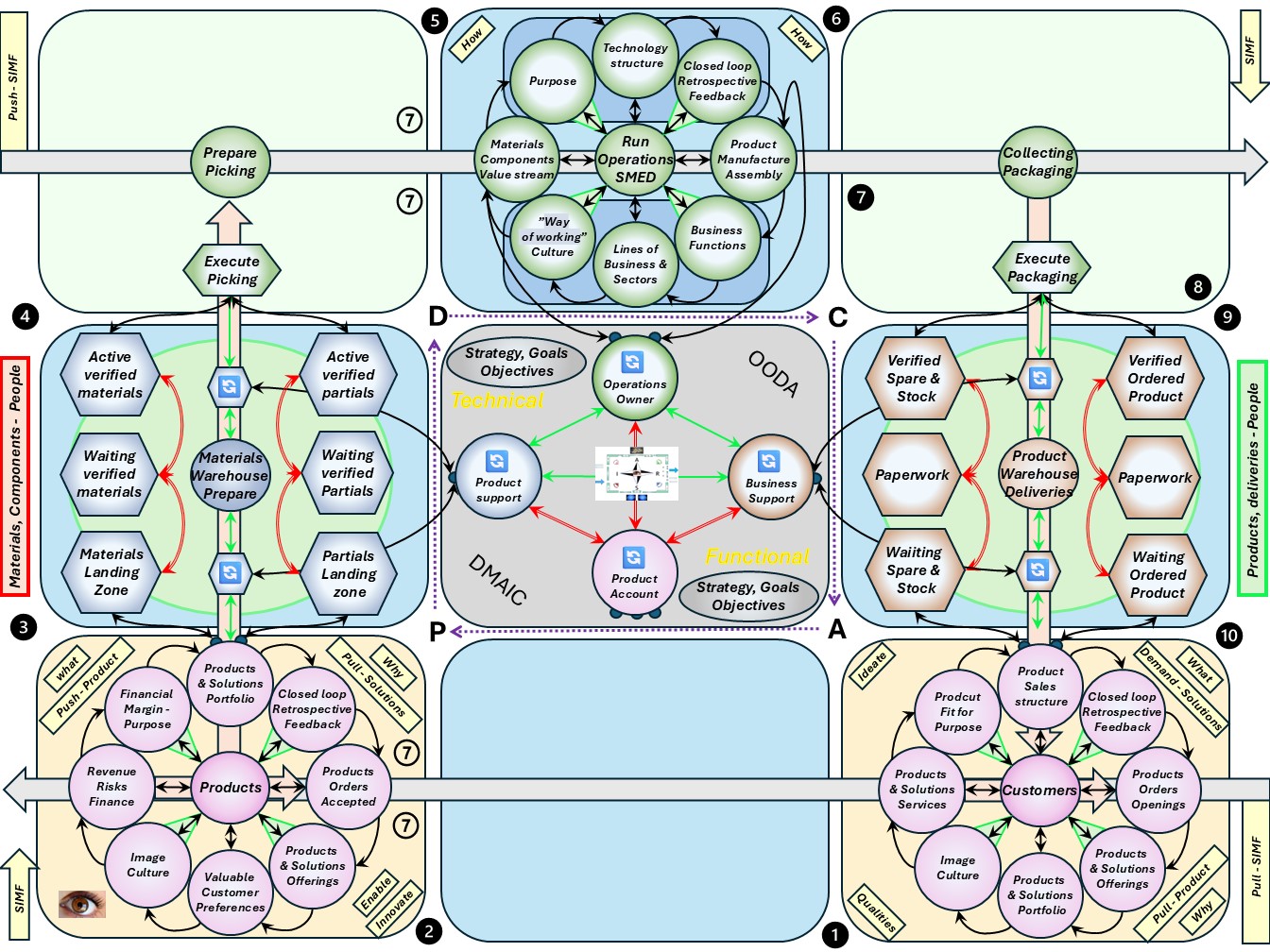

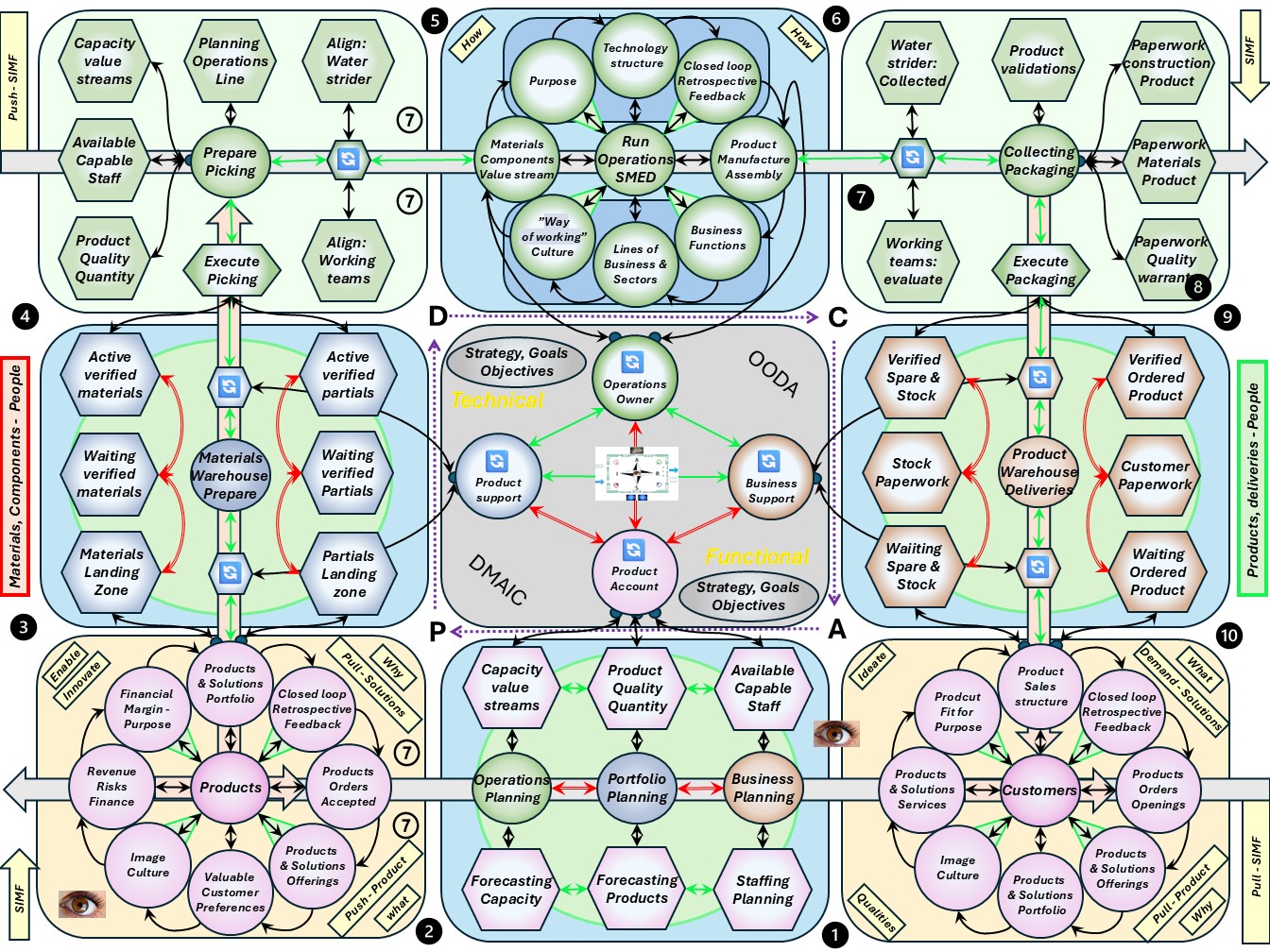

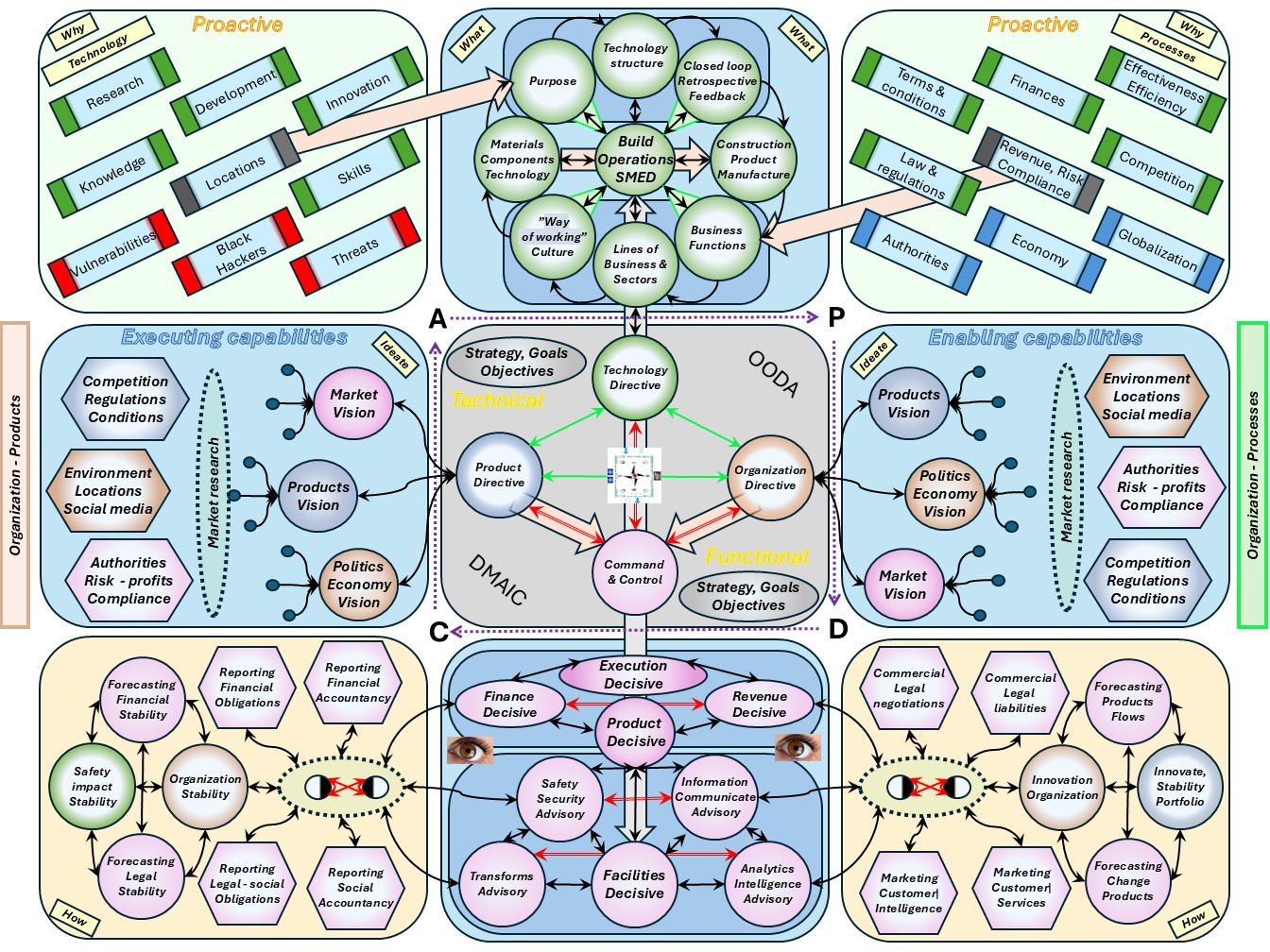

❼ The goal is drawing a floorplan (virtual).

Ordered dimensions for supporting the external customer.

This is the complete value stream.

😉 Goal: who, customer demand delivery.

Architecting, designing and building have some of the dimensions on the axes mirrored.

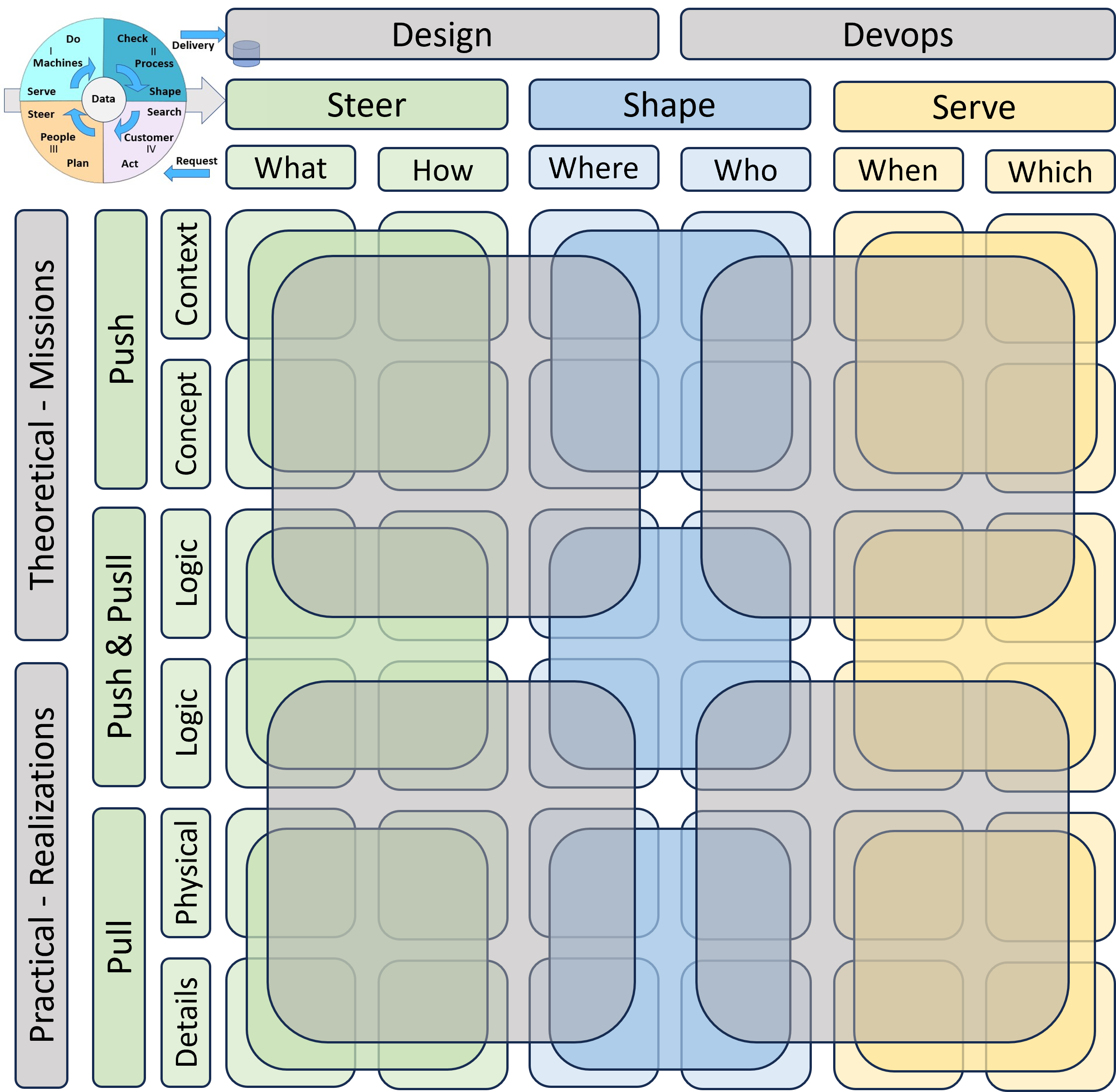

Ordered for internal processes

❽ The goal is drawing a floorplan (virtual).

Ordered dimensions for supporting the service.

Depending on the strategy, it is the innovation of a product or of part of the value stream for a complicated project product.

😉 Goal: which, product quality quantity.

Architecting, designing and building have some of the dimensions on the axes mirrored.

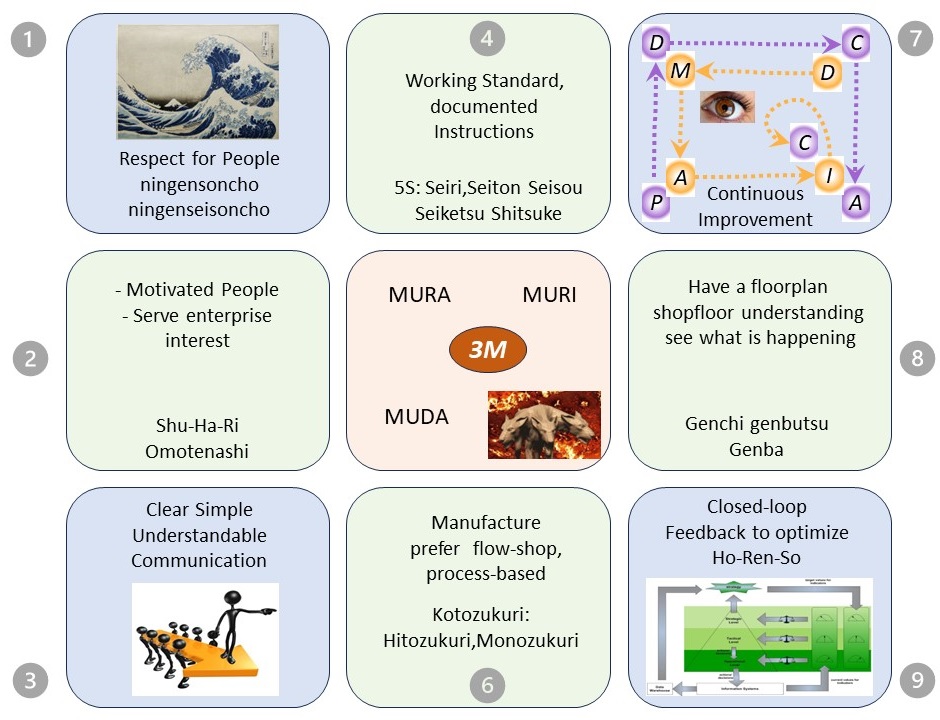

⚖ M-1.2.4 Advanced PDCA DMAIC understanding: Lean

Why: PDCA - DMAIC - SIAR , lean

❾ This is the third SIAR image: dynamic, static & stages for flow & control.

Change:

PDCA

DMAIC

- define, measure,

- analyze, improve

- control

SIAR

- situation,

- input/initiative

- activity/analyse

- result/request

OODA

- Observe

- Orient

- Decide

- Act

Information, data, processing adding measurements for understanding value creation:

- functionality: Processes, Practices, Instructions, Services

- functioning: Measurable, Efficient, Effective, Valuable

- Applied closed loops by: Data, Information, Knowledge, Insight

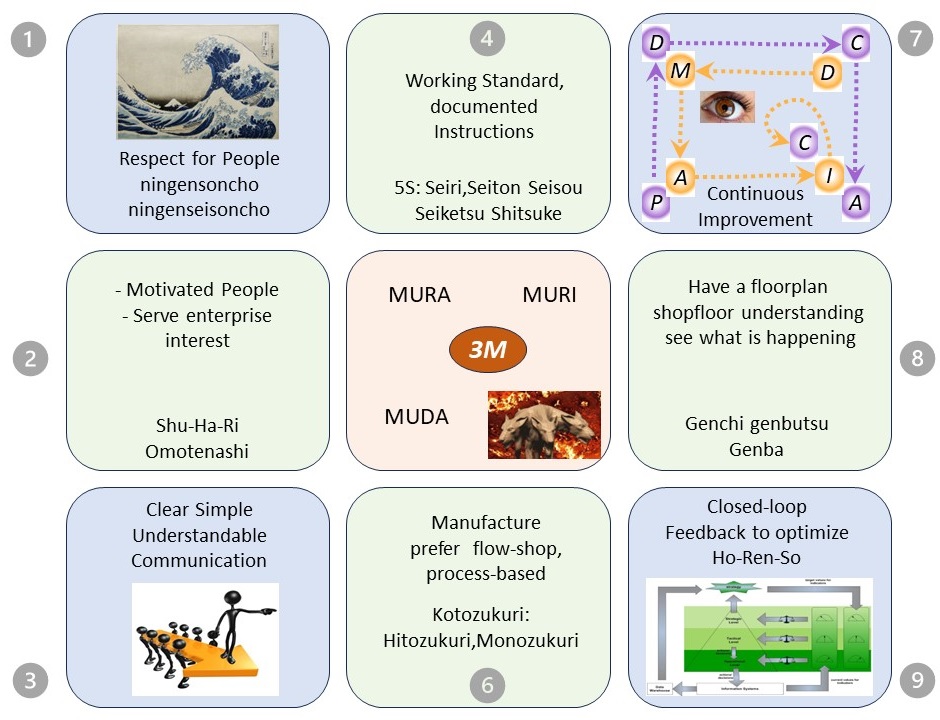

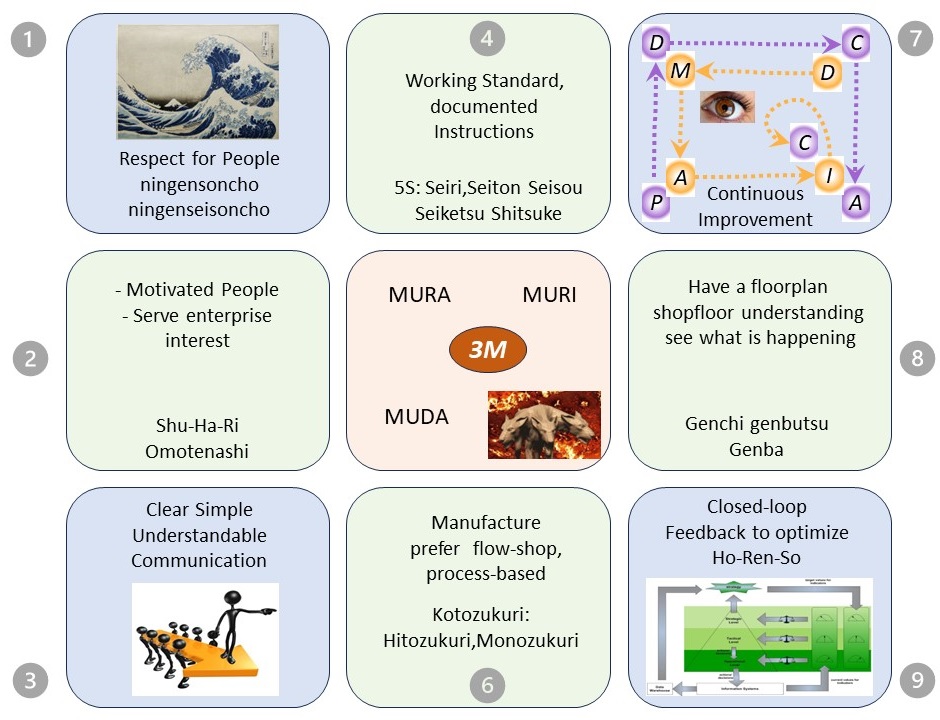

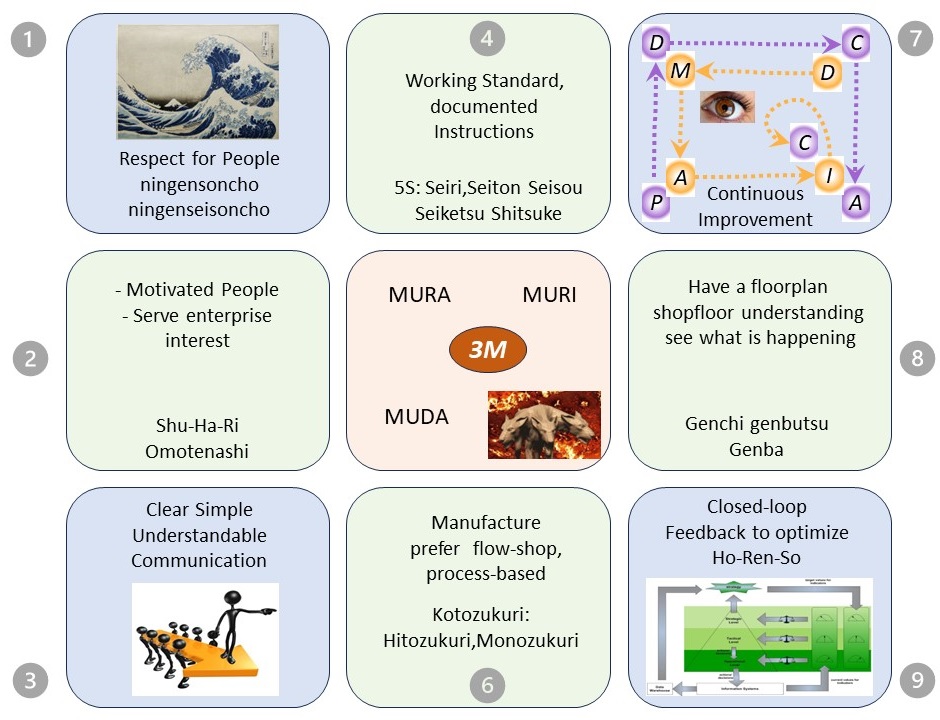

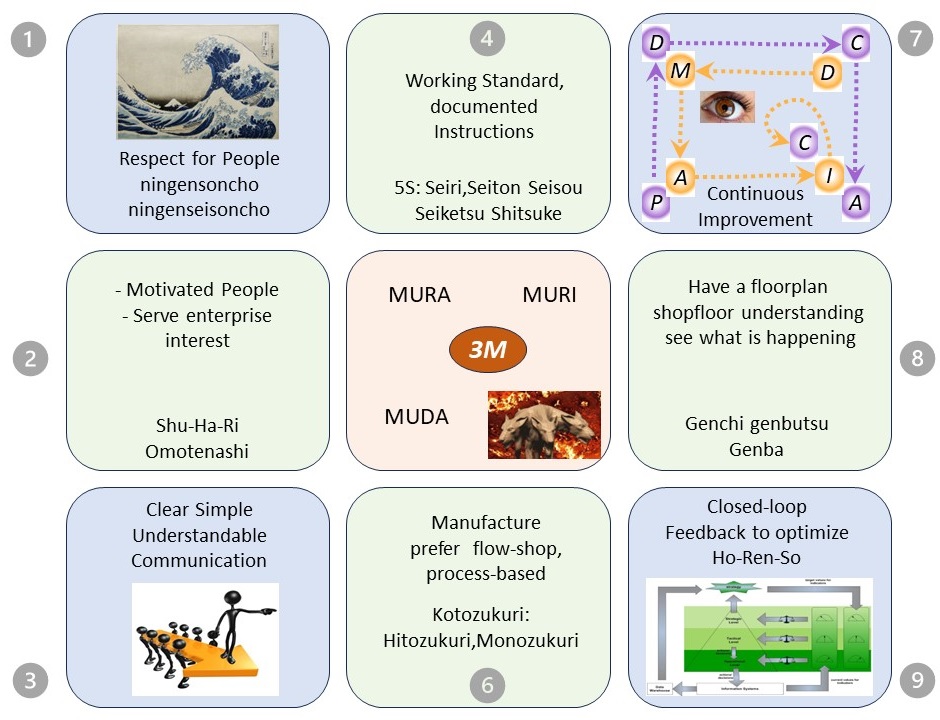

Going for lean, Genba-1

❿ Lean in a 3*3 plane is an innovative idea. It is the result of combining the SIAR model with lean.

The lean philosophy in a 9-plane figure,

see right side.

Not only:

🎭 pillars, bars,

🎭 or diagonals,

🎭 edges - moderators

🎭 but also repetitive clockwise cycles.

Each pillar is bottom up from a novice to a master mind.

- ❶ ❷ ❸ The left pillar is what organisational leaders are supposed to do.

- ❹ ❻ The middle pillar is what a technical leader can do.

- ❼ ❽ ❾ The right pillar is where an advisor can help.

- ❺ In the middle the strategic goal and the threats.

It is the organisational leaders who enable this, but where to start?

- Have the strategic goals translated into something measurable, goal: closed loops.

- Go around clockwise from the right bottom corner.

- Continuously repeat the cycle, holding off threats and improving on the set goals.

A viable system, biotope

A very different aspect of information processing is seeing it as components in a viable system.

"The formal viable system theory is normally referred to as viability theory, and provides a mathematical approach to explore the dynamics of complex systems set within the context of control theory."

This gives the reference to closed loop control: the control action from the controller is dependent on the process output.
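A closed loop can be sketched with a minimal proportional controller. The process model (the action simply adds to the output) and the gain are hypothetical simplifications chosen for illustration only.

```python
def closed_loop(setpoint, output, gain, steps):
    """Proportional controller: each action depends on the measured output."""
    history = [output]
    for _ in range(steps):
        error = setpoint - output   # feedback: compare the output with the goal
        action = gain * error       # control action derived from the error
        output = output + action    # hypothetical process: the action adds to the output
        history.append(output)
    return history


history = closed_loop(setpoint=100, output=0, gain=0.5, steps=4)
print(history)
```

Each round the measured output feeds back into the next action, so the remaining gap to the goal halves with this gain. Without the feedback the controller could not correct for what the process actually delivered.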

Chemistry is the scientific study of the properties and behavior of matter.

It is a physical science within the natural sciences that studies the chemical elements that make up matter and compounds made of atoms, molecules and ions: their composition, structure, properties, behavior and the changes they undergo during reactions with other substances.

This comes back to:

A Petri net is one of several mathematical modeling languages for the description of distributed systems.

It is a class of discrete event dynamic system. A Petri net is a directed bipartite graph that has two types of elements: places and transitions.

Like industry standards such as UML activity diagrams, Business Process Model and Notation, and event-driven process chains, Petri nets offer a graphical notation for stepwise processes that include choice, iteration, and concurrent execution.

Unlike these standards, Petri nets have an exact mathematical definition of their execution semantics, with a well-developed mathematical theory for process analysis.
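The exact execution semantics can be shown with a minimal place/transition net. This is a sketch assuming every arc has weight one; the class and the place and transition names are hypothetical and not taken from any Petri net library.

```python
class PetriNet:
    """Minimal place/transition net; the marking maps place names to token counts."""

    def __init__(self, marking):
        self.marking = dict(marking)
        self.transitions = {}  # name -> (input places, output places)

    def add_transition(self, name, inputs, outputs):
        self.transitions[name] = (list(inputs), list(outputs))

    def enabled(self, name):
        """A transition is enabled when every input place holds enough tokens."""
        inputs, _ = self.transitions[name]
        return all(self.marking.get(p, 0) >= inputs.count(p) for p in inputs)

    def fire(self, name):
        """Consume one token per input arc and produce one per output arc."""
        if not self.enabled(name):
            raise RuntimeError(f"transition {name!r} is not enabled")
        inputs, outputs = self.transitions[name]
        for p in inputs:
            self.marking[p] -= 1
        for p in outputs:
            self.marking[p] = self.marking.get(p, 0) + 1


# Hypothetical information process: a request is handled by a worker.
net = PetriNet({"request": 1, "worker": 1})
net.add_transition("process", inputs=["request", "worker"], outputs=["result", "worker"])
was_enabled = net.enabled("process")
net.fire("process")
print(net.marking, net.enabled("process"))
```

After firing, the request token has become a result token and the worker is free again. The transition is no longer enabled because no request is waiting.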

There are three types of systems within a viable one:

- Autonomic nervous system

The autonomic nervous system is a control system that acts largely unconsciously and regulates basic functions.

- Conscious decisions.

Philosophers have used the term consciousness for four main topics: knowledge in general, intentionality, introspection (and the knowledge it specifically generates) and phenomenal experience.

- Complex adaptive systems.

A CAS is a complex, self-similar collectivity of interacting, adaptive agents. Complex Adaptive Systems are characterized by a high degree of adaptive capacity, giving them resilience in the face of perturbation.

M-1.3 Steering roles tasks

Managing the building of any non-trivial construction follows several stages.

These are:

- high level design & planning

- detailed design & realisation

- evaluation & corrections

Non-trivial means it will be repeated to reach improved positions.

To manage the process, information is needed for understanding what is going on.

Without knowing the situation or the direction there is no hope of achieving a destination by improvements.

⚖ M-1.3.1 With what to start understanding steering?

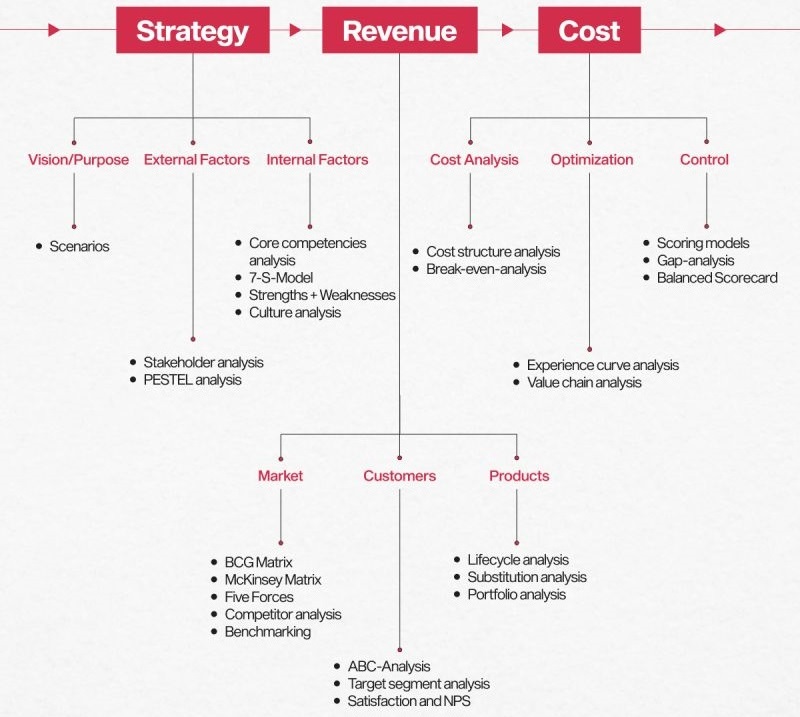

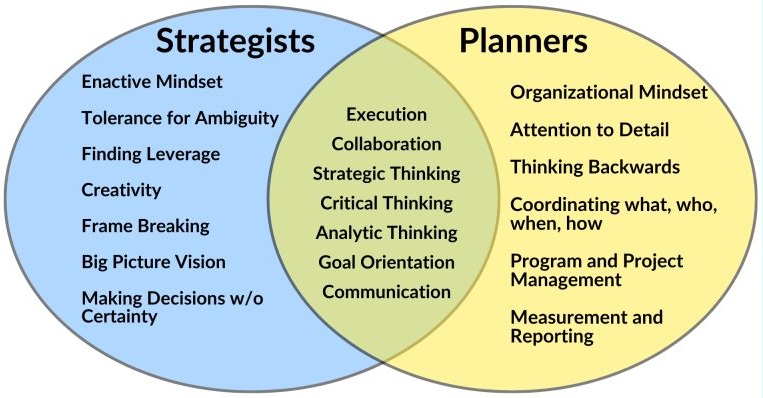

Strategy

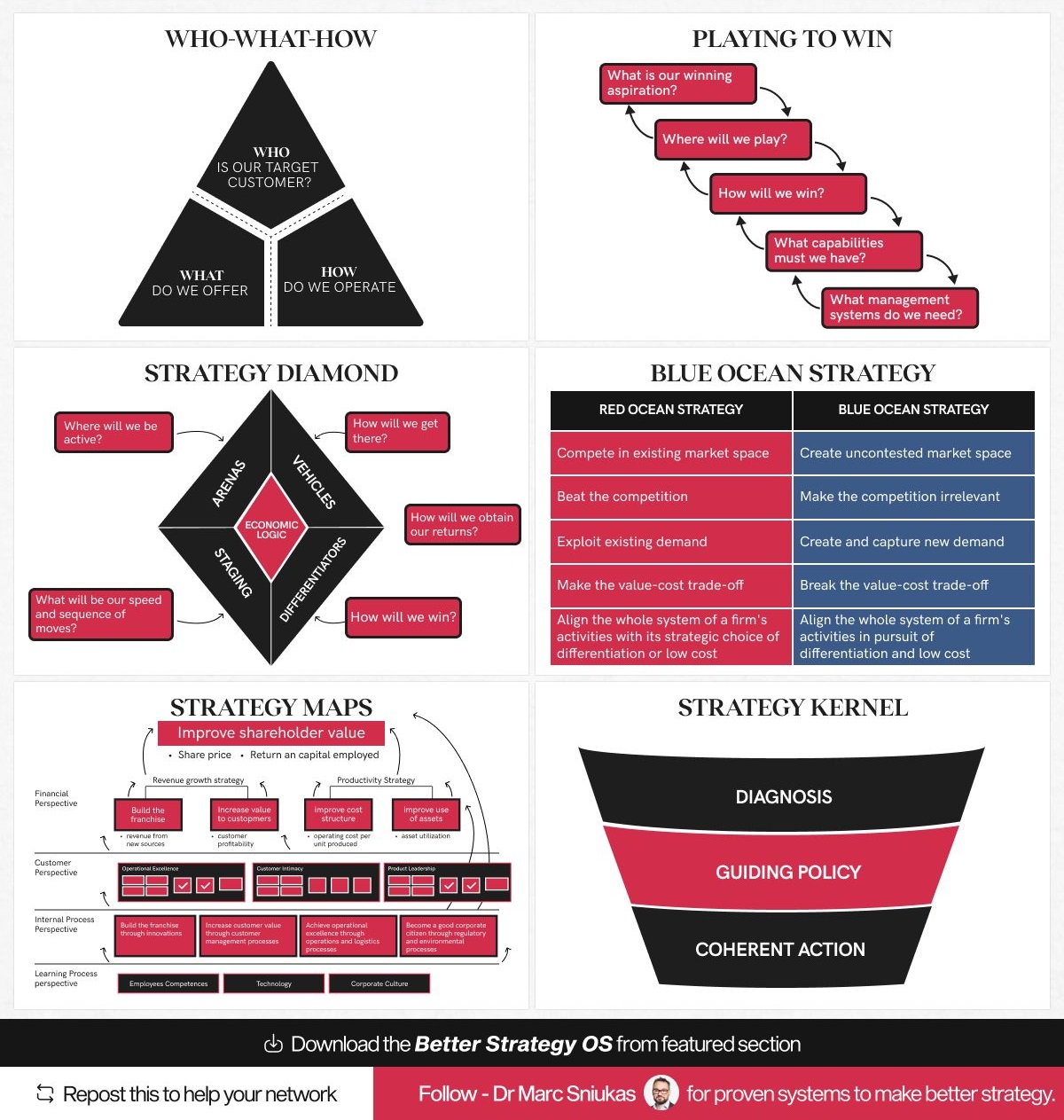

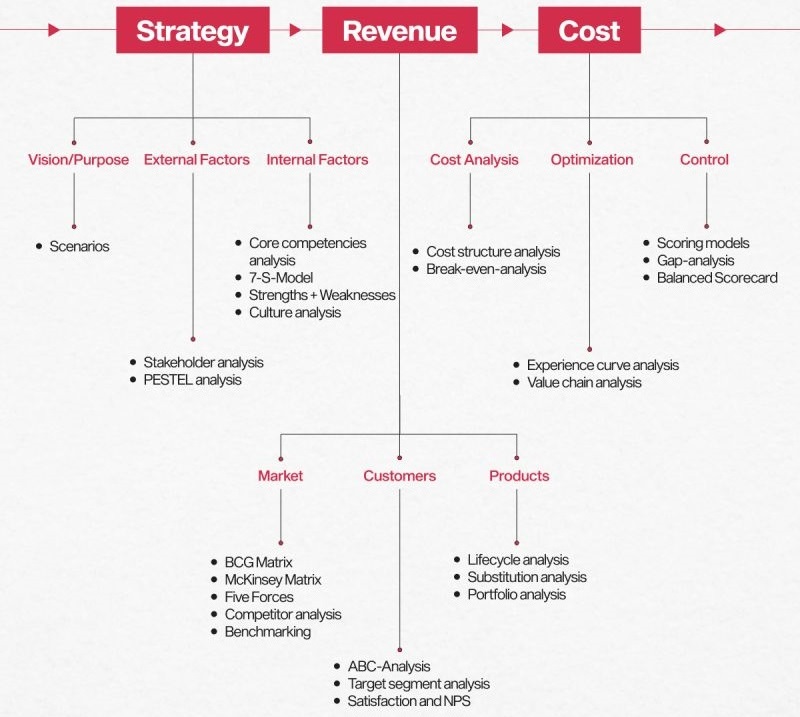

😉 Dr. Marc Sniukas made a presentation on this topic that got much attention.

It dates from 20 years ago (2004) but is still a great resource.

Last updated in 2011, the theory is generic.

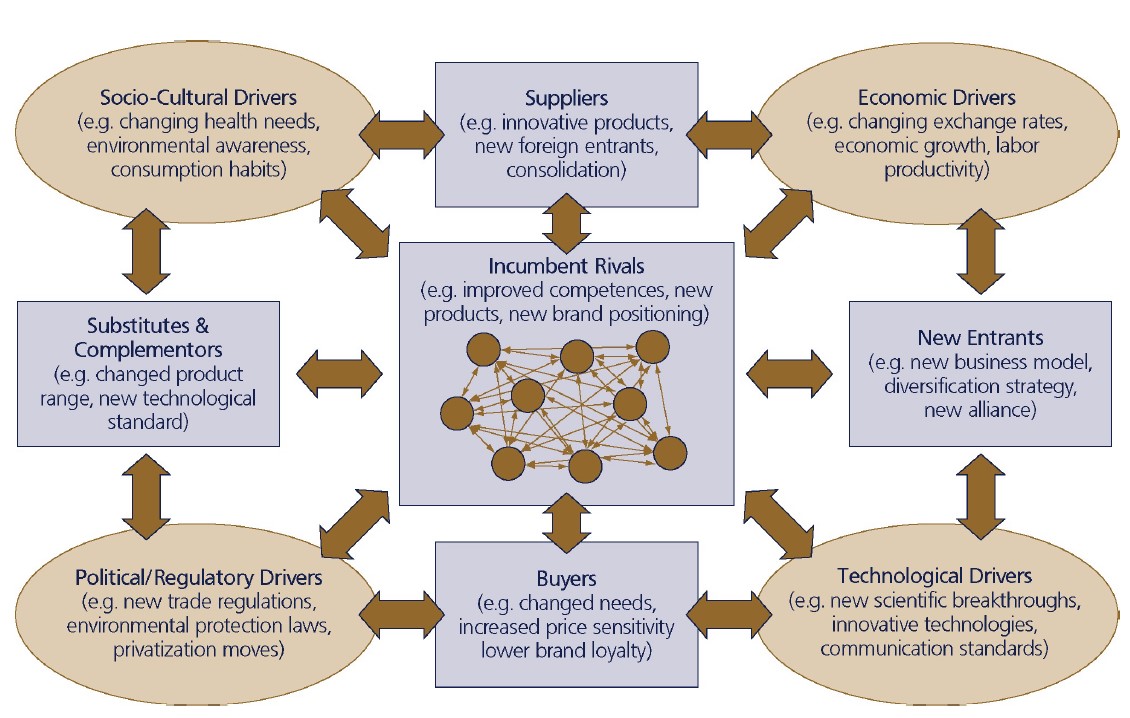

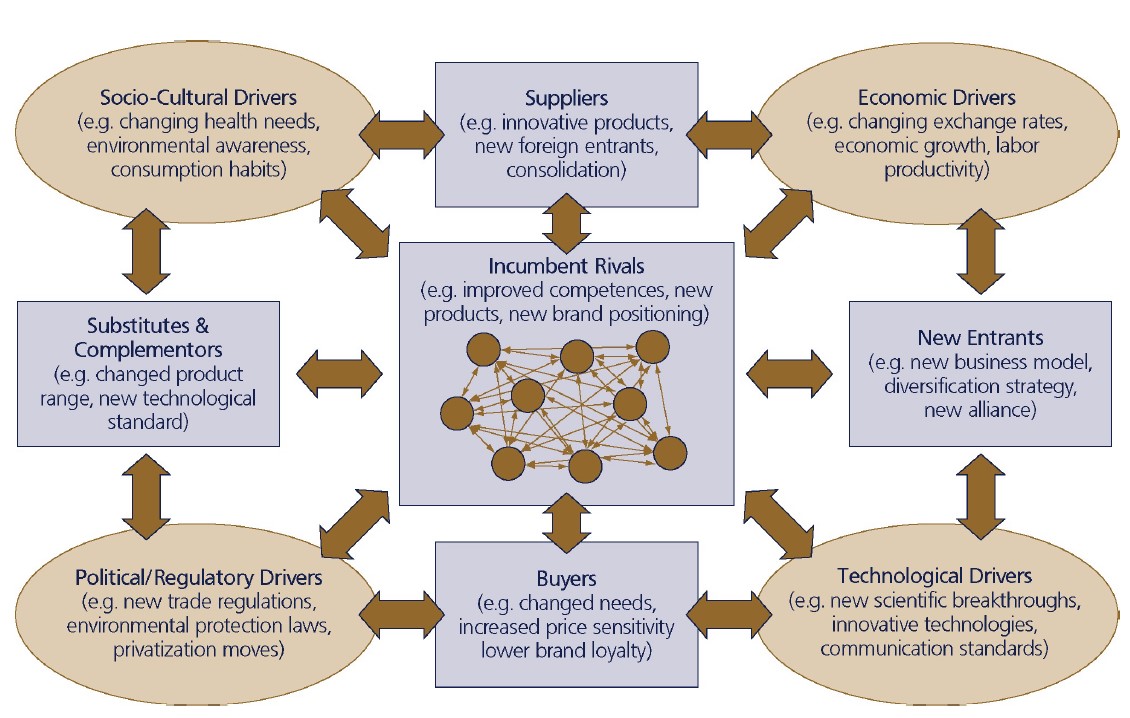

Porter's Five Forces.

It is the answer to:

What drives competition in the industry?

The question here: what are the orientation, the flow, and the change expectations?

A variation and extension to the basic version.

In a figure,

see right side.

⟲ Turn it 90° counter clockwise.

- New entrants to Act on. Situation for substitutes & complementors.

- The flow will be: Input = suppliers left to right into buyers = Result.

- The change starts:

- (pull) by political/regulatory drivers, to socio-cultural drivers.

- (push) in economic drivers to technology drivers.

Value stream

From the presentation (Sniukas): 👉🏾

An activity system is an integrated set of value creation processes leading to the supply of product and/ or service offerings.

This activity system is frequently referred to as the value chain. (Porter, 1985)

⚖ M-1.3.2 How to steer, understanding improvements

C-level Vision misunderstanding

A reaction to the LI-post (Manuel Bogado Gudsvän):

What is strategy? (2024 M. Sniukas)

Maybe to reduce the concept of Strategy to "business strategy" is the core reason on why "nobody really knows what strategy is".

Using the analogy of the elephant and different blinded people giving different opinions according to their "point of touch":

it could be compared to someone asking "what is an animal?" and the observer answers: "An elephant".

Because he might see all of the elephant, but despite that, an elephant per se is not the real definition of an animal, but an example.

Therefore, in the case of "Strategy" the true source must not be "business", but the "inventors" of the word "Strategy".

➡ What was the ancient Greek art of the strategos?

In this case and according to historians and philosophers (not business people) Alexander the Great and other Greek strategists are closer to Strategy than any business guru.

Because it is the original and fundamental source.

Once we know the mind of Alexander, Philip II, Julius Caesar, Hannibal, only then we could obtain the secret of Strategy (that said by Napoleon himself).

If we know the fundamentals, then we know all the colors.

Afterwards colors can be applied anywhere (e.g. business) and in different combinations according to the power of execution of the strategist.

Steer in continuous improvement

Kaizen is much more than process improvement. It's a self improvement process!

Respect for People starts with each one of us, individually! (repost of David Bovis, M. npn)

Got the reply (Kevin Kohls, Oct 2024):

😉

An ideal vs. reality. It's great to hope this occurs, but to rely on it means we are "addicted to hopium."

Any change to a current established methodology, valid or not, will cause conflict. ...

A key aspect of Holistic Thinking is understanding "how to cause the change.":

- What is the motivation behind the business culture we live in?

- What is the goal, or a requirement for the goal, of every one of these businesses?

- What is the one metric for which CEOs, the ultimate customers/supporters of our CI process, are held accountable?

- What would every employee, especially those in a union, like to see improve due to a successful Kaizen event that also positively impacts their personal lives?

- What would an employee like to see as a cause-and-effect outcome of their efforts?

Hitozukuri (sometimes spelled Hitozokuri) is a Japanese concept that translates to "building people" or "developing people."

⚖ M-1.3.3 Strategy Who: Hoshin Kanri, Vision, Mission

C-level Vision retrospective

What the heck is strategy anyway? (2024 M. Sniukas)

For more than 20 years, I've been exploring the answer to that question.

About 20 years ago, I made a presentation on this topic that got much attention.

This presentation, viewed by hundreds of thousands and ranked in the top 1% on Slideshare, was a significant milestone in my journey.

It was a big deal at the time, and its popularity speaks to the relevance of the topic.

I've updated the presentation a few times since then.

Here's a version from 2011 that still serves as a great introduction.

If I were to do it again today, I would definitely make some changes to the layout and design. ;-)

Overall, it's still a good way to understand the basics of strategy.

The reference: Strategy: Process, Content, Context, 3rd edition, De Wit & Meyer, Thomson Learning, 2004.

"Nobody really knows what strategy is."

Strategy Formation Activities:

😉 Where to start and how to proceed with these 9 pointers in the figure is, by their positions, an unintended good fit for the SIAR model as extended into Lean understanding.

C-level Vision or plan retrospective

From a collection on what strategy is about:

Most CEOs think they have a strategy (2024)

😱

Most CEOs think they have a strategy, they only have a plan. Here’s the difference:

- Strategy is the set of choices that positions you to win.

- Planning is just organizing who does what by when.

Too many companies treat planning as a stand-in for strategy.

The result is

- Without a strategy we fill our time with:

- ... what we want, or

- ... what we think the boss wants or,

- ... by reacting

- Without a strategy, time and resources are wasted on piecemeal, disparate activities.

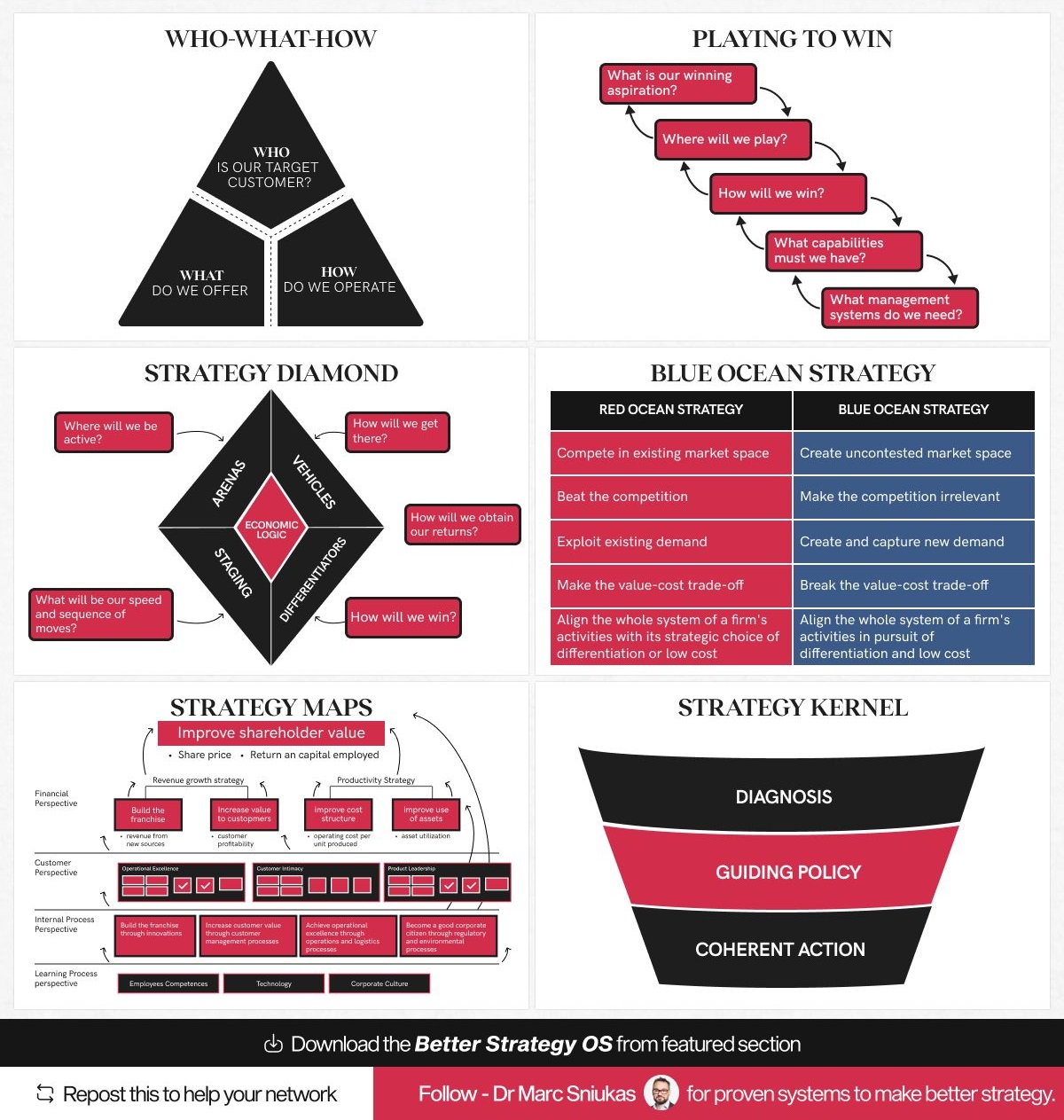

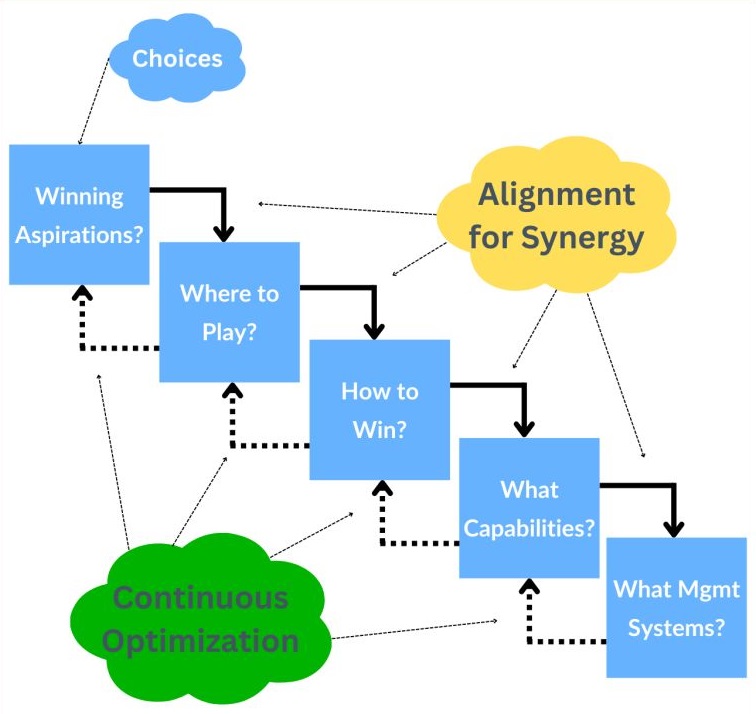

C-level Vision, choices in managing creating strategies

😉

Tired of your strategy falling flat? (2024)

In today's fast-paced business environment, having a clear and effective strategy is more crucial than ever.

Here are six strategy frameworks that can help you structure your thinking, explore innovative paths, and ultimately craft breakthrough strategies:

- Who, What, How: (Costas Markides)

- Who should we target as our customers?

- What products and services should we offer?

- HOW can we best deliver this offering to these customers?

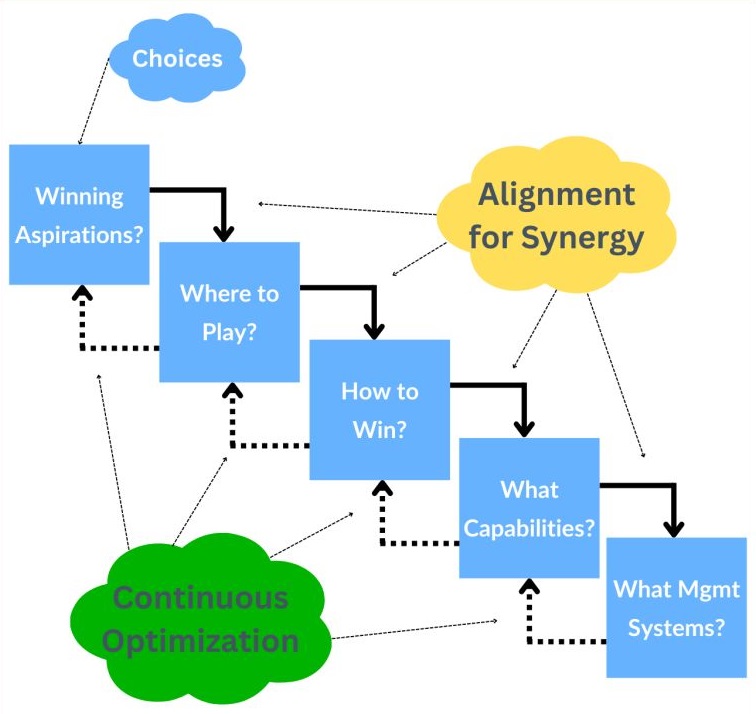

- Playing To Win Strategy Choice Cascade (PtW): (Roger Martin)

- Winning Aspirations: Our ambitions

- Where to play: Customers, offerings, geographies, channels

- How to win: Low cost or differentiation

- Capabilities: Distinctive capabilities that underpin our HtW?

- Management System: How we build and maintain the capabilities

- Strategy diamond - Similar to the PtW cascade, with a couple of additions.

What I like about the Diamond is its distinction between "differentiators" and "economic logic."

Capabilities and Management Systems are referred to as "supporting organizational configuration" in this framework, but outside of the Diamond.

(Donald Hambrick and James Fredrickson)

- How to win with customers.

- How to win as a business.

- Blue Ocean Strategy (Chan Kim and Renée Mauborgne)

I think of BOS as less of a strategy framework per se and more of a set of tools that helps you generate answers you can plug into PtW or the Strategy Diamond.

BOS is especially suited for creating new growth businesses, but it can also help you explore ideas for differentiation in your existing businesses.

Strategy is about being different, which requires innovation.

- Strategy Maps (Robert Kaplan and David Norton)

As strategy needs to be coherent, Strategy Maps help to visualize how the various parts of your strategy fit together and how your choices reinforce each other.

They are good for checking whether your decisions make sense.

Also, think about the various perspectives as follows:

- Financials: Short-term

- Customers: External

- Operations: Internal

- Learning: Long-term

- The Kernel Of Good Strategy (Richard Rumelt)

The core of good strategy is focusing on the crucial challenges that must be solved to achieve your ambitions.

- Provides a diagnosis of that challenge

- Outlines how you're going to deal with the challenge

- A coherent set of actions to implement your solution

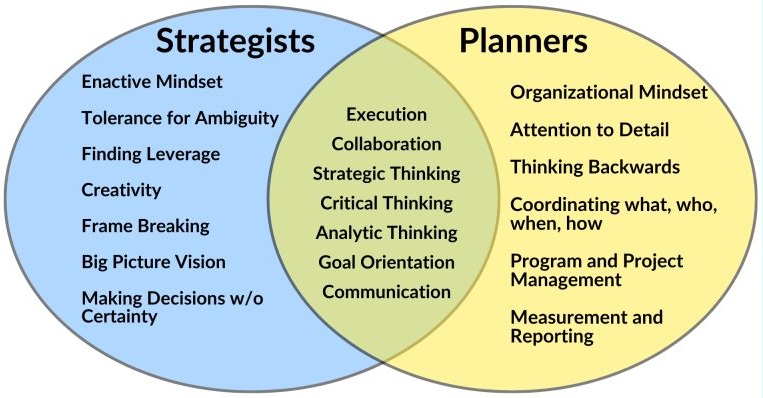

In a figure:

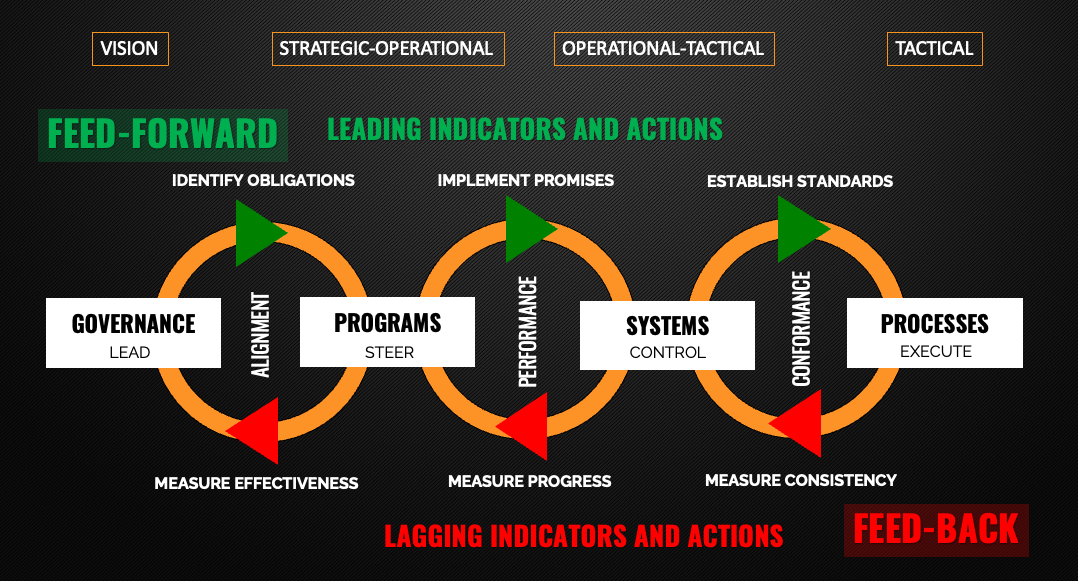

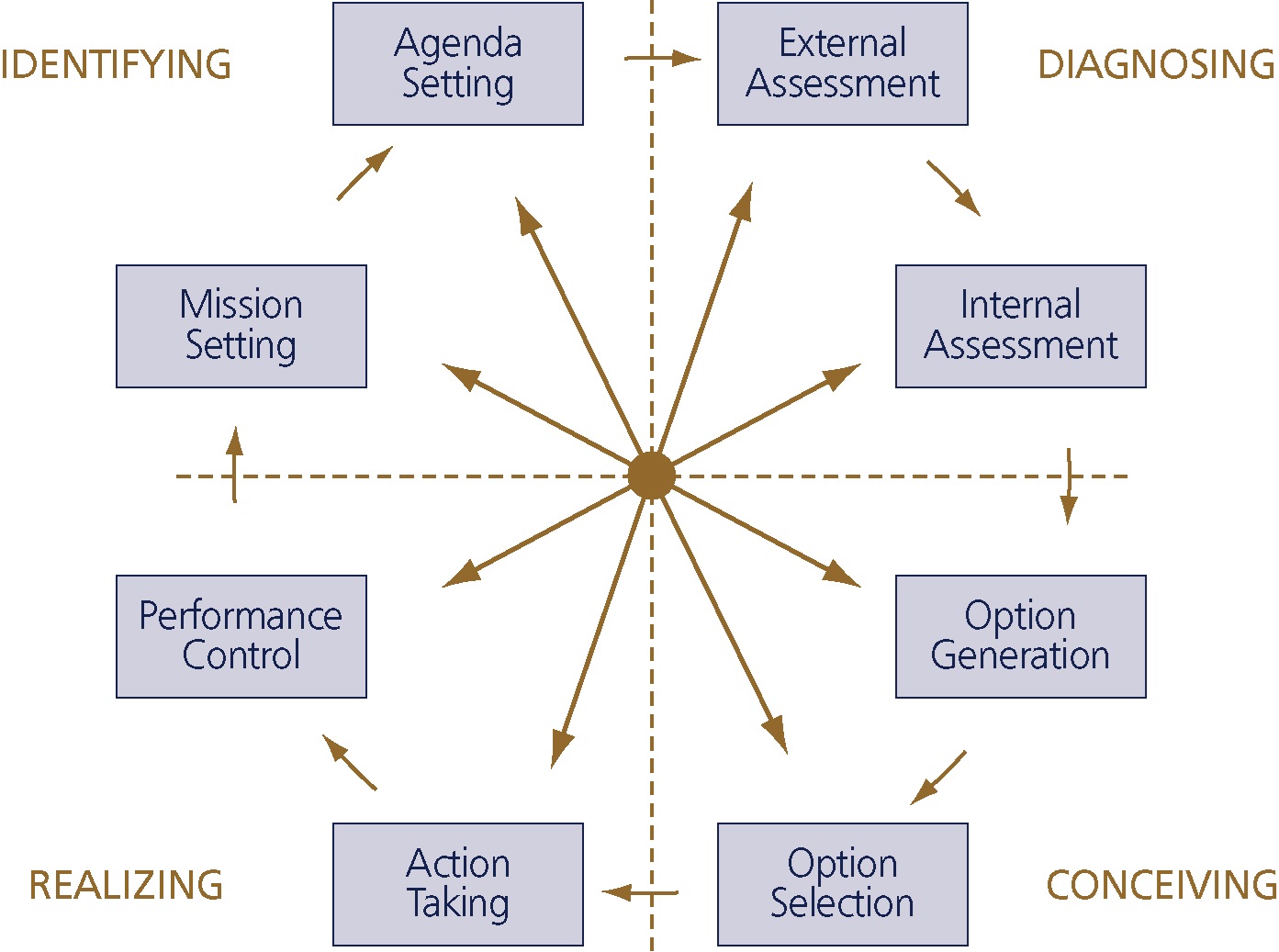

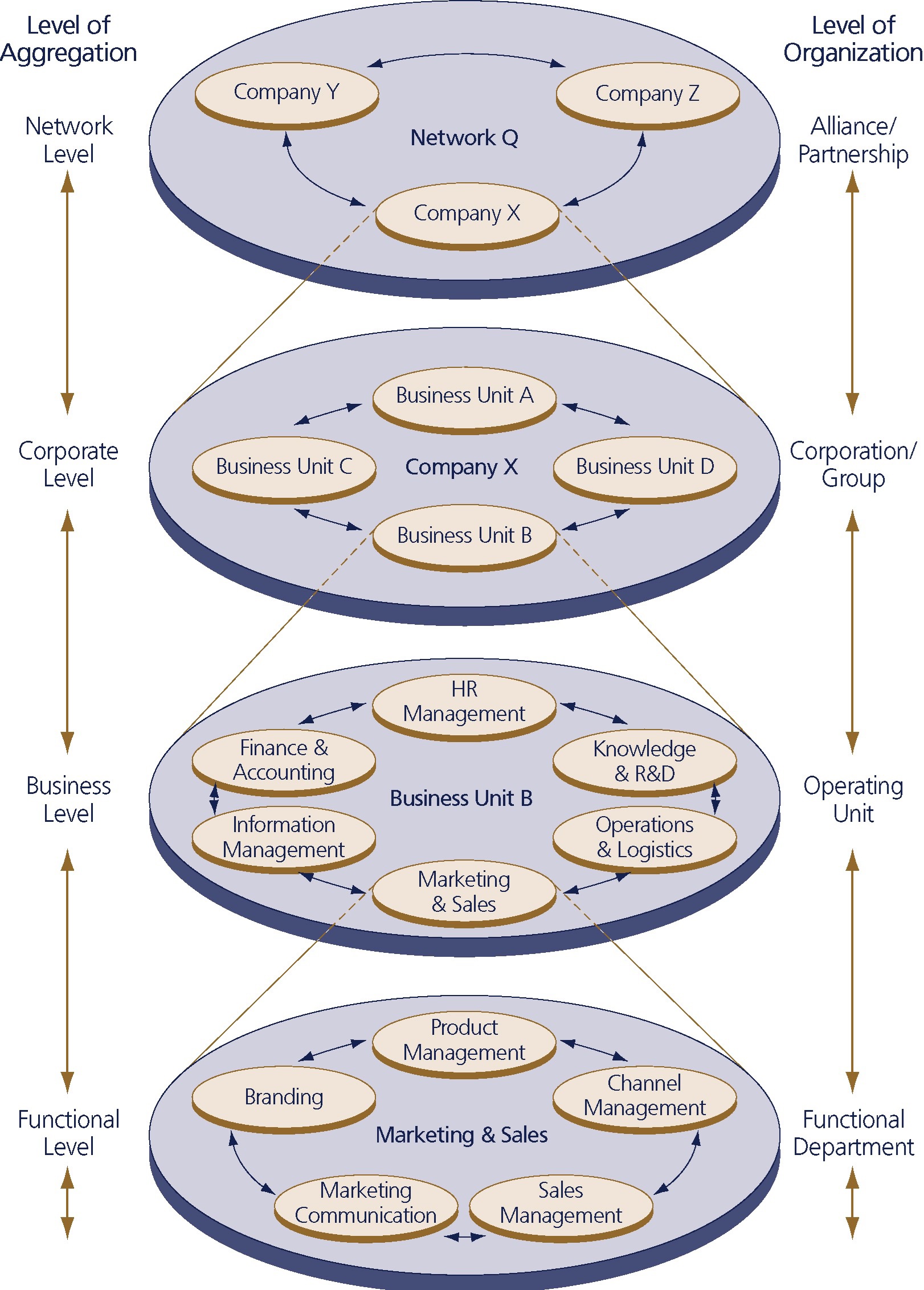

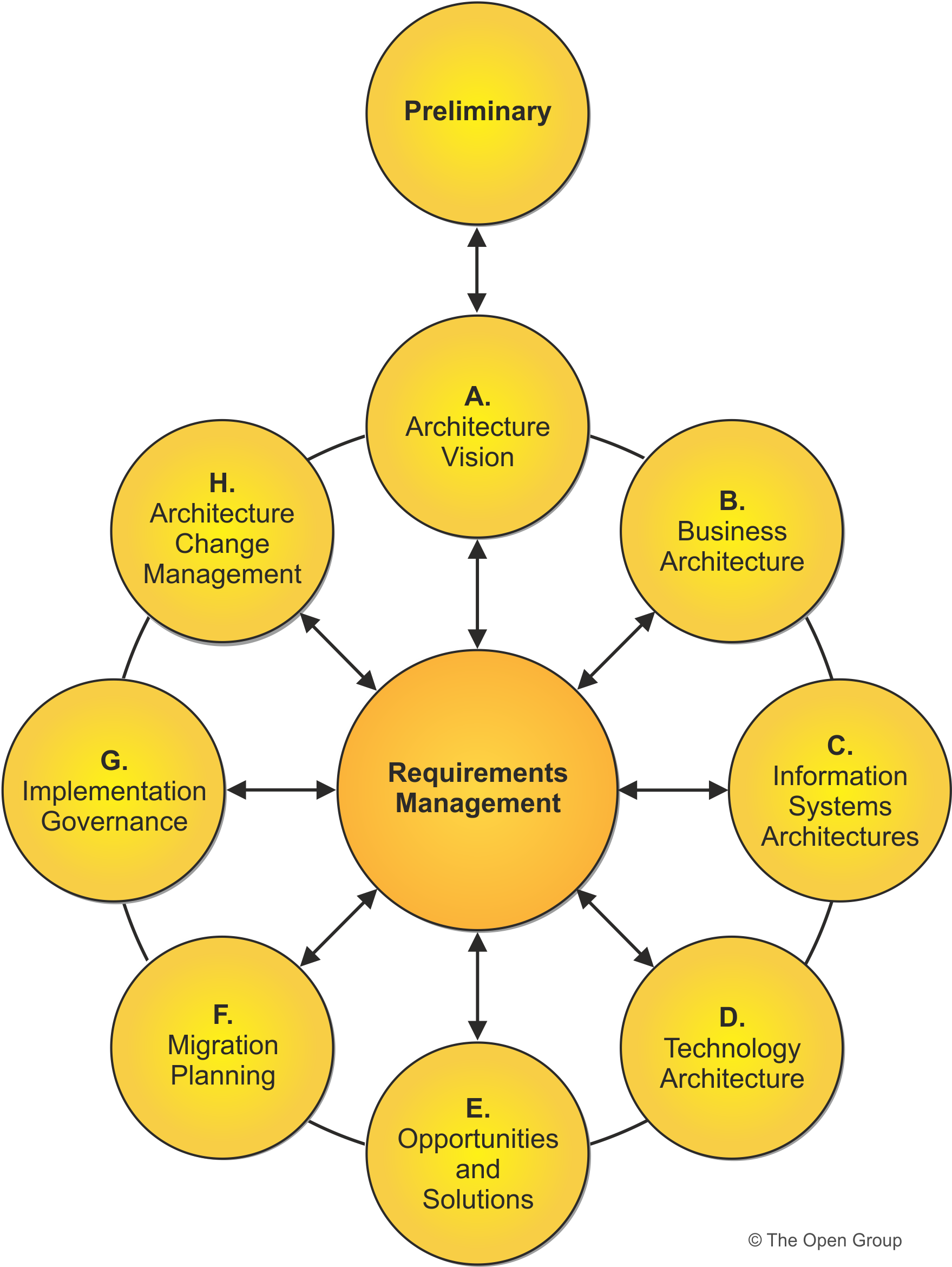

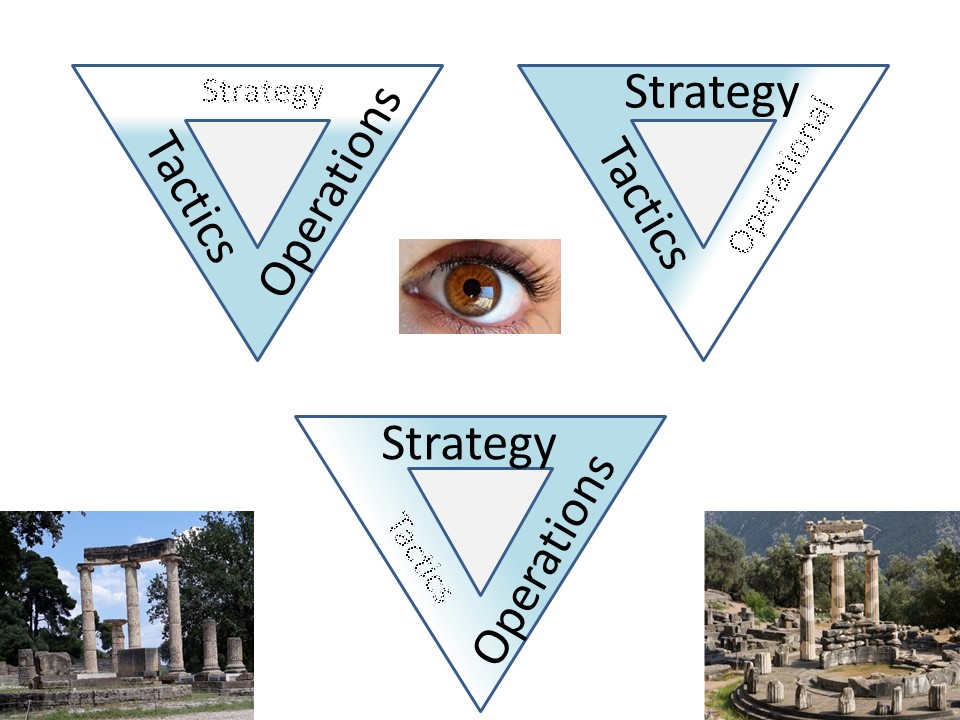

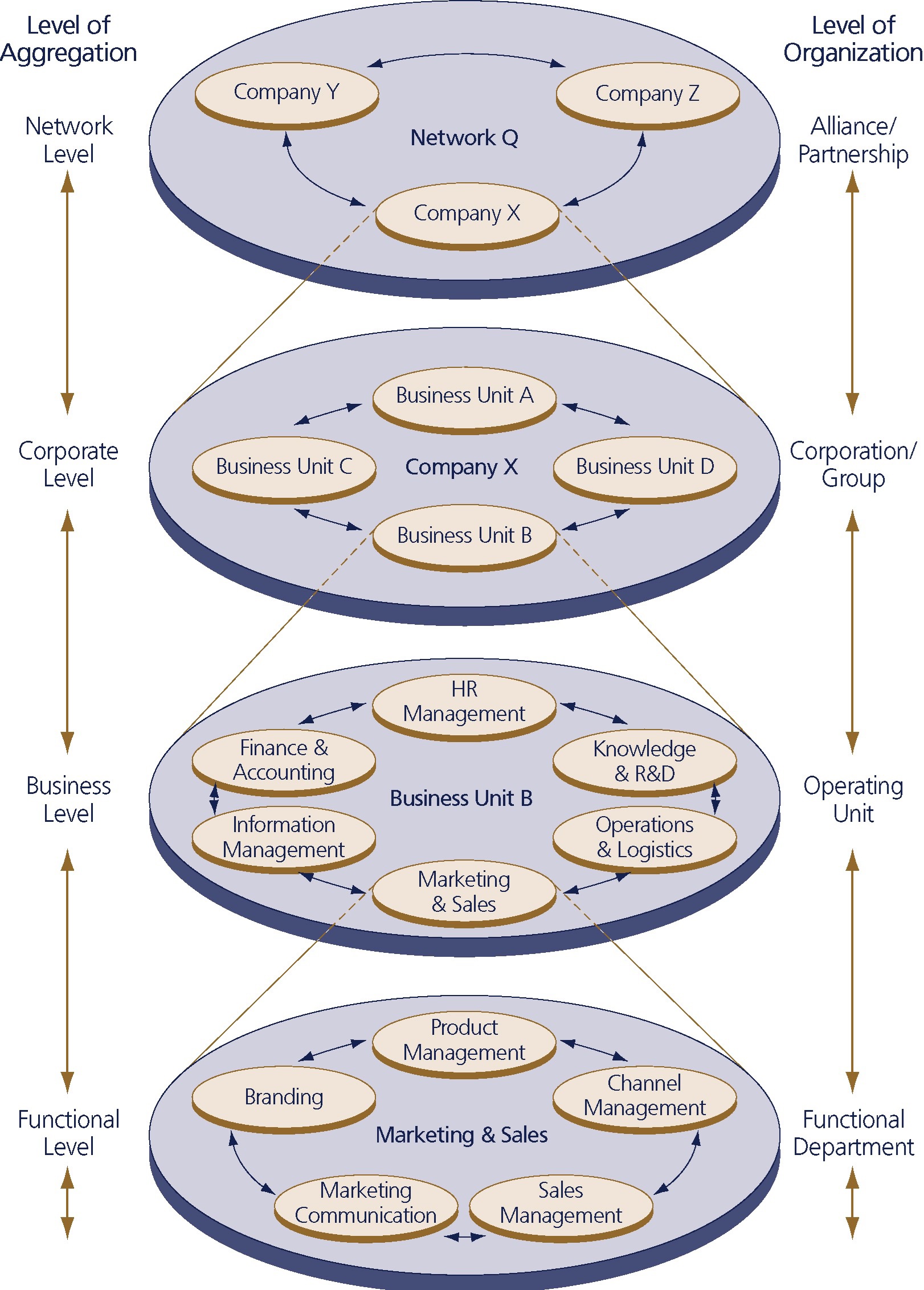

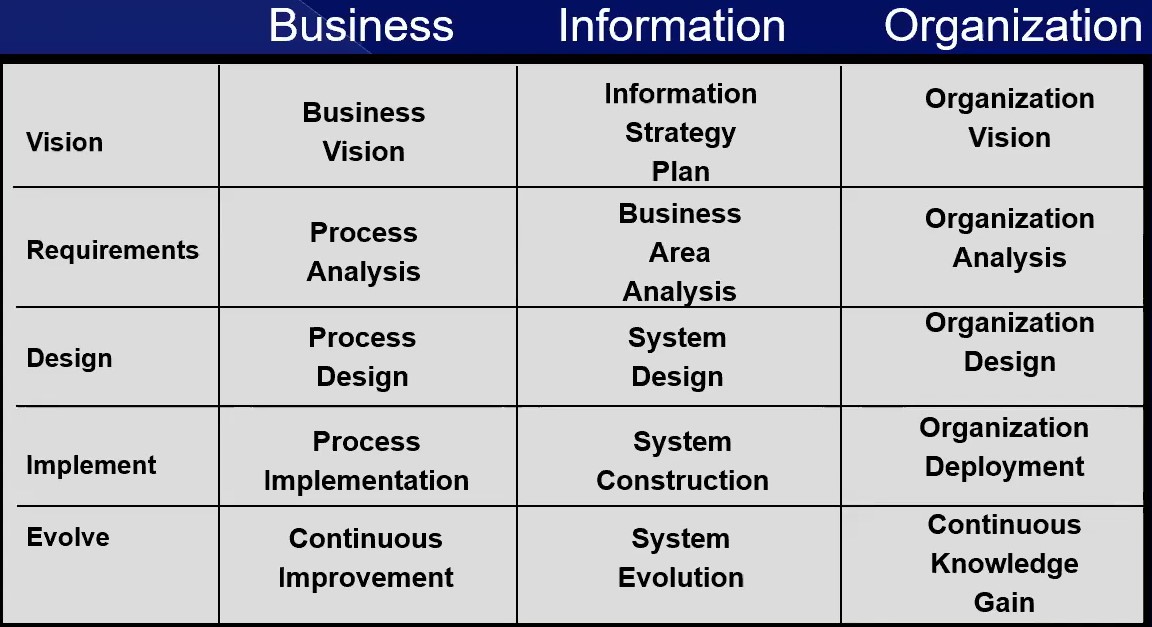

⚖ M-1.3.4 Strategy Levels in the organisations

Building a hierarchical strategy

Why Most Strategies Fail

😉 The Fundamental Questions You Need to Answer.

Your strategy discussions need to hit on these four levels:

- Ecosystem strategy

With whom do you compete? With whom do you collaborate?

- Corporate strategy

Which businesses should you be in? Do you diversify to spread risk, or go all-in on integrated synergies?

- Business strategy

How do you compete in your chosen markets? Identify your customers, define your value proposition, nail your business model, figure out how you'll make money.

- Functional strategy

HR, IT, Sales, Marketing, Operations: how do these functions contribute to the overall strategy? Analyze their activities, focus areas, and challenges to ensure they align with the overall strategy.

Forget the fluff.

Answer these questions with precision and clarity.

There is a presentation "what is strategy" (2009 - 2011).

The theory is generic.

In a figure,

see right side.

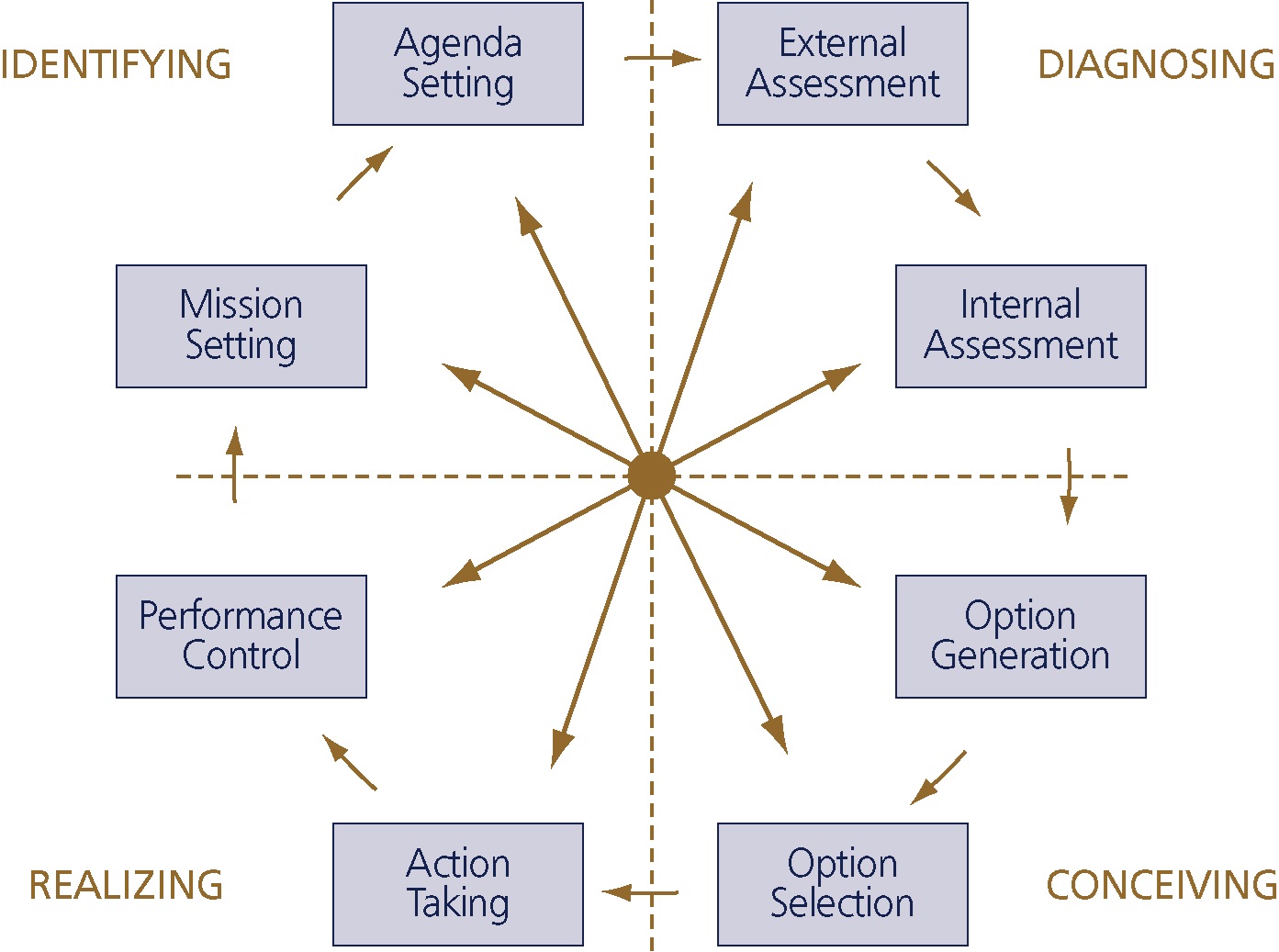

Building strategy in a cycle

Strategic Learning

Organizations create their futures through the strategies they pursue.

These strategies may be developed in a thoughtful and systematic way or allowed to emerge haphazardly in a series of random, ad hoc decisions made in response to daily pressures.

But one way or another, the strategy a company follows—that is, the choices it makes—determines its likely success.

Astonishingly, only a few executives are able to explain their company's strategy clearly and compellingly.

A successful strategy is not just a matter of open-ended choice-making.

It is choice-making in service of answering some specific and very important questions.

The answers to these questions will determine your destiny.

The cycle is the same as the PDCA and SIAR cycle, only the orientation is different.

Even the different contexts for the diagonals and for the horizontal and vertical axes are there.

- materialised: Focus (define vision), Align (input) , Implement, Learn (situation)

- change: Insight implications, proposition priorities, Action plan, learning loop

Strategy is a process, not a playbook (A. Nesbitt 2024)

Willie Pietersen pragmatically describes strategy as a process and tool to help us build and lead adaptive organizations.

While the differences are subtle, it's important to remember that significant differences originate in small things.

But what resonated with me was the words he used to connect the elements.

- Insight, not data, drives strategic choice.

- A winning proposition, not just a value proposition, drives action.

- An action plan, not a strategic plan, drives execution

- A learning loop, not just feedback, drives learning.

A generic note:

Consultants love their concepts (the Tooligans) more than the living biotope they work for.

M-1.4 Culture building people

Managing the workforce in any non-trivial construction is moving to the edges.

The cultural changes are:

- Respect for people, learning investments in staff

- Accepting uncertainties and imperfections

- Trusting the workforce while also staying well informed

Non-trivial means it will be repeated for improved positions.

To manage the workforce in processes, information is needed to understand what is going on.

Without knowing the situation or direction there is no hope of reaching a destination through improvements.

⚖ M-1.4.1 Understanding, awareness of the situation

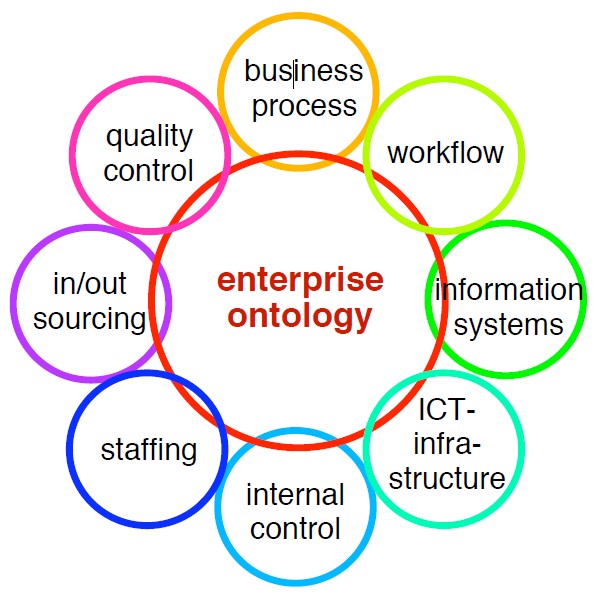

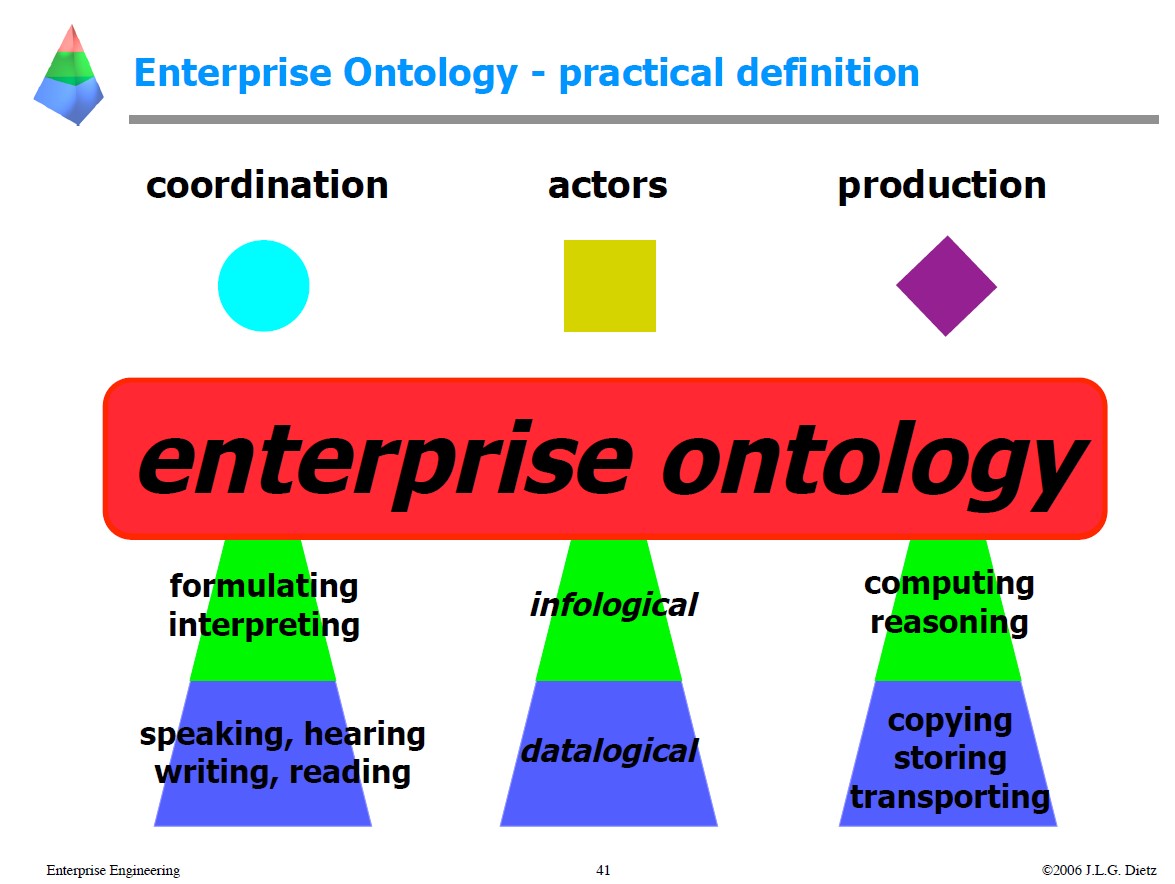

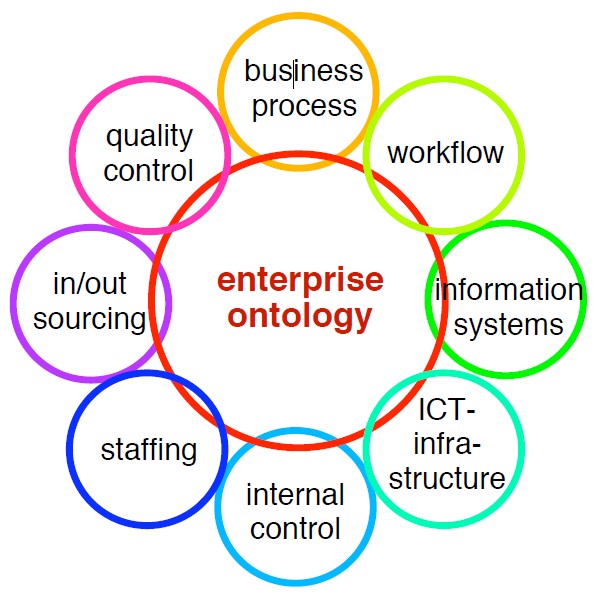

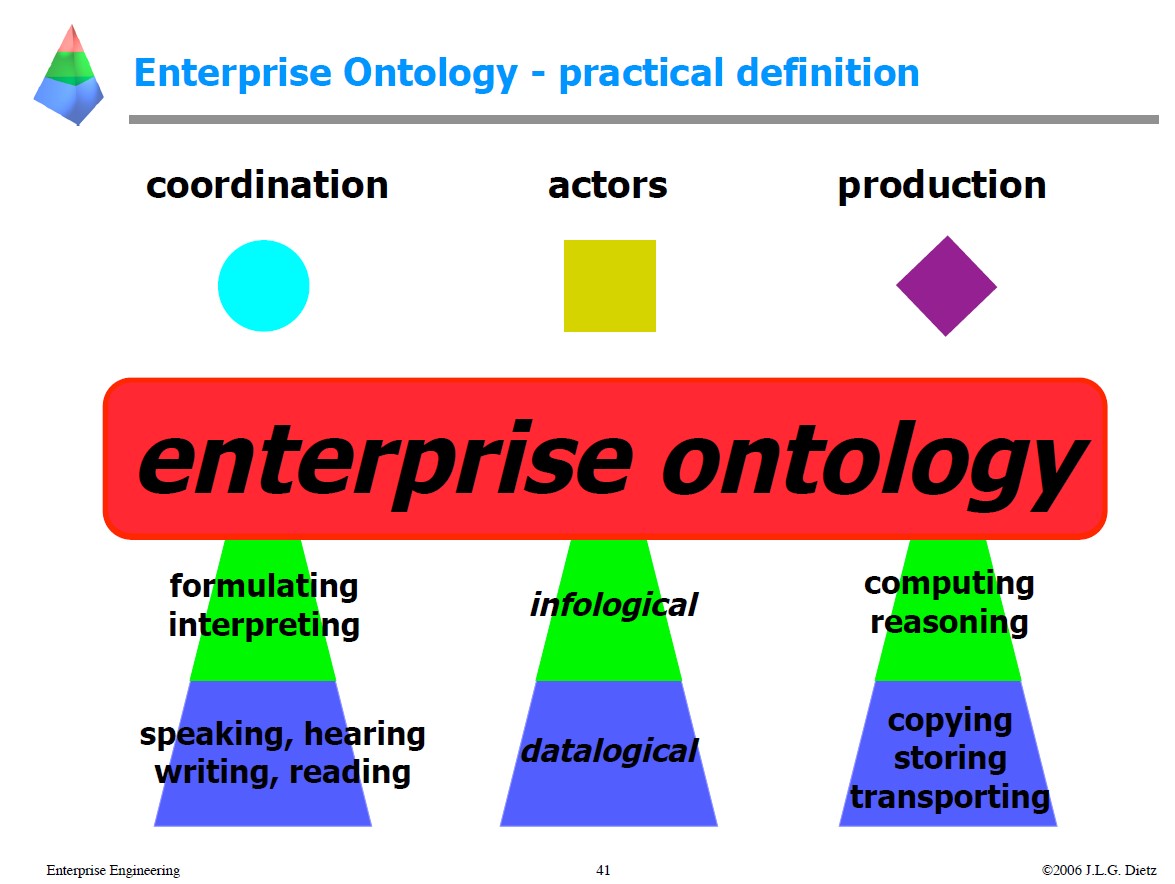

Enterprise Ontology

Enterprise Engineering Enterprise Ontology (2006 J Dietz)

The context of "Enterprise Ontology" goes far beyond just "data - information": it covers most aspects of an enterprise or organisation.

Langefors articulated the concern for the content of information, on top of its form ...

... we need to articulate the concern for the intention of information on top of its content, and develop the ontological view on enterprise.

This separation of intention and content will create a new field "enterprise engineering" and make that intellectually manageable.

The evolutionary levels:

- 1st wave: Data Systems Engineering (datalogical view on enterprise)

- 2nd wave: Information Systems Engineering (infological view on enterprise)

- 3rd wave: Enterprise Engineering (ontological view on enterprise)

😱 The current state of the art is: everything is data, not even maturity level 1.

Conclusions in the paper are:

- Enterprise Ontology reveals the essence of an enterprise in a comprehensive, coherent, consistent, and concise way, while reducing complexity by well over 90%.

- Enterprise Architecture guides the (re)design and (re)engineering of an enterprise such that its operation is compliant with its mission and strategy, and with all other laws and regulations.

- The new point of view of EE is that enterprises are purposefully designed and engineered social systems.

The reference to a social system states that most issues in information processing are organisational in nature, not technical.

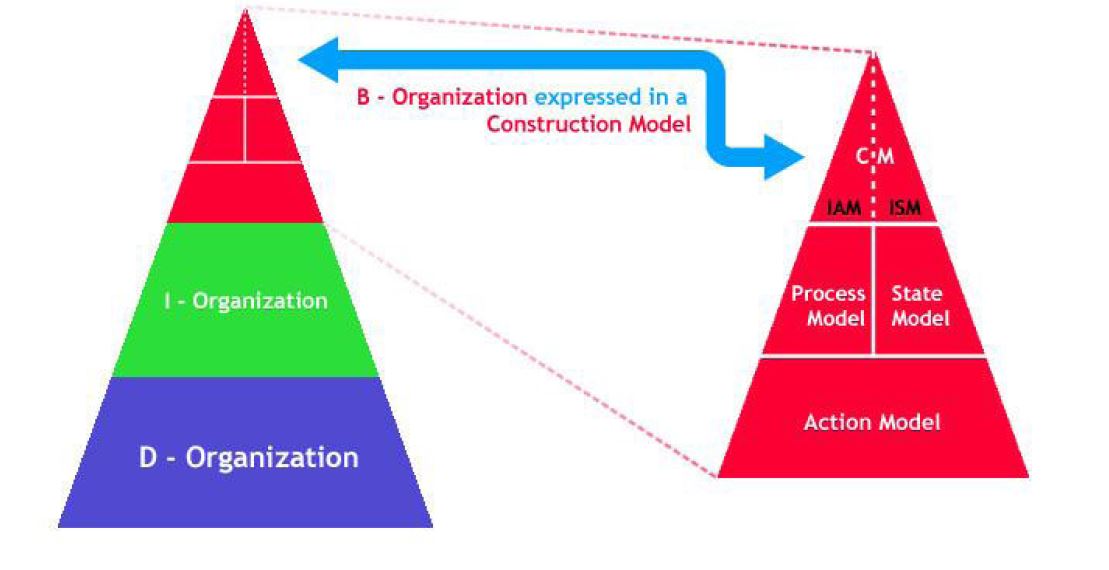

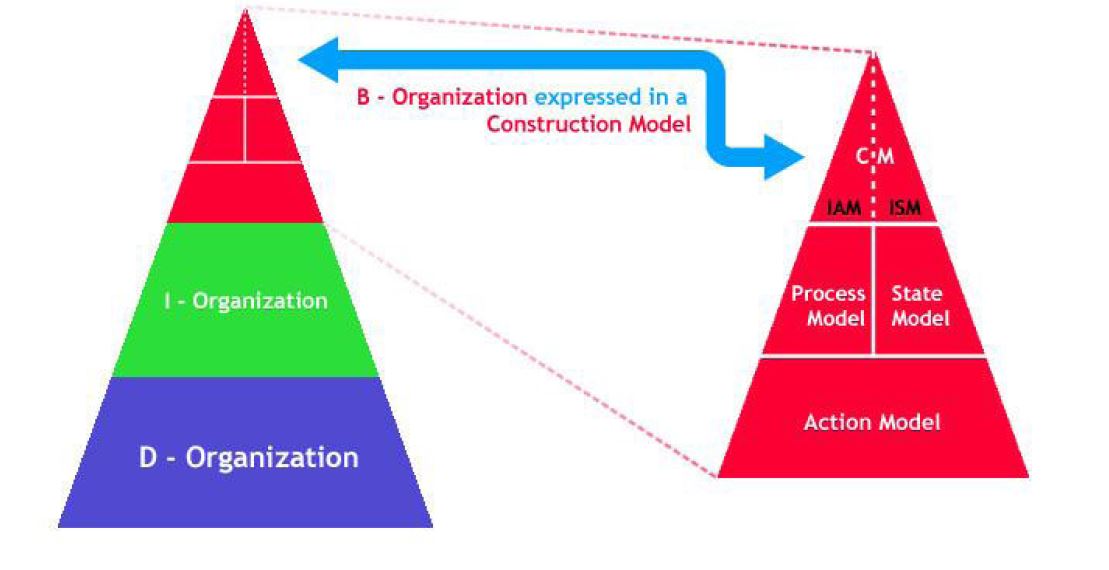

Separations of concerns.

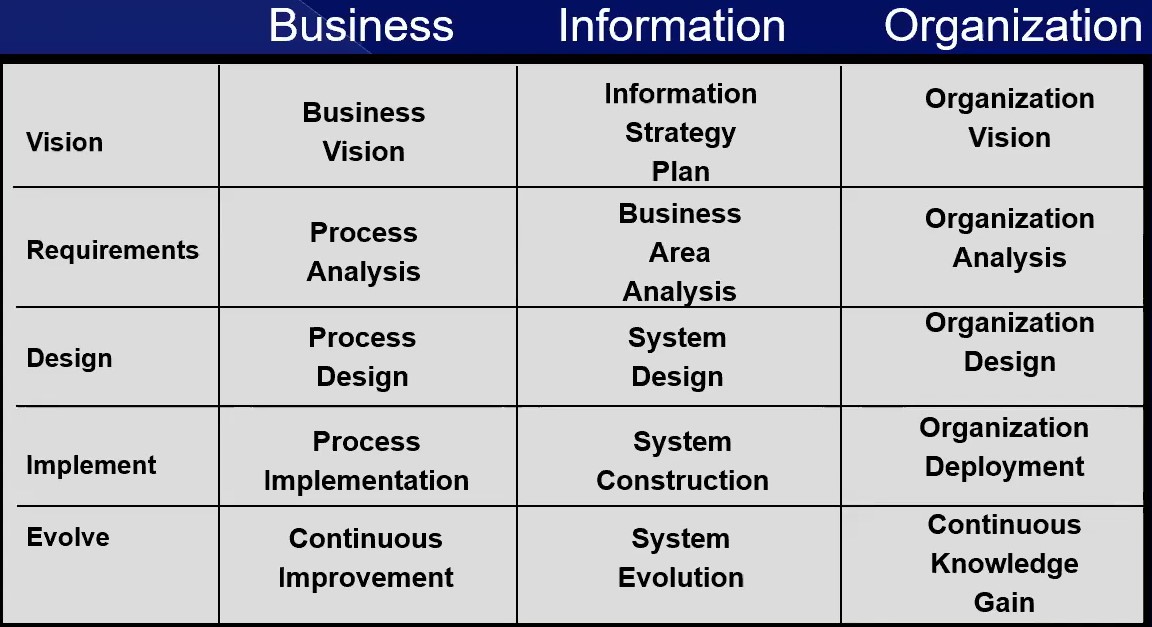

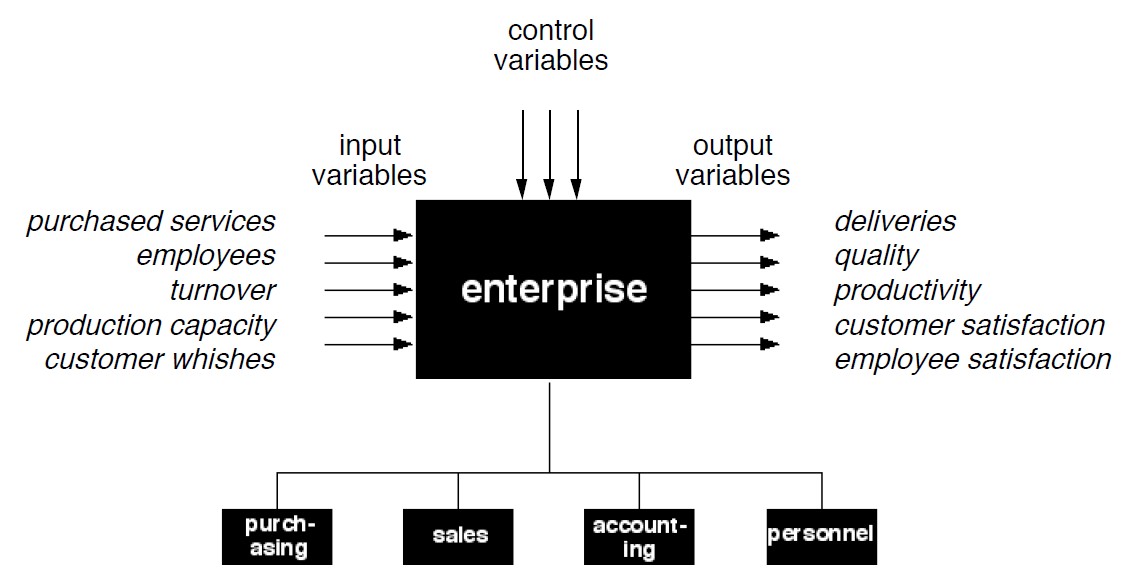

The figure shows a good way in separation of concerns with the focus on the core business.

There are several layers of processing; the B-organisation is detailed:

- Action model

- Process model, State model

- splits in UAM ISM C M (?)

However, the I-organisation and the D-organisation do not have good descriptions.

Ways in separation of concerns.

How the thinking developed to achieve this is not clear, but in a document dated 2006 that gap of information is disclosed.

The separation of concerns is interesting, although there is no clear definition of what those concerns are.

Three siloes:

- coordination (structure),

- actor (people), and

- production (processes).

What the ontology areas are from the figure:

| | Coordination | Actors | Production |
| --- | --- | --- | --- |
| Strategy | C&C | Managing ICT | floor product owner? |
| Tactical | formulating, interpreting | Infological | computing, reasoning |
| Operational | speaking, hearing, writing, reading | Datalogical | copying, storing, transporting |

⚖ M-1.4.2 Having respect for people

Self organising teams

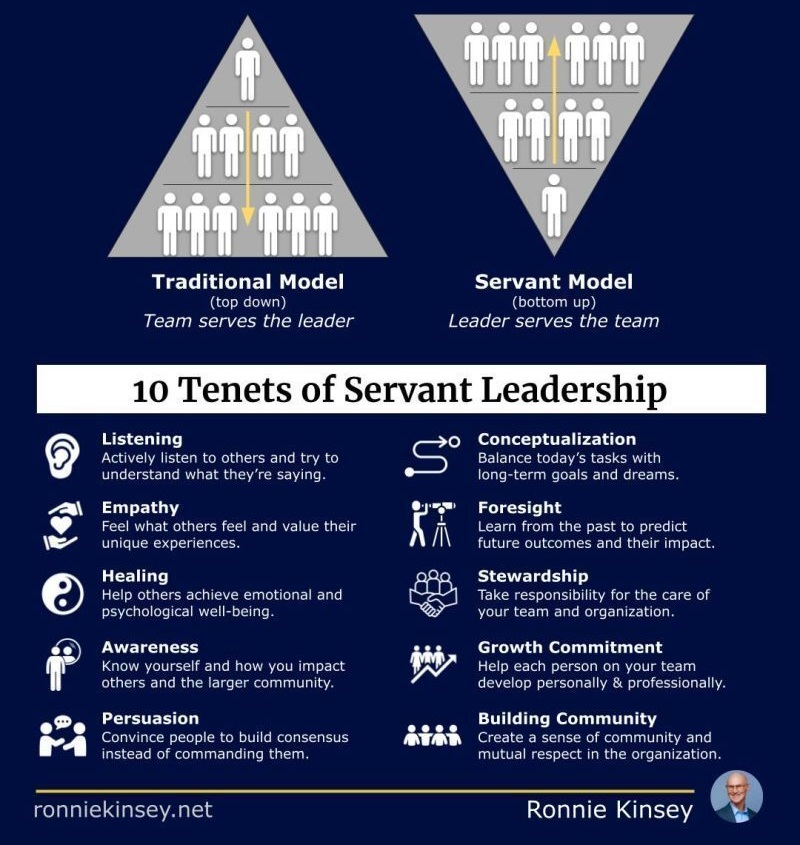

❸ In line with

Bring Power to the Edge (John J. Sviokla, HBR 2009)

The four fundamental principles of the agile, "edge-based" organization are:

- situational awareness,

- skills,

- values, and

- decision rights.

...

Unfortunately, despite the fact that both our governmental and commercial organizations are also facing a more dynamic and volatile environment, in the main our approach has been to create more monolithic, centralized institutions. ...

What we need is a modular, self-synchronizing system to deal with crises at the edges as they happen. ...

Due to our ruthless quest for efficiency we have squished out many of the buffers that could help blunt the propagation of bad effects on food, assets, and other supplies.

We don't notice if we have gone too far until we really need those buffers. ...

My worry is that in our exurban-super-individualistic-iPod-listening-video-game-playing culture, we have lost the local fabric of self-synchronization, and although the spirit may be there to help each other, the mechanisms to make that spirit effective in practice are lacking. The private sector has not done any better.

We need to reinvent what it means to put power to the edge both for our communities and our companies before the next major disruption hits.

🤔 The priorities are set by the enterprise, organisational missions not by technology.

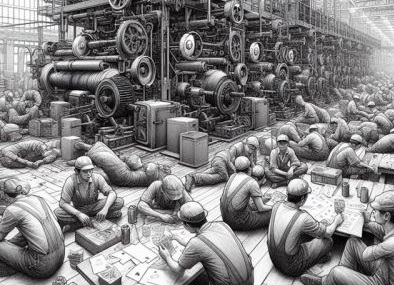

What is waste, value?

Can you see waste in your organization?

The reality is you cannot.

No one has any idea where an organization is performing non-value adding activities, and wasting capacity.

In small pockets we push for efficiency using mostly wrong metrics or cost as a goal.

In factories, if machines are broken down and people are sitting idle, we might see something is off

(Note: it's a different matter that running machines to full capacity is wrong. And even in factories invisible waste is everywhere).

So it is in offices, services, and software development companies.

We have waste everywhere:

- But waste is like water to a fish. Or air to humans.

- It's everywhere. But we feel it only in its absence.

In services and knowledge work, if we do not focus on removing waste, improving flow and delivering objective value to the customer, being busy becomes the measure of being value adding.

Who are we serving and what are we focusing on?

If people are not serving the customer and not focusing on delivering value to them, we will drive sub-optimal human behavior in our teams and employees.

Being busy:

- Do you know what value is?

- Do you know how and where it is delivered?

If everybody is busy all the time, calendars are double/ triple booked, and people are always rushing, most likely you cannot answer the above two questions.

😱 Status: statements about waste but no understanding of the state of value streams.

⚖ M-1.4.3 Understanding the strategy the goal

Value streams, what it is about

USM portal

What is the common denominator?

ChatGPT, when asked to define it, produces this:

A value stream can be defined as the sequence of activities or steps required to deliver a product, service, or solution to customers while adding value and minimizing waste.

It represents the end-to-end flow of processes, information, and resources needed to create and deliver the desired outcome.

All quotes in the previous sections acknowledge indeed that a value stream interfaces with the customer.

It starts and ends with the customer.

But then what is the difference with all the other things we have studied for over 100 years in the customer-provider relationship?

Were those not interactions that started with a customer request?

And didn't we describe those in terms of the realization, up to and including the delivery of the requested thing to the customer?

A value stream is a term that has been around for a while, but it has remained somewhat of a mystery to many people.

The truth is that a value stream is simply a practical workflow that has been used for decades.

It is essentially a way of mapping out the journey of a product or service from its inception to its delivery to the customer.

The term "value stream" originated in the manufacturing industry, specifically within the Toyota Production System (TPS) in the mid-1900s (as Michel Baudin documented).

It refers to the end-to-end process of creating a product or service, from raw materials to delivery to the customer, with an emphasis on identifying and eliminating waste.

The concept of value stream mapping was later developed as a visual tool to help identify and analyze the flow of materials and information through a process, enabling organizations to improve efficiency and reduce costs.

Value stream mapping has since been adopted by various industries, including healthcare and software development.

😱 Status: statements about processes and services but no understanding of value streams.

The escape is jumping on "the new shiny thing". No readiness for any maturity level (zero).

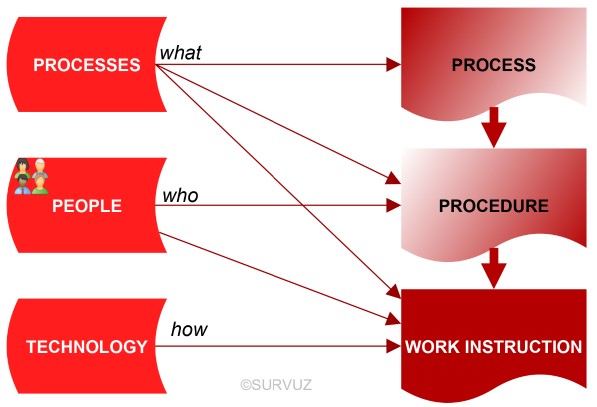

The USM Service, processes

Process model

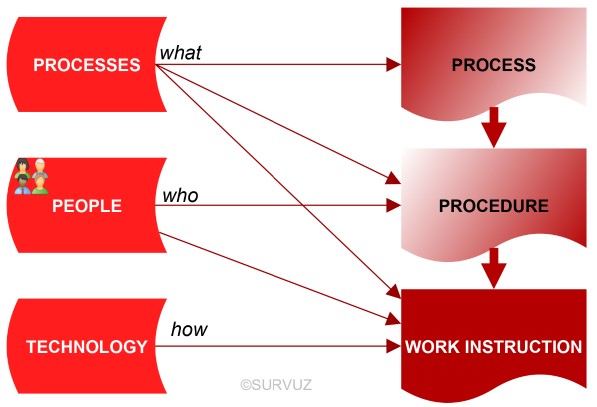

If we test the 'processes' from all current frameworks, standards, and reference architectures against these 10 requirements, it can be demonstrated that they all describe combinations of people, process and technology: the what, the who and the how.

Therefore, they all fail the very first of the 10 requirements.

They actually describe practices, and practices are not processes: practices are derived from processes by adding the who and the how to the what. ...

The difference between:

⚖ processes

⚙ Procedures practices

⚒ Work instructions

The detailed differences across these aspects are clearer in a figure (see left side).

Confusion:

Most "processes" are internal affairs for the service provider, and as such, they are only part of the customer-facing processes.

🤔 Using this we can focus on avoiding the confusion.

What is not core value, overengineering

The Disaster of Naming Value Streams.

By definition:

- Value streams deliver direct value to the (organization's) customer

- That leads to value ($'s) flowing back to the organization

- There is only one judge of value: the customer. The one who pays for the value

Because of the above:

- A value stream is always triggered by the customer

- A value stream ends with the fulfillment of value to the customer

- A value stream always has an overlaying customer journey to it

Everything else, either:

- Supports one or more value streams, in which case it should be made as efficient as possible, or

- Is extra work, in which case it should be eliminated or justified and then minimized

A value stream is one of the most important components of an organization.

Being labeled as a value stream is not an ego play.

You don't need to be called a value stream:

- to think of and improve flow in your process

- to think end-to-end, and not functionally

- to use concepts and practices of TPS (lean)

- to use measures like flow efficiency in the work that your team does

Disagreeing with the above is like saying, "I am not working in a value stream?

Well, then I cannot focus on the customer right now because I have a product prioritization meeting to go to."

Value stream delivery needs a high degree of customer orientation and a relentless focus on execution

That is why, value streams are only customer value streams.

Focus on them can make an organization work like an elegant symphony or a Formula 1 team

Let's not create clutter and call anything else a value stream

That will just diffuse an already minimal focus on the customer

And it will be a disaster because it will ruin a simple and perfectly useful concept of value stream management

😉 Using this we can focus on the core values.
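The naming rule quoted above, together with the flow-efficiency measure it mentions, can be sketched in a few lines of Python. This is only an illustration: the `Activity` record, its field names and the `classify` labels are hypothetical, and the flow-efficiency formula used here (value-adding time divided by total lead time) is the commonly used definition, not something spelled out in the quoted post.

```python
from dataclasses import dataclass


@dataclass
class Activity:
    """Hypothetical record describing one stream of work in an organisation."""
    name: str
    triggered_by_customer: bool
    ends_with_customer_fulfilment: bool
    supports_value_stream: bool = False


def classify(activity: Activity) -> str:
    """Apply the rule: customer-triggered and customer-fulfilling, else support or extra work."""
    if activity.triggered_by_customer and activity.ends_with_customer_fulfilment:
        return "value stream"
    if activity.supports_value_stream:
        return "support: make as efficient as possible"
    return "extra work: eliminate, or justify and minimise"


def flow_efficiency(value_adding_hours: float, lead_time_hours: float) -> float:
    """Share of the total lead time in which value is actually added (assumed definition)."""
    if lead_time_hours <= 0:
        raise ValueError("lead time must be positive")
    return value_adding_hours / lead_time_hours


if __name__ == "__main__":
    for act in (
        Activity("order to delivery", True, True),
        Activity("payroll", False, False, supports_value_stream=True),
        Activity("internal status report", False, False),
    ):
        print(f"{act.name}: {classify(act)}")
    print(f"flow efficiency: {flow_efficiency(4, 40):.0%}")
```

Note that any team can compute `flow_efficiency` for its own work, which matches the point in the quote: you do not need to be called a value stream to measure and improve flow.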

Everybody his own strategy

True North

When every team starts to make their True North ...

You end up, in the same organization, with:

- True North

- True North East

- True North North West

- True East

- True South East

- True South West

- True South West West

- True West

- True Up

- True Sideways

- True Parallel

- True Rear

- True Left

- True Inward

- True Somewhere

- True Oblique

- True Counterclockwise

- True Downstream

- True Right Here

- True Nowhere

Just because there is a nice-looking template, does not mean it is the right template to use.

I have seen enough product teams that have their true-north, strategy, and roadmap, with no or superficial alignment to the organizational strategy.

There is only one organizational strategy that every team, function, and person aligns with and delivers on.

There is no independent product strategy, IT strategy, Ops strategy, etc.

Organizational strategy is not a roll-up of functional, product, and country strategies.

There is an organizational True North. Everyone aligns with that.

There is no further directional clarity that every team needs to create.

There are only organizational customers, that everyone serves.

There are no internal customers, interim customers, or customers of customers.

Magnetic fields are invisible, but they can be observed through the motion of iron filings when in the presence of a magnet. The lines formed by the iron filings illustrate the magnetic field lines of force

That magnet for an organization is customer value streams.

Create alignment, and focus by organizing around customer value streams and managing through customer value streams.

😉 Using this we can focus on avoiding the confusion.

To go to a destination you must know true North or true South, where you are, what the destination is and what the possible routes are.

⚖ M-1.4.4 Strategy execution with insight

Do it correctly the first time

Hit and run? (A. Nesbitt)

I used to buy into the common wisdom -- that strategy is straightforward, and execution is where you hit the wall.

However, that perspective was completely overhauled when I had the chance to lead a strategy engagement for a major Japanese automaker.

What I expected to be a three-month sprint turned into a nine-month marathon.

Yet, when it was time to hit the ground running, they were off like a shot, smooth and efficient, akin to a finely tuned orchestra hitting every note.

An American CEO, one of the first to steer the US division of this automaker, explained it to me this way:

- In the Western business hustle, it's often a race to action: decide now, figure out the fallout later. ...

- The Japanese method works differently.

It's a broader, deeper dive into planning, weaving strategy and execution together.

It's not just about making a list of to-dos;

- it's about shifting 'execution' issues up front,

- tackling uncertainties head-on,

- resolving conflicts early,

- and aligning the team from the get-go.

- ... figure out the fallout later.

But this 'Ready Fire Aim' approach can lead to chaos and misfires in execution.

It's like knowing where you want to go but running with your shoelaces untied.

In most Western companies, leadership has little patience for such thoughtful deliberation and is often critical of people who do.

The cost of that impatience is high; tough, sluggish, and costly execution phases.

In short, if strategy execution feels like a Herculean task, it's likely because your strategy and plan haven't been fully baked.

🤔 Using this we can focus on how to avoid the effects of cultural behaviour.

Source of the images, allaboutlean.com C.Roser:

Do it correctly by design

Dark Lean, Dark scrum, Dark Taylorism

In order to do good lean, we need to understand why some lean projects are bad.

Or, in order for practitioners to reach the light side of lean, they need to understand more about “Dark Lean.”

Just like lean, Scrum, done correctly, can yield quite some benefits in project management.

Also just like lean, Scrum can be misused.

How good intentions turn into bad attitudes:

- Dark Scrum:

is the use of Scrum primarily to put pressure on the programmers or developers.

Rather than improving the product or software development process, (Dark) Scrum is used to push for deadlines and to micromanage the developers and programmers.

Rather than quality, the focus is solely on cost and time.

Anything that can be counted counts (i.e., cost and time), and anything that can’t does not count (quality, creativity, motivation…).

- Dark Lean:

A very similar situation can happen with lean manufacturing.

The pretense of lean can be misused solely to push on cost and time.

Pressure is piled on the worker to work faster and better, without actually improving their workplace.

Lean is used as an excuse to fire people.

Rather than improving the processes by reducing waste, unevenness, and overburden (muda, mura, muri), the focus is mostly on cost.

Avoiding the dark approach, avoiding the bad experiences of dark approaches.

Similarly, nowadays you can find quite a few people who see lean as a modern form of Taylorism, used to exploit the workers.

I have been to quite a few plants where the word “lean” was burned and refused by operators based on prior experience.

Changing the mindset of the operators toward the benefits of lean is hard. Hence, try to avoid this Dark Lean as much as you can, and bring the people to the light.

Sorry for sounding like a preacher…

M-1.5 Sound underpinned theory, foundation

Knowing the position and situation comes from observing several types of associated information.

These are:

- Art of the role by observed input and results

- Art of the role by follow up interactions

- Kind of task in the process by role

Non-trivial means it will be repeated for improved positions.

Command & control needs information for understanding what is going on.

Without knowing the situation or direction there is no hope of reaching a destination through improvements.

⚖ M-1.5.1 Information processes basic lean requirements

Understanding, misunderstanding lean

In the understandable logical lean 9-plane proposal:

- In the centre there is a goal; there are also threats

- Three pillars: a/ people, b/ technology, c/ process

- At each pillar simplicity is at the bottom, master of art at the top

- Where to start?

At the bottom right side. Something must be measurable at all levels of the goal (closed loops) if you want to see the effect of the intended improvements.

- How to proceed?

Go clockwise around in a cycle and verify continuously what is going on.

- From right to left at the bottom (pull)

- Followed by left to right at the top (push)

In a figure,

see right side.

⚖ M-1.5.2 Ideas from an anatomy, routes by maps, usefulness

Awareness of positions

Leaving the industrial age and entering the information age means expecting more power at the edges, which requires awareness of position and situation by stakeholders.

- 💡👁❗ Geo-mapped roles idea: defining the organisation

Goal: helping organisations in the mapping of what they are doing using the generic cubicle.

Activity: making the high-level abstraction of the cubicle more relevant to their business world. Customization for position awareness is needed.

- 💡👁❗ persons ⇄ geo-maps idea: organise who is who in geo-mapped roles.

Goal: helping organisations in organising and clearing up accountabilities and responsibilities for persons and roles using the customized cubicle.

Activity: Using the customized cubicle with names helps to see the need for understanding and recognizing the logical counterparts.

A person can be named at many roles. In very big organisations this will hardly be the case; at start-ups with very few persons the same person, the same name, will pop up everywhere.

These two ideas are understandable business cases, achievable opportunities.
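The persons ⇄ roles idea can be sketched as a small lookup structure. The role labels and names below are hypothetical placeholders, not taken from the cubicle itself; the sketch only shows how naming persons at roles makes it visible who holds many roles and which roles are still unowned.

```python
from collections import defaultdict

# Hypothetical role-to-person assignment for a small start-up.
role_assignments = {
    "strategy / coordination": ["Ann"],
    "strategy / production": ["Ann"],
    "tactical / actors": ["Bob"],
    "operational / production": ["Ann", "Bob"],
}


def roles_per_person(assignments):
    """Invert the role -> persons mapping into person -> roles."""
    result = defaultdict(list)
    for role, persons in assignments.items():
        for person in persons:
            result[person].append(role)
    return dict(result)


def unassigned_roles(all_roles, assignments):
    """Return the roles that nobody has been named at yet."""
    return [role for role in all_roles if not assignments.get(role)]


if __name__ == "__main__":
    for person, roles in roles_per_person(role_assignments).items():
        print(f"{person} holds {len(roles)} role(s): {', '.join(roles)}")
    wanted = list(role_assignments) + ["tactical / coordination"]
    print("unowned:", unassigned_roles(wanted, role_assignments))
```

In a start-up the same name shows up at most roles, while in a large organisation the inverted mapping would mostly contain one role per person.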

Awareness of the organisation system and the components

Awareness of position and situation by stakeholders and having a power for decision at the edges opens new challenges for helping organisations.

In the information age there is a shift in powers.

The communication required to define and share expectations and goals clearly is not easy.

- 💡👁❗ Communication flows idea: useful interactions, understanding.

Goal: helping organisations in defining master data, the mapping of a shared vocabulary and language in the context of the customized cubicle.

Activity: There are preferred ways in how to communicate with logical counterparts and what language and/or visuals are positive for achieving effective results.

- 💡👁❗ Profitability flows idea: insight for value streams, theory of constraints.

Goal: helping organisations in a mindset change to accept the new change approach.

Activity: Constraints, waste, missing respect for people and insufficient information from closed loops are improvement options and issues to discuss and solve.

These two ideas are understandable business cases but carry uncertainties because of the impact of the required changes in mindset. Unlearning and relearning is hard.

Changes at the floor level for processes

The 1/2 process floor is the operational value stream serviced by the foundation 0/1.

Making a change at the foundation for execution requires:

- Coordination in why and what to change.

- Coordination in how to change.

These are different steps in non-trivial systems where safety and continuity are important.

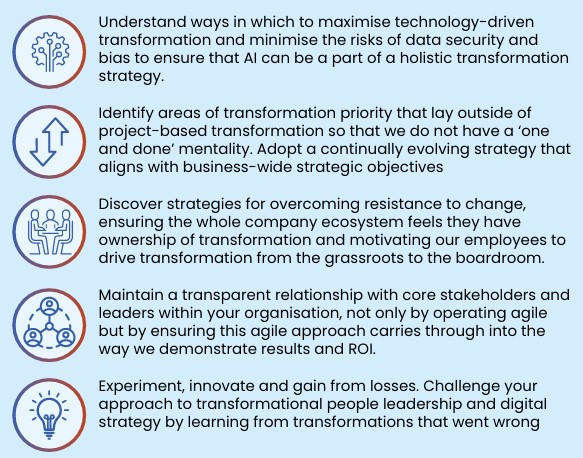

- 💡👁❗ Technology changes idea: improve functionality with profitability.

Goal: help in understanding the assembly line and how to optimize this.

Activity: Focusing on the operational assembly machines and the technology that is used.

That technology can be improved by changing the way it is used or by replacing it with other options.

- 💡👁❗ Safety changes idea: improve safety not hurting functionality.

Goal: good practice safety, cyber security, in a risk based value setting acknowledging mandatory legal obligations for the whole local organisational system.

Activity: The assembly part of operations uses machines and technology. Cyber security, the safety of using that technology, can be improved.

The assembly part is not the only part that needs to be safe, but the risks are very likely different.

Segmentation in the important lines in the customized cubicle should give help in strategic decisions.

These ideas are understandable for the business, but realising them leads to management challenges in more complex situations that need to be solved.

Opportunities for the methodology of doing changes, how managing products

Leaving the industrial age and going into the information age is a direction with an overwhelming number of products, and each of those products can be overwhelming in its number of components with specifications.

A system capable of managing all of that is a missing link. A start is describing what it should do.

- 💡👁❗ Portfolio registration idea: Have a portfolio with product specifications.

Goal: Help organisations in documenting and understanding the products in their portfolio by the specifications of the products.

Activity: Getting an inventory of the value streams and reverse engineer the product for understanding what the intentions and specifications are.

- 💡👁❗ Managed Portfolios idea: use a tool, product that manages the complete product lifecycle.

Goal: Help organisations managing the changing portfolio from suggestions, backlog, to requirements, to validations to specifications.

Activity: There is no product in the market for this.

A standard should be created for the product structure across the complete lifecycle, so that all activities are created in an easily understandable way while the needed documentation is created with minimal effort.
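As an illustration only, the lifecycle named above (suggestions, backlog, requirements, validations, specifications) can be sketched as a small state model. The class names `Stage` and `PortfolioItem`, the `advance` method, and the example item are assumptions made for this sketch; they do not describe an existing product or standard.

```python
from dataclasses import dataclass, field
from enum import IntEnum


class Stage(IntEnum):
    # Stage order follows the text: suggestion -> ... -> specification.
    SUGGESTION = 1
    BACKLOG = 2
    REQUIREMENT = 3
    VALIDATION = 4
    SPECIFICATION = 5


@dataclass
class PortfolioItem:
    # Hypothetical record for one product in the portfolio.
    name: str
    stage: Stage = Stage.SUGGESTION
    history: list = field(default_factory=list)

    def advance(self, note: str) -> Stage:
        """Move one stage forward and keep the note as documentation."""
        if self.stage is Stage.SPECIFICATION:
            raise ValueError(f"{self.name} is already fully specified")
        # Recording the note at each step is what keeps documentation cheap.
        self.history.append((self.stage.name, note))
        self.stage = Stage(self.stage + 1)
        return self.stage


item = PortfolioItem("customer onboarding")  # example name, not from the source
item.advance("accepted as a candidate")
item.advance("scoped with stakeholders")
print(item.stage.name, item.history)
```

The point of the sketch is that documentation is a by-product of each transition rather than a separate activity, which is the minimal-effort property asked for above.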

These ideas are goals organisations are searching for and have a desire for, but they are not available in an easily understandable way.

Everybody is getting lost in isolated, partial, siloed approaches.

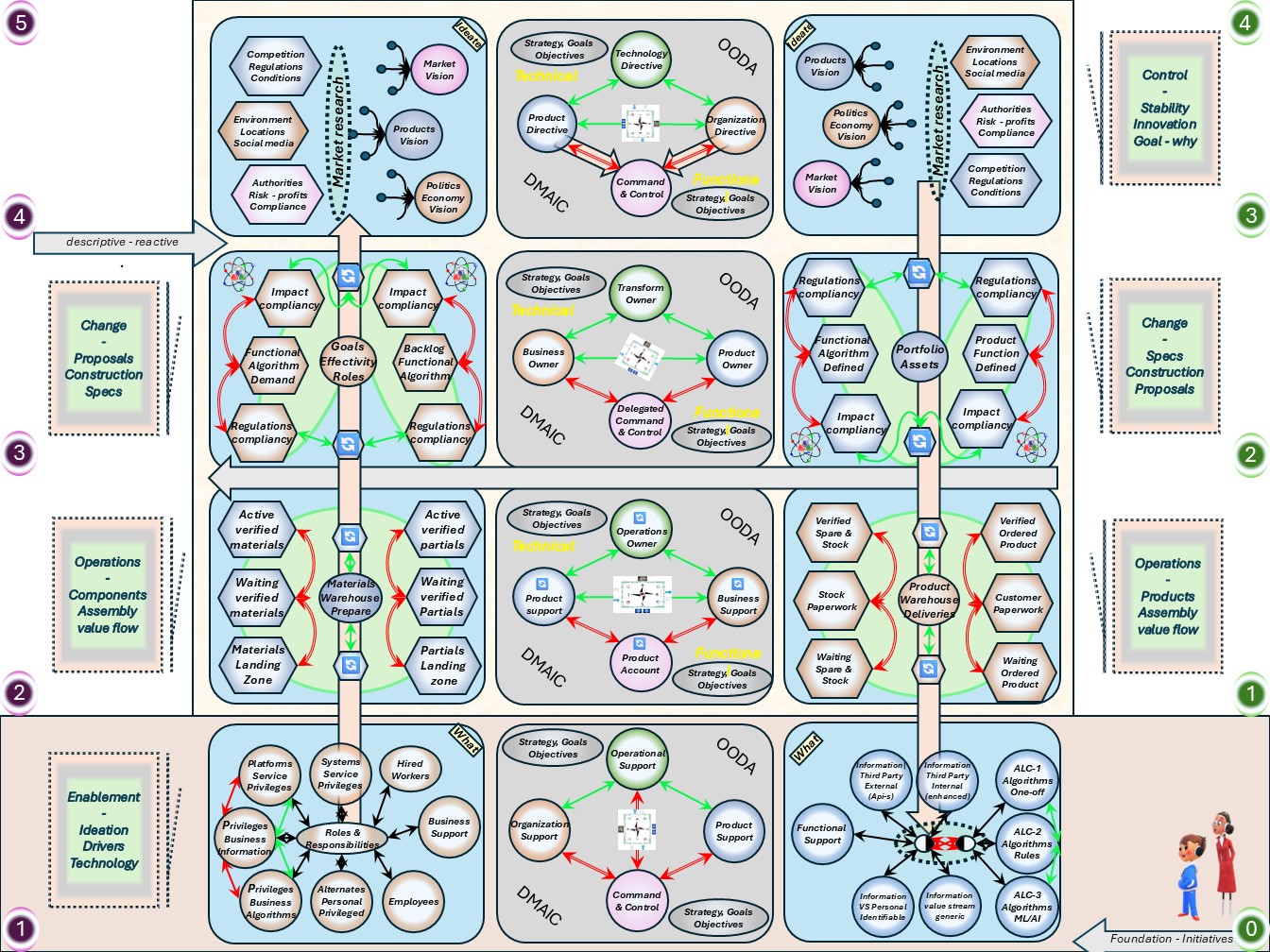

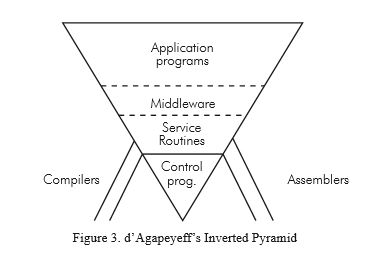

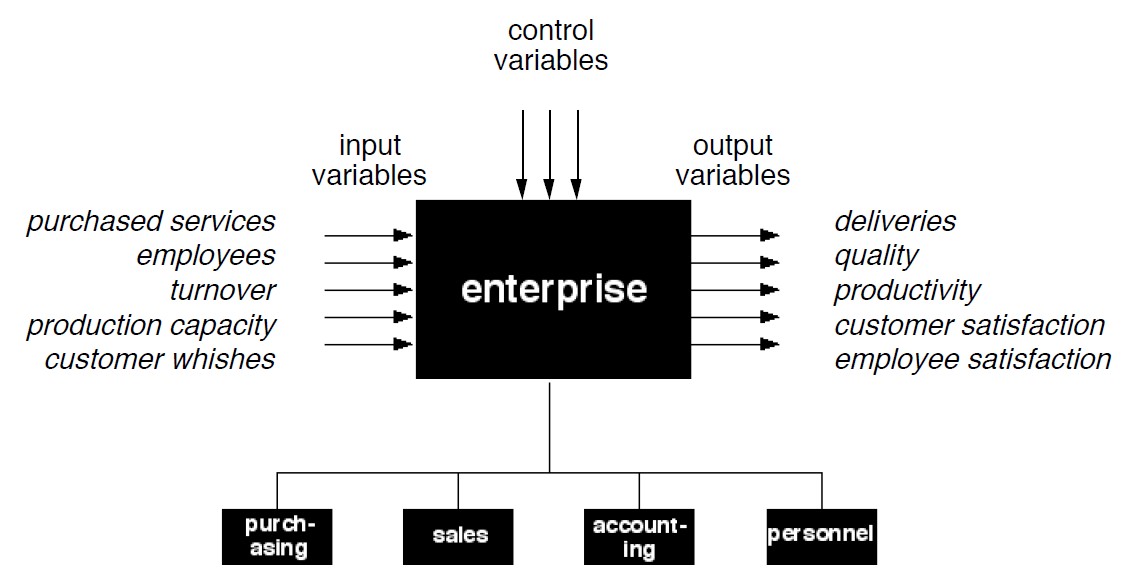

⚖ M-1.5.3 Process foundation, the fundaments basic creation

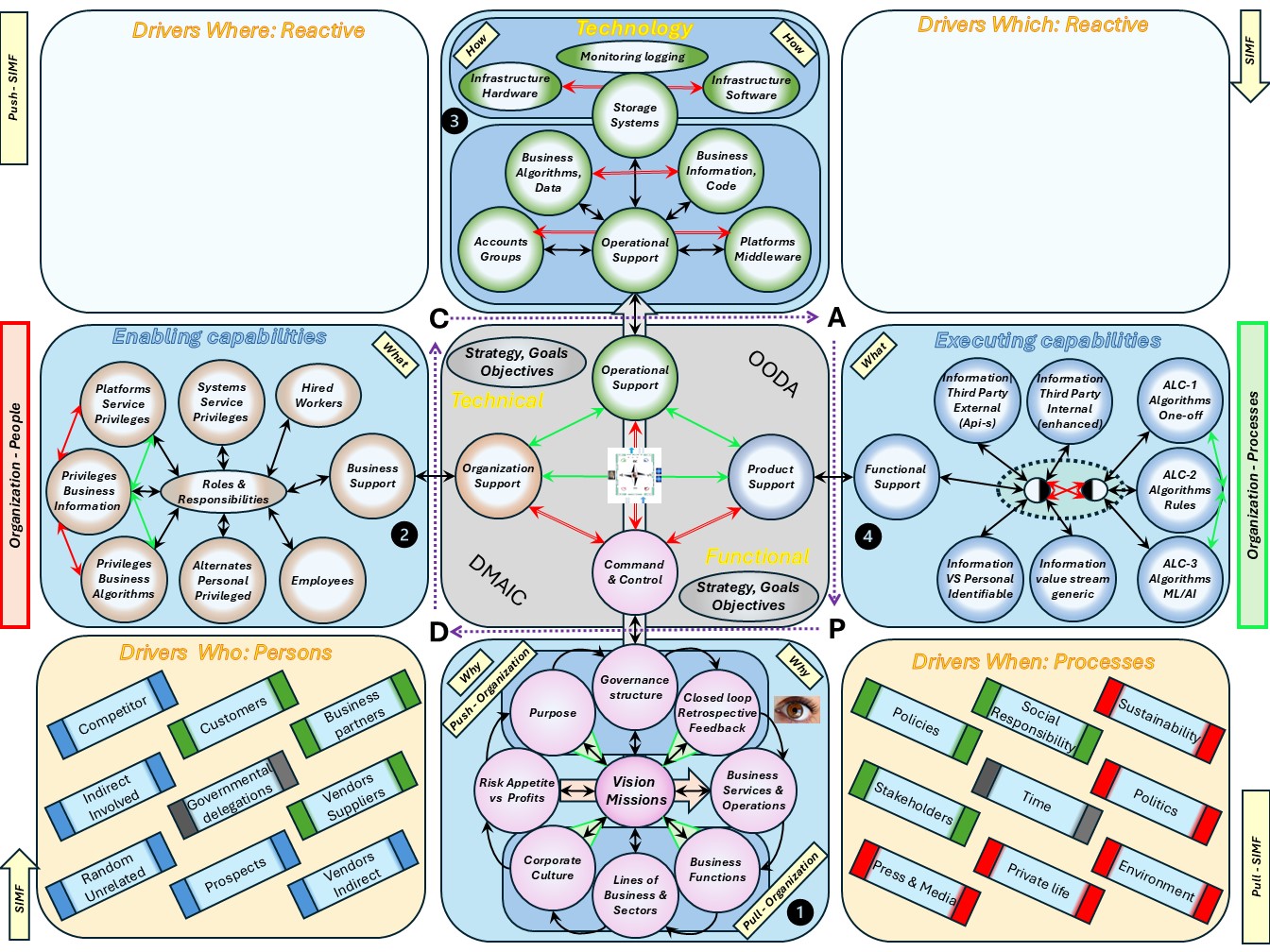

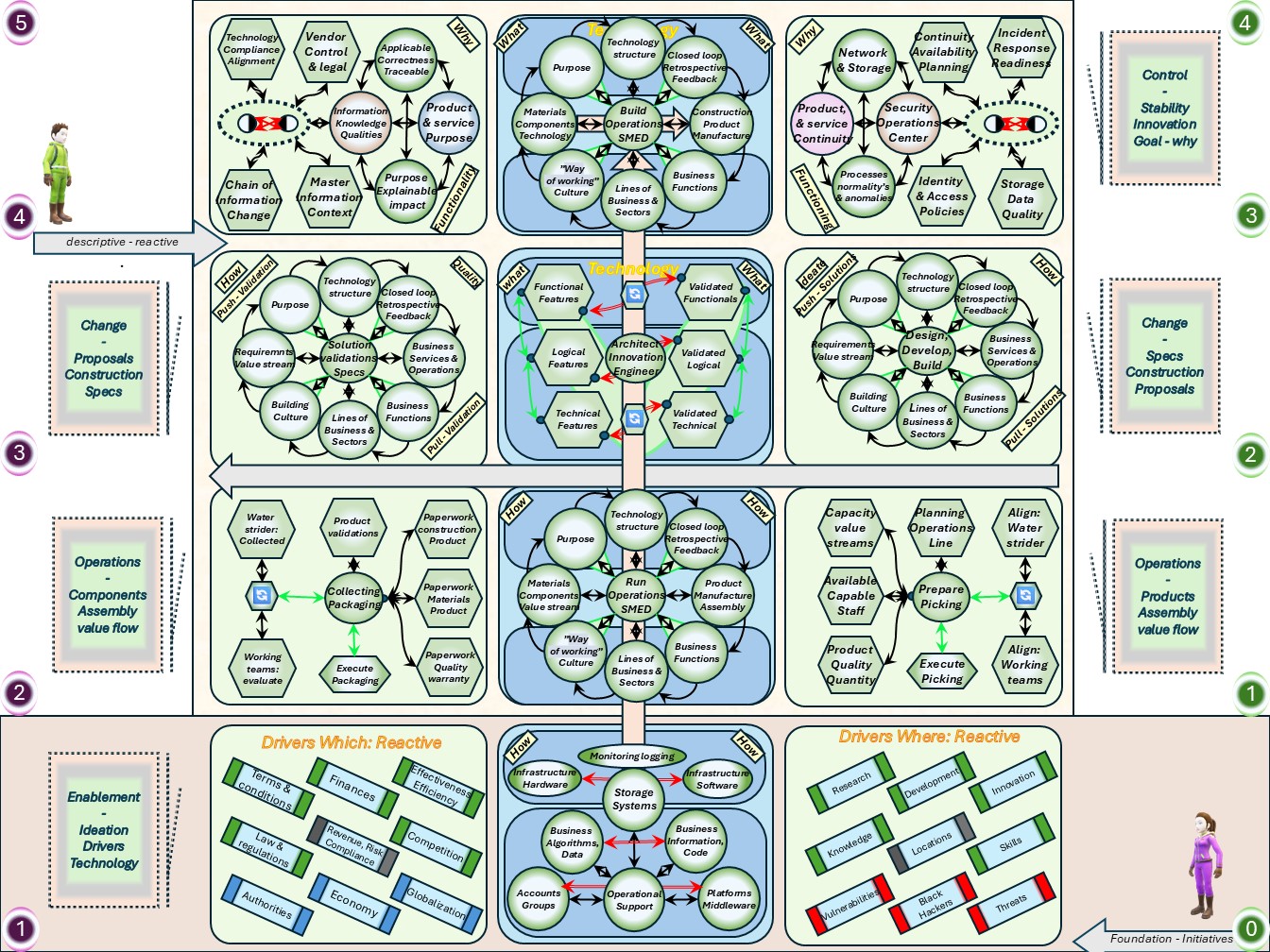

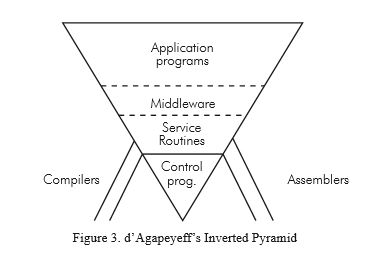

Getting the basics by drivers

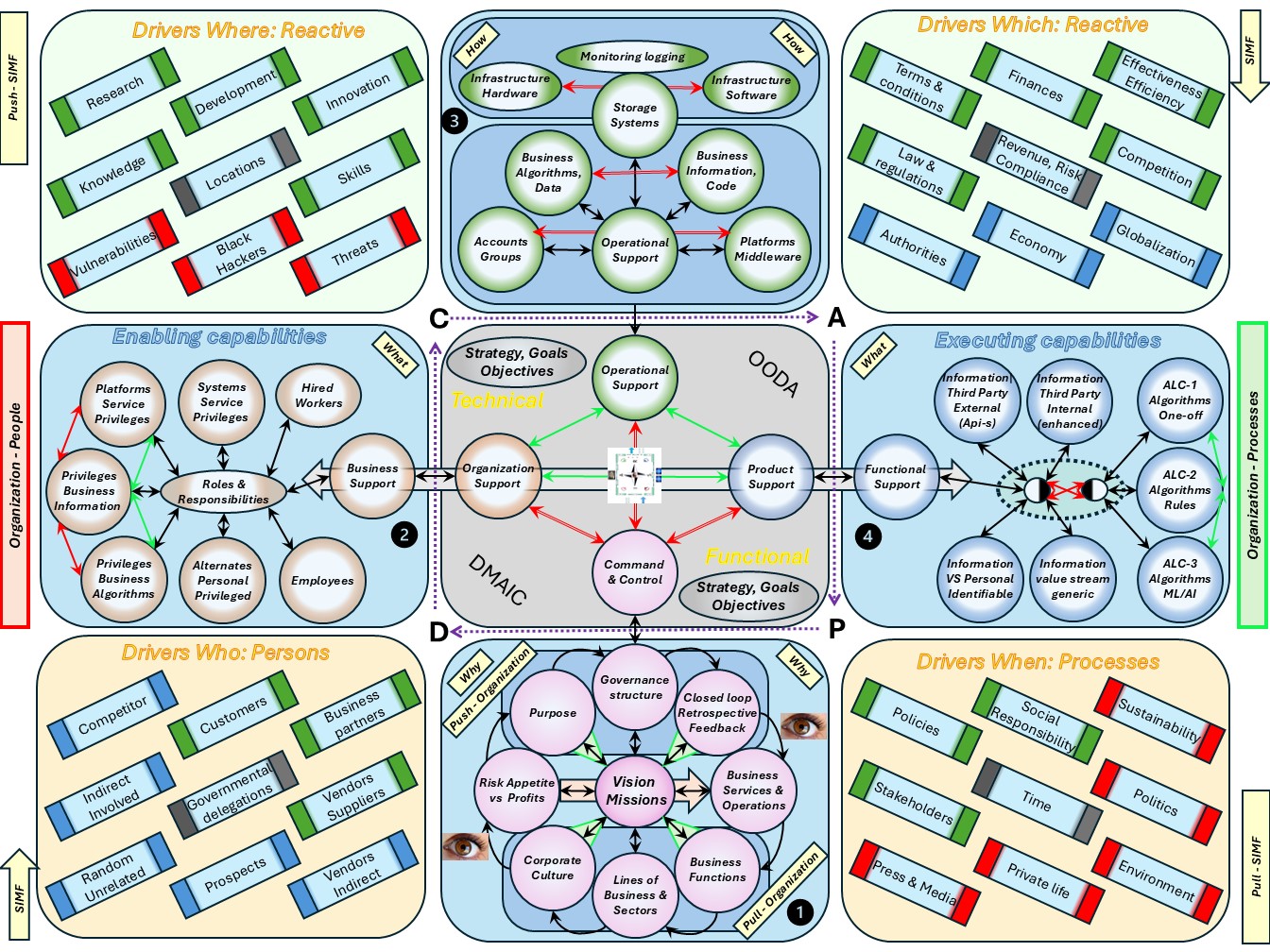

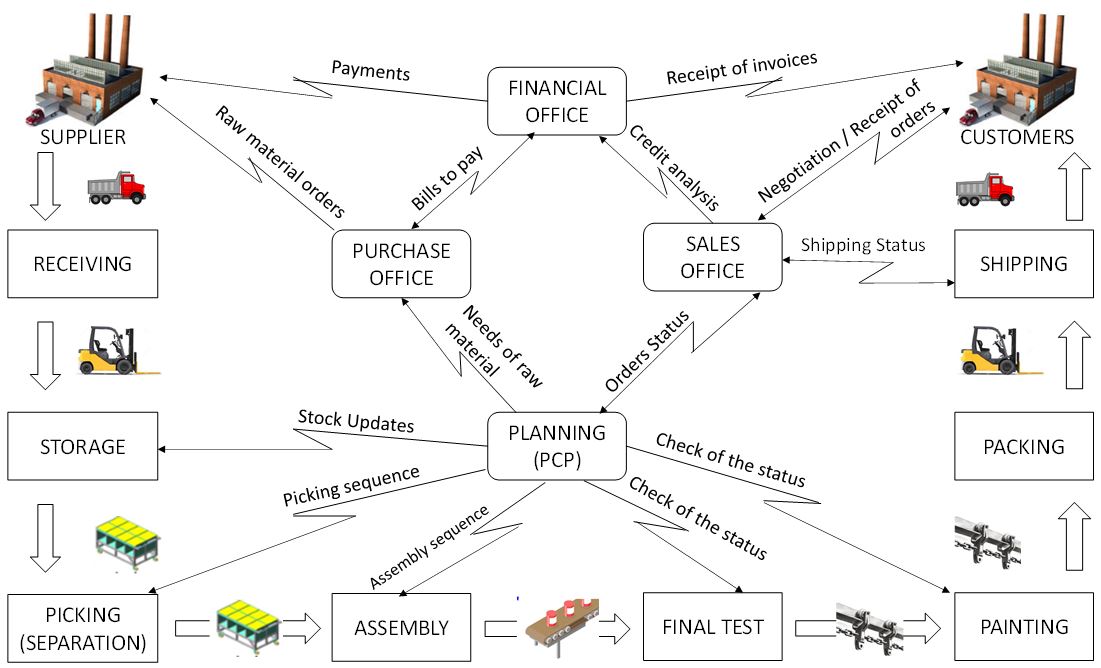

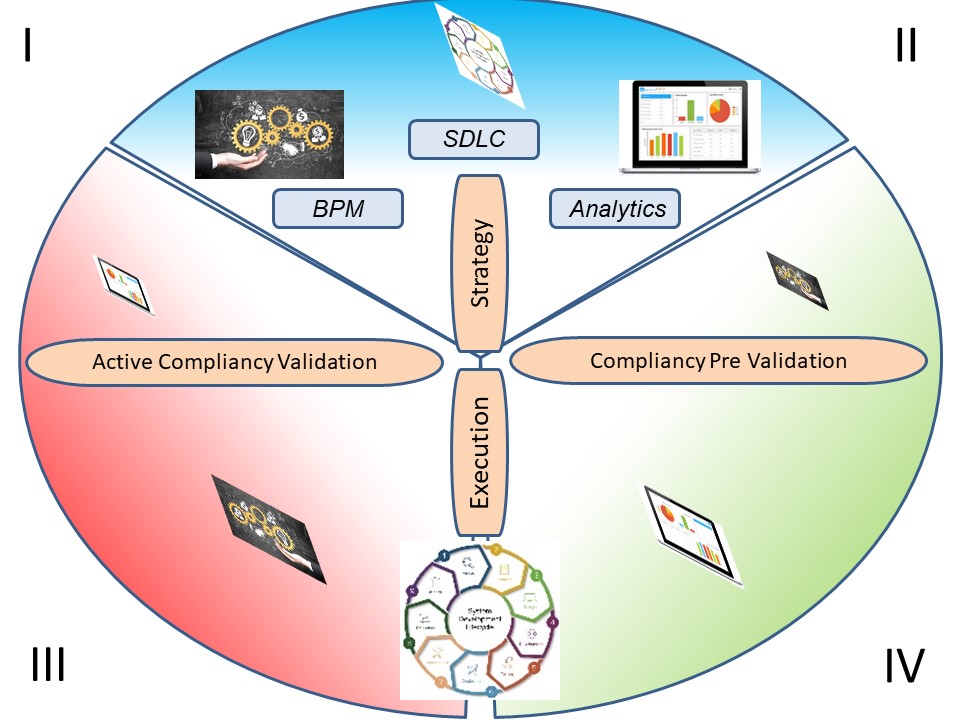

The Secure Information Management Framework (SIMF) initiative.

Start

ask: what you can do for technology.

You must construct your technology in a way that reduces risks to the information it processes, bringing them down to an acceptable level.

And that starts with understanding the information, not the tech.

Why Information Security Starts with Information : effective information security begins with understanding the information itself. ...

The owners of information are the business functions that create and use it.

Initiation by an entrepreneur

In the beginning there is nothing, only a vision with an idea for a product, how to control:

- processes, the way products services are created

- machines, technology that are needed

- people acting within the processes using technology

In a figure:

Foundation for information processing, expected stability

When the vision for the product is information technology related, the three categories are having a standard state by best practices.

In a figure:

⚖ M-1.5.4 Information processes controlled evaluated changes

SIMF the foundation for information processing

The foundation is influenced by many drivers. Reacting in an attempt to follow them is the only thing possible.

This type in the dimensions is not ready to be agile or do proactive actions.

In a figure:

viable system model (ViSM), viable systems approach

I hesitated to connect observations and signals directly to a process cycle.

My belief was that only conscious decisions from a central point are valid in a system; however, for an autonomic system within a viable system such a direct connection is valid.

A

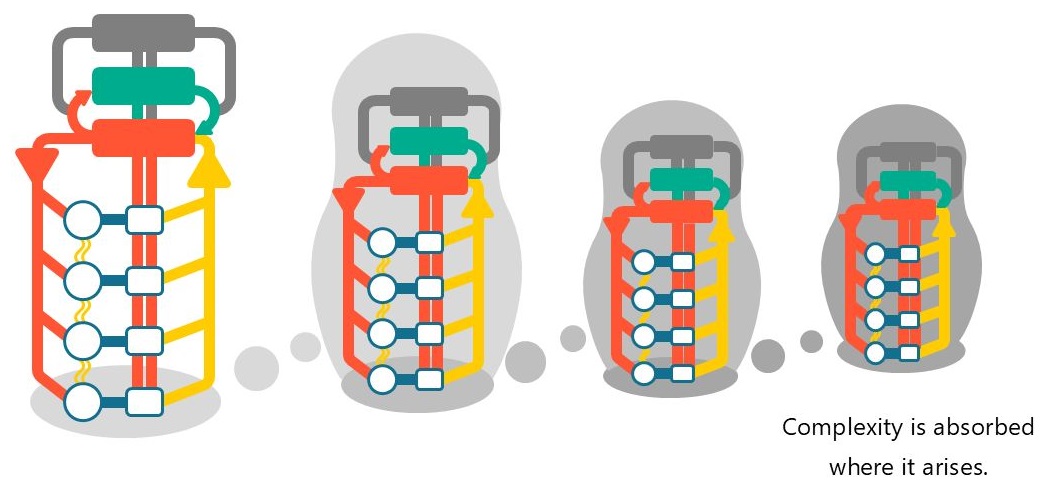

viable system is any system organised in such a way as to meet the demands of surviving in the changing environment.

One of the prime features of systems that survive is that they are adaptable.

The VSM expresses a model for a viable system, which is an abstracted cybernetic (regulation theory) description that is claimed to be applicable to any organisation that is a viable system and capable of autonomy.

The model was developed by operations research theorist and cybernetician Stafford Beer in his book Brain of the Firm (1972).

Together with Beer's earlier works on cybernetics applied to management, this book effectively founded management cybernetics.

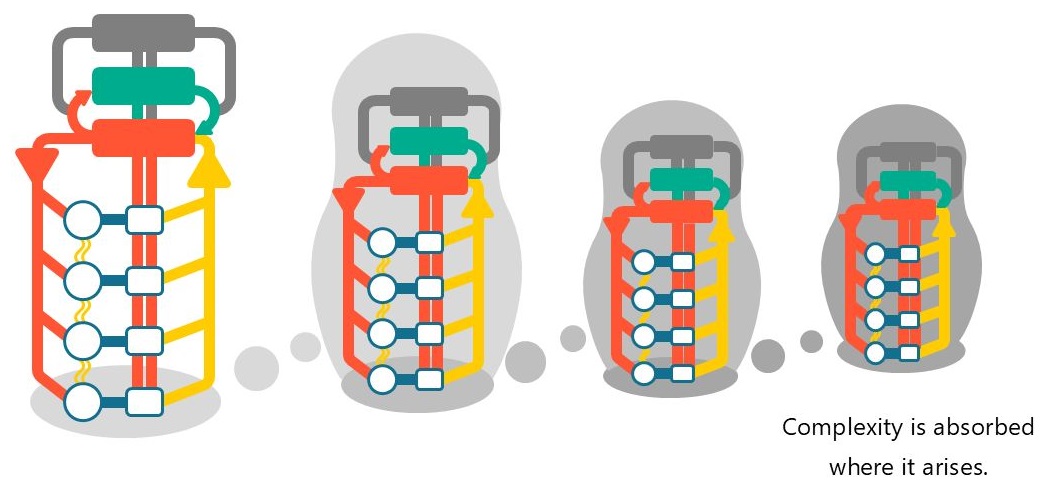

The first thing to note about the cybernetic theory of organizations encapsulated in the VSM is that viable systems are recursive;

➡ viable systems contain viable systems that can be modeled using an identical cybernetic description as the higher (and lower) level systems in the containment hierarchy (Beer expresses this property of viable systems as cybernetic isomorphism).
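The recursive property can be made concrete with a small data structure: every system is described by the same type as the systems it contains. This is a minimal sketch of the containment recursion only; it does not model the internal subsystems of Beer's VSM, and the names `ViableSystem`, `depth`, `walk` and the example organisation are assumptions for illustration.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class ViableSystem:
    # The same description is used at every level of the hierarchy.
    name: str
    subsystems: list[ViableSystem] = field(default_factory=list)

    def depth(self) -> int:
        """Number of recursion levels, counting this system as level one."""
        return 1 + max((s.depth() for s in self.subsystems), default=0)

    def walk(self, level: int = 0):
        """Yield (level, name) for this system and everything it contains."""
        yield level, self.name
        for sub in self.subsystems:
            yield from sub.walk(level + 1)


# Hypothetical example hierarchy.
org = ViableSystem(
    "organisation",
    [
        ViableSystem("department", [ViableSystem("team")]),
        ViableSystem("service desk"),
    ],
)

for level, name in org.walk():
    print("  " * level + name)
```

Because `walk` and `depth` are defined once and work unchanged at any level, the sketch mirrors the idea that higher and lower level systems share an identical description.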

There is more to state:

- An organic system has an autonomic nervous system.

The technology environment services are expected to act like an autonomic system.

- An autonomic system needs to be taken care of.

For the needed functionalities there should be the capacities and capabilities.

- It is possible to stress an autonomic system in a way that it becomes dysfunctional.

SIMF the foundation antipode, controlled change.

When C&C gets more mature, there are options to proactively start activities for risk-evaluated changes.

This is a completely different dimension than the reactive one; it ignores operations and the product development in between.

In a figure:

M-1.6 Maturity 0: Strategy impact understood

From the three PPT, People, Process, Technology interrelated areas in scopes.

- ❌ P - processes & information

- ❌ P - People Organization optimization

- ❌ T - Tools, Infrastructure

Only having the focus on others by Command and Control is not a complete understanding of all layers, and not what Command & Control should be.

Each layer has its own dedicated characteristics.

⚖ M-1.6.1 Determining the position, the situation

People Process Technology

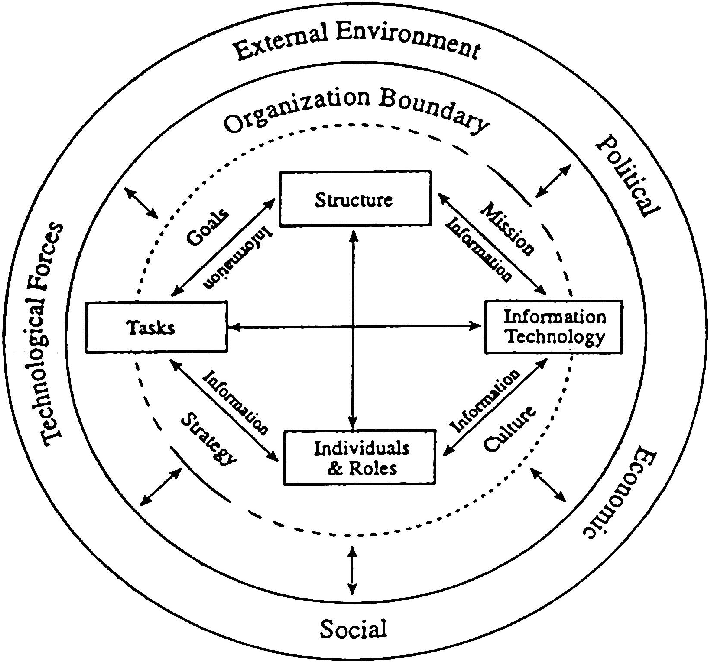

❶ The everlasting software crisis in the news:

Is The 60-Year-Old 'People Process Technology' Framework Still Useful?

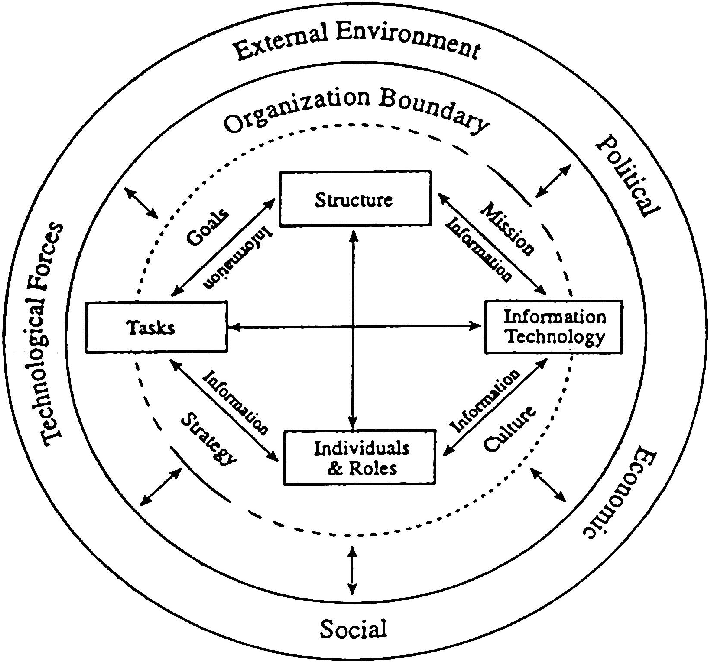

Virtually every business leader has used the popular People Process Technology framework to help manage change in their organization. Its roots date back to the early 1960s when business management expert Harold Leavitt developed his "Diamond Model".

A framework representing the interdependencies among four components present in every organization: structure, people, technology and tasks.

The model represents that changes to one component will likely affect the other three. So, for change to be successful, the business must evaluate and manage the impacts across all four areas.

... the components most commonly used today: people, process and technology.

That is a strange limitation, reduction to review.

Those three are the ones managed by command & control; leaving out structure ignores the C&C itself.

Leavitt dealt with the analysis of patterns of interaction and communication in groups, and also interferences in communication. He examined the personality characteristics of leaders. He distinguished three types of managers:

- The visionary and charismatic leader is characterized by being original, witty, and uncompromising.

He is often eccentric and seeks to break with status quo, and embarking on a new path.

- The rational and analyzing leader is holding to the facts supported by numbers.

He is systematic and can effectively control.

- The pragmatist - The contractor of established plans, skillfully solving problems.

Leaders of this type are typically not visionary.

A more structured review:

E-government Designing IT Operating Model for Managing IT Services (Hamdi Riady, Nur Sultan Salahuddin - aug 2019 )

🤔 Structure and approach of C&C to involve in retrospective.

Context confusing: business - cyber technology

❷ There is a lot of misunderstanding between the normal humans and their cyber counterpart colleagues.

Not that cyber people are not humans but they are using another language, jargon, in communication.

That culture of misunderstandings is not necessary and should be eliminated.

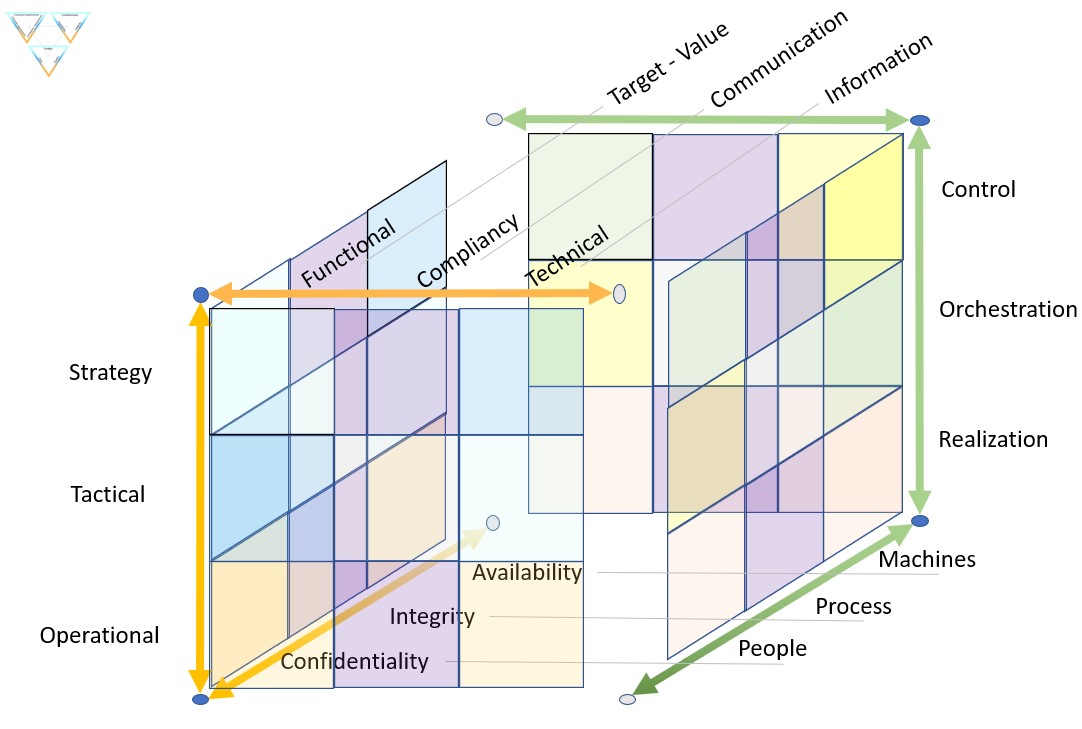

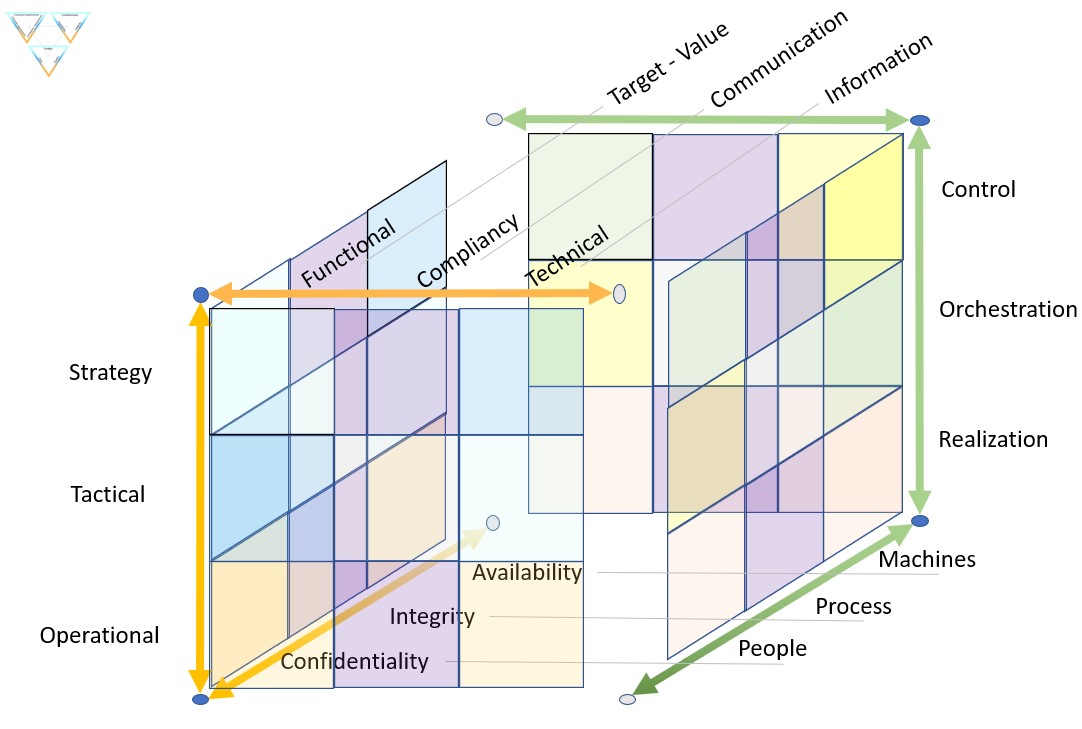

🤔 A translation of words to start:

| ICT | Business | | ICT | Business | | ICT | Business |
|---|---|---|---|---|---|---|---|
| Strategy | Control | - | Functional | Target-Value | - | Confidentiality | People |
| Tactical | Orchestration | - | Compliancy | Communication | - | Integrity | Processes |
| Operational | Realization | - | Technical | Information | - | Availability | Machines |
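The translation table can also be kept as a small lookup, so that a term heard from one side can be mapped to its counterpart on the other. This is a minimal sketch: the term pairs are taken from the table, while the names `ICT_TO_BUSINESS` and `translate` are assumptions for illustration.

```python
# Term pairs copied from the translation table (ICT term -> business term).
ICT_TO_BUSINESS = {
    "Strategy": "Control",
    "Tactical": "Orchestration",
    "Operational": "Realization",
    "Functional": "Target-Value",
    "Compliancy": "Communication",
    "Technical": "Information",
    "Confidentiality": "People",
    "Integrity": "Processes",
    "Availability": "Machines",
}


def translate(term: str) -> str:
    """Return the counterpart of a term, in either direction, ignoring case."""
    wanted = term.strip().lower()
    for ict, business in ICT_TO_BUSINESS.items():
        if wanted == ict.lower():
            return business
        if wanted == business.lower():
            return ict
    raise KeyError(f"no translation known for {term!r}")


print(translate("integrity"))  # ICT term to business term
print(translate("Machines"))   # business term back to ICT term
```

The lookup works in both directions because no word appears on both sides of the table.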

A figure:

See right side

There are several paradigm shifts:

- Leadership: "Strategy, Tactical, Operational" and "Control, Orchestration, Realisation" are both used for justifying hierarchical power.

Although that is a fit, both are incorrect. Hierarchy is just another dimension.

- At the floor: "Confidentiality, Integrity, Availability"

a good fit with: "people, Process, Machines"

- At the roof "functional, Compliancy, Technicality"

a good fit with: "Target - value, Communication, Information"

- Information : the asset "Information" is a business asset not something to be pushed off as incomprehensible "cyber".

Information processing is enabled by technology, technology acting as a service.

- Compliancy : The communication and accountability are placed in the business context.

What is in demand by risk management considerations is to be delivered in a service approach.

The focus is on task activities in lines to design / develop and realize / operate a product.

Out of scope are dimensions for:

- Leadership: hierarchical lines of power and control.

- Time: what a product / hierarchy was (past), is (now), and would be (future).

- ....

❸ In the figure the cubicle bottom and top did not get context.

What could be there?

- Left side = technology (service operations), right side organisation

- front side = technology (service functions), back side processes

- 🤔 bottom people personal interests, top people group interests

⚖ M-1.6.2 Determining the destination, strategy

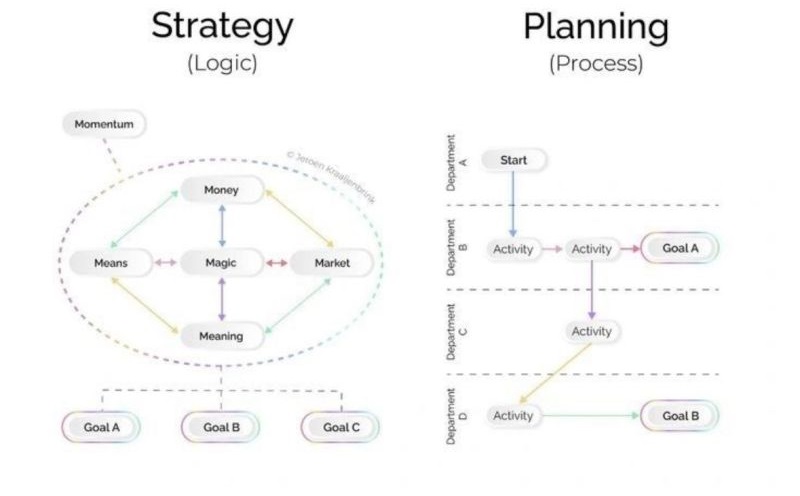

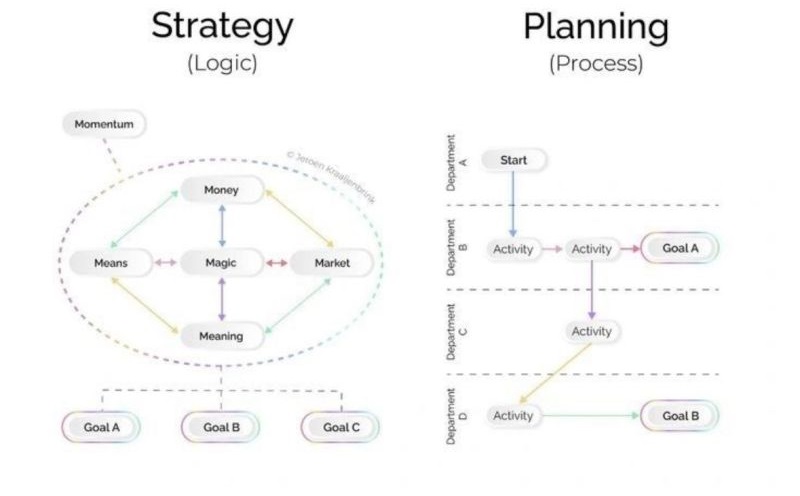

Strategy vs. planning -- what's the difference?

❹ Confusion in expectations and goals.

Why a Plan is NOT a Strategy

- Strategy: choices that position you to win.

- Planning: who does what by when.

Many organizations treat planning as a substitute for strategy.

Their "strategic plan" is just a list of projects and initiatives.

They won't lead to winning. Because they have no coherence.

The result is not bigger than the sum of all initiatives.

👉🏾 Strategy is a theory of how you win.

The few priorities that - together - are more than the sum of its initiatives.

A successful business needs both: Strategy and planning.

Mistaking one for the other or avoiding strategy because planning feels easier is a sure way to failure.

Graphic by Jeroen Kraaijenbrink

An IT strategy or an IT plan

❺ Statement: We don't need a separate IT strategy; our overall strategy is sufficient.

The reasoning:

- Information Technology (IT, ICT, ITC) is a fundament of your organization.

- Your IT systems determine how good, efficient, and reliable your services are.

- They ensure that your stakeholders, that includes your customers, are and remain happy.

- ICT is not a goal for itself. It is a means to support and strengthen your organizational strategy.

They help you to fulfil your social mission and to fulfil your legal obligations.

🤔 That's why you need an IT plan that aligns with your organizational strategy.

The missing link is C "Communication".

An IT plan that:

- makes clear what you need, what you want and what you can do.

- helps you make smart choices, save costs and prevent problems.

An ICT strategy or an ICT service plan

❻ A general ICT strategy, on the other hand, is possible, but that will be a completely different world than the current ICT.

The big difference is: focus on Technology vs focus on Provision as a Service.

If you don't do anything with your strategy, you might as well not have a strategy.

It only costs time, in the end it doesn't yield anything. Hoshin Kanri: a few nice A4 sheets with some text.

"There is nothing quite so useless, as doing with great efficiency, something that should not be done at all." and "Culture eats strategy for breakfast." (P Drucker)

The goal of a strategy

🤔 A reference to a checklist:

what is good strategy often not easy to activity (2024)

A good strategy must first envision what the world will look like in the time frame you are aiming at, 5 years, 10 years, 15 years, etc. then the strategy must envision what the needs will be at that time.

Then a good strategy will understand the major technology, world order, and group think trends at that period. It goes way beyond good planning.

There is a misleading trend going on that says that strategy is separate from futurism. They are intertwined. The naysayers continue by saying that nobody can see the future, which says that Dick Tracy, Star Trek, and 2001: A Space Odyssey never happened. The data points and trends are all around us, just not the precision.

Traditional strategic planning that extrapolates on yesterday’s strengths and weaknesses is useless and dangerous in an exponential world. (Bill McClain)

Here are 9 pointers to get you started.

❼ Where to start and how to proceed with these 9 pointers, given their positions in the figure, is unintentionally a good fit for the SIAR model extended into Lean understanding.

⚖ M-1.6.3 Managing Uncertainties, confusion, ambiguities (MUCA)

Misunderstanding by missing standards

❽ The complexity in the volatile world has a root cause in disorder and muddle.